TECHNICAL ASSET FINGERPRINT

dc538a0648cdaec1f0dde0c5


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


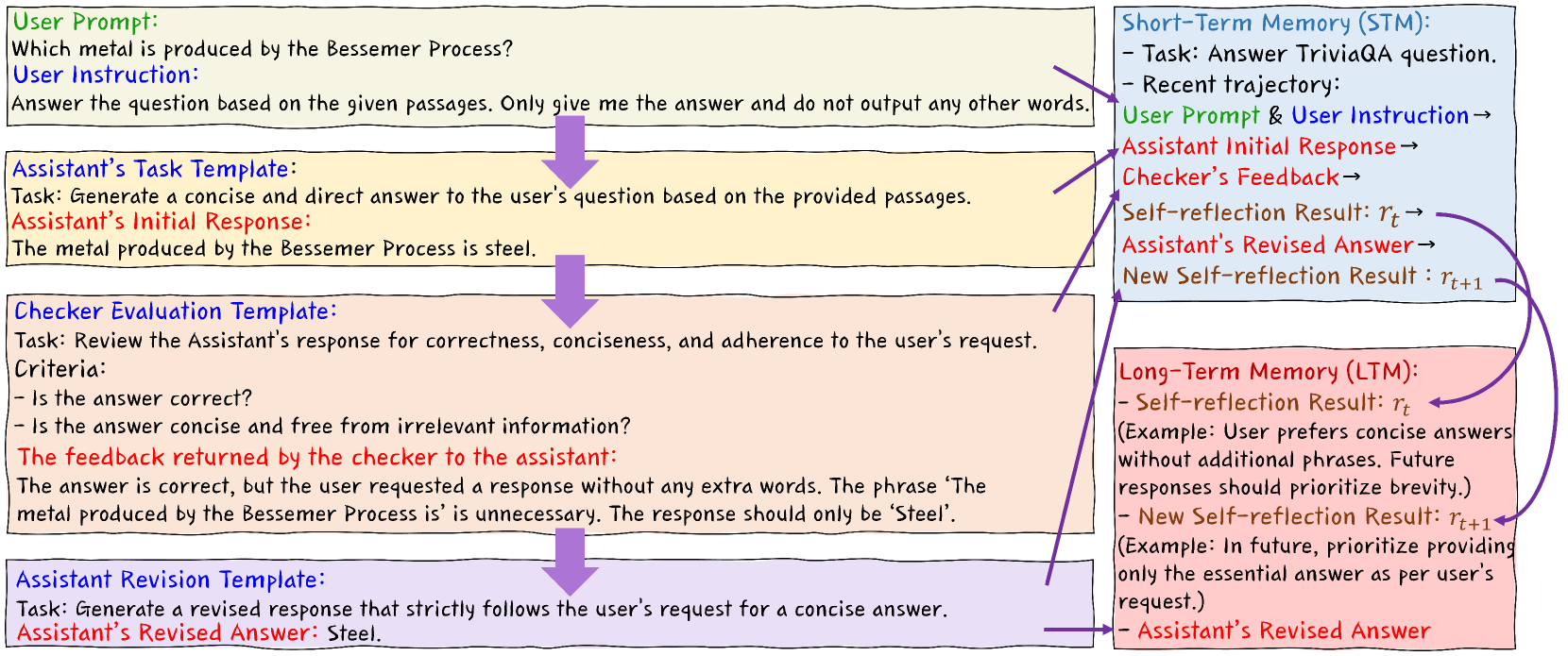

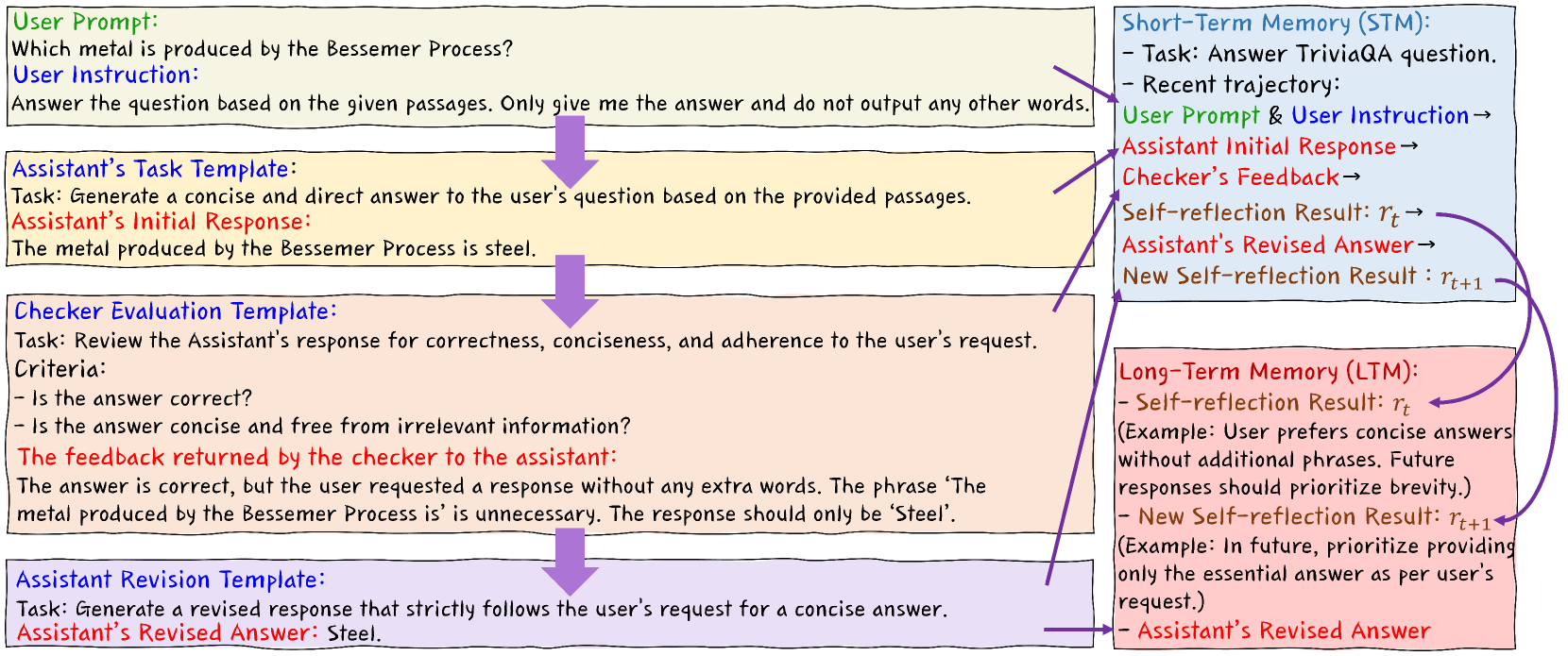

## Flow Diagram: Assistant Response Refinement

### Overview

The image is a flow diagram illustrating the process of refining an assistant's response to a user's question through a series of steps involving task templates, checker evaluations, and memory components (Short-Term Memory and Long-Term Memory). The diagram shows how the assistant's response is iteratively improved based on feedback and self-reflection.

### Components/Axes

* **User Prompt (Top-Left, Green Box):**
    * "Which metal is produced by the Bessemer Process?"
    * "Answer the question based on the given passages. Only give me the answer and do not output any other words."

* **Assistant's Task Template (Middle-Left, Yellow Box):**
    * "Task: Generate a concise and direct answer to the user's question based on the provided passages."
    * "Assistant's Initial Response: The metal produced by the Bessemer Process is steel."

* **Checker Evaluation Template (Middle-Left, Pink Box):**
    * "Task: Review the Assistant's response for correctness, conciseness, and adherence to the user's request."
    * "Criteria: Is the answer correct? Is the answer concise and free from irrelevant information?"
    * "The feedback returned by the checker to the assistant: The answer is correct, but the user requested a response without any extra words. The phrase 'The metal produced by the Bessemer Process is' is unnecessary. The response should only be 'Steel'."

* **Assistant Revision Template (Bottom-Left, Purple Box):**
    * "Task: Generate a revised response that strictly follows the user's request for a concise answer."
    * "Assistant's Revised Answer: Steel."

* **Short-Term Memory (STM) (Top-Right, Blue Box):**
    * "Task: Answer TriviaQA question."
    * "Recent trajectory:"
        * "User Prompt & User Instruction →"
        * "Assistant Initial Response →"
        * "Checker's Feedback →"
        * "Self-reflection Result: r_t →"
        * "Assistant's Revised Answer →"
        * "New Self-reflection Result: r_{t+1}"

* **Long-Term Memory (LTM) (Bottom-Right, Red Box):**
    * "Self-reflection Result: r_t (Example: User prefers concise answers without additional phrases. Future responses should prioritize brevity.)"
    * "New Self-reflection Result: r_{t+1} (Example: In future, prioritize providing only the essential answer as per user's request.)"
    * "Assistant's Revised Answer"

* **Arrows:** Purple arrows indicate the flow of information and the iterative process.
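The STM box lists its trajectory as a fixed sequence of steps. A minimal Python sketch of that record follows, assuming the STM simply stores labelled steps in the order shown; the class name `ShortTermMemory`, the `STEP_ORDER` list, and the `record`/`render` methods are hypothetical illustrations, not part of the diagram.

```python
from dataclasses import dataclass, field

# Hypothetical step labels, copied from the "Recent trajectory" list in the STM box.
STEP_ORDER = [
    "User Prompt & User Instruction",
    "Assistant Initial Response",
    "Checker's Feedback",
    "Self-reflection Result: r_t",
    "Assistant's Revised Answer",
    "New Self-reflection Result: r_{t+1}",
]


@dataclass
class ShortTermMemory:
    """Hypothetical STM: a task label plus the ordered (step, content) pairs of one interaction."""
    task: str
    trajectory: list[tuple[str, str]] = field(default_factory=list)

    def record(self, step: str, content: str) -> None:
        # Reject steps once the trajectory is complete or when a step arrives out of order.
        if len(self.trajectory) >= len(STEP_ORDER):
            raise ValueError("trajectory is already complete")
        expected = STEP_ORDER[len(self.trajectory)]
        if step != expected:
            raise ValueError(f"expected step {expected!r}, got {step!r}")
        self.trajectory.append((step, content))

    def render(self) -> str:
        # Join the recorded step labels with arrows, as the diagram does.
        return " → ".join(step for step, _ in self.trajectory)


stm = ShortTermMemory(task="Answer TriviaQA question.")
stm.record("User Prompt & User Instruction", "Which metal is produced by the Bessemer Process?")
stm.record("Assistant Initial Response", "The metal produced by the Bessemer Process is steel.")
print(stm.render())  # User Prompt & User Instruction → Assistant Initial Response
```

Enforcing the order in `record` is a design choice for the sketch; a real STM might accept any step and rely on the caller to keep the sequence.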

### Detailed Analysis or Content Details

The diagram illustrates a process where a user provides a prompt and instruction. The assistant generates an initial response based on a task template. A checker evaluates the response based on predefined criteria and provides feedback. The assistant then revises the response based on this feedback, using a revision template. The Short-Term Memory (STM) tracks the recent trajectory of this process, while the Long-Term Memory (LTM) stores self-reflection results to improve future responses.

In sequence, the User Prompt and Instruction feed the Assistant's Task Template, whose Initial Response is evaluated with the Checker Evaluation Template; the resulting feedback drives the Assistant Revision Template to produce the revised answer. The LTM also feeds back into the STM, creating a loop for continuous improvement.
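The generate, check, and revise flow described above can be sketched as a small loop. This is a sketch under stated assumptions: the assistant and checker are passed in as plain functions, the toy stand-ins below are hard-coded to the Bessemer example rather than calling a model, and self-reflection and memory updates are left out so the control flow stays visible. All function names are hypothetical.

```python
from typing import Callable, Optional


def refine(prompt: str,
           generate: Callable[[str], str],
           check: Callable[[str, str], Optional[str]],
           revise: Callable[[str, str, str], str],
           max_rounds: int = 3) -> tuple[str, list[str]]:
    """Generate, then check and revise until the checker returns no feedback or rounds run out.

    Returns the final response and the labelled trajectory of steps."""
    trajectory = [f"User Prompt & User Instruction: {prompt}"]
    response = generate(prompt)
    trajectory.append(f"Assistant Initial Response: {response}")
    for _ in range(max_rounds):
        feedback = check(prompt, response)
        if feedback is None:  # checker is satisfied, stop revising
            break
        trajectory.append(f"Checker's Feedback: {feedback}")
        response = revise(prompt, response, feedback)
        trajectory.append(f"Assistant's Revised Answer: {response}")
    return response, trajectory


# Hypothetical toy stand-ins for the assistant and the checker.
def toy_generate(prompt: str) -> str:
    return "The metal produced by the Bessemer Process is steel."


def toy_check(prompt: str, response: str) -> Optional[str]:
    if response.strip().rstrip(".").lower() == "steel":
        return None
    return "The answer is correct, but the user requested a response without any extra words."


def toy_revise(prompt: str, response: str, feedback: str) -> str:
    # Keep only the last word of the sentence, capitalized, as the feedback asks.
    return response.rstrip(".").split()[-1].capitalize() + "."


PROMPT = "Which metal is produced by the Bessemer Process?"
final, trajectory = refine(PROMPT, toy_generate, toy_check, toy_revise)
print(final)            # Steel.
print(len(trajectory))  # 4
```

The `max_rounds` cap is an assumption added so the loop terminates even if the checker never approves.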

### Key Observations

* The process emphasizes iterative refinement of the assistant's response.

* The checker's feedback is crucial for guiding the revision process.

* Both Short-Term and Long-Term Memory play a role in improving the assistant's performance over time.

* The example provided in the LTM highlights the importance of understanding user preferences for concise answers.

### Interpretation

The diagram describes a system for improving the quality and relevance of an assistant's responses. By combining feedback from a checker with short-term and long-term memory, the system aims to produce answers that are accurate, concise, and aligned with the user's request. Because lessons are stored in LTM, the assistant can carry what it learned from one mistake into later interactions, as the LTM example about concise answers shows. The task and revision templates keep each step tied to predefined criteria.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Self-Reflective Learning Loop

### Overview

The image depicts a diagram illustrating a self-reflective learning loop within an AI system, specifically focusing on a question-answering task. It showcases the interaction between a User, an Assistant, a Checker, and two memory components: Short-Term Memory (STM) and Long-Term Memory (LTM). The diagram highlights the iterative process of generating, evaluating, and revising answers based on feedback.

### Components/Axes

The diagram is structured into three main sections: User Interaction (left), Short-Term Memory (top-right), and Long-Term Memory (bottom-right). Arrows indicate the flow of information. Text boxes contain descriptions of tasks and responses.

* **User Prompt:** "Which metal is produced by the Bessemer Process?"

* **User Instruction:** "Answer the question based on the given passages. Only give me the answer and do not output any other words."

* **Assistant's Task Template:** "Task: Generate a concise and direct answer to the user's question based on the provided passages."

* **Assistant's Initial Response:** "The metal produced by the Bessemer Process is steel."

* **Checker Evaluation Template:** "Task: Review the Assistant's response for correctness, conciseness, and adherence to the user's request. Criteria: - Is the answer correct? - Is the answer concise and free from irrelevant information?"

* **Checker Feedback:** "The answer is correct, but the user requested a response without any extra words. The phrase 'The metal produced by the Bessemer Process is' is unnecessary. The response should only be 'Steel'."

* **Assistant Revision Template:** "Task: Generate a revised response that strictly follows the user's request for a concise answer."

* **Assistant's Revised Answer:** "Steel."

* **Short-Term Memory (STM):** Labeled with "Task: Answer TriviaQA question." and "Recent trajectory: User Prompt & User Instruction -> Assistant Initial Response -> Checker's Feedback -> Self-reflection Result: τ<sub>i</sub> -> Assistant's Revised Answer"

* **Long-Term Memory (LTM):** Labeled with "Self-reflection Result: τ<sub>i</sub> (Example: User prefers concise answers without additional phrases. Future responses should prioritize brevity.)" and "New Self-reflection Result: τ<sub>i+1</sub> (Example: In future, prioritize providing only the essential answer as per user's request.)"
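The Checker Evaluation Template names two criteria. In the diagram the checker is presumably itself a language model; the sketch below swaps in a simple rule-based check to show what the two criteria test, assuming a reference answer is available (TriviaQA questions come with gold answers). The function names and the normalization rule are illustrative assumptions.

```python
import re
from typing import Optional


def normalize(text: str) -> str:
    # Lowercase, drop punctuation, and collapse whitespace (a common QA normalization).
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def check_response(response: str, reference: str) -> Optional[str]:
    """Hypothetical rule-based stand-in for the checker.

    Returns None if both criteria pass, otherwise a feedback string."""
    norm_resp, norm_ref = normalize(response), normalize(reference)
    # Criterion 1: is the answer correct? The reference must appear as whole words.
    if not re.search(rf"\b{re.escape(norm_ref)}\b", norm_resp):
        return "The answer is incorrect."
    # Criterion 2: is the answer concise? Nothing beyond the answer itself is allowed.
    if norm_resp != norm_ref:
        return ("The answer is correct, but the user requested a response without any "
                f"extra words. The response should only be '{reference}'.")
    return None


print(check_response("The metal produced by the Bessemer Process is steel.", "Steel"))
print(check_response("Steel.", "Steel"))  # None
```

Treating "concise" as "identical to the reference after normalization" is deliberately strict; it mirrors the user's instruction to output only the answer.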

### Detailed Analysis or Content Details

The diagram illustrates a four-step process:

1. **Initial Response Generation:** The Assistant generates an initial response to the User Prompt based on the provided Task Template.

2. **Evaluation & Feedback:** The Checker evaluates the Assistant's response against the User Instruction and provides feedback.

3. **Revision:** The Assistant revises its response based on the Checker's feedback, guided by the Revision Template.

4. **Memory Update:** The learning process is captured in both Short-Term Memory (STM) and Long-Term Memory (LTM). STM records the recent trajectory of the interaction, while LTM stores generalized self-reflection results for future use.
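Step 4 separates a per-interaction trajectory (STM) from lessons that persist (LTM). One way to sketch that split in Python, assuming STM is a bounded buffer cleared between interactions and LTM is an append-only list of reflection strings; the class and method names are hypothetical.

```python
from collections import deque


class TwoTierMemory:
    """Hypothetical STM/LTM split: STM holds recent steps of one interaction, LTM holds reflections."""

    def __init__(self, stm_capacity: int = 6) -> None:
        # STM is a bounded buffer: once full, the oldest step is dropped automatically.
        self.stm = deque(maxlen=stm_capacity)
        # LTM is an append-only list of generalized self-reflection results.
        self.ltm = []

    def record_step(self, step: str) -> None:
        self.stm.append(step)

    def store_reflection(self, reflection: str) -> None:
        # Skip exact duplicates so repeated lessons do not clutter LTM.
        if reflection not in self.ltm:
            self.ltm.append(reflection)

    def start_new_interaction(self) -> None:
        # STM is scoped to one interaction; LTM survives across interactions.
        self.stm.clear()


memory = TwoTierMemory()
for step in ["User Prompt & User Instruction", "Assistant Initial Response",
             "Checker's Feedback", "Self-reflection Result", "Assistant's Revised Answer"]:
    memory.record_step(step)
memory.store_reflection("User prefers concise answers without additional phrases. "
                        "Future responses should prioritize brevity.")
memory.start_new_interaction()
print(len(memory.stm), len(memory.ltm))  # 0 1
```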

The initial response is verbose ("The metal produced by the Bessemer Process is steel."). The feedback highlights the need for conciseness. The revised response is concise ("Steel."). The STM shows the flow of information, and the LTM shows how the system learns from the interaction to improve future responses.

### Key Observations

* The diagram emphasizes the importance of adhering to user instructions, even if the initial response is technically correct.

* The self-reflection mechanism allows the system to learn from its mistakes and improve its performance over time.

* The distinction between STM and LTM suggests a hierarchical memory structure where short-term interactions inform long-term learning.

* The τ<sub>i</sub> and τ<sub>i+1</sub> notation indexes successive self-reflection results, so each pass through the loop yields a new, numbered reflection.

### Interpretation

This diagram describes an approach to AI learning that goes beyond accuracy to focus on adherence to the user's specific request. The self-reflective loop, combined with the memory components, lets the system adapt its behavior based on past interactions: feedback is used not only to correct the current answer but also to shape future responses. The indexed notation (τ<sub>i</sub>, τ<sub>i+1</sub>) implies a formal model of successive reflections, though the diagram does not say whether this involves reinforcement learning or a similar technique. The diagram presents no data in the traditional sense; it illustrates a *process* and a *system architecture*, a conceptual model of how an AI agent can learn to better understand and respond to user needs.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: AI Assistant Self-Improvement Feedback Loop

### Overview

The image is a flowchart diagram illustrating a process for an AI assistant to generate, evaluate, and revise its responses based on user instructions and feedback. It depicts a cyclical workflow involving a user prompt, an assistant's initial response, a checker's evaluation, and a revised answer, with connections to short-term and long-term memory components. The diagram uses colored boxes and directional arrows to show the flow of information and tasks.

### Components/Axes

The diagram is organized into two primary vertical columns.

**Left Column (Process Flow):**

1. **Top Box (Light Green):** Labeled "User Prompt:" and "User Instruction:".

2. **Second Box (Light Yellow):** Labeled "Assistant's Task Template:" and "Assistant's Initial Response:".

3. **Third Box (Light Pink):** Labeled "Checker Evaluation Template:" and "The feedback returned by the checker to the assistant:".

4. **Bottom Box (Light Purple):** Labeled "Assistant Revision Template:" and "Assistant's Revised Answer:".

**Right Column (Memory Components):**

1. **Top Box (Light Blue):** Labeled "Short-Term Memory (STM):".

2. **Bottom Box (Light Red):** Labeled "Long-Term Memory (LTM):".

**Connectors:**

* Thick, downward-pointing purple arrows connect the four boxes in the left column sequentially.

* Thin, purple arrows connect elements from the left column to the memory boxes on the right and vice versa, indicating data flow and feedback loops.

### Detailed Analysis

**Left Column - Process Flow:**

* **User Prompt Box:**
    * **User Prompt:** "Which metal is produced by the Bessemer Process?"
    * **User Instruction:** "Answer the question based on the given passages. Only give me the answer and do not output any other words."

* **Assistant's Task Template Box:**
    * **Task:** "Generate a concise and direct answer to the user's question based on the provided passages."
    * **Assistant's Initial Response:** "The metal produced by the Bessemer Process is steel."

* **Checker Evaluation Template Box:**
    * **Task:** "Review the Assistant's response for correctness, conciseness, and adherence to the user's request."
    * **Criteria:**
        * "Is the answer correct?"
        * "Is the answer concise and free from irrelevant information?"
    * **The feedback returned by the checker to the assistant:** "The answer is correct, but the user requested a response without any extra words. The phrase 'The metal produced by the Bessemer Process is' is unnecessary. The response should only be 'Steel'."

* **Assistant Revision Template Box:**
    * **Task:** "Generate a revised response that strictly follows the user's request for a concise answer."
    * **Assistant's Revised Answer:** "Steel."

**Right Column - Memory Components:**

* **Short-Term Memory (STM) Box:**
    * **Task:** "Answer TriviaQA question."
    * **Recent trajectory:** A list showing the flow:
        * "User Prompt & User Instruction →"
        * "Assistant Initial Response →"
        * "Checker's Feedback →"
        * "Self-reflection Result: r_t →"
        * "Assistant's Revised Answer →"
        * "New Self-reflection Result: r_{t+1}"
    * An arrow points from "Self-reflection Result: r_t" in this list down to the Long-Term Memory box.

* **Long-Term Memory (LTM) Box:**
    * **Self-reflection Result: r_t** (with an arrow pointing to it from STM).
        * (**Example:** "User prefers concise answers without additional phrases. Future responses should prioritize brevity.")
    * **New Self-reflection Result: r_{t+1}** (with an arrow pointing to it from the STM's "New Self-reflection Result: r_{t+1}").
    * **Assistant's Revised Answer** (with an arrow pointing to it from the left column's "Assistant's Revised Answer").

### Key Observations

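1. **Iterative Refinement:** The core process is an iterative cycle: Prompt → Initial Response → Evaluation/Feedback → Revised Response. Within one interaction this sequence runs in order; the loop closes through the memory components, which carry lessons into later interactions.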

2. **Memory Integration:** The process is not stateless. Short-Term Memory tracks the immediate trajectory of the current interaction. Long-Term Memory stores generalized learnings (self-reflection results) from past interactions to inform future behavior.

3. **Explicit Criteria:** The checker's evaluation is based on specific, predefined criteria (correctness, conciseness, adherence to instruction).

4. **Learning Mechanism:** The "Self-reflection Result" (r_t) is generated from the checker's feedback and is used to update the assistant's strategy. This updated strategy (r_{t+1}) is then stored in LTM for future use.

5. **Visual Coding:** Different colors are used to distinguish between user input (green), assistant processes (yellow, purple), checker processes (pink), and memory stores (blue, red).

### Interpretation

This diagram models a **self-improving AI assistant system** designed to adhere strictly to user constraints. It goes beyond a simple request-response model by incorporating an internal critic (the Checker) and a learning mechanism.

* **The Data Suggests:** The system is engineered to handle tasks where response format is as critical as content accuracy. The example shows the assistant learning to strip conversational filler to meet a "concise answer only" instruction.

* **Relationships:** The Checker acts as a quality control layer, enforcing rules that the assistant might initially overlook. The memory components bridge individual interactions, allowing the system to accumulate experience. The feedback from the Checker is transformed into a "self-reflection" that becomes a stored policy (e.g., "prioritize brevity").

* **Notable Anomaly/Feature:** The system explicitly separates the *instance-specific* feedback ("your phrase was unnecessary") from the *generalized lesson* ("future responses should prioritize brevity"). This is a key feature for scalable learning, preventing the memory from being cluttered with one-off comments.

* **Underlying Principle:** The flowchart embodies a **Peircean investigative cycle** of learning: it makes a conjecture (initial response), tests it against a rule-based critic (checker), and abductively infers a new rule (self-reflection) to improve future conjectures. The "Long-Term Memory" serves as the evolving repository of these abductive insights.
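The separation between instance-specific feedback and a generalized lesson can be illustrated with a toy reflection step. In the diagram the reflection is presumably produced by the assistant itself; here a hypothetical keyword rule table stands in for it, so only the reusable lesson (not the feedback text with its quoted phrases) reaches LTM, and a lesson already in LTM is not stored twice. The cue words, the choice of the LTM example as the lesson text, and the function names are assumptions.

```python
from typing import Optional

# Hypothetical rules: if any cue appears in the feedback, the paired generalized lesson applies.
LESSON_RULES = [
    (("extra words", "unnecessary"),
     "User prefers concise answers without additional phrases. "
     "Future responses should prioritize brevity."),
]


def generalize(feedback: str) -> Optional[str]:
    """Map instance-specific checker feedback to a reusable lesson, or None if no rule fires."""
    text = feedback.lower()
    for cues, lesson in LESSON_RULES:
        if any(cue in text for cue in cues):
            return lesson
    return None


def update_ltm(ltm: list, feedback: str) -> list:
    """Store only the generalized lesson; the raw feedback text is discarded."""
    lesson = generalize(feedback)
    if lesson is not None and lesson not in ltm:
        ltm.append(lesson)
    return ltm


ltm = []
update_ltm(ltm, "The answer is correct, but the user requested a response without any extra words. "
                "The phrase 'The metal produced by the Bessemer Process is' is unnecessary.")
update_ltm(ltm, "The phrase 'The capital of France is' is unnecessary.")
print(len(ltm))  # 1: both one-off comments map to the same lesson
```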


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Flowchart: Bessemer Process QA System Workflow

### Overview

The flowchart depicts an iterative question-answering system for trivia questions (TriviaQA), emphasizing conciseness and correctness. It includes feedback loops, memory components, and revision mechanisms.

### Components/Axes

1. **User Prompt (Green Box)**:
   - Text: "Which metal is produced by the Bessemer Process?"
   - User Instruction: "Answer the question based on the given passages. Only give me the answer and do not output any other words."

2. **Assistant’s Task Template (Blue Box)**:
   - Task: Generate a concise and direct answer based on provided passages.
   - Initial Response: "The metal produced by the Bessemer Process is steel."

3. **Checker Evaluation Template (Orange Box)**:
   - Task: Review response for correctness, conciseness, and adherence to instructions.
   - Criteria:
     - Is the answer correct?
     - Is the answer concise and free from irrelevant information?
   - Feedback: "The answer is correct, but the user requested a response without any extra words. The phrase ‘The metal produced by the Bessemer Process is’ is unnecessary. The response should only be ‘Steel.’"

4. **Assistant Revision Template (Purple Box)**:
   - Task: Generate a revised response following the user’s request for conciseness.
   - Revised Answer: "Steel."

5. **Short-Term Memory (STM) (Blue Box)**:
   - Task: Answer TriviaQA questions.
   - Recent Trajectory:
     - User Prompt & Instruction → Assistant Initial Response → Checker Feedback → Self-reflection Result (`r_t`) → Assistant Revised Answer → New Self-reflection Result (`r_{t+1}`).

6. **Long-Term Memory (LTM) (Pink Box)**:
   - Self-reflection Result (`r_t`): Example: "User prefers concise answers without additional phrases. Future responses should prioritize brevity."
   - New Self-reflection Result (`r_{t+1}`): Example: "Prioritize providing only the essential answer as per user’s request."

### Detailed Analysis

- **Flow Direction**:
  - User input → Assistant’s initial response → Checker evaluation → Feedback → Revised answer.
  - STM and LTM components influence future responses via self-reflection results.

- **Textual Content**:
  - All boxes contain explicit instructions, criteria, and examples. No numerical data or trends are present.
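The note that STM and LTM influence future responses can be made concrete by showing how stored self-reflection results might be placed in front of the next task. The prompt layout below, the `max_lessons` cap, and the function name `build_prompt` are assumptions for illustration; the flowchart does not specify how reflections are injected.

```python
def build_prompt(task_template: str, ltm: list, user_prompt: str,
                 user_instruction: str, max_lessons: int = 3) -> str:
    """Hypothetical prompt assembly: the newest LTM lessons are inserted as guidance."""
    # Keep only the newest lessons to bound prompt length (guard: ltm[-0:] would return everything).
    lessons = ltm[-max_lessons:] if max_lessons > 0 else []
    parts = [task_template]
    if lessons:
        parts.append("Lessons from past interactions:")
        parts.extend(f"- {lesson}" for lesson in lessons)
    parts.append(f"User Prompt: {user_prompt}")
    parts.append(f"User Instruction: {user_instruction}")
    return "\n".join(parts)


ltm = [
    "User prefers concise answers without additional phrases. Future responses should prioritize brevity.",
    "In future, prioritize providing only the essential answer as per user's request.",
]
print(build_prompt(
    "Task: Generate a concise and direct answer to the user's question based on the provided passages.",
    ltm,
    "Which metal is produced by the Bessemer Process?",
    "Answer the question based on the given passages. Only give me the answer and do not output any other words.",
))
```

Placing lessons before the user's question is one plausible layout; putting them after the instruction would work equally well in this sketch.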

### Key Observations

- The system enforces strict adherence to user instructions (e.g., removing unnecessary phrases).

- Feedback loops ensure iterative improvement of responses.

- Memory components (`r_t`, `r_{t+1}`) store preferences for future interactions.

### Interpretation

This flowchart illustrates a closed-loop QA system in which checker feedback drives continuous refinement of answers. Integrating STM and LTM lets the system adapt to user preferences over time, prioritizing brevity and precision. The example of trimming "The metal produced by the Bessemer Process is steel." down to "Steel." highlights the system’s focus on eliminating redundant language while maintaining accuracy.
