## Diagram: Self-Reflective Learning Loop

### Overview

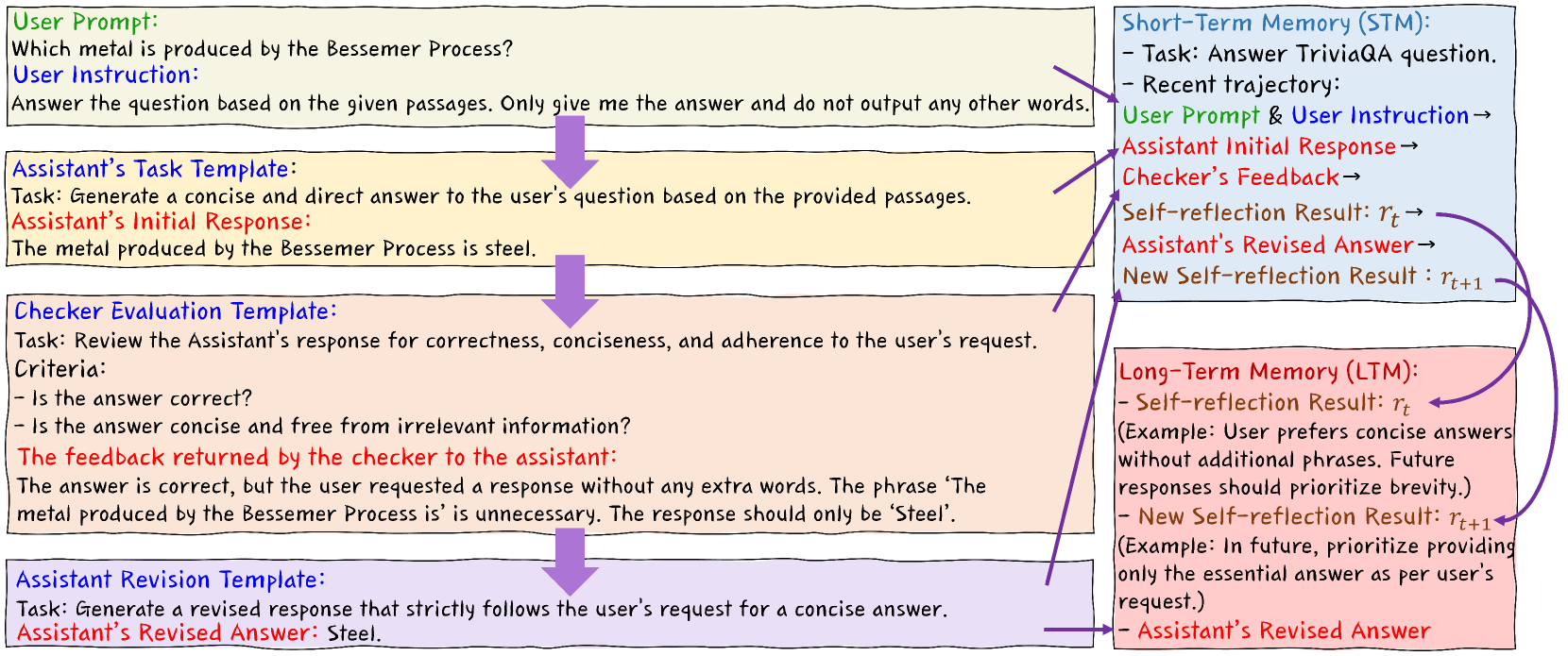

The diagram illustrates a self-reflective learning loop in an AI system, applied to a question-answering task. It shows the interaction between a User, an Assistant, a Checker, and two memory components: Short-Term Memory (STM) and Long-Term Memory (LTM). The loop is iterative: the Assistant generates an answer, the Checker evaluates it, and the Assistant revises it based on the feedback.

### Components

The diagram is structured into three main sections: User Interaction (left), Short-Term Memory (top-right), and Long-Term Memory (bottom-right). Arrows indicate the flow of information. Text boxes contain descriptions of tasks and responses.

* **User Prompt:** "Which metal is produced by the Bessemer Process?"

* **User Instruction:** "Answer the question based on the given passages. Only give me the answer and do not output any other words."

* **Assistant's Task Template:** "Task: Generate a concise and direct answer to the user's question based on the provided passages."

* **Assistant's Initial Response:** "The metal produced by the Bessemer Process is steel."

* **Checker Evaluation Template:** "Task: Review the Assistant's response for correctness, conciseness, and adherence to the user's request. Criteria: - Is the answer correct? - Is the answer concise and free from irrelevant information?"

* **Checker Feedback:** "The answer is correct, but the user requested a response without any extra words. The phrase 'The metal produced by the Bessemer Process is' is unnecessary. The response should only be 'Steel'."

* **Assistant Revision Template:** "Task: Generate a revised response that strictly follows the user's request for a concise answer."

* **Assistant's Revised Answer:** "Steel."

* **Short-Term Memory (STM):** Labeled with "Task: Answer TriviaQA question." and "Recent trajectory: User Prompt & User Instruction -> Assistant Initial Response -> Checker's Feedback -> Self-reflection Result: τ<sub>i</sub> -> Assistant's Revised Answer"

* **Long-Term Memory (LTM):** Labeled with "Self-reflection Result: τ<sub>i</sub> (Example: User prefers concise answers without additional phrases. Future responses should prioritize brevity.)" and "New Self-reflection Result: τ<sub>i+1</sub> (Example: In future, prioritize providing only the essential answer as per user's request.)"
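
The two memory components listed above can be pictured as simple containers. The following is a minimal sketch, not the system's actual implementation: the class names `ShortTermMemory` and `LongTermMemory`, their fields, and their methods are all assumptions introduced for illustration. Only the task label and the example strings come from the diagram.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ShortTermMemory:
    """Hypothetical container: task label plus the trajectory of one interaction."""
    task: str
    trajectory: List[str] = field(default_factory=list)

    def record(self, step: str, content: str) -> None:
        # Append one labeled step, e.g. "User Prompt: ...".
        self.trajectory.append(f"{step}: {content}")


@dataclass
class LongTermMemory:
    """Hypothetical container: self-reflection results kept across interactions."""
    reflections: List[str] = field(default_factory=list)

    def add(self, reflection: str) -> int:
        # Store a reflection and return its index i (as in tau_i).
        self.reflections.append(reflection)
        return len(self.reflections) - 1

    def latest(self) -> Optional[str]:
        # Most recent reflection, or None if nothing has been stored yet.
        return self.reflections[-1] if self.reflections else None


stm = ShortTermMemory(task="Answer TriviaQA question.")
stm.record("User Prompt", "Which metal is produced by the Bessemer Process?")
stm.record("Assistant Initial Response",
           "The metal produced by the Bessemer Process is steel.")

ltm = LongTermMemory()
index = ltm.add("User prefers concise answers without additional phrases.")
```

In this sketch the STM is scoped to one interaction, while the LTM is an append-only list whose positions play the role of the index in τ<sub>i</sub>.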

### Detailed Analysis

The diagram illustrates a four-step process:

1. **Initial Response Generation:** The Assistant generates an initial response to the User Prompt based on the provided Task Template.

2. **Evaluation & Feedback:** The Checker evaluates the Assistant's response against the User Instruction and provides feedback.

3. **Revision:** The Assistant revises its response based on the Checker's feedback, guided by the Revision Template.

4. **Memory Update:** The learning process is captured in both Short-Term Memory (STM) and Long-Term Memory (LTM). STM records the recent trajectory of the interaction, while LTM stores generalized self-reflection results for future use.

The initial response is verbose ("The metal produced by the Bessemer Process is steel."). The feedback highlights the need for conciseness. The revised response is concise ("Steel."). The STM shows the flow of information, and the LTM shows how the system learns from the interaction to improve future responses.
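
The four steps can be sketched as a small loop. This is an illustrative sketch under stated assumptions, not the method shown in the diagram: the Assistant and Checker are replaced by rule-based stubs instead of model calls, and the function names (`assistant_initial`, `checker`, `assistant_revise`, `run_loop`) and the `max_rounds` parameter are hypothetical.

```python
from typing import List, Tuple


def assistant_initial(question: str) -> str:
    # Stub standing in for a model call; returns the verbose answer from the diagram.
    return "The metal produced by the Bessemer Process is steel."


def checker(response: str, answer_only: bool) -> Tuple[bool, str]:
    # Rule-based stand-in for the Checker: flags multi-word answers
    # when the user asked for the answer only.
    words = response.rstrip(".").split()
    if answer_only and len(words) > 1:
        return False, "Correct, but the user requested the answer without extra words."
    return True, "OK"


def assistant_revise(response: str, feedback: str) -> str:
    # Stub revision: keep only the final word of the previous response.
    last_word = response.rstrip(".").split()[-1]
    return last_word.capitalize() + "."


def run_loop(question: str, answer_only: bool, ltm: List[str],
             max_rounds: int = 3) -> Tuple[str, List[str]]:
    stm = [f"User Prompt: {question}"]                 # short-term trajectory
    response = assistant_initial(question)             # step 1: initial response
    stm.append(f"Assistant Initial Response: {response}")
    for _ in range(max_rounds):
        passed, feedback = checker(response, answer_only)  # step 2: evaluation
        if passed:
            break
        stm.append(f"Checker's Feedback: {feedback}")
        reflection = "User prefers concise answers without additional phrases."
        stm.append(f"Self-reflection Result: {reflection}")
        ltm.append(reflection)                         # step 4: long-term update
        response = assistant_revise(response, feedback)    # step 3: revision
        stm.append(f"Assistant's Revised Answer: {response}")
    return response, stm


long_term_memory: List[str] = []
final_answer, trajectory = run_loop(
    "Which metal is produced by the Bessemer Process?",
    answer_only=True,
    ltm=long_term_memory,
)
print(final_answer)
```

With these stubs the loop runs one revision round: the verbose answer fails the check, a reflection is stored, and the revised answer passes on the next check. The order of entries in `trajectory` mirrors the "Recent trajectory" label on the STM box.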

### Key Observations

* The diagram emphasizes the importance of adhering to user instructions, even if the initial response is technically correct.

* The self-reflection mechanism allows the system to learn from its mistakes and improve its performance over time.

* The distinction between STM and LTM suggests a two-tier memory structure: STM holds the trajectory of a single interaction, and LTM keeps the generalized reflections distilled from it.

* The τ<sub>i</sub> and τ<sub>i+1</sub> notation indexes successive self-reflection results, which indicates that reflections accumulate step by step rather than being produced once.

### Interpretation

The diagram presents a process and a system architecture rather than data: it is a conceptual model of how an AI agent can learn to follow user requests more closely. Its emphasis goes beyond answer accuracy to adherence to specific instructions, here the request for an answer with no other words. Feedback is used not only to correct the current response but also, through the reflections stored in memory, to shape future responses. The indexed notation (τ<sub>i</sub>, τ<sub>i+1</sub>) hints at a formal model behind the learning process, possibly reinforcement learning or a similar technique, although the diagram itself does not specify one.