TECHNICAL ASSET FINGERPRINT

dc82e7efc09e35d870be48d3


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


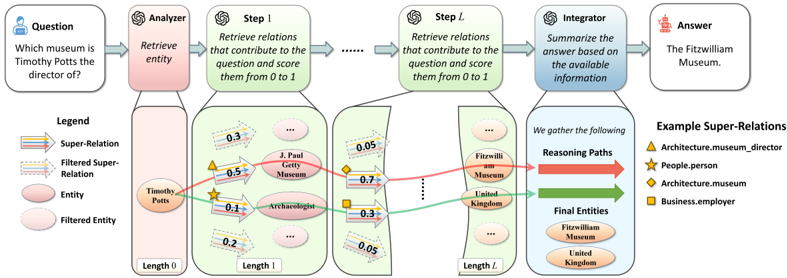

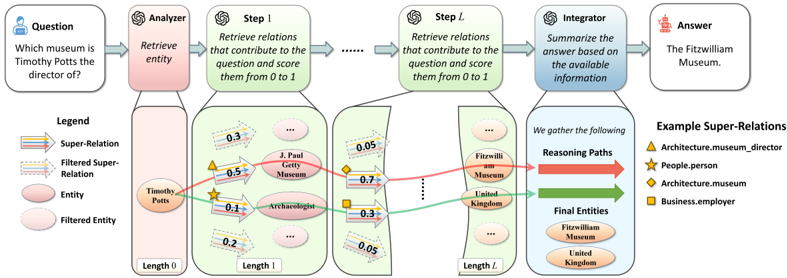

## Reasoning Path Diagram: Question Answering System

### Overview

The image presents a diagram illustrating a reasoning path for a question-answering system. It outlines the process from receiving a question to providing an answer, involving entity retrieval, relation scoring, and information summarization. The diagram uses visual elements like arrows, shapes, and colors to represent different components and their relationships.

### Components/Axes

* **Question:** The initial input, represented by a speech bubble icon and the text "Which museum is Timothy Potts the director of?".

* **Analyzer:** A step that retrieves the entity from the question.

* **Step 1:** Retrieves relations that contribute to the question and scores them from 0 to 1.

* **Step L:** Retrieves relations that contribute to the question and scores them from 0 to 1.

* **Integrator:** Summarizes the answer based on the available information.

* **Answer:** The final output, "The Fitzwilliam Museum."

* **Legend:** Located on the left side of the diagram.

  * **Super-Relation:** Represented by a solid arrow.

  * **Filtered Super-Relation:** Represented by a dashed arrow.

  * **Entity:** Represented by a filled oval.

  * **Filtered Entity:** Represented by an unfilled oval.

* **Example Super-Relations:** Located on the right side of the diagram.

  * Architecture.museum\_director (Triangle)

  * People.person (Star)

  * Architecture.museum (Diamond)

  * Business.employer (Square)

* **Reasoning Paths:**

  * Red line

  * Green line

* **Final Entities:**

  * Fitzwilliam Museum

  * United Kingdom

* **Length:**

  * Length 0

  * Length 1

  * Length L
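The legend above distinguishes kept and filtered elements and attaches a 0-to-1 score to each super-relation. A minimal sketch of that data model follows; the class names `Entity` and `SuperRelation` are hypothetical, and the score is illustrative rather than read from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    """A node in the diagram; filtered=True corresponds to the unfilled oval."""
    name: str
    filtered: bool = False


@dataclass(frozen=True)
class SuperRelation:
    """An arrow in the diagram: solid when kept, dashed when filtered."""
    name: str     # e.g. "Architecture.museum_director"
    head: Entity
    tail: Entity
    score: float  # relevance score, expected to lie in [0, 1]
    filtered: bool = False

    def __post_init__(self) -> None:
        # Reject scores outside the 0-to-1 range the diagram describes.
        if not 0.0 <= self.score <= 1.0:
            raise ValueError(f"score must be in [0, 1], got {self.score}")


potts = Entity("Timothy Potts")
getty = Entity("J. Paul Getty Museum")
# Illustrative score only; not taken from the figure.
edge = SuperRelation("Architecture.museum_director", potts, getty, score=0.5)
```

Validating the score in `__post_init__` keeps out-of-range values from silently entering later ranking or filtering steps.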

### Detailed Analysis or Content Details

The diagram shows a flow from left to right, starting with the question and ending with the answer.

1. **Question:** "Which museum is Timothy Potts the director of?"

2. **Analyzer:** Retrieves the entity "Timothy Potts".

3. **Step 1:**

   * From "Timothy Potts", a red line (reasoning path) leads to "J. Paul Getty Museum". Two filtered super-relations (dashed arrows), both marked with the triangle symbol (Architecture.museum\_director), point away from "J. Paul Getty Museum", with scores of approximately 0.3 and 0.5.

   * From "Timothy Potts", a green line (reasoning path) leads to "Archaeologist". Two filtered super-relations (dashed arrows) point away from "Archaeologist": one marked with the star symbol (People.person) and scored approximately 0.1, and one marked with the triangle symbol (Architecture.museum\_director) and scored approximately 0.2.

4. **Step L:**

   * A red line (reasoning path) leads to "Fitzwilliam Museum". Two filtered super-relations (dashed arrows) point away from it: one marked with the diamond symbol (Architecture.museum) and scored approximately 0.05, and one marked with the triangle symbol (Architecture.museum\_director) and scored approximately 0.7.

   * A green line (reasoning path) leads to "United Kingdom". Two filtered super-relations (dashed arrows) point away from it: one marked with the square symbol (Business.employer) and scored approximately 0.3, and one marked with the triangle symbol (Architecture.museum\_director) and scored approximately 0.05.

5. **Integrator:** Summarizes the answer based on the available information.

6. **Answer:** "The Fitzwilliam Museum."

### Key Observations

* The diagram uses different colored lines (red and green) to represent different reasoning paths.

* The filtered super-relations are scored between 0 and 1, indicating the strength of the relation.

* The example super-relations provide context for the types of relationships being considered.

### Interpretation

The diagram illustrates a knowledge graph traversal approach to answering questions. The system starts with an initial entity, explores related entities through different reasoning paths, and scores these relations to arrive at the final answer. The use of filtered super-relations and scoring allows the system to prioritize more relevant information. The diagram highlights the importance of entity recognition, relation extraction, and reasoning in question-answering systems. The different reasoning paths suggest that the system explores multiple possibilities before arriving at the final answer.
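The traversal just described (seed entity, repeated scored expansion, then integration) can be sketched end to end. This is a toy under stated assumptions: the graph edges, the relation scores, the beam `width`, and the helper names (`analyzer`, `score_relation`, `reason`, `integrator`) are all hypothetical, and the real system scores relations with a language model rather than a lookup table.

```python
# Hypothetical toy graph: head entity -> list of (relation, tail entity).
# Edges and scores are invented for illustration, not read from the diagram.
TOY_KG: dict[str, list[tuple[str, str]]] = {
    "Timothy Potts": [
        ("architecture.museum_director", "J. Paul Getty Museum"),
        ("people.person", "Archaeologist"),
    ],
    "J. Paul Getty Museum": [("architecture.museum", "Fitzwilliam Museum")],
    "Archaeologist": [("business.employer", "United Kingdom")],
}

# Stand-in for the relation scorer (a language model in the described system).
TOY_SCORES = {
    "architecture.museum_director": 0.9,
    "architecture.museum": 0.6,
    "business.employer": 0.3,
    "people.person": 0.2,
}

Path = tuple[str, ...]  # entity, relation, entity, relation, ...


def analyzer(question: str, kg: dict[str, list[tuple[str, str]]]) -> list[str]:
    """Entity retrieval stand-in: keep graph entities mentioned verbatim."""
    return [entity for entity in kg if entity in question]


def score_relation(question: str, relation: str) -> float:
    """Return a score in [0, 1]; this toy version ignores the question."""
    return TOY_SCORES.get(relation, 0.0)


def reason(question: str, kg: dict[str, list[tuple[str, str]]],
           max_steps: int, width: int) -> list[Path]:
    """Run Step 1 .. Step L: extend each path by one hop, keep the top `width`."""
    paths: list[Path] = [(seed,) for seed in analyzer(question, kg)]  # length 0
    for _ in range(max_steps):
        candidates: list[tuple[float, Path]] = []
        for path in paths:
            for relation, tail in kg.get(path[-1], []):
                score = score_relation(question, relation)
                candidates.append((score, path + (relation, tail)))
        if not candidates:
            break  # nothing left to expand; keep the current paths
        candidates.sort(key=lambda c: c[0], reverse=True)
        # Paths that could not be extended are dropped in this sketch.
        paths = [path for _, path in candidates[:width]]
    return paths


def integrator(paths: list[Path]) -> list[str]:
    """Collect the tail entity of each surviving path, best-ranked first."""
    return [path[-1] for path in paths]


question = "Which museum is Timothy Potts the director of?"
final_entities = integrator(reason(question, TOY_KG, max_steps=2, width=2))
```

With these toy values, `final_entities` holds the same two entities the diagram lists (Fitzwilliam Museum, then United Kingdom). A real Integrator would summarize the gathered paths rather than only list their tails.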


EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Knowledge Graph Reasoning Process

### Overview

This diagram illustrates a multi-step process for answering a question using a knowledge graph. The process involves an Analyzer, multiple Reasoning Steps (Step 1 to Step L), an Integrator, and ultimately provides an Answer. The diagram visually represents the flow of information and relationships between entities within the knowledge graph.

### Components/Axes

The diagram is structured horizontally, representing a sequential process. Key components include:

* **Question:** "Which museum is Timothy Potts the director of?" (Top-left, within a speech bubble)

* **Analyzer:** A box labeled "Analyzer" with the text "Retrieve entity".

* **Step 1 to Step L:** Multiple boxes labeled "Step 1" through "Step L", each containing a knowledge graph fragment and the text "Retrieve relations that contribute to the question and score them from 0 to 1".

* **Integrator:** A box labeled "Integrator" with the text "Summarize the answer based on the available information".

* **Answer:** "The Fitzwilliam Museum." (Top-right, within a speech bubble)

* **Legend:** Located on the bottom-left, defining the visual representation of relationships and entities.

  * Orange Arrow: Super-Relation

  * Light Blue Arrow: Filtered Super-Relation

  * Light Green Circle: Entity

  * Light Blue Circle: Filtered Entity

* **Example Super-Relations:** Located on the bottom-right, defining the color-coding for relation types.

  * Yellow Triangle: Architecture.museum_director

  * Red Circle: People.person

  * Green Rectangle: Architecture.museum

  * Blue Square: Business.employer

* **Entities:** Timothy Potts, J. Paul Getty Museum, Archaeologist, Fitzwilliam Museum, United Kingdom.

* **Reasoning Paths:** Text block stating "We gather the following Reasoning Paths".

* **Final Entities:** Fitzwilliam Museum, United Kingdom.

### Detailed Analysis or Content Details

The diagram shows the progression of reasoning through the knowledge graph.

**Step 1:**

* Timothy Potts (Entity, Light Green Circle) is connected to J. Paul Getty Museum (Entity, Light Green Circle) via a Super-Relation (Orange Arrow) with a score of 0.4.

* Timothy Potts is connected to Archaeologist (Entity, Light Green Circle) via a Super-Relation with a score of 0.1.

* Archaeologist is connected to J. Paul Getty Museum via a Super-Relation with a score of 0.2.

* Length 1 is indicated below this step.

**Step L:**

* Fitzwilliam Museum (Entity, Light Green Circle) is connected to United Kingdom (Entity, Light Green Circle) via a Super-Relation (Orange Arrow) with a score of 0.7.

* Fitzwilliam Museum is connected to an unnamed entity (Entity, Light Green Circle) via a Super-Relation with a score of 0.05.

* Length L is indicated below this step.

The connections between entities are represented by arrows with associated numerical scores between 0 and 1, which indicate the strength or relevance of each relationship. Filtered Super-Relations (Light Blue Arrows) are also present, but their specific connections cannot be clearly identified in the visible portion of the diagram.

### Key Observations

* The diagram demonstrates a process of iteratively refining relationships between entities to arrive at an answer.

* The scores associated with the relationships suggest varying degrees of confidence or relevance.

* The diagram highlights the importance of multiple reasoning steps (Step 1 to Step L) in complex knowledge graph queries.

* The final answer, "The Fitzwilliam Museum," is derived from the accumulated evidence gathered through the reasoning steps.

### Interpretation

The diagram illustrates a knowledge graph-based question answering system. The system begins with a natural language question and transforms it into a series of graph traversals. Each step refines the search by retrieving relevant relationships and scoring them based on their contribution to the answer. The Integrator component then synthesizes the information from all steps to provide a final answer. The use of scores suggests a probabilistic or weighted approach to reasoning, where stronger relationships contribute more to the final result. The diagram emphasizes the importance of representing knowledge as a graph and leveraging graph algorithms for complex reasoning tasks. The example super-relations provide a taxonomy of the types of relationships stored within the knowledge graph. The diagram does not provide any quantitative data about the performance of the system, but it visually conveys the underlying logic and flow of information.
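The interpretation above suggests that stronger relationships contribute more to the final result, but the diagram does not say how per-hop scores are combined along a path. One hypothetical rule is to multiply them, treating each as an independent confidence in [0, 1]. The per-hop values below are illustrative only.

```python
import math


def path_score(hop_scores: list[float]) -> float:
    """Combine per-hop scores in [0, 1] by multiplication (assumed rule)."""
    return math.prod(hop_scores)


# Illustrative two-hop paths keyed by their final entity.
paths = {
    "Fitzwilliam Museum": [0.5, 0.7],
    "United Kingdom": [0.1, 0.3],
}
ranked = sorted(paths, key=lambda entity: path_score(paths[entity]), reverse=True)
for entity in ranked:
    print(f"{entity}: {path_score(paths[entity]):.3f}")
```

Multiplying penalizes longer paths, since each extra hop can only lower the score. Taking the minimum hop score or a geometric mean are common alternatives when path lengths differ.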


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Knowledge Graph Question-Answering Process Flowchart

### Overview

The image is a technical flowchart illustrating a multi-step process for answering a natural language question by retrieving and reasoning over a knowledge graph. The process begins with a specific question, retrieves relevant entities and relations, scores and filters them, and finally integrates the information to produce a concise answer. The diagram uses a combination of boxes, arrows, icons, and a legend to explain the components and data flow.

### Components/Axes

The diagram is organized into five main vertical stages, flowing from left to right:

1. **Question (Far Left):** A box containing the input query: "Which museum is Timothy Potts the director of?"

2. **Analyzer (Left):** A pink box labeled "Analyzer" with the sub-task "Retrieve entity." An arrow points from the Question to this box.

3. **Step I (Center-Left):** A green box labeled "Step I" with the description: "Retrieve relations that contribute to the question and score them from 0 to 1." This box contains a sub-diagram showing the retrieval process.

4. **Step L (Center-Right):** A green box labeled "Step L" (indicating a later or final step in a sequence) with the same description as Step I. It contains another sub-diagram showing further relation retrieval and scoring.

5. **Integrator & Answer (Right):** A blue box labeled "Integrator" with the task: "Summarize the answer based on the available information." This leads to the final "Answer" box containing: "The Fitzwilliam Museum."

**Legend (Bottom-Left):**

* **Super-Relation:** Represented by a solid, multi-colored (red, yellow, green) arrow.

* **Filtered Super-Relation:** Represented by a dashed, multi-colored arrow.

* **Entity:** Represented by a solid pink oval.

* **Filtered Entity:** Represented by a dashed pink oval.

**Example Super-Relations (Far Right):**

A key listing relation types with corresponding icons:

* ▲ `Architecture.museum_director`

* ★ `People.person`

* ◆ `Architecture.museum`

* ■ `Business.employer`
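Each key entry is a two-part `domain.type` identifier. Suppose, as a hypothetical reading not stated in the diagram, that a super-relation groups every Freebase-style `domain.type.property` relation sharing that two-part prefix. The grouping can then be sketched as follows. The relation identifiers are chosen for illustration.

```python
from collections import defaultdict


def group_into_super_relations(relations: list[str]) -> dict[str, list[str]]:
    """Group 'domain.type.property' ids under their 'domain.type' prefix."""
    groups: dict[str, list[str]] = defaultdict(list)
    for relation in relations:
        parts = relation.split(".")
        if len(parts) < 3:
            continue  # not a three-part id; skipped in this sketch
        groups[".".join(parts[:2])].append(relation)
    return dict(groups)


relations = [
    "architecture.museum_director.museum",
    "architecture.museum.director",
    "people.person.profession",
    "people.person.nationality",
    "business.employer.employees",
]
for super_relation, members in group_into_super_relations(relations).items():
    print(super_relation, "->", members)
```

Grouping this way means the scorer ranks a handful of prefixes instead of every individual relation, which is one plausible motivation for working with super-relations.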

### Detailed Analysis

The process flow and data within each stage are as follows:

**1. Entity Retrieval (Analyzer Stage):**

* The initial entity retrieved from the question is **"Timothy Potts"** (shown in a solid pink oval).

**2. First Relation Retrieval & Scoring (Step I):**

* From "Timothy Potts," several relations are retrieved and assigned scores:

  * A **Super-Relation** (solid arrow) with score **0.5** connects to the entity **"J. Paul Getty Museum"**. This relation is tagged with the icon for `Architecture.museum_director` (▲).

  * A **Super-Relation** (solid arrow) with score **0.1** connects to the entity **"Archaeologist"**. This relation is tagged with the icon for `People.person` (★).

  * Two **Filtered Super-Relations** (dashed arrows) with scores **0.3** and **0.2** are shown but lead to no specific entity in this step, indicating they were deemed less relevant.

* The length of this processing step is noted as **"Length 0"** at the bottom.

**3. Subsequent Relation Retrieval & Scoring (Step L):**

* This step shows relations retrieved from the entities found in Step I.

* From **"J. Paul Getty Museum"**:

  * A **Super-Relation** (solid arrow) with a high score of **0.7** connects to the entity **"Fitzwilliam Museum"**. This relation is tagged with the icon for `Architecture.museum` (◆).

  * A **Filtered Super-Relation** (dashed arrow) with a low score of **0.05** is shown.

* From **"Archaeologist"**:

  * A **Super-Relation** (solid arrow) with a score of **0.3** connects to the entity **"United Kingdom"**. This relation is tagged with the icon for `Business.employer` (■).

  * A **Filtered Super-Relation** (dashed arrow) with a score of **0.05** is shown.

* The length of this processing step is noted as **"Length L"** at the bottom.

**4. Integration and Answer Formulation (Integrator Stage):**

* The Integrator box states: **"We gather the following"**.

* It visualizes two **"Reasoning Paths"** using thick, colored arrows:

  * A **red path** (corresponding to the `museum_director` relation chain).

  * A **green path** (corresponding to the `person` and `employer` relation chain).

* It lists the **"Final Entities"** identified as most relevant:

  * **Fitzwilliam Museum** (in a solid pink oval).

  * **United Kingdom** (in a solid pink oval).

* The final output is the answer: **"The Fitzwilliam Museum."**

### Key Observations

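* **Scoring and Filtering:** The system assigns numerical confidence scores (0 to 1) to retrieved relations. Low-scoring relations (e.g., 0.05, 0.2) are drawn as filtered (dashed lines), while higher-scoring ones (e.g., 0.5, 0.7, and the 0.3 `Business.employer` relation in Step L) are retained to form reasoning paths. The split is not a strict cutoff, however: in Step I, the 0.1 `People.person` relation is retained while a 0.3 relation is filtered.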

* **Multi-Hop Reasoning:** The answer is not found in a single step. The system first links "Timothy Potts" to the "J. Paul Getty Museum" (as a director), and then from that museum, finds a relation to the "Fitzwilliam Museum," suggesting a chain of connections.

* **Role of Different Relation Types:** The process uses multiple relation types (`museum_director`, `person`, `museum`, `employer`) to build context, though the final answer appears to derive primarily from the `museum_director` and `museum` relation chain.

* **Spatial Layout:** The legend is positioned in the bottom-left for reference. The main process flows linearly left-to-right. The "Example Super-Relations" key is placed on the far right, separate from the main flow but accessible for decoding the icons used within the diagram.
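The scoring-and-filtering observation can be approximated with a fixed cutoff. This minimal sketch assumes a hypothetical threshold of 0.25, chosen only because it separates the low example scores (0.05, 0.2) from the higher ones (0.3, 0.5, 0.7). The diagram states no threshold. A fixed cutoff also does not reproduce every arrow in this reading: in Step I, a 0.1 relation is drawn solid while a 0.3 relation is drawn dashed.

```python
def split_by_threshold(scores: list[float], threshold: float) -> tuple[list[float], list[float]]:
    """Return (kept, filtered): kept scores map to solid arrows, the rest to dashed."""
    kept = [s for s in scores if s >= threshold]
    filtered = [s for s in scores if s < threshold]
    return kept, filtered


# Example scores from the description above; the threshold is hypothetical.
kept, filtered = split_by_threshold([0.5, 0.7, 0.3, 0.05, 0.2], threshold=0.25)
```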

### Interpretation

This diagram demonstrates a **structured, score-based approach to question answering over a knowledge graph**. It breaks down the complex task of answering "Which museum is Timothy Potts the director of?" into discrete, manageable sub-tasks: entity recognition, relation retrieval, relevance scoring, filtering, and path integration.

The process highlights several important concepts in AI and knowledge representation:

1. **Uncertainty Management:** The use of scores from 0 to 1 explicitly models the system's confidence in each piece of retrieved information, allowing it to prioritize strong evidence.

2. **Explainability:** The visualization of "Reasoning Paths" (red and green arrows) makes the chain of logic leading to the answer transparent. One can trace that the answer "Fitzwilliam Museum" was reached via a high-confidence (0.7) `museum` relation from the "J. Paul Getty Museum," which itself was linked to Timothy Potts via a `museum_director` relation.

3. **Efficiency through Filtering:** By visually and logically filtering out low-scoring relations (dashed lines), the system focuses computational resources on the most promising data, mimicking a form of attention or pruning.

The presence of the "United Kingdom" as a final entity, linked via an `employer` relation from "Archaeologist," suggests the system also retrieved tangential but potentially relevant contextual information, even if it wasn't directly used for the final answer. This indicates a comprehensive retrieval strategy that gathers broader context before integrative summarization.
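The pruning described in point 3 can also use a fixed budget instead of a cutoff: keep only the `k` highest-scoring relations at each step. The sketch below uses the standard-library `heapq.nlargest`. The relation labels and `k` are hypothetical.

```python
import heapq


def prune_top_k(scored: list[tuple[str, float]], k: int) -> list[tuple[str, float]]:
    """Keep the k highest-scoring (relation, score) pairs, highest first."""
    return heapq.nlargest(k, scored, key=lambda item: item[1])


candidates = [
    ("relation_a", 0.5),
    ("relation_b", 0.3),
    ("relation_c", 0.2),
    ("relation_d", 0.05),
]
kept = prune_top_k(candidates, k=2)
```

Unlike a threshold, a top-k budget bounds the work done per step regardless of how the scores are distributed. The trade-off is that it can keep weak relations when few candidates exist, or drop strong ones when many do.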


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Flowchart: Entity and Relation Analysis for Question Answering

### Overview

The flowchart illustrates a multi-step process for answering the question: "Which museum is Timothy Potts the director of?" It demonstrates how an AI system retrieves entities, analyzes relationships, scores contributions, and integrates information to arrive at the answer: "The Fitzwilliam Museum." The diagram includes confidence scores for each step and visualizes reasoning paths.

### Components/Axes

1. **Legend** (bottom-left):

   - **Super-Relation**: Red line (e.g., Architecture.museum_director)

   - **Filtered Super-Relation**: Green line (e.g., People.person)

   - **Entity**: Pink oval (e.g., Timothy Potts)

   - **Filtered Entity**: Light pink oval (e.g., United Kingdom)

2. **Process Flow**:

   - **Question** (top-left): "Which museum is Timothy Potts the director of?"

   - **Analyzer** (pink box): Retrieves the entity "Timothy Potts" (Length 0).

   - **Step 1** (green box): Retrieves relations and the entities they reach (e.g., the entity J. Paul Getty Museum and the relation Architecture.museum) and scores them (0.3–0.5).

   - **Step L** (green box): Continues retrieving and scoring relations (e.g., the entity Fitzwilliam Museum and the relation Architecture.museum), with scores of 0.1–0.7.

   - **Integrator** (blue box): Summarizes information to determine the final answer.

   - **Answer** (top-right): "The Fitzwilliam Museum."

3. **Example Super-Relations** (right):

   - Architecture.museum_director (triangle)

   - People.person (star)

   - Architecture.museum (diamond)

   - Business.employer (square)

### Detailed Analysis

1. **Step 1 (Length 1)**:

   - **Timothy Potts** (Entity) connects to:

     - J. Paul Getty Museum (Super-Relation: Architecture.museum_director, score 0.5)

     - Archaeologist (Filtered Super-Relation: People.person, score 0.1)

   - Less relevant entities receive lower confidence scores (e.g., a score of 0.2 shown near the "Length 0" label).

2. **Step L (Length L)**:

   - **Timothy Potts** connects to:

     - Fitzwilliam Museum (Super-Relation: Architecture.museum, score 0.7)

     - United Kingdom (Filtered Super-Relation: Business.employer, score 0.3)

   - Higher scores indicate stronger relevance to the question.

3. **Integrator**:

   - Combines reasoning paths:

     - Red path (Super-Relation): Timothy Potts → Fitzwilliam Museum (score 0.7)

     - Green path (Filtered Super-Relation): Timothy Potts → United Kingdom (score 0.3)

   - The final answer is taken from the highest-scoring path.
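In this reading, the Integrator takes the answer from the highest-scoring path, which reduces to an argmax over the gathered paths. The sketch below uses the two paths and scores listed above. The `ReasoningPath` type is a hypothetical name. In the described system, the Integrator summarizes the available information rather than applying a fixed rule.

```python
from typing import NamedTuple


class ReasoningPath(NamedTuple):
    color: str                 # path color in the diagram
    entities: tuple[str, ...]  # entities visited, in order
    score: float


def integrate(paths: list[ReasoningPath]) -> str:
    """Return the final entity of the highest-scoring path."""
    best = max(paths, key=lambda path: path.score)
    return best.entities[-1]


paths = [
    ReasoningPath("red", ("Timothy Potts", "Fitzwilliam Museum"), 0.7),
    ReasoningPath("green", ("Timothy Potts", "United Kingdom"), 0.3),
]
answer = integrate(paths)
print(f"The {answer}.")
```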

### Key Observations

- **Confidence Scores**: Scores range from 0.1 (low confidence) to 0.7 (high confidence). The Fitzwilliam Museum has the highest score (0.7), making it the most likely answer.

- **Filtering**: Filtered entities (e.g., United Kingdom) have lower scores, indicating indirect relevance.

- **Flow Direction**: The process moves from entity retrieval (the Analyzer, Length 0) to iterative relation analysis (Steps 1 to L) and final integration.

### Interpretation

The diagram demonstrates a knowledge graph traversal system that:

1. **Breaks down questions** into entities and relationships.

2. **Scores relevance** at each step using confidence metrics.

3. **Filters noise** by prioritizing direct relationships (e.g., Architecture.museum_director over People.person).

4. **Integrates paths** to resolve ambiguity, favoring the highest-confidence route.

The Fitzwilliam Museum is identified as the answer because its direct super-relation (Architecture.museum) with Timothy Potts has the highest score (0.7), outweighing indirect connections like Business.employer (United Kingdom, score 0.3). This reflects the system's ability to distinguish primary vs. secondary roles in hierarchical data.
