## Flowchart: Benchmark Generation Pipeline

### Overview

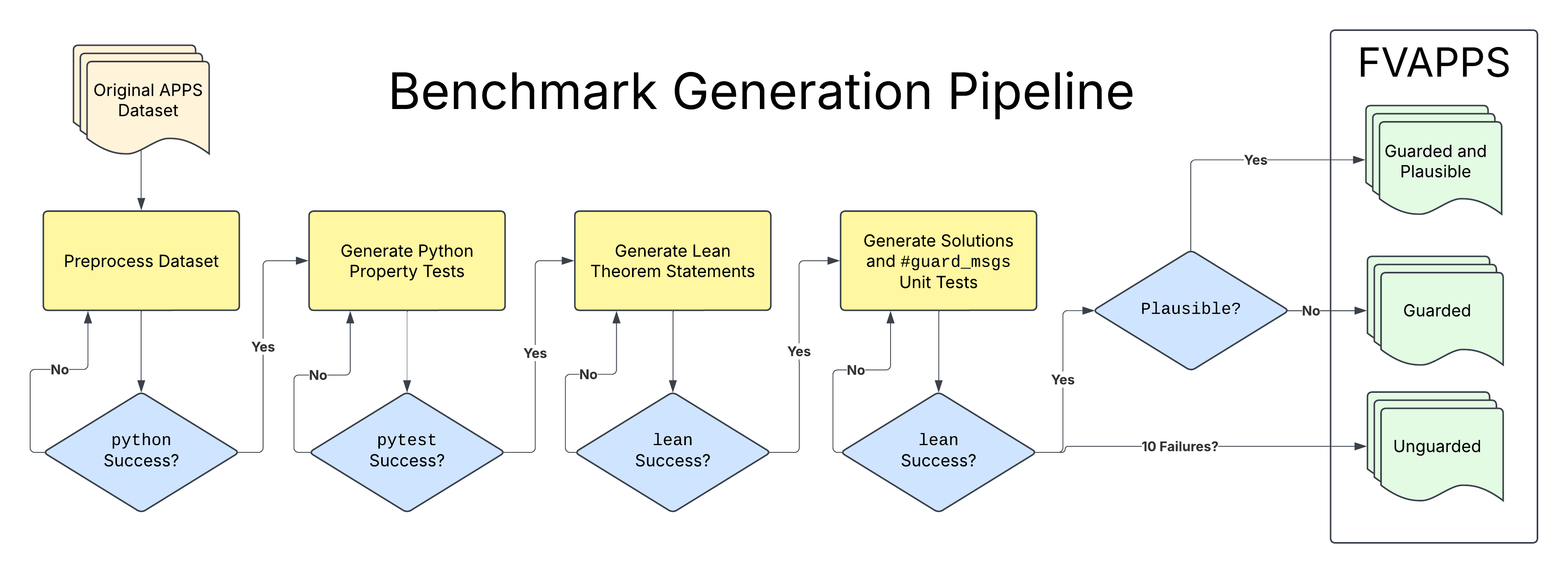

The flowchart illustrates a multi-stage pipeline for generating and validating benchmark solutions. It begins with an original dataset and progresses through preprocessing, test generation, and validation stages. The final output categorizes solutions into three FVAPPS classifications: Guarded and Plausible, Guarded, and Unguarded.

### Components/Axes

- **Nodes**:

  - Process steps (yellow boxes): Preprocess Dataset, Generate Python Property Tests, Generate Lean Theorem Statements, Generate Solutions and #guard_msgs Unit Tests

  - Decision points (blue diamonds): Python Success?, pytest Success?, lean Success?, Plausible?, Lean Success?

- **Arrows**:

  - Solid arrows indicate sequential flow

  - Dashed arrows represent conditional loops

- **Legend**:

  - Right-side vertical box categorizes final outputs:

    - Guarded and Plausible (green)

    - Guarded (green)

    - Unguarded (green)

### Detailed Analysis

1. **Preprocess Dataset** → **Python Success?**

   - Yes: Proceed to Generate Python Property Tests

   - No: Loop back to Preprocess Dataset

2. **Generate Python Property Tests** → **pytest Success?**

   - Yes: Proceed to Generate Lean Theorem Statements

   - No: Loop back to Preprocess Dataset

3. **Generate Lean Theorem Statements** → **lean Success?**

   - Yes: Proceed to Generate Solutions and #guard_msgs Unit Tests

   - No: Loop back to Generate Python Property Tests

4. **Generate Solutions and #guard_msgs Unit Tests** → **Plausible?**

   - Yes: Final output categorized as "Guarded and Plausible"

   - No:

     - If 10 failures: Categorized as "Unguarded"

     - Otherwise: Categorized as "Guarded"
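The loop-back behaviour of the first three stages can be sketched as a small state machine. This is a minimal illustration, not the pipeline's actual code: the stage names, the `checks` callables, and the `max_steps` budget are all hypothetical, and only the loop-back targets are taken from the steps above.

```python
from typing import Callable, Dict, List

# Stage order for the first three gated steps (names are illustrative).
STAGES = ["preprocess", "python_property_tests", "lean_theorem_statements"]

# Where each stage returns to when its check fails, per the steps above.
ON_FAILURE = {
    "preprocess": "preprocess",
    "python_property_tests": "preprocess",
    "lean_theorem_statements": "python_property_tests",
}


def run_gated_stages(
    checks: Dict[str, Callable[[], bool]], max_steps: int = 100
) -> List[str]:
    """Walk the stages in order, looping back on failure.

    Returns the sequence of stages visited. `max_steps` is an assumed
    safety budget; the flowchart does not specify one for these stages.
    """
    trace: List[str] = []
    i = 0
    while i < len(STAGES):
        if len(trace) >= max_steps:
            raise RuntimeError("step budget exhausted")
        stage = STAGES[i]
        trace.append(stage)
        if checks[stage]():
            i += 1  # check passed: advance to the next stage
        else:
            i = STAGES.index(ON_FAILURE[stage])  # check failed: loop back
    return trace
```

With a Lean check that fails once and then succeeds, the trace revisits the Python property test stage before returning to Lean, which matches the loop described in step 3.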

### Key Observations

- **Iterative Design**: Failed checks trigger retries: the first three decision points loop back to an earlier process step, while the final stage counts failures up to a limit of 10

- **Validation Hierarchy**:

  - Python check → pytest → Lean → plausibility check

  - Each stage gates progression to subsequent steps

- **Final Categorization**:

  - "Guarded and Plausible" requires passing all validation stages

  - "Unguarded" is assigned only after 10 failures in the final stage
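The final categorization can be written as a small decision function. This is a sketch under stated assumptions: the function name, its parameters, and the reading of "10 failures" as a simple failure count are hypothetical, since the flowchart does not say exactly what is being counted.

```python
def classify(plausible: bool, failures: int, max_failures: int = 10) -> str:
    """Map the outcome of the final stage to one of the three categories.

    `failures` is assumed to be the number of failed attempts in the final
    stage; `max_failures` defaults to the limit of 10 shown in the flowchart.
    """
    if plausible:
        return "Guarded and Plausible"
    if failures >= max_failures:
        return "Unguarded"
    return "Guarded"
```

Under this reading, "Guarded" is the fallback for any sample that is not plausible but has stayed below the failure limit.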

### Interpretation

This pipeline applies a layered validation process to benchmark generation. Combining Python execution, pytest property tests, and Lean checks suggests a focus on cross-language verification rather than reliance on a single toolchain. When the plausibility check fails, "Guarded" is the default category, and "Unguarded" is assigned only after 10 failures, which indicates a conservative approach to rejecting samples. The "Plausible?" check adds an assessment layer beyond the #guard_msgs unit tests, potentially evaluating solution logic or edge-case handling; the flowchart itself does not say how plausibility is assessed. Progress through the pipeline depends on passing validation at each stage, and the most stringent requirements apply to the top category, "Guarded and Plausible".