TECHNICAL ASSET FINGERPRINT

dca45e2953d2069c29672a16


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


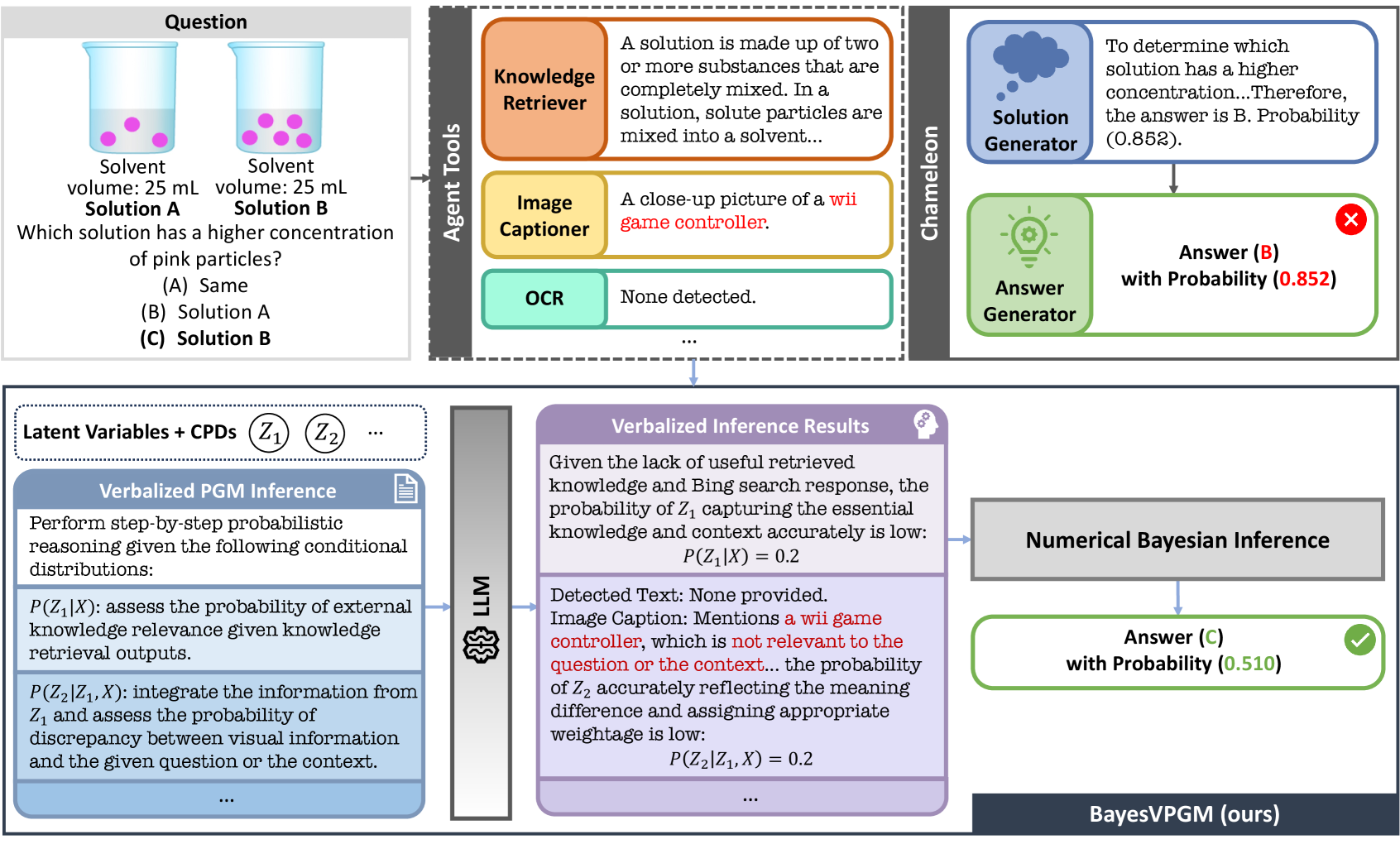

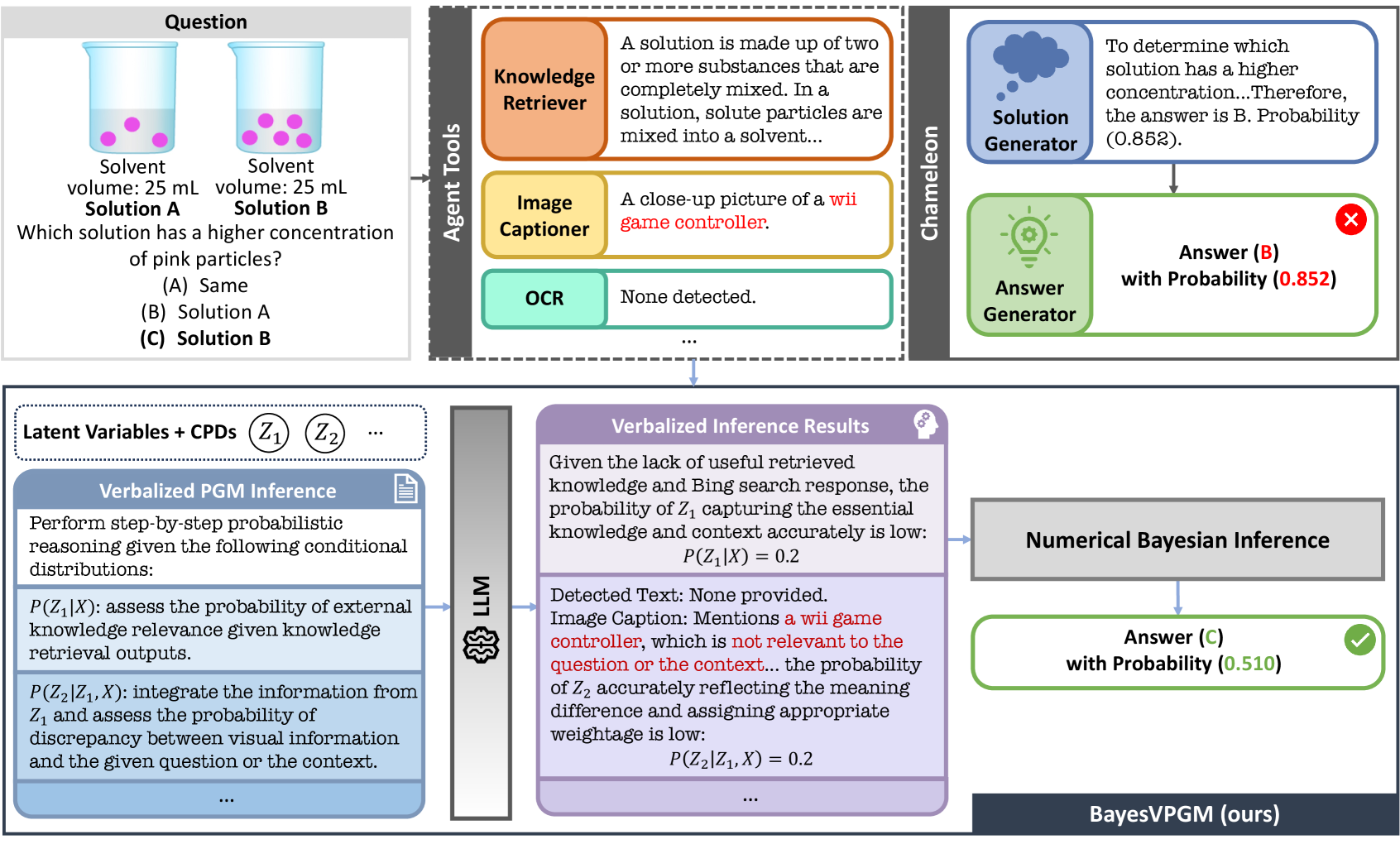

## Hybrid Reasoning Diagram: BayesVPGM

### Overview

The image presents a diagram illustrating the reasoning process of a system called BayesVPGM (ours) in answering a question about the concentration of particles in two solutions. It combines visual information, knowledge retrieval, and probabilistic inference to arrive at an answer. The diagram is divided into sections representing different stages and components of the reasoning process.

### Components/Axes

* **Question (Top-Left)**: Presents the visual input and the question to be answered.
    * Two beakers labeled "Solution A" and "Solution B", each containing a solvent and pink particles.
    * Solvent volume for both solutions is 25 mL.
    * Question: "Which solution has a higher concentration of pink particles?"
    * Possible answers: (A) Same, (B) Solution A, (C) Solution B.

* **Agent Tools (Top-Middle-Left)**: Lists the tools used by the system.
    * Knowledge Retriever (Orange): Retrieves relevant knowledge. Text: "A solution is made up of two or more substances that are completely mixed. In a solution, solute particles are mixed into a solvent..."
    * Image Captioner (Yellow): Provides a description of the image. Text: "A close-up picture of a wii game controller."
    * OCR (Green): Performs optical character recognition. Text: "None detected."

* **Chameleon (Top-Right)**: Represents the Chameleon pipeline's reasoning stages.
    * Solution Generator (Blue): Generates a candidate solution. Text: "To determine which solution has a higher concentration... Therefore, the answer is B. Probability (0.852)."
    * Answer Generator (Green): Generates the final answer. Text: "Answer (B) with Probability (0.852)", with a red "X" indicating it is incorrect.

* **Latent Variables + CPDs (Bottom-Left)**: Describes the probabilistic reasoning process.
    * Verbalized PGM Inference (Blue): Performs step-by-step probabilistic reasoning.
        * P(Z₁|X): assess the probability of external knowledge relevance given knowledge retrieval outputs.
        * P(Z₂|Z₁, X): integrate the information from Z₁ and assess the probability of discrepancy between visual information and the given question or the context.

* **LLM (Bottom-Middle-Left)**: Large language model.

* **Verbalized Inference Results (Bottom-Middle)**: Shows the results of the probabilistic inference.
    * Given the lack of useful retrieved knowledge and Bing search response, the probability of Z₁ capturing the essential knowledge and context accurately is low: P(Z₁|X) = 0.2
    * Detected Text: None provided.
    * Image Caption: Mentions a wii game controller, which is not relevant to the question or the context... the probability of Z₂ accurately reflecting the meaning difference and assigning appropriate weightage is low: P(Z₂|Z₁, X) = 0.2

* **Numerical Bayesian Inference (Bottom-Middle-Right)**: Performs numerical Bayesian inference.

* **Final Answer (Bottom-Right)**: Presents the final answer.
    * Answer (C) with Probability (0.510), with a green checkmark indicating it is correct.

* **BayesVPGM (ours) (Bottom)**: Labels the system.
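Read as a chain, the two conditional probability distributions combine by the product rule. The sketch below is a minimal illustration, assuming each latent variable is binary (1 meaning the component is judged reliable) and treating the two verbalized values of 0.2 as P(Z₁=1|X) and P(Z₂=1|Z₁=1, X). The function name `joint_reliability` is hypothetical and does not come from the paper.

```python
def joint_reliability(p_z1_given_x: float, p_z2_given_z1_x: float) -> float:
    """P(Z1=1, Z2=1 | X) = P(Z1=1 | X) * P(Z2=1 | Z1=1, X) (product rule).

    Assumes binary latent variables; this helper is hypothetical.
    """
    for p in (p_z1_given_x, p_z2_given_z1_x):
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
    return p_z1_given_x * p_z2_given_z1_x


# Verbalized values from the figure: P(Z1|X) = 0.2 and P(Z2|Z1, X) = 0.2.
print(round(joint_reliability(0.2, 0.2), 4))  # 0.04
```

Under that assumption, the model rates the chance that both the retrieved knowledge and the visual evidence are reliable at only 0.04.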

### Detailed Analysis or Content Details

* **Question**: The question asks which solution has a higher concentration of pink particles. Solution A and Solution B both have a solvent volume of 25 mL. Visually, Solution B appears to contain more pink particles in the same volume, so it has the higher concentration.

* **Agent Tools**:
    * The Knowledge Retriever provides a general definition of a solution.
    * The Image Captioner incorrectly identifies a "wii game controller," indicating a failure in image understanding.
    * The OCR detects no text in the image.

* **Chameleon**:
    * The Solution Generator suggests option (B), Solution A, with a probability of 0.852.
    * The Answer Generator outputs Answer (B) with a probability of 0.852, which is marked as incorrect.

* **Latent Variables + CPDs**: The system uses probabilistic reasoning based on latent variables Z₁ and Z₂.

* **Verbalized Inference Results**: The probabilities P(Z₁|X) and P(Z₂|Z₁, X) are both low (0.2), indicating uncertainty in knowledge retrieval and visual understanding.

* **Numerical Bayesian Inference**: The system performs numerical Bayesian inference to arrive at the final answer.

* **Final Answer**: The final answer is (C) Solution B with a probability of 0.510, which is marked as correct.

### Key Observations

* The Image Captioner's failure to identify the image content highlights a weakness in the visual understanding of Chameleon's tool chain.

* The answer produced by Chameleon's Solution Generator is incorrect, indicating that reasoning directly over the faulty tool outputs leads it astray.

* The probabilistic inference process assigns low probabilities to knowledge retrieval and visual understanding, reflecting uncertainty about these inputs.

* The final answer from BayesVPGM, obtained through numerical Bayesian inference over the same tool outputs, is correct, suggesting that modeling the reliability of those outputs lets it overcome their shortcomings.

### Interpretation

The diagram illustrates a hybrid reasoning approach that combines visual information, knowledge retrieval, and probabilistic inference. Performance is limited by weak image understanding and knowledge retrieval, as shown by the incorrect image caption and the low probabilities assigned to these inputs. Chameleon, which reasons directly over these outputs, answers incorrectly; BayesVPGM, which first estimates how reliable they are, reaches the correct answer through numerical Bayesian inference. This suggests that the final inference stage is crucial for overcoming unreliable inputs. The diagram also highlights the importance of robust visual understanding and knowledge retrieval for reasoning in complex tasks, and the contrast between the two answers demonstrates the value of probabilistic reasoning about tool reliability in AI systems.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Chameleon - Visual Reasoning System

### Overview

This diagram illustrates the workflow of a visual reasoning pipeline named "Chameleon" alongside the BayesVPGM approach. It traces how a question about visual input (two beakers with pink particles) is answered by integrating knowledge retrieval, image captioning, and probabilistic reasoning, from question input to answer generation, and it shows the probability attached to each generated answer.

### Components/Axes

The diagram is segmented into several key areas:

* **Question:** Located on the left, presenting a visual question with multiple-choice answers.

* **Agent Tools:** A vertical block containing "Knowledge Retriever", "Image Captioner", and "OCR".

* **Chameleon:** A central block representing the core reasoning engine, divided into "Solution Generator" and "Answer Generator".

* **Verbalized Inference Results:** A lower-left block detailing the probabilistic graphical model (PGM) inference process.

* **Numerical Bayesian Inference:** A lower-right block showing the results of numerical Bayesian inference.

* **BayesVPGM (ours):** A label indicating the system's approach.

The diagram also includes probability values associated with answers and inference steps.

### Detailed Analysis or Content Details

**Question:**

* Visual: Two beakers, labeled "Solution A" and "Solution B". Each beaker contains a solvent volume of 25 mL and pink particles.

* Text: "Which solution has a higher concentration of pink particles?"

* Options: (A) Same, (B) Solution A, (C) Solution B

**Agent Tools:**

* **Knowledge Retriever:** Text: "A solution is made up of two or more substances that are completely mixed. In a solution, solute particles are mixed into a solvent..."

* **Image Captioner:** Text: "A close-up picture of a Wii game controller."

* **OCR:** Text: "None detected."

**Chameleon:**

* **Solution Generator:** Text: "To determine which solution has a higher concentration... Therefore, the answer is B. Probability (0.852)."

* **Answer Generator:** Text: "Answer (B) with Probability (0.852)" - Marked with a red 'X'.

**Verbalized Inference Results:**

* Text: "Given the lack of useful retrieved knowledge and Bing search response, the probability of Z1 capturing the essential knowledge and context accurately is low: P(Z1|X) = 0.2"

* Text: "Detected Text: None provided. Image Caption: Mentions a Wii game controller, which is not relevant to the question or the context... the probability of Z2 accurately reflecting the meaning difference and assigning appropriate weightage is low: P(Z2|Z1, X) = 0.2"
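Before any numerical inference, the probabilities stated in the verbalized text have to be turned into numbers. A minimal sketch, assuming the LLM always writes them in the `P(target|given) = value` form seen above; the helper `extract_verbalized_probabilities` and its regular expression are hypothetical, not BayesVPGM's published code. It accepts both the plain `Z1` spelling used in this description and the subscript `Z₁` spelling used elsewhere on the page.

```python
import re

# Subscript digits (as in "Z₁") normalised to ASCII digits (as in "Z1").
_SUBSCRIPTS = str.maketrans("₀₁₂₃₄₅₆₇₈₉", "0123456789")

# Matches e.g. "P(Z1|X) = 0.2" or "P(Z₂|Z₁, X) = 0.2" (hypothetical pattern).
_PROB_PATTERN = re.compile(
    r"P\((?P<target>Z[0-9₀-₉]+)\|(?P<given>[^)]*)\)\s*=\s*(?P<value>\d*\.?\d+)"
)


def extract_verbalized_probabilities(text: str) -> dict[str, float]:
    """Map each stated conditional, e.g. 'Z2|Z1,X', to its numeric value."""
    results: dict[str, float] = {}
    for match in _PROB_PATTERN.finditer(text):
        key = f"{match['target']}|{match['given']}"
        key = key.translate(_SUBSCRIPTS).replace(" ", "")
        value = float(match["value"])
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{key} = {value} is not a valid probability")
        results[key] = value
    return results


verbalized = (
    "... the probability of Z1 capturing the essential knowledge and context "
    "accurately is low: P(Z1|X) = 0.2 ... the probability of Z2 accurately "
    "reflecting the meaning difference ... is low: P(Z2|Z1, X) = 0.2"
)
print(extract_verbalized_probabilities(verbalized))
# {'Z1|X': 0.2, 'Z2|Z1,X': 0.2}
```

A real pipeline would also need a policy for statements the pattern misses, for example re-prompting the LLM; the diagram does not show one.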

**Numerical Bayesian Inference:**

* Text: "Answer (C) with Probability (0.510)" - Marked with a green checkmark.

**Latent Variables + CPDs:** Z1, Z2, ... (represented as boxes)

**LLM:** A symbol representing a large language model.

### Key Observations

* Chameleon suggests option (B), "Solution A", with a high probability (0.852), but this is marked as incorrect.

* BayesVPGM's numerical Bayesian inference instead points to option (C), "Solution B", with a probability of 0.510, which is the correct answer.

* The Image Captioner provides an irrelevant caption ("Wii game controller"), indicating a failure in visual understanding.

* The Knowledge Retriever provides a basic definition of a solution, which is relevant but not sufficient to answer the question directly.

* The PGM inference assigns low probabilities (0.2) to both Z1 and Z2, suggesting that the retrieved knowledge and image caption are not helpful for accurate reasoning.

### Interpretation

The diagram demonstrates a visual reasoning pipeline that struggles with contextual understanding and relevance. While the system can retrieve knowledge and generate potential solutions, it initially arrives at an incorrect answer due to the misleading image caption. The subsequent Bayesian inference corrects this error, but with a lower confidence level. This highlights the importance of accurate image understanding and relevant knowledge retrieval for effective visual reasoning. The low probabilities assigned to the latent variables (Z1, Z2) indicate that the system is uncertain about the quality of the information it's using. The discrepancy between the initial high-probability answer and the final correct answer suggests a need for improved integration of different reasoning components and a more robust mechanism for filtering irrelevant information. The system's architecture, "BayesVPGM", appears to be an attempt to address these challenges by incorporating Bayesian inference and probabilistic graphical models. The diagram effectively illustrates the complexities of building a system that can reason about visual information and provide accurate answers to complex questions.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Comparison of AI Reasoning Systems (Chameleon vs. BayesVPGM)

### Overview

This image is a technical diagram comparing the reasoning processes of two AI systems, "Chameleon" and "BayesVPGM (ours)", when presented with the same visual science question. The diagram illustrates how each system processes the input, the tools or methods they employ, and the final answer they generate, highlighting a failure case for Chameleon and a success case for the proposed BayesVPGM method.

### Components/Axes

The diagram is divided into two primary horizontal sections:

1. **Top Section (Chameleon System):**
    * **Input (Left):** A "Question" box containing an image of two beakers (Solution A and Solution B) and a multiple-choice question.
    * **Agent Tools (Center):** A vertical block listing tools used: "Knowledge Retriever", "Image Captioner", and "OCR".
    * **Chameleon Pipeline (Right):** A flow from "Solution Generator" to "Answer Generator", culminating in a final answer box with a red "X" icon.

2. **Bottom Section (BayesVPGM System):**
    * **Input (Left):** A box labeled "Latent Variables + CPDs" with symbols Z₁, Z₂, etc.
    * **Verbalized PGM Inference (Left-Center):** A blue box detailing probabilistic reasoning steps.
    * **LLM (Center):** A vertical block representing a Large Language Model.
    * **Verbalized Inference Results (Center-Right):** A purple box showing the LLM's assessment of probabilities.
    * **Numerical Bayesian Inference (Right):** A gray box leading to the final answer.
    * **Final Output (Bottom-Right):** A green box with a checkmark icon, labeled "BayesVPGM (ours)".

### Detailed Analysis

**1. The Question (Common Input):**

* **Image:** Two beakers labeled "Solution A" and "Solution B". Both have a "Solvent volume: 25 mL". Solution A contains 3 pink particles. Solution B contains 6 pink particles.

* **Text:** "Which solution has a higher concentration of pink particles? (A) Same (B) Solution A **(C) Solution B**" (The correct answer, (C), is bolded in the diagram).
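The question reduces to a ratio: concentration is the number of solute particles divided by the solvent volume. The sketch below applies that to the counts reported in this description (3 and 6 particles, as read from the image here) and maps the result back to the multiple-choice letters. All names are illustrative, not taken from the paper.

```python
from fractions import Fraction

SOLVENT_VOLUME_ML = 25  # same for both beakers, per the figure

# Particle counts as read from the image in this description.
particles = {"Solution A": 3, "Solution B": 6}

OPTIONS = {"A": "Same", "B": "Solution A", "C": "Solution B"}


def concentration(count: int, volume_ml: int) -> Fraction:
    """Particles per mL, kept exact so equal concentrations compare equal."""
    return Fraction(count, volume_ml)


def pick_option(counts: dict[str, int], volume_ml: int) -> str:
    """Return the multiple-choice letter for the higher-concentration answer."""
    a = concentration(counts["Solution A"], volume_ml)
    b = concentration(counts["Solution B"], volume_ml)
    label = "Same" if a == b else ("Solution A" if a > b else "Solution B")
    # Map the label back to its letter.
    return next(letter for letter, text in OPTIONS.items() if text == label)


for name, n in particles.items():
    print(name, float(concentration(n, SOLVENT_VOLUME_ML)), "particles/mL")
print("Answer:", pick_option(particles, SOLVENT_VOLUME_ML))
# Solution A 0.12 particles/mL
# Solution B 0.24 particles/mL
# Answer: C
```

With equal volumes, the comparison reduces to comparing particle counts, so Solution B, option (C), is the expected answer.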

**2. Chameleon System Process & Output:**

* **Knowledge Retriever Output:** "A solution is made up of two or more substances that are completely mixed. In a solution, solute particles are mixed into a solvent..."

* **Image Captioner Output:** "A close-up picture of a **wii game controller**." (The phrase "wii game controller" is highlighted in red, indicating an error).

* **OCR Output:** "None detected."

* **Solution Generator Reasoning:** "To determine which solution has a higher concentration...Therefore, the answer is B. Probability (0.852)."

* **Final Answer Generator Output:** "**Answer (B) with Probability (0.852)**" accompanied by a red "X" icon, indicating this is incorrect.

**3. BayesVPGM System Process & Output:**

* **Verbalized PGM Inference Steps:**
    * `P(Z₁|X)`: "assess the probability of external knowledge relevance given knowledge retrieval outputs."
    * `P(Z₂|Z₁, X)`: "integrate the information from Z₁ and assess the probability of discrepancy between visual information and the given question or the context."

* **Verbalized Inference Results (from LLM):**
    * Assessment of Z₁: "Given the lack of useful retrieved knowledge and Bing search response, the probability of Z₁ capturing the essential knowledge and context accurately is low: `P(Z₁|X) = 0.2`"
    * Assessment of Z₂: "Detected Text: None provided. Image Caption: Mentions **a wii game controller**, which is **not relevant to the question or the context**... the probability of Z₂ accurately reflecting the meaning difference and assigning appropriate weightage is low: `P(Z₂|Z₁, X) = 0.2`"

* **Final Output:** Answer (C) with Probability (0.510) accompanied by a green checkmark icon, indicating this is correct.

### Key Observations

1. **Critical Failure in Chameleon:** The "Image Captioner" tool in the Chameleon pipeline catastrophically misidentifies the beaker diagram as "a wii game controller." This erroneous visual input propagates through the system.

2. **Chameleon's Overconfidence:** Despite the flawed visual input, the Chameleon "Solution Generator" produces a high-confidence (0.852) but incorrect answer (B).

3. **BayesVPGM's Error Detection:** The BayesVPGM system explicitly identifies the irrelevance of the "wii game controller" caption (`P(Z₂|Z₁, X) = 0.2`), demonstrating a capacity for self-critique and uncertainty quantification.

4. **Probabilistic Reasoning:** BayesVPGM uses verbalized conditional probabilities (`P(Z₁|X)`, `P(Z₂|Z₁, X)`) to model its own uncertainty about the quality of its reasoning steps before arriving at a final numerical probability.

5. **Outcome:** The system with explicit uncertainty modeling (BayesVPGM) arrives at the correct answer (C), albeit with lower confidence (0.510), while the system without it (Chameleon) fails confidently.
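One way to quantify "fails confidently" is the log loss (negative log-likelihood) of the correct option, a standard proper scoring rule. This framing is an illustration, not a metric reported in the diagram. BayesVPGM puts 0.510 on the correct option (C). Chameleon puts 0.852 on (B), so at most 0.148 is left for (C), which gives a lower bound on its loss.

```python
import math


def log_loss(prob_on_correct: float) -> float:
    """Negative natural log of the probability assigned to the correct option."""
    if not 0.0 < prob_on_correct <= 1.0:
        raise ValueError("probability on the correct option must be in (0, 1]")
    return -math.log(prob_on_correct)


# BayesVPGM: 0.510 on the correct option (C).
bayes_loss = log_loss(0.510)

# Chameleon: 0.852 on (B), so at most 1 - 0.852 = 0.148 remains for (C);
# its true loss on this example is therefore at least this value.
chameleon_loss_lower_bound = log_loss(1.0 - 0.852)

print(f"BayesVPGM loss: {bayes_loss:.3f}")
print(f"Chameleon loss: >= {chameleon_loss_lower_bound:.3f}")
```

This prints a loss of about 0.673 for BayesVPGM against at least 1.911 for Chameleon: a moderately confident correct answer is penalized far less than a confident wrong one.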

### Interpretation

This diagram serves as a case study and argument for the proposed "BayesVPGM" method. It demonstrates a scenario where a standard multimodal AI pipeline (Chameleon) fails due to a severe error in one of its sub-components (the image captioner). The failure is compounded because the system lacks a mechanism to question or down-weight the confidence of that faulty component.

The BayesVPGM approach is presented as a solution. By "verbalizing" its probabilistic graphical model (PGM) inference, it forces the underlying LLM to explicitly reason about the reliability of its own tools and retrieved information. The low probabilities assigned to the latent variables (`Z₁`, `Z₂`) reflect the system's awareness that its inputs are unreliable. This self-aware uncertainty allows the final Bayesian inference step to correctly discount the misleading information and converge on the right answer, even if with modest confidence.
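One way to make "discount the misleading information" concrete is to marginalize the answer distribution over the latent configurations: P(Y|X) = Σ over z₁, z₂ of P(Y|z₁, z₂, X) · P(z₂|z₁, X) · P(z₁|X). The sketch below assumes binary Z₁ and Z₂. Only P(Z₁=1|X) = 0.2 and P(Z₂=1|Z₁=1, X) = 0.2 come from the figure; P(Z₂=1|Z₁=0, X) and every answer table P(Y|z₁, z₂, X) are made-up placeholders, so the output is not expected to reproduce the figure's 0.510, and this is not presented as BayesVPGM's actual computation.

```python
from itertools import product

OPTIONS = ("A", "B", "C")

# From the figure (verbalized): P(Z1=1 | X).
P_Z1 = 0.2
# P(Z2=1 | Z1=z1, X): the z1=1 entry is from the figure, z1=0 is hypothetical.
P_Z2_GIVEN_Z1 = {1: 0.2, 0: 0.2}

# Hypothetical answer distributions P(Y | z1, z2, X), for illustration only:
# when both components are judged reliable, the caption-driven answer (B) wins;
# otherwise the distribution leans toward the caption-free reasoning (C).
P_Y_GIVEN_Z = {
    (1, 1): {"A": 0.10, "B": 0.80, "C": 0.10},
    (1, 0): {"A": 0.20, "B": 0.20, "C": 0.60},
    (0, 1): {"A": 0.20, "B": 0.30, "C": 0.50},
    (0, 0): {"A": 0.20, "B": 0.20, "C": 0.60},
}


def bernoulli(p_one: float, z: int) -> float:
    """Probability of outcome z in {0, 1} for a Bernoulli(p_one) variable."""
    return p_one if z == 1 else 1.0 - p_one


def answer_posterior() -> dict[str, float]:
    """P(Y | X) = sum over z1, z2 of P(Y | z1, z2, X) P(z2 | z1, X) P(z1 | X)."""
    posterior = {y: 0.0 for y in OPTIONS}
    for z1, z2 in product((0, 1), repeat=2):
        weight = bernoulli(P_Z1, z1) * bernoulli(P_Z2_GIVEN_Z1[z1], z2)
        for y in OPTIONS:
            posterior[y] += weight * P_Y_GIVEN_Z[(z1, z2)][y]
    return posterior


posterior = answer_posterior()
best = max(posterior, key=posterior.get)
print({y: round(p, 3) for y, p in posterior.items()}, "->", best)
```

Under these placeholder numbers, the low reliability of the inputs shifts most of the weight onto configurations that ignore the caption, so option (C) ends up with the largest marginal probability.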

The core message is that for robust AI reasoning, especially in multimodal settings, it is crucial to move beyond generating single-point answers and instead model and communicate the system's own uncertainty about its reasoning process. The diagram visually contrasts the "black-box" failure of one system with the "self-reflective" success of the other.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


# Bayesian Verbalized Probabilistic Graphical Model (BayesVPGM) Technical Diagram Analysis

## **Main Components and Flow**

The diagram illustrates a Bayesian probabilistic framework for question-answering systems, integrating agent tools, inference reasoning, and numerical validation.

---

### **1. Agent Tools**

#### **a. Knowledge Retriever**

- **Function**: Defines solutions as mixtures of substances. Example: "A solution is made up of two or more substances that are completely mixed. In a solution, solute particles are mixed into a solvent..."

- **Color**: **#FFC3A0** (Salmon)

#### **b. Image Captioner**

- **Function**: Analyzes visual inputs. Example: "A close-up picture of a wii game controller."

- **Color**: **#FFE1A1** (Khaki)

#### **c. OCR (Optical Character Recognition)**

- **Function**: Text extraction from images. Output: "None detected."

- **Color**: **#B0E3C4** (Mint Green)

#### **d. Solution Generator (Chameleon)**

- **Function**: Proposes answers with confidence probabilities. It belongs to the Chameleon pipeline rather than to the agent tools.

- **Answer**: "The answer is B. Probability (0.852)." Option (B) is Solution A.

- **Color**: **#5E60B8** (Royal Blue)

- **Answer Marking**: Incorrect (Red X)

---

### **2. Question Input**

- **Problem Statement**:
    - Two solutions (A and B) with 25 mL of solvent each.
    - Task: Determine which has the higher concentration of pink particles.

- **Options**:
    - (A) Same
    - (B) Solution A
    - (C) Solution B

---

### **3. Verbalized Inference Results**

- **Probabilistic Reasoning**:
    - **P(Z₁|X)**: Probability of external knowledge relevance = **0.2** (low confidence).
    - **P(Z₂|Z₁,X)**: Probability of image-text alignment = **0.2** (low confidence).

- **Text Detected**: None.

- **Image Caption Relevance**: Wii controller (irrelevant to the question).

---

### **4. Numerical Bayesian Inference**

- **Final Output**:
    - **Answer C**: "Answer (C) with Probability (0.510)."
    - **Validation**: Green checkmark indicates the correct answer.

- **Bayesian Model**: BayesVPGM (Proposed Framework).

---

### **5. Latent Variables and Conditional Probabilities**

- **Variables**:
    - **Z₁**: External knowledge relevance.
    - **Z₂**: Visual-context alignment.

- **Process**:
    1. Perform step-by-step probabilistic reasoning.
    2. Assess relevance of retrieved knowledge.
    3. Integrate visual and contextual discrepancies.

---

### **6. Color Legend & Spatial Grounding**

- **Legend Colors**:
    - **#FFC3A0**: Knowledge Retriever.
    - **#FFE1A1**: Image Captioner.
    - **#B0E3C4**: OCR.
    - **#5E60B8**: Solution Generator.

- **Flow**: Question → Agent Tools → Verbalized Inference → Numerical Inference.

---

### **7. Trend Verification**

- **Agent Tools Output**: Judged unreliable, with low probabilities for both knowledge relevance (**P(Z₁|X) = 0.2**) and visual alignment (**P(Z₂|Z₁,X) = 0.2**).

- **Numerical Inference**: Builds on the verbalized probabilities instead of trusting the tool outputs directly, producing the final answer probability (**P(C) = 0.510**).

---

### **8. Diagram Structure**

| **Region** | **Components** |
|----------------------|-------------------------------------------------------------------------------|
| **Header** | Question input, agent tools (Knowledge Retriever, Image Captioner, OCR). |
| **Main Chart** | Verbalized inference steps (P(Z₁\|X), P(Z₂\|Z₁,X), relevance analysis). |
| **Footer** | Numerical Bayesian Inference (Answer C with P = 0.510). |

---

### **9. Key Observations**

- **Incorrect Chameleon Output**: Chameleon's Solution Generator selected Answer B (P = 0.852) after relying on tool outputs with low contextual relevance, such as the irrelevant image caption.

- **Correct Final Answer**: Numerical inference corrected the error, selecting Answer C (P = 0.510) via probabilistic integration.

- **Model Strength**: BayesVPGM effectively combines knowledge retrieval, image analysis, and numerical validation to resolve ambiguities.

---

### **10. Limitations**

- **Low Confidence in Early Stages**: Both **P(Z₁|X)** and **P(Z₂|Z₁,X)** were 0.2, indicating that the retrieved knowledge and the image caption were judged unreliable.

- **Relevance Gaps**: Image Captioner introduced irrelevant visual data (Wii controller).

---

### **11. Recommendations**

- Improve contextual alignment (Z₂) by filtering irrelevant image captions.

- Enhance knowledge retrieval accuracy to reduce low-confidence probabilities.
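The first recommendation can be sketched as a relevance gate on tool outputs. This is a minimal sketch, assuming each tool output carries a verbalized relevance score: the 0.2 for the Knowledge Retriever mirrors P(Z₁|X), the 0.2 for the Image Captioner loosely mirrors P(Z₂|Z₁,X), and the 0.5 threshold, the `ToolOutput` class, and `filter_tool_outputs` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToolOutput:
    """One agent-tool result plus a verbalized relevance score (hypothetical)."""
    tool: str
    text: str
    relevance: float


def filter_tool_outputs(
    outputs: list[ToolOutput], threshold: float = 0.5
) -> list[ToolOutput]:
    """Keep only outputs whose relevance meets the (hypothetical) threshold."""
    return [o for o in outputs if o.relevance >= threshold]


outputs = [
    # 0.2 mirrors P(Z1|X) for the retrieved knowledge.
    ToolOutput("Knowledge Retriever", "A solution is made up of two or more substances...", 0.2),
    # 0.2 loosely mirrors P(Z2|Z1, X) for the visual evidence.
    ToolOutput("Image Captioner", "A close-up picture of a wii game controller.", 0.2),
]

kept = filter_tool_outputs(outputs)
print([o.tool for o in kept])  # []
```

Here both outputs fall below the threshold and nothing is kept, so a downstream step would have to answer from the question and image alone. A softer design would down-weight each output by its relevance instead of dropping it.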
