## Diagram: Transformer Model Inference Process (Prefill and Decode Phases)

### Overview

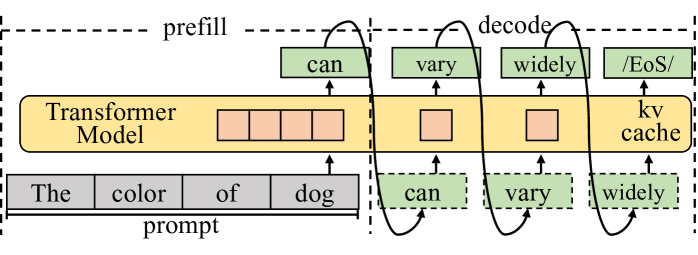

The image is a technical diagram illustrating the two-phase inference process of a Transformer-based language model. It visually separates the initial processing of an input prompt ("prefill") from the subsequent autoregressive generation of output tokens ("decode"). The diagram uses a combination of labeled boxes, arrows, and dashed lines to show data flow and component relationships.

### Components/Axes

The diagram is divided into two primary regions by a vertical dashed line:

1. **Left Region (Prefill Phase):** Labeled "prefill" at the top.

2. **Right Region (Decode Phase):** Labeled "decode" at the top.

**Central Component:**

* A large, horizontal, yellow rectangle labeled **"Transformer Model"** spans both phases.

* Inside this rectangle, on the left (prefill side), are four small, empty, peach-colored squares arranged horizontally.

* On the right (decode side), there are two individual peach-colored squares and a final block labeled **"kv cache"**.

**Input (Bottom):**

* A gray bar labeled **"prompt"** at the bottom left.

* The prompt is segmented into four discrete tokens: **"The"**, **"color"**, **"of"**, **"dog"**.

* Arrows point upward from each prompt token into the "Transformer Model" rectangle.

**Output (Top):**

* A sequence of green boxes representing generated tokens, positioned above the "Transformer Model".

* The sequence is: **"can"**, **"vary"**, **"widely"**, **"/EoS/"** (End of Sequence token).

* Arrows point upward from the model to each output token.

* Curved arrows connect the output tokens in sequence: from "can" to "vary", from "vary" to "widely", and from "widely" to "/EoS/".

**Data Flow Arrows:**

* **Prefill Flow:** Straight arrows from the "prompt" tokens up into the model.

* **Decode Flow:** A more complex, cyclical flow is shown:

    1. An arrow from the model points up to the first output token, **"can"**.

    2. A curved arrow loops from the **"can"** output token back down and into the model on the decode side.

    3. This pattern repeats: an arrow from the model points up to **"vary"**, which then loops back into the model.

    4. The same occurs for **"widely"**.

    5. Finally, an arrow from the model points up to the terminal **"/EoS/"** token.
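The cyclical flow described above can be sketched as a short loop. This is only an illustration of the arrows in the diagram: `toy_model` and its lookup table are hypothetical stand-ins for the Transformer, chosen so that the loop reproduces the tokens shown in the figure.

```python
# Hypothetical stand-in for the model: maps the last token to the next one.
# A real Transformer conditions on the whole sequence, not just the last token.
TOY_NEXT_TOKEN = {
    "dog": "can",
    "can": "vary",
    "vary": "widely",
    "widely": "/EoS/",
}


def toy_model(tokens):
    """Return the next token for the sequence so far (toy lookup)."""
    return TOY_NEXT_TOKEN[tokens[-1]]


def generate(prompt, max_new_tokens=10):
    """Run one prefill call, then loop outputs back in until /EoS/."""
    sequence = list(prompt)
    outputs = []

    # Prefill: a single call over the whole prompt yields the first token.
    next_token = toy_model(sequence)
    outputs.append(next_token)

    # Decode: each output token is fed back in as input for the next step.
    while next_token != "/EoS/" and len(outputs) < max_new_tokens:
        sequence.append(next_token)
        next_token = toy_model(sequence)
        outputs.append(next_token)

    return outputs


generated = generate(["The", "color", "of", "dog"])
print(generated)  # ['can', 'vary', 'widely', '/EoS/']
```

The `max_new_tokens` limit is an added safeguard, not something the diagram shows; it keeps the loop finite if the end-of-sequence token never appears.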

### Detailed Analysis

The diagram explicitly maps the flow of information during text generation:

1. **Prefill Phase (Parallel Processing):**

    * The entire input prompt ("The color of dog") is fed into the Transformer Model simultaneously.

    * The four peach squares inside the model during this phase likely represent the parallel processing of these four input tokens.

    * This phase produces the first output token: **"can"**.

2. **Decode Phase (Autoregressive, Sequential Processing):**

    * This phase is iterative. Each step generates one new token.

    * The diagram shows three iterative steps before termination:

        * **Step 1:** The model takes "can" as input and, using the prompt context held in the "kv cache", generates **"vary"**.

        * **Step 2:** The model takes "vary" as input and, using the updated "kv cache" (which now covers the prompt, "can", and "vary"), generates **"widely"**.

        * **Step 3:** The model takes "widely" as input and generates the end-of-sequence token **"/EoS/"**, signaling completion.

    * The **"kv cache"** (key-value cache) is a critical component shown in the decode phase. It stores the attention keys and values computed for earlier tokens, so each decode step processes only the newest token instead of recomputing the whole sequence.

    * The curved arrows visually represent the **autoregressive property**: the output of one step (e.g., "can") becomes part of the input for the next step.
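To make the role of the KV cache concrete, here is a minimal single-head attention sketch in plain Python. Everything in it is an assumption made for illustration: the 2-dimensional embeddings in `EMBED`, the fixed projections in `project`, and the `KVCache` class are hypothetical and are not taken from the diagram or from any library. The point is only that attending over cached keys and values gives the same result as recomputing them for the full sequence. Note that although the figure draws the cache on the decode side, in this sketch (as in typical implementations) the cache is first filled while the prompt is processed.

```python
import math

# Hypothetical 2-d token embeddings, chosen arbitrarily for the example.
EMBED = {
    "The": [1.0, 0.0],
    "color": [0.0, 1.0],
    "of": [1.0, 1.0],
    "dog": [0.5, -0.5],
    "can": [-1.0, 0.5],
}


def project(embedding):
    """Hypothetical fixed query/key/value projections of one embedding."""
    x, y = embedding
    return [x + y, x - y], [2 * x, y], [y, x]


def attend(query, keys, values):
    """Softmax attention of a single query over all keys and values."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(dim)]


class KVCache:
    """Keeps the keys and values of every token processed so far."""

    def __init__(self):
        self.keys = []
        self.values = []


def step_with_cache(token, cache):
    """Project only the new token, append to the cache, then attend."""
    query, key, value = project(EMBED[token])
    cache.keys.append(key)
    cache.values.append(value)
    return attend(query, cache.keys, cache.values)


def step_without_cache(tokens):
    """Recompute keys and values for the whole sequence on every call."""
    projected = [project(EMBED[t]) for t in tokens]
    keys = [k for _, k, _ in projected]
    values = [v for _, _, v in projected]
    return attend(projected[-1][0], keys, values)


prompt = ["The", "color", "of", "dog"]
cache = KVCache()
# Prefill: fill the cache from the prompt (a loop here for simplicity;
# a real model processes the prompt positions in one parallel pass).
for token in prompt:
    step_with_cache(token, cache)

# First decode step: only "can" is projected; the rest comes from the cache.
cached_out = step_with_cache("can", cache)
full_out = step_without_cache(prompt + ["can"])
print(all(math.isclose(a, b) for a, b in zip(cached_out, full_out)))  # True
```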

### Key Observations

* **Clear Phase Separation:** The dashed line provides a strict visual boundary between the one-time, parallel prefill and the iterative, sequential decode.

* **Tokenization:** Both input and output are shown as discrete, segmented units (tokens), which is fundamental to how language models process text.

* **The KV Cache is Central:** Its explicit labeling and placement within the decode section of the model highlight its importance for performance during text generation.

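* **Terminal Symbol:** Generation stops at an end-of-sequence token, written here as **"/EoS/"**. Marking the end of generated text with a dedicated token is a standard convention, although the exact spelling of that token differs between models.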

* **Flow Complexity:** The decode phase's flow is intentionally more complex (with loops) than the prefill's linear flow, accurately reflecting the computational difference.
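The computational difference between the two phases can be illustrated with a rough count of how many token positions pass through the model. This is a simplified accounting under stated assumptions, not a cost model: the function name and its parameters are hypothetical, and the count ignores that each cached decode step still attends over all cached keys and values.

```python
def token_positions_processed(prompt_len, new_tokens, use_cache):
    """Count token positions pushed through the model to emit new_tokens."""
    total = 0
    for step in range(new_tokens):
        context_len = prompt_len + step
        if use_cache and step > 0:
            # Decode with a cache: only the newest token is processed.
            total += 1
        else:
            # Prefill, or any step without a cache: the full context is processed.
            total += context_len
    return total


# Figure's example: 4 prompt tokens, 4 output tokens ("can" ... "/EoS/").
with_cache = token_positions_processed(4, 4, use_cache=True)
without_cache = token_positions_processed(4, 4, use_cache=False)
print(with_cache)     # 7   (4 + 1 + 1 + 1)
print(without_cache)  # 22  (4 + 5 + 6 + 7)
```

The gap widens as the output grows, because the uncached count grows quadratically with the number of generated tokens while the cached count grows linearly.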

### Interpretation

This diagram is a pedagogical tool explaining the core mechanics of how a Transformer model like GPT generates text. It answers the question: "What happens when you give a model a prompt?"

* **What it demonstrates:** It shows that generation is not a single, magical step. It's a two-stage process: first, the model "reads" and encodes the entire prompt (prefill). Then, it "writes" the response one token at a time, using its own previous outputs as context for the next token (decode), while relying on the KV cache for efficiency.

* **Relationship between elements:** The prompt is the seed. The Transformer Model is the engine. The prefill phase is the engine revving up and engaging the initial gear. The decode phase is the engine running through subsequent gears, with the KV cache holding the work already done for earlier tokens so that each new step does not start from scratch. The output tokens are the resulting motion.

* **Underlying message:** The diagram demystifies AI text generation, framing it as a structured, computational process rather than an inscrutable black box. It emphasizes the sequential, dependent nature of generation ("vary" depends on "can", "widely" depends on "vary" and "can"), which is key to understanding model behavior, including phenomena like repetition or error propagation. The inclusion of the KV cache specifically targets a technical audience interested in the optimization and implementation details of model inference.