EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

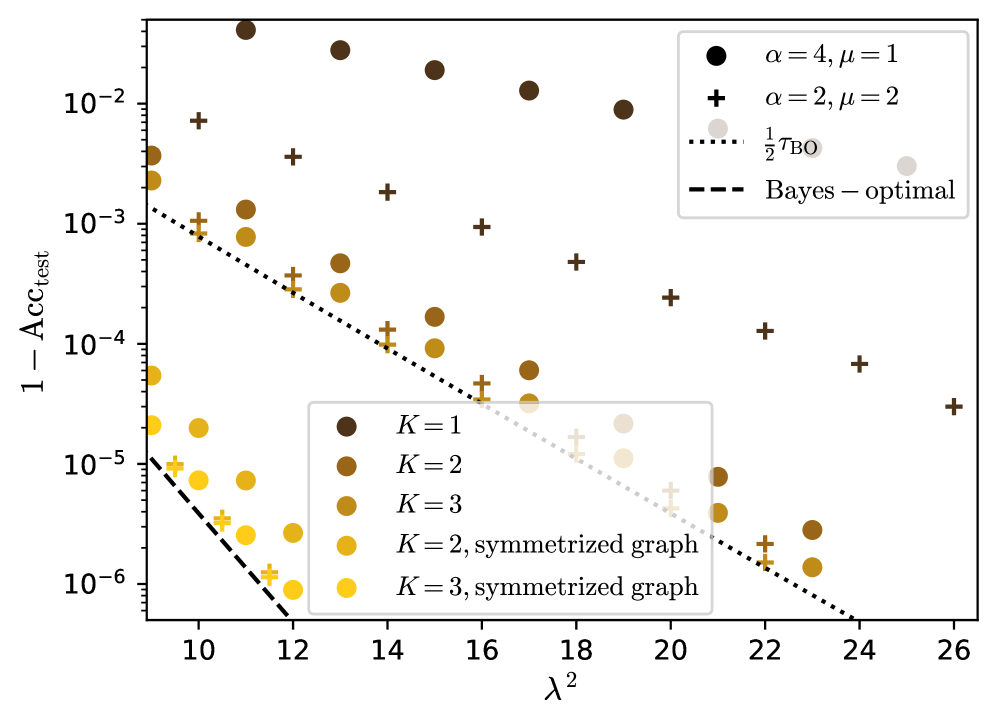

## Scatter Plot: Accuracy vs. Lambda Squared

### Overview

The image is a scatter plot showing the relationship between `1 - Acc_test` (error rate) and `λ^2` (lambda squared). The plot includes multiple data series, each representing different configurations of parameters `K`, `α`, and `μ`, as well as two reference lines: `1/2 τ_BO` and `Bayes-optimal`. The y-axis is on a logarithmic scale.

### Components/Axes

* **X-axis:** `λ^2` (lambda squared), with a linear scale ranging from approximately 9 to 26.

* **Y-axis:** `1 - Acc_test` (error rate), with a logarithmic scale ranging from 10^-6 to 10^-2.

* **Legend (Top-Right):**
    * Black circle: `α = 4, μ = 1`
    * Black plus sign: `α = 2, μ = 2`
    * Dotted line: `1/2 τ_BO`
    * Dashed line: `Bayes - optimal`

* **Legend (Center):**
    * Dark brown circle: `K = 1`
    * Brown circle: `K = 2`
    * Dark yellow circle: `K = 3`
    * Yellow circle: `K = 2, symmetrized graph`
    * Light yellow circle: `K = 3, symmetrized graph`

### Detailed Analysis

* **Data Series: `α = 4, μ = 1` (Black Circles)**
    * Trend: The error rate decreases as `λ^2` increases.
    * Data Points:
        * λ^2 = 10, 1 - Acc_test ≈ 0.015
        * λ^2 = 16, 1 - Acc_test ≈ 0.008
        * λ^2 = 22, 1 - Acc_test ≈ 0.006
        * λ^2 = 26, 1 - Acc_test ≈ 0.005

* **Data Series: `α = 2, μ = 2` (Black Plus Signs)**
    * Trend: The error rate decreases as `λ^2` increases.
    * Data Points:
        * λ^2 = 10, 1 - Acc_test ≈ 0.006
        * λ^2 = 16, 1 - Acc_test ≈ 0.002
        * λ^2 = 22, 1 - Acc_test ≈ 0.0005
        * λ^2 = 26, 1 - Acc_test ≈ 0.0003

* **Data Series: `K = 1` (Dark Brown Circles)**
    * Trend: The error rate decreases as `λ^2` increases.
    * Data Points:
        * λ^2 = 10, 1 - Acc_test ≈ 0.004
        * λ^2 = 16, 1 - Acc_test ≈ 0.0004
        * λ^2 = 22, 1 - Acc_test ≈ 0.00008

* **Data Series: `K = 2` (Brown Circles)**
    * Trend: The error rate decreases as `λ^2` increases.
    * Data Points:
        * λ^2 = 10, 1 - Acc_test ≈ 0.001
        * λ^2 = 16, 1 - Acc_test ≈ 0.0001
        * λ^2 = 22, 1 - Acc_test ≈ 0.00002

* **Data Series: `K = 3` (Dark Yellow Circles)**
    * Trend: The error rate decreases as `λ^2` increases.
    * Data Points:
        * λ^2 = 10, 1 - Acc_test ≈ 0.0002
        * λ^2 = 16, 1 - Acc_test ≈ 0.00003
        * λ^2 = 22, 1 - Acc_test ≈ 0.000006

* **Data Series: `K = 2, symmetrized graph` (Yellow Circles)**
    * Trend: The error rate decreases as `λ^2` increases.
    * Data Points:
        * λ^2 = 10, 1 - Acc_test ≈ 0.00002
        * λ^2 = 16, 1 - Acc_test ≈ 0.000004
        * λ^2 = 22, 1 - Acc_test ≈ 0.000001

* **Data Series: `K = 3, symmetrized graph` (Light Yellow Circles)**
    * Trend: The error rate decreases as `λ^2` increases.
    * Data Points:
        * λ^2 = 10, 1 - Acc_test ≈ 0.000005
        * λ^2 = 16, 1 - Acc_test ≈ 0.000001
        * λ^2 = 22, 1 - Acc_test ≈ 0.0000003

* **Reference Line: `1/2 τ_BO` (Dotted Line)**
    * Trend: Decreases as `λ^2` increases.
    * At λ^2 = 10, 1 - Acc_test ≈ 0.001
    * At λ^2 = 26, 1 - Acc_test ≈ 0.000001

* **Reference Line: `Bayes - optimal` (Dashed Line)**
    * Trend: Decreases as `λ^2` increases.
    * At λ^2 = 10, 1 - Acc_test ≈ 0.00001
    * At λ^2 = 12, 1 - Acc_test ≈ 0.000001
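The two readings quoted for each reference line are enough to estimate how fast that line falls on the logarithmic axis. The sketch below is a minimal illustration, assuming each line is straight on the semi-log plot between its two quoted points. The function name `decades_per_unit` is hypothetical, and the inputs are the approximate values read off the figure above, not data from the underlying paper.

```python
import math

def decades_per_unit(x0, y0, x1, y1):
    """Slope of log10(y) versus x between two points read off a semi-log plot."""
    return (math.log10(y1) - math.log10(y0)) / (x1 - x0)

# Approximate readings quoted above, as (λ^2, 1 - Acc_test) pairs.
half_tau_bo = decades_per_unit(10, 1e-3, 26, 1e-6)
bayes_optimal = decades_per_unit(10, 1e-5, 12, 1e-6)

print(f"1/2 tau_BO:    {half_tau_bo:.4f} decades per unit of lambda^2")
print(f"Bayes-optimal: {bayes_optimal:.4f} decades per unit of lambda^2")
```

Under these readings the Bayes-optimal line drops about half a decade per unit of `λ^2`, while the `1/2 τ_BO` line drops a little under a fifth of a decade, so the dashed line is the steeper of the two.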

### Key Observations

* The error rate (`1 - Acc_test`) generally decreases as `λ^2` increases across all data series.

* The data series with higher `α` and lower `μ` (black circles) generally have higher error rates compared to those with lower `α` and higher `μ` (black plus signs).

* Symmetrizing the graph (yellow and light yellow circles) results in lower error rates compared to the non-symmetrized versions (brown and dark yellow circles) for the same `K` value.

* Increasing `K` generally leads to lower error rates.

* The `Bayes - optimal` line represents the lowest achievable error rate, and all data series approach this limit as `λ^2` increases.

### Interpretation

The plot illustrates the impact of different parameters (`α`, `μ`, `K`) and graph symmetrization on the test accuracy of a model as a function of `λ^2`. The error rate falls as `λ^2` increases; if `λ^2` is read as a regularization strength, this suggests that stronger regularization improves the model's generalization performance. The comparison between different parameter configurations highlights the trade-offs between model complexity and accuracy. Symmetrizing the graph appears to be an effective technique for reducing the error rate. The plot also shows the model's performance moving toward the theoretical `Bayes - optimal` limit as `λ^2` increases.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

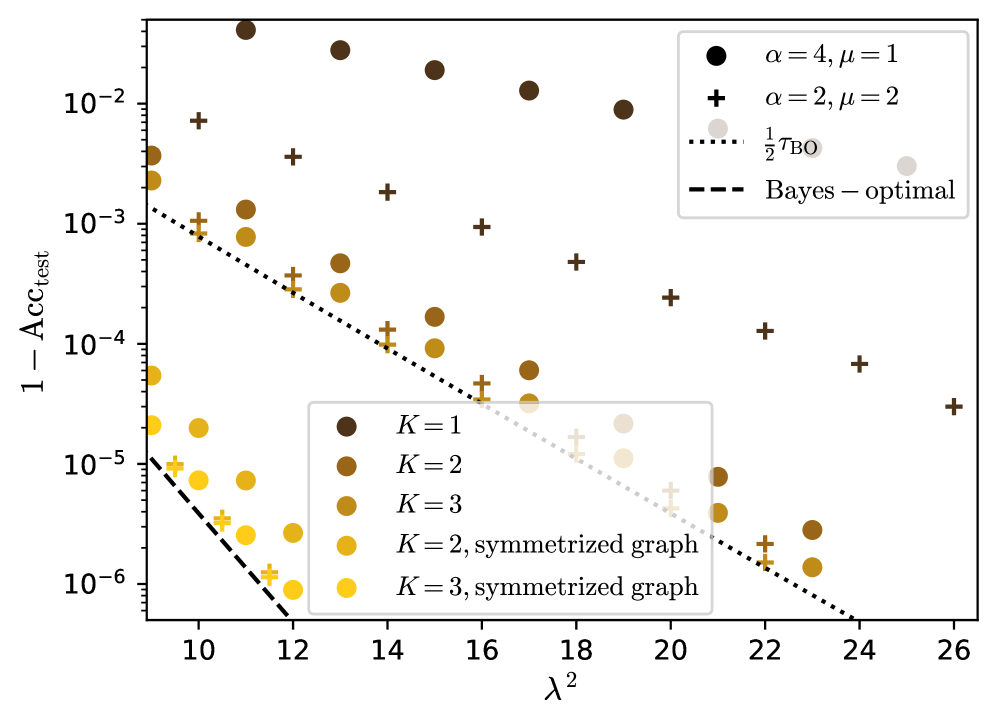

## Scatter Plot: Test Error vs. Lambda Squared

### Overview

This image presents a scatter plot comparing the test error (1 - Acc_test) against lambda squared (λ²). The plot displays data for different values of alpha (α) and mu (μ), along with theoretical bounds represented by dotted and dashed lines. The data points are color-coded based on the value of K.

### Components/Axes

* **X-axis:** λ² (Lambda Squared) - Scale ranges from approximately 10 to 26.

* **Y-axis:** 1 - Acc_test (1 minus Test Accuracy) - Logarithmic scale ranging from approximately 1e-6 to 0.02.

* **Legend (Top-Right):**
    * Black Circles: α = 4, μ = 1
    * Black Plus Signs: α = 2, μ = 2
    * Black Dotted Line: 1/2 τ_BO
    * Black Dashed Line: Bayes – optimal

* **Legend (Bottom-Center):**
    * Dark Brown Circles: K = 1
    * Brown Circles: K = 2
    * Dark Yellow Circles: K = 3
    * Yellow Circles: K = 2, symmetrized graph
    * Light Yellow Circles: K = 3, symmetrized graph

### Detailed Analysis

The plot shows the following data series:

* **α = 4, μ = 1 (Black Circles):** This series shows a generally decreasing trend as λ² increases, but with significant fluctuations.
    * At λ² ≈ 10.5, 1 - Acc_test ≈ 0.015
    * At λ² ≈ 14, 1 - Acc_test ≈ 0.005
    * At λ² ≈ 18, 1 - Acc_test ≈ 0.02
    * At λ² ≈ 24, 1 - Acc_test ≈ 0.003

* **α = 2, μ = 2 (Black Plus Signs):** This series also shows a decreasing trend, but the values are generally lower than the α = 4, μ = 1 series.
    * At λ² ≈ 10.5, 1 - Acc_test ≈ 0.003
    * At λ² ≈ 14, 1 - Acc_test ≈ 0.002
    * At λ² ≈ 18, 1 - Acc_test ≈ 0.003
    * At λ² ≈ 24, 1 - Acc_test ≈ 0.005

* **1/2 τ_BO (Black Dotted Line):** This line represents a theoretical bound. It shows a decreasing trend, starting at approximately 1 - Acc_test ≈ 0.001 at λ² ≈ 10 and decreasing to approximately 1 - Acc_test ≈ 0.0005 at λ² ≈ 26.

* **Bayes – optimal (Black Dashed Line):** This line represents the optimal Bayesian performance. It shows a steep decrease from approximately 1 - Acc_test ≈ 0.0002 at λ² ≈ 10 to approximately 1 - Acc_test ≈ 0.00005 at λ² ≈ 26.

* **K = 1 (Dark Brown Circles):** This series shows a decreasing trend, starting at approximately 1 - Acc_test ≈ 0.0002 at λ² ≈ 10 and decreasing to approximately 1 - Acc_test ≈ 0.00005 at λ² ≈ 26.

* **K = 2 (Brown Circles):** This series shows a decreasing trend, starting at approximately 1 - Acc_test ≈ 0.001 at λ² ≈ 10 and decreasing to approximately 1 - Acc_test ≈ 0.00005 at λ² ≈ 26.

* **K = 3 (Dark Yellow Circles):** This series shows a decreasing trend, starting at approximately 1 - Acc_test ≈ 0.002 at λ² ≈ 10 and decreasing to approximately 1 - Acc_test ≈ 0.00005 at λ² ≈ 26.

* **K = 2, symmetrized graph (Yellow Circles):** This series shows a decreasing trend, starting at approximately 1 - Acc_test ≈ 0.0005 at λ² ≈ 10 and decreasing to approximately 1 - Acc_test ≈ 0.00005 at λ² ≈ 26.

* **K = 3, symmetrized graph (Light Yellow Circles):** This series shows a decreasing trend, starting at approximately 1 - Acc_test ≈ 0.001 at λ² ≈ 10 and decreasing to approximately 1 - Acc_test ≈ 0.00005 at λ² ≈ 26.

### Key Observations

* The theoretical bounds (1/2 τ_BO and Bayes – optimal) provide a benchmark for the performance of the other data series.

* The α = 4, μ = 1 series consistently exhibits higher test error compared to the α = 2, μ = 2 series.

* The K values seem to influence the test error, with higher K values generally leading to lower test error.

* The "symmetrized graph" versions of K=2 and K=3 show lower test error than their non-symmetrized counterparts.

### Interpretation

The plot demonstrates the relationship between lambda squared (λ²) and test error (1 - Acc_test) under different parameter settings (α, μ, K). The decreasing trend in most series suggests that increasing λ² generally improves test accuracy. However, the fluctuations in the α = 4, μ = 1 series indicate that this relationship is not always straightforward and may be sensitive to the specific parameter values.

The theoretical bounds (1/2 τ_BO and Bayes – optimal) provide a reference point for evaluating the performance of the empirical data. The fact that the empirical data series generally fall above these bounds suggests that there is room for improvement in the model's performance.

The influence of K on test error suggests that the complexity of the model (as represented by K) plays a role in its ability to generalize to unseen data. The symmetrized graph versions of K=2 and K=3 performing better than their non-symmetrized counterparts suggests that the symmetrization process helps to reduce overfitting and improve generalization.

The logarithmic scale on the y-axis emphasizes the differences in test error, particularly at lower error rates. This is important for understanding the practical significance of the observed differences in performance.
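The effect of the logarithmic axis can be made concrete with two pairs of values from this description. The sketch below is illustrative only: the helper `log_gap` is a hypothetical name, and the inputs are the approximate readings quoted above. It compares the absolute gap between two error rates with their vertical distance on a log10 axis.

```python
import math

def log_gap(a, b):
    """Vertical distance between two error values on a log10 axis, in decades."""
    return abs(math.log10(a) - math.log10(b))

# Approximate readings from this description (values of 1 - Acc_test).
high_pair = (0.015, 0.003)    # α = 4, μ = 1 vs. α = 2, μ = 2 at λ² ≈ 10.5
low_pair = (0.0002, 0.00005)  # K = 1 series at λ² ≈ 10 vs. λ² ≈ 26

for name, (a, b) in [("high-error pair", high_pair), ("low-error pair", low_pair)]:
    print(f"{name}: absolute gap = {abs(a - b):.5f}, log gap = {log_gap(a, b):.3f} decades")
```

The absolute gaps differ by a factor of about 80, yet both pairs sit a similar distance apart on the log axis (roughly 0.7 and 0.6 decades), which is why differences among small error rates remain visible in the plot.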

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: Test Error vs. λ² for Different Model Configurations

### Overview

This image is a scatter plot on a logarithmic scale comparing the test error (1 - Acc_test) against the parameter λ² for various model configurations. The plot includes two theoretical benchmark lines and data points for two primary model families (α=4, μ=1 and α=2, μ=2), each further subdivided by a parameter K and whether the graph is symmetrized. The overall trend shows test error decreasing as λ² increases.

### Components/Axes

* **X-axis:** Labeled "λ²". It is a linear scale with major tick marks at 10, 12, 14, 16, 18, 20, 22, 24, and 26.

* **Y-axis:** Labeled "1 - Acc_test". It is a logarithmic scale (base 10) with major tick marks at 10⁻⁶, 10⁻⁵, 10⁻⁴, 10⁻³, and 10⁻².

* **Primary Legend (Top-Right):**
    * Filled Circle (●): Corresponds to model parameters α=4, μ=1.
    * Plus Sign (+): Corresponds to model parameters α=2, μ=2.
    * Dotted Line (····): Labeled "½ τ_BO". This is a theoretical benchmark line.
    * Dashed Line (----): Labeled "Bayes – optimal". This is a theoretical lower bound line.

* **Secondary Legend (Bottom-Left):** This legend maps colors to the parameter K and graph type. The colors apply to both the circle and plus sign data points.
    * Dark Brown: K=1
    * Medium Brown: K=2
    * Light Brown: K=3
    * Dark Yellow: K=2, symmetrized graph
    * Bright Yellow: K=3, symmetrized graph
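Because the marker shape and the color are independent encodings, every plotted series is identified by one entry from each legend. The sketch below is a hypothetical illustration of that two-key lookup: the names `MARKERS`, `COLORS`, `Series`, and `decode` are assumptions introduced here, and only the legend entries themselves come from the description above.

```python
from dataclasses import dataclass

# Marker legend: model family (α, μ), as listed in the primary legend.
MARKERS = {
    "circle": {"alpha": 4, "mu": 1},
    "plus": {"alpha": 2, "mu": 2},
}

# Color legend: (K, symmetrized graph?), as listed in the secondary legend.
COLORS = {
    "dark brown": (1, False),
    "medium brown": (2, False),
    "light brown": (3, False),
    "dark yellow": (2, True),
    "bright yellow": (3, True),
}

@dataclass(frozen=True)
class Series:
    alpha: int
    mu: int
    K: int
    symmetrized: bool

def decode(marker: str, color: str) -> Series:
    """Combine the two legends to identify one plotted series."""
    k, symmetrized = COLORS[color]
    return Series(K=k, symmetrized=symmetrized, **MARKERS[marker])

# Every marker/color combination the two legends allow.
all_series = [decode(m, c) for m in MARKERS for c in COLORS]
print(len(all_series))
print(decode("plus", "dark yellow"))
```

Two markers and five colors give ten possible series; a yellow plus sign, for instance, decodes to α=2, μ=2, K=2 on the symmetrized graph.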

### Detailed Analysis

**Trend Verification:**

* **Bayes-optimal Line (Dashed):** Slopes downward steeply from left to right. It represents the best possible theoretical performance.

* **½ τ_BO Line (Dotted):** Slopes downward, but less steeply than the Bayes-optimal line. It represents another theoretical benchmark.

* **Data Points (All Series):** All data series (circles and plus signs, across all colors) show a general downward trend as λ² increases, indicating that test error decreases with larger λ².

**Data Point Extraction (Approximate Values):**

* **Bayes-optimal Line:** At λ²=10, y ≈ 10⁻⁵. At λ²=12, y ≈ 10⁻⁶.

* **½ τ_BO Line:** At λ²=10, y ≈ 1.5×10⁻³. At λ²=20, y ≈ 10⁻⁵.

* **α=4, μ=1 (Circles):**
    * K=1 (Dark Brown): Starts near y=4×10⁻² at λ²=10, decreases to ~10⁻⁴ at λ²=20.
    * K=2 (Medium Brown): Starts near y=2×10⁻³ at λ²=10, decreases to ~10⁻⁵ at λ²=22.
    * K=3 (Light Brown): Points are generally lower than K=2 for the same λ².
    * K=2, symmetrized (Dark Yellow): Points are consistently lower than non-symmetrized K=2 circles. At λ²=10, y ≈ 5×10⁻⁵.
    * K=3, symmetrized (Bright Yellow): The lowest circle points. At λ²=10, y ≈ 2×10⁻⁵. At λ²=12, y ≈ 10⁻⁶.

* **α=2, μ=2 (Plus Signs):**
    * K=1 (Dark Brown): Starts near y=7×10⁻³ at λ²=10, decreases to ~3×10⁻⁵ at λ²=26.
    * K=2 (Medium Brown): Points are generally lower than K=1 plus signs.
    * K=3 (Light Brown): Points are generally lower than K=2 plus signs.
    * K=2, symmetrized (Dark Yellow): Points are lower than non-symmetrized K=2 plus signs.
    * K=3, symmetrized (Bright Yellow): The lowest plus sign points. At λ²=10, y ≈ 10⁻⁵.

### Key Observations

1. **Hierarchy of Performance:** For both model families (α=4,μ=1 and α=2,μ=2), performance improves (error decreases) as K increases from 1 to 3.

2. **Symmetrization Benefit:** For a fixed K (2 or 3), the "symmetrized graph" variants (yellow points) consistently achieve lower test error than their non-symmetrized counterparts (brown points) at similar λ² values.

3. **Model Family Comparison:** At similar λ² and K values, the α=4, μ=1 models (circles) generally achieve lower error than the α=2, μ=2 models (plus signs). For example, at λ²=16, the K=3 symmetrized circle is near 10⁻⁵, while the K=3 symmetrized plus sign is near 5×10⁻⁵.

4. **Proximity to Bounds:** The best-performing models (K=3, symmetrized) approach the ½ τ_BO line and, at higher λ², get closer to the Bayes-optimal bound. The K=1 models are far above both theoretical lines.

### Interpretation

This plot investigates how test error scales with the parameter λ² for different graph neural network or kernel machine configurations, likely in a theoretical or controlled experimental setting. The parameters α, μ, and K probably control aspects of the model's architecture or the data's structure (e.g., graph degree, feature dimension, or number of layers/hops).

The key findings are:

1. **λ² is a key driver of performance:** Increasing λ² consistently reduces test error across all tested configurations, suggesting it is a beneficial regularization parameter or a measure of signal-to-noise ratio.

2. **Model complexity helps:** Increasing K (which could represent the number of propagation steps or model depth) improves performance, indicating that capturing more complex, multi-hop relationships is beneficial.

3. **Symmetry is powerful:** Enforcing symmetry in the graph representation ("symmetrized graph") provides a significant and consistent performance boost. This suggests that the underlying problem or data has an inherent symmetry that, when leveraged by the model, leads to better generalization.

4. **Theoretical guides are informative:** The data points follow the slope of the theoretical ½ τ_BO line, and the best models trend toward the Bayes-optimal limit. This validates the theoretical framework and shows that practical models can approach fundamental limits with the right inductive biases (like symmetry and sufficient depth).

**Notable Anomaly:** The data points for K=1 (dark brown) are clustered in the upper-left region, showing high error and weak scaling with λ². This indicates that a model with K=1 is fundamentally limited and cannot take advantage of increasing λ² to reduce error effectively, unlike deeper (K=2,3) models.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Error Rate vs. Regularization Parameter (λ²)

### Overview

This scatter plot compares the test error rate (1 - Acc_test) across different regularization parameter values (λ²) for various model configurations. The plot includes multiple data series differentiated by model complexity (K), graph symmetrization, and theoretical benchmarks (Bayes-optimal and τ_BO).

### Components/Axes

- **X-axis**: λ² (regularization parameter), linear scale from 10 to 26

- **Y-axis**: 1 - Acc_test (test error rate), logarithmic scale from 10⁻⁶ to 10⁻²

- **Legend**:
    - Symbols:
        - `●` = α=4, μ=1
        - `+` = α=2, μ=2
    - Lines:
        - `---` = Bayes-optimal
        - `...` = ½τ_BO
    - Colors:
        - Dark brown = K=1
        - Medium brown = K=2
        - Light brown = K=3
        - Yellow = Symmetrized graphs (K=2 and K=3)

### Detailed Analysis

1. **Bayes-optimal reference**: The dashed line shows the theoretical minimum error rate, serving as a performance benchmark.

2. **½τ_BO reference**: The dotted line shows the quantity ½τ_BO, acting as an intermediate reference.

3. **Model complexity (K)**:
    - K=1 (dark brown): Highest error rates, consistently above the ½τ_BO line
    - K=2 (medium brown): Moderate error rates, approaching the ½τ_BO line
    - K=3 (light brown): Lowest error rates, closest to the ½τ_BO line

4. **Symmetrized graphs**:
    - K=2 (yellow): Slightly higher error than non-symmetrized K=2
    - K=3 (yellow): Similar performance to non-symmetrized K=3

5. **Parameter combinations**:
    - α=4, μ=1 (circles): Best performance across all K values
    - α=2, μ=2 (plus signs): Worst performance, consistently above the ½τ_BO line

### Key Observations

1. **Error reduction trend**: All data series show decreasing error rates as λ² increases, following the Bayes-optimal trend line.

2. **Symmetrization impact**: Symmetrized graphs (yellow) show minimal performance difference compared to non-symmetrized versions for K≥2.

3. **Parameter sensitivity**: The α=4, μ=1 configuration (circles) consistently outperforms α=2, μ=2 (plus signs) across all K values.

4. **Convergence pattern**: For λ² > 18, all configurations approach within 10⁻⁴ of the Bayes-optimal error rate.

### Interpretation

The plot demonstrates that:

1. **Regularization strength** (λ²) is critical for error minimization, with optimal performance achieved at λ² > 18.

2. **Model complexity** (K) directly impacts error rates, with higher K values enabling better approximation of the Bayes-optimal solution.

3. **Symmetrization** provides limited benefits for K≥2, suggesting diminishing returns in graph structure optimization.

4. **Parameter selection** (α, μ) significantly affects performance, with α=4, μ=1 being the most effective combination.

5. The ½τ_BO line acts as a practical target, with all configurations except α=2, μ=2 approaching this threshold at higher λ² values.

This analysis suggests that optimal model configuration requires balancing regularization strength (λ² > 18), model complexity (K≥2), and parameter selection (α=4, μ=1), while graph symmetrization offers minimal additional benefits for K≥2.
