## Line Graph: Test Accuracy vs. Time for Neural Network Training

### Overview

The image contains two primary components:

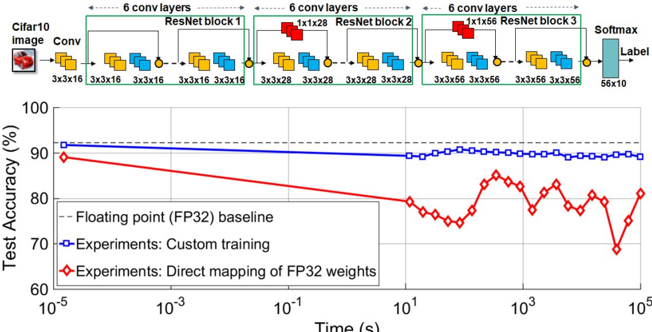

1. A **neural network architecture diagram** (top) depicting a ResNet-based model for CIFAR-10 image classification.

2. A **line graph** (bottom) comparing test accuracy over time for three training methods: FP32 baseline, custom training, and direct mapping of FP32 weights.

---

### Components/Axes

#### Neural Network Diagram

- **Input**: CIFAR-10 image (3x32x32)

- **Layers**:

  - 6 convolutional layers (Conv) with 3x3x16 filters

  - 3 ResNet blocks (each with 6 convolutional layers):

    - Block 1: 3x16x16 → 3x28x28

    - Block 2: 3x28x28 → 3x56x56

    - Block 3: 3x56x56 → 3x56x56

  - Output: Softmax layer (56x10) for label prediction

- **Color Coding**:

  - Yellow: Convolutional layers

  - Blue: ResNet blocks

  - Red: ResNet blocks (highlighted in diagram)

  - Gray: Softmax layer

#### Line Graph

- **X-axis**: Time (s) on logarithmic scale (10⁻⁵ to 10⁵)

- **Y-axis**: Test Accuracy (%) from 60% to 100%

- **Legend**:

- Dashed gray: FP32 baseline (~90% accuracy)

- Solid blue: Custom training experiments (~90% accuracy)

- Red diamonds: Direct mapping of FP32 weights (80–85% accuracy)
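
To make the logarithmic time axis concrete, the following minimal sketch enumerates one tick per power of ten between the stated axis limits. The helper name `decade_ticks` is an illustrative assumption and does not come from the figure.

```python
import math


def decade_ticks(t_min: float, t_max: float) -> list[float]:
    """Return one tick per power of ten between t_min and t_max (inclusive)."""
    lo = round(math.log10(t_min))
    hi = round(math.log10(t_max))
    return [10.0 ** k for k in range(lo, hi + 1)]


# Axis limits as stated in the description: 10^-5 s to 10^5 s.
ticks = decade_ticks(1e-5, 1e5)
print(len(ticks))      # 11 ticks
print(len(ticks) - 1)  # 10 decades spanned
```

On such an axis each decade takes the same horizontal distance, so the interval from 10¹ to 10³ seconds covers only two of the ten decades shown.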

---

### Detailed Analysis

#### Neural Network Diagram

- **Flow**:

- Input → 6 Conv layers → ResNet Block 1 → ResNet Block 2 → ResNet Block 3 → Softmax → Label

- **Key Details**:

- ResNet blocks use residual connections (indicated by red arrows in diagram).

- Output dimensions grow from 3x16x16 to 3x56x56 across Blocks 1 and 2; Block 3 leaves them unchanged at 3x56x56.
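
The flow described above can be written down as a small data structure. This is a sketch under stated assumptions: the `Stage` class and helper names are hypothetical, the shapes are copied verbatim from the description without being checked against the image, and the layer total assumes the 6 initial Conv layers are separate from the 6 layers inside each ResNet block.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    conv_layers: int  # convolutional layers in this stage, per the description
    out_shape: str    # output dimensions as transcribed from the description


# Stages in the order given by the flow. The first stage's output shape is
# inferred from the stated input of Block 1 (an assumption).
STAGES = [
    Stage("6 Conv layers", 6, "3x16x16"),
    Stage("ResNet Block 1", 6, "3x28x28"),
    Stage("ResNet Block 2", 6, "3x56x56"),
    Stage("ResNet Block 3", 6, "3x56x56"),
]


def total_conv_layers(stages: list[Stage]) -> int:
    """Sum the convolutional layers over all stages."""
    return sum(s.conv_layers for s in stages)


def flow(stages: list[Stage]) -> str:
    """Render the pipeline from input to predicted label."""
    names = ["Input"] + [s.name for s in stages] + ["Softmax", "Label"]
    return " → ".join(names)


print(flow(STAGES))
print(total_conv_layers(STAGES))  # 6 + 3 * 6 = 24 under the stated assumption
```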

#### Line Graph

1. **FP32 Baseline (Gray Dashed Line)**:

- Constant at ~90% accuracy across all time scales.

2. **Custom Training (Blue Line)**:

- Stable at ~90% accuracy, matching the FP32 baseline.

3. **Direct Mapping of FP32 Weights (Red Diamonds)**:

- Starts at ~85% accuracy, dips below 80% at ~10¹ seconds, then recovers to ~80% by 10³ seconds.

- Exhibits significant volatility compared to other methods.

---

### Key Observations

1. **Accuracy Stability**:

- Custom training and the FP32 baseline maintain near-identical accuracy (~90%), suggesting that custom training loses no visible accuracy relative to FP32.

2. **Direct Mapping Limitations**:

- The red diamonds sit roughly 5–10 percentage points below the ~90% baseline (80–85% versus ~90%) and fluctuate erratically.

3. **Time Correlation**:

- Direct mapping’s performance degradation occurs at intermediate time scales (~10¹–10³ seconds).
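
Because accuracy gaps can be quoted either in percentage points or as a relative drop, a short sketch helps keep the two apart. The accuracies below are the approximate values read from the description (about 90% for the baseline, about 85% and 80% for direct mapping), not measured data, and the function names are illustrative.

```python
# Approximate accuracies from the description above, in percent.
BASELINE = 90.0
DIRECT_MAPPING = {"start": 85.0, "recovered": 80.0}


def gap_points(baseline: float, value: float) -> float:
    """Absolute gap in percentage points."""
    return baseline - value


def relative_drop(baseline: float, value: float) -> float:
    """Gap expressed as a percentage of the baseline accuracy."""
    return 100.0 * (baseline - value) / baseline


for label, acc in DIRECT_MAPPING.items():
    points = gap_points(BASELINE, acc)
    rel = relative_drop(BASELINE, acc)
    print(f"{label}: {points:.1f} points, {rel:.1f}% relative")
# start: 5.0 points, 5.6% relative
# recovered: 10.0 points, 11.1% relative
```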

---

### Interpretation

1. **Model Architecture**:

- The ResNet-based design (with residual connections) likely enables efficient feature extraction, contributing to high accuracy.

2. **Training Method Impact**:

- Direct mapping of FP32 weights introduces instability, possibly due to quantization errors or suboptimal weight initialization.

- Custom training avoids these issues, maintaining performance parity with the FP32 baseline.

3. **Practical Implications**:

- Direct mapping may be unsuitable for production without additional optimization (e.g., fine-tuning).

- The FP32 baseline serves as a critical reference for evaluating quantization trade-offs.

---

### Notable Anomalies

- **Red Diamond Dip**: The sharp accuracy drop below 80% at ~10¹ seconds suggests a potential instability during mid-training phases for direct mapping.

- **Recovery at 10³ Seconds**: Partial recovery to ~80% implies some adaptation to the training process, but a gap of roughly 10 percentage points to the ~90% baseline persists.