# Technical Document: Retrieval-Augmented Generation (RAG) Workflow Diagram

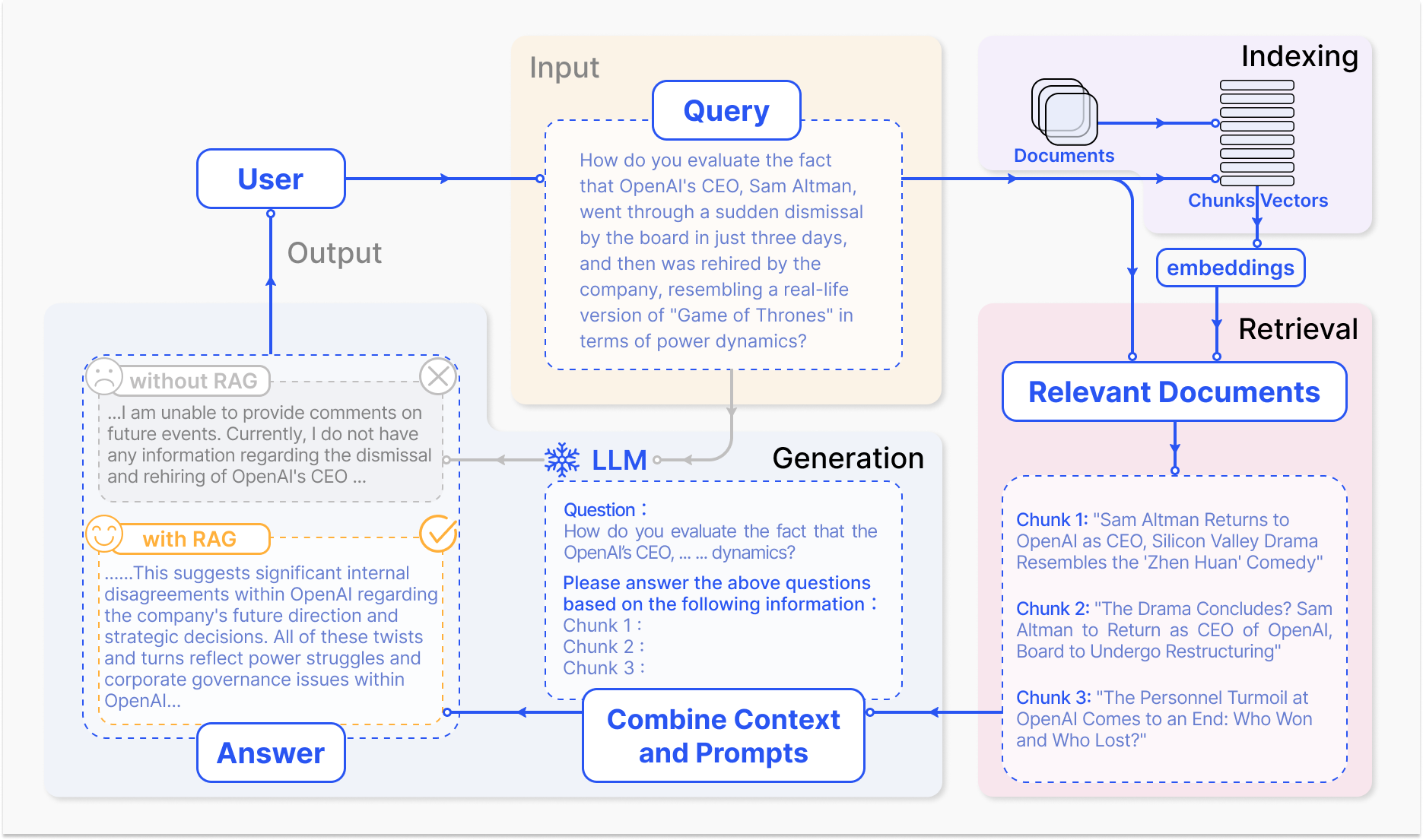

This document transcribes and analyzes a technical diagram that illustrates the architectural flow of a Retrieval-Augmented Generation (RAG) system and contrasts its answer with that of a standard LLM.

## 1. High-Level System Overview

The diagram depicts four stages for handling a user query: **Input**, **Indexing**, **Retrieval**, and **Generation**. It contrasts the answer the LLM produces with RAG against the answer it produces without RAG.

---

## 2. Component Breakdown by Region

### Region 1: Input (Top Center)

* **Actor:** `User` (Top Left)

* **Component:** `Query` (contained in a dashed blue box within a tan background).

* **Transcribed Text:**

> "How do you evaluate the fact that OpenAI's CEO, Sam Altman, went through a sudden dismissal by the board in just three days, and then was rehired by the company, resembling a real-life version of 'Game of Thrones' in terms of power dynamics?"

### Region 2: Indexing (Top Right)

This region represents the data preparation phase, contained in a light purple background.

* **Components:**

    * **Documents**: Represented by a stack of document icons.

    * **Chunks**: The documents are broken down into smaller horizontal segments.

    * **Vectors**: Vertical lines indicating the mathematical representation of the chunks.

    * **embeddings**: A blue pill-shaped button indicating the process of converting text to vector space.
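
The indexing steps above can be sketched in a few lines of Python. This is only an illustration: the diagram does not say how documents are chunked or which embedding model is used, so the fixed-size word chunking, the hashed bag-of-words `embed` function, and all names here (`chunk_text`, `embed`, `build_index`) are assumptions standing in for a real chunker and embedding model.

```python
import hashlib
import math


def chunk_text(text: str, max_words: int = 8) -> list[str]:
    """Split a document into chunks of at most max_words words (assumed strategy)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str, dim: int = 16) -> list[float]:
    """Toy embedding: hashed bag-of-words, L2-normalized.

    A placeholder for a real embedding model, which the diagram does not name.
    """
    vec = [0.0] * dim
    for word in text.lower().split():
        # Map each word to a bucket using the first byte of its MD5 digest.
        digest = hashlib.md5(word.encode("utf-8")).digest()
        vec[digest[0] % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def build_index(documents: list[str]) -> list[tuple[str, list[float]]]:
    """Documents -> Chunks -> Vectors: return (chunk, vector) pairs."""
    index = []
    for doc in documents:
        for chunk in chunk_text(doc):
            index.append((chunk, embed(chunk)))
    return index
```

In a real system the `(chunk, vector)` pairs would typically be stored in a vector database rather than a Python list.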

### Region 3: Retrieval (Bottom Right)

This region represents the search phase, contained in a light pink background.

* **Component:** `Relevant Documents` (Blue header box).

* **Data Content (Retrieved Chunks):**

    * **Chunk 1:** "Sam Altman Returns to OpenAI as CEO, Silicon Valley Drama Resembles the 'Zhen Huan' Comedy"

    * **Chunk 2:** "The Drama Concludes? Sam Altman to Return as CEO of OpenAI, Board to Undergo Restructuring"

    * **Chunk 3:** "The Personnel Turmoil at OpenAI Comes to an End: Who Won and Who Lost?"
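
As a rough sketch of how the three chunks above might be selected, the snippet below ranks indexed chunks by similarity to a query vector and keeps the top `k`. The diagram does not state how relevance is scored; cosine similarity is a common choice and is assumed here, and the names `cosine_similarity` and `retrieve_top_k` are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_top_k(
    query_vec: list[float],
    index: list[tuple[str, list[float]]],
    k: int = 3,
) -> list[str]:
    """Return the k chunks whose vectors are most similar to the query vector."""
    ranked = sorted(
        index,
        key=lambda item: cosine_similarity(query_vec, item[1]),
        reverse=True,
    )
    return [chunk for chunk, _ in ranked[:k]]
```

The default `k=3` mirrors the three chunks shown in the diagram; the query vector must come from the same embedding function used to build the index.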

### Region 4: Generation (Bottom Center & Left)

This region represents the final processing and output, contained in a light grey background.

* **Component:** `Combine Context and Prompts` (Blue header box).

* **Internal Prompt Logic:**

    * `Question :` (References the original Query).

    * `Please answer the above questions based on the following information :`

    * `Chunk 1 :`, `Chunk 2 :`, `Chunk 3 :` (Placeholders for retrieved data).

* **Processor:** `LLM` (Represented by a snowflake icon).
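
The prompt layout in the `Combine Context and Prompts` box can be reproduced with a small template function. The label strings below are copied from the diagram; the function name `build_prompt` and the exact line breaks between the parts are assumptions, since the diagram shows only the labels.

```python
def build_prompt(question: str, chunks: list[str]) -> str:
    """Combine the user question and retrieved chunks into a single prompt string."""
    lines = [
        f"Question : {question}",
        "",
        "Please answer the above questions based on the following information :",
    ]
    # Number the chunks from 1, matching the "Chunk 1 :", "Chunk 2 :" labels.
    for i, chunk in enumerate(chunks, start=1):
        lines.append(f"Chunk {i} : {chunk}")
    return "\n".join(lines)
```

Calling `build_prompt(query, retrieved_chunks)` yields the text that is then passed to the LLM.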

---

## 3. Comparative Analysis: Output Results

The diagram compares two output paths originating from the **LLM** and leading back to the **User**.

### Path A: "without RAG" (Top Output Box)

* **Visual Indicator:** Grey dashed box, sad face icon (☹️), and a red "X" icon.

* **Trend:** The model declines to answer and treats the dismissal and rehiring as a future event, which indicates that the event is not covered by its training data.

* **Transcribed Text:**

> "...I am unable to provide comments on future events. Currently, I do not have any information regarding the dismissal and rehiring of OpenAI's CEO ..."

### Path B: "with RAG" (Bottom Output Box)

* **Visual Indicator:** Gold dashed box, happy face icon (😊), and a gold checkmark icon (✔️).

* **Trend:** The model provides a detailed, context-aware analysis based on the retrieved chunks.

* **Transcribed Text:**

> "......This suggests significant internal disagreements within OpenAI regarding the company's future direction and strategic decisions. All of these twists and turns reflect power struggles and corporate governance issues within OpenAI..."

---

## 4. Process Flow (Spatial Logic)

1. **Initiation:** The `User` sends a `Query` to the `Input` block.

2. **Data Preparation (Parallel/Pre-emptive):** In the `Indexing` stage, `Documents` are split into `Chunks`, and each chunk is converted into a `Vector` via `embeddings`.

3. **Retrieval:** The `Query` is sent to the `Retrieval` stage to find `Relevant Documents` (Chunks 1-3).

4. **Augmentation:** The `Relevant Documents` and the original `Query` are merged in the `Combine Context and Prompts` block.

5. **Inference:** The combined prompt is fed into the `LLM`.

6. **Delivery:** The `LLM` generates the `Answer` (specifically the "with RAG" version), which is sent as `Output` back to the `User`.
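
The two output paths can be contrasted in code. The sketch below wires the stages together through plain callables so that no particular retriever, prompt format, or model is implied; `answer_with_rag`, `answer_without_rag`, and the three stub functions are hypothetical names used only for illustration.

```python
from typing import Callable


def answer_with_rag(
    query: str,
    retrieve: Callable[[str], list[str]],
    build_prompt: Callable[[str, list[str]], str],
    llm: Callable[[str], str],
) -> str:
    """Retrieval -> Augmentation -> Inference, as in steps 3 to 5 above."""
    chunks = retrieve(query)              # step 3: find relevant chunks
    prompt = build_prompt(query, chunks)  # step 4: combine context and query
    return llm(prompt)                    # step 5: run the LLM on the combined prompt


def answer_without_rag(query: str, llm: Callable[[str], str]) -> str:
    """The 'without RAG' path: the query goes to the LLM with no added context."""
    return llm(query)


# Stubs standing in for a real retriever, prompt template, and model.
def fake_retrieve(query: str) -> list[str]:
    return ["chunk A", "chunk B"]


def fake_prompt(query: str, chunks: list[str]) -> str:
    return query + " | " + " | ".join(chunks)


def echo_llm(prompt: str) -> str:
    return f"LLM saw: {prompt}"
```

With these stubs, `answer_with_rag("q", fake_retrieve, fake_prompt, echo_llm)` shows the model receiving the retrieved chunks, whereas `answer_without_rag("q", echo_llm)` shows it receiving only the query.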

---

## 5. Language Declaration

* **Primary Language:** English.

* **Secondary Language:** None (Note: The text mentions "Zhen Huan," a reference to a Chinese television drama, but the text itself is written entirely in English).