## Grouped Bar Chart: Latency vs. Batch Size for FP16 and INT8 Precision

### Overview

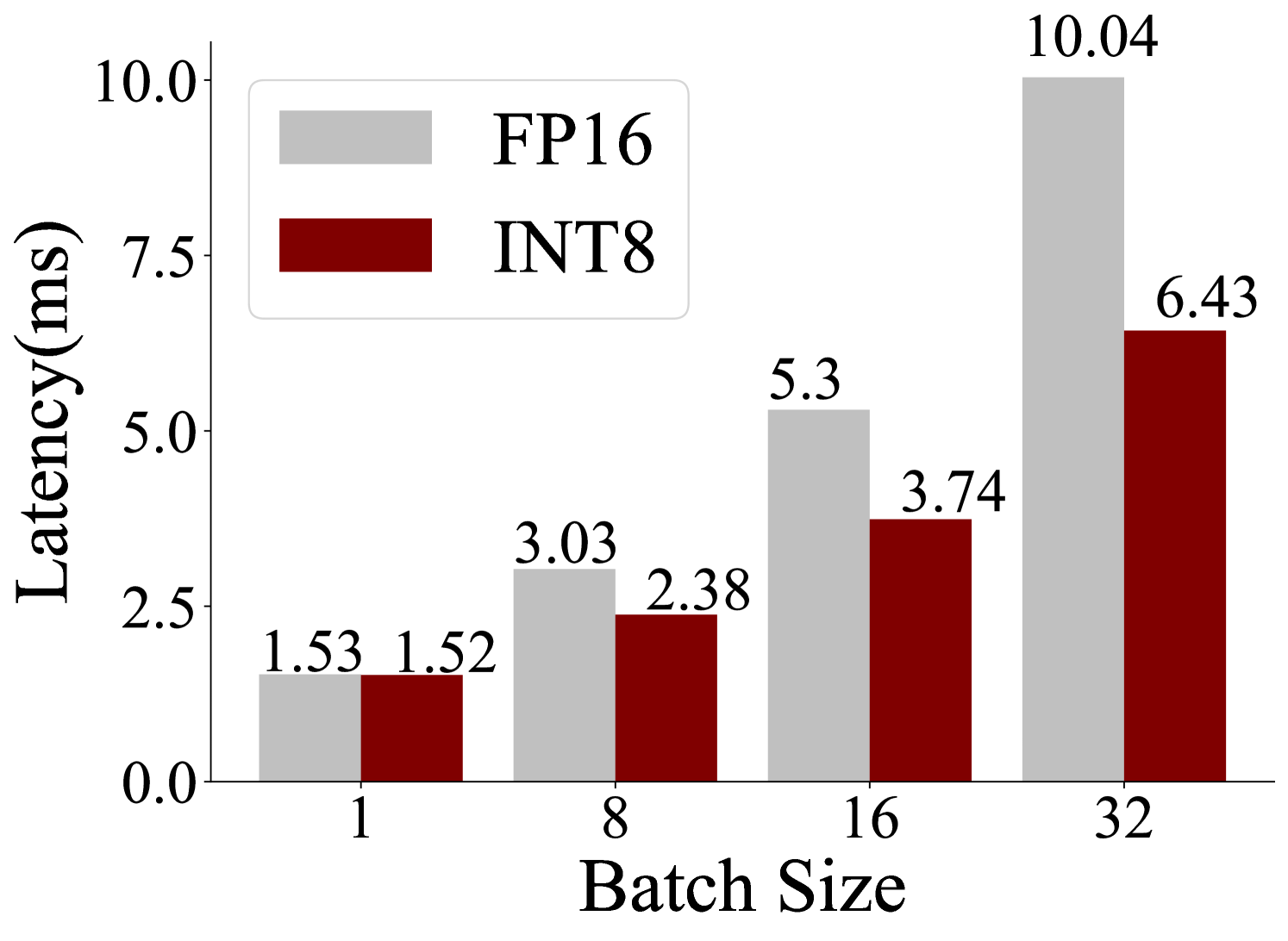

The image displays a grouped bar chart comparing the inference latency (in milliseconds) of two numerical precision formats, FP16 (16-bit floating point) and INT8 (8-bit integer), across four different batch sizes. The chart demonstrates how latency scales with increasing batch size for each precision type.

### Components/Axes

* **Chart Type:** Grouped (clustered) bar chart.
* **X-Axis (Horizontal):**
    * **Label:** "Batch Size"
    * **Categories/Markers:** 1, 8, 16, 32. These represent the number of input samples processed in a single inference pass.
* **Y-Axis (Vertical):**
    * **Label:** "Latency(ms)"
    * **Scale:** Linear scale from 0.0 to 10.0, with major tick marks at 0.0, 2.5, 5.0, 7.5, and 10.0.
* **Legend:**
    * **Position:** Top-left corner of the chart area.
    * **Items:**
        * A light gray rectangle labeled "FP16".
        * A dark red (maroon) rectangle labeled "INT8".
* **Data Labels:** Numerical values are printed directly above each bar, indicating the precise latency measurement.

### Detailed Analysis

The chart presents the following data points, grouped by batch size:

| Batch Size | FP16 Latency (ms) | INT8 Latency (ms) |
| :--- | :--- | :--- |
| **1** | 1.53 | 1.52 |
| **8** | 3.03 | 2.38 |
| **16** | 5.3 | 3.74 |
| **32** | 10.04 | 6.43 |

**Trend Verification:**

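* **FP16 Series (Light Gray Bars):** Latency rises at every step in batch size, from 1.53 ms at batch size 1 to 10.04 ms at batch size 32. The bars appear to accelerate between batch sizes 16 and 32, but the x-axis categories are evenly spaced while the batch sizes are not. Per added sample, the increase is roughly 0.21 ms (1 to 8), 0.28 ms (8 to 16), and 0.30 ms (16 to 32), so growth is close to linear beyond batch size 8.

* **INT8 Series (Dark Red Bars):** Latency also rises with batch size, but more slowly than FP16 in every interval: roughly 0.12 ms per added sample from batch size 1 to 8, and roughly 0.17 ms per added sample from 8 to 32.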
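The per-sample slopes quoted for each series can be checked with a short calculation. The sketch below is illustrative only: it uses the values from the data labels, and the names `batch_sizes`, `latency_ms`, and `segment_slopes` are hypothetical helpers, not part of any tool that produced the chart.

```python
# Latency values (ms) as read from the chart's data labels.
batch_sizes = [1, 8, 16, 32]
latency_ms = {
    "FP16": [1.53, 3.03, 5.3, 10.04],
    "INT8": [1.52, 2.38, 3.74, 6.43],
}


def segment_slopes(x, y):
    """Return the slope (ms per added sample) between each pair of adjacent points."""
    return [(y[i + 1] - y[i]) / (x[i + 1] - x[i]) for i in range(len(x) - 1)]


# One list of three slopes per series: 1->8, 8->16, 16->32.
slopes = {name: segment_slopes(batch_sizes, values) for name, values in latency_ms.items()}

for name, values in slopes.items():
    print(name, [f"{s:.2f}" for s in values])
```

Computing slopes against the actual batch sizes, rather than against bar position, removes the visual distortion introduced by the evenly spaced categorical x-axis.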

### Key Observations

1. **Near Parity at Batch Size 1:** At a batch size of 1, the latencies are nearly identical (1.53 ms vs. 1.52 ms). INT8 is faster by only 0.01 ms, and the two series do not cross at any measured batch size.

2. **Diverging Performance Gap:** As batch size increases, the gap between FP16 and INT8 widens at every step: 0.01 ms at batch size 1, 0.65 ms at 8, 1.56 ms at 16, and 3.61 ms at 32.

3. **Maximum Observed Difference:** The largest absolute difference occurs at batch size 32, where INT8 (6.43 ms) is approximately 3.61 ms faster than FP16 (10.04 ms), representing a ~36% reduction in latency.

4. **Scaling Behavior:** From batch 16 to batch 32, FP16 latency grows by a factor of about 1.89 (5.3 ms to 10.04 ms), which is close to proportional to the doubling of batch size. INT8 latency grows by a factor of about 1.72 over the same range (3.74 ms to 6.43 ms), so INT8 gains more from the larger batch.
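The figures in these observations follow directly from the data labels. As a minimal sketch (the names `compare`, `rows`, `fp16_growth`, and `int8_growth` are illustrative, not taken from the source), the gap, the relative reduction, and the 16-to-32 growth factors can be reproduced as:

```python
# Latency values (ms) as read from the chart's data labels.
batch_sizes = [1, 8, 16, 32]
fp16_ms = [1.53, 3.03, 5.3, 10.04]
int8_ms = [1.52, 2.38, 3.74, 6.43]


def compare(fp16, int8):
    """Return (absolute gap in ms, INT8 latency reduction relative to FP16 in percent)."""
    gap = fp16 - int8
    return gap, 100.0 * gap / fp16


# One (batch size, gap, reduction %) row per batch size.
rows = [(b, *compare(f, i)) for b, f, i in zip(batch_sizes, fp16_ms, int8_ms)]
for batch, gap, reduction in rows:
    print(f"batch={batch:>2}  gap={gap:.2f} ms  reduction={reduction:.1f}%")

# Growth factor when the batch size doubles from 16 to 32.
fp16_growth = fp16_ms[3] / fp16_ms[2]
int8_growth = int8_ms[3] / int8_ms[2]
print(f"FP16 x{fp16_growth:.2f}, INT8 x{int8_growth:.2f}")
```

A growth factor of exactly 2.0 would mean latency is proportional to batch size over that range; both formats fall below that, INT8 by a wider margin.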

### Interpretation

This chart provides a clear performance comparison relevant to machine learning model deployment and optimization. The data suggests that **INT8 quantization offers a latency advantage over FP16 at batch sizes of 8 and above, and that this advantage grows with the workload (batch size).** At batch size 1 the two formats are effectively tied.

* **Why it matters:** In production environments where throughput (processing many samples quickly) is critical, using INT8 precision can lead to substantially faster inference times, especially for services that handle large batches of requests. This can translate to better user experience, lower computational costs, and higher system efficiency.

* **Underlying reason:** The trend is consistent with the expected benefits of quantization. INT8 operations typically require less memory bandwidth and computational resources than FP16 operations. The widening gap is consistent with a fixed per-call overhead that both formats share and that dominates at batch size 1, combined with a per-sample cost that is lower for INT8 and dominates as the batch grows. The chart alone cannot confirm this, since it does not identify the model, hardware, or runtime.

* **Practical implication:** For applications where batch size is large (e.g., offline processing, high-throughput servers), adopting INT8 quantization is strongly indicated. For very small batch sizes (e.g., real-time, single-request serving), the performance benefit may be negligible, and other factors like model accuracy post-quantization would become the primary consideration.
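Because the interpretation above is framed in terms of throughput, it can help to convert the latencies into samples per second. The sketch below assumes batches are processed strictly one at a time with no pipelining or concurrency, which the chart does not state; `throughput_sps` is a hypothetical helper name.

```python
# Latency values (ms) as read from the chart's data labels.
batch_sizes = [1, 8, 16, 32]
latency_ms = {
    "FP16": [1.53, 3.03, 5.3, 10.04],
    "INT8": [1.52, 2.38, 3.74, 6.43],
}


def throughput_sps(batch_size, latency_in_ms):
    """Samples per second, assuming one batch is in flight at a time (an assumption)."""
    return batch_size / (latency_in_ms / 1000.0)


throughput = {
    name: [throughput_sps(b, ms) for b, ms in zip(batch_sizes, values)]
    for name, values in latency_ms.items()
}

for i, batch in enumerate(batch_sizes):
    fp16, int8 = throughput["FP16"][i], throughput["INT8"][i]
    print(f"batch={batch:>2}  FP16={fp16:7.0f}/s  INT8={int8:7.0f}/s  ratio={int8 / fp16:.2f}")
```

Under that assumption, throughput rises with batch size for both formats, and the INT8-to-FP16 ratio grows from about 1.01 at batch size 1 to about 1.56 at batch size 32.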