## Line Graphs and Histograms: Human Consistency and Accuracy Analysis

### Overview

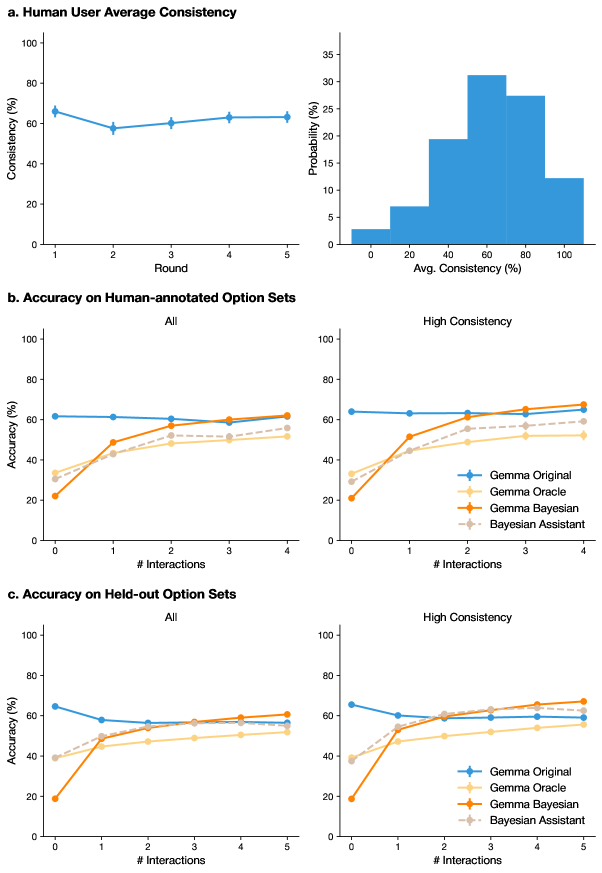

The image contains six visualizations analyzing human consistency and accuracy across different interaction scenarios. Key elements include line graphs tracking performance over rounds/interactions and a histogram showing consistency distribution. Four data series are compared: Gemma Original, Gemma Oracle, Gemma Bayesian, and Bayesian Assistant.

### Components/Axes

1. **Top Left (a. Human User Average Consistency)**

- X-axis: "Round" (1–5)

- Y-axis: "Consistency (%)" (0–100)

- Data: Blue line with markers showing slight dip in Round 2, stabilizing at ~60–65% thereafter.

2. **Top Right (b. Accuracy on Human-annotated Option Sets)**

- X-axis: "Avg. Consistency (%)" (0–100)

- Y-axis: "Probability (%)" (0–35)

- Data: Blue histogram bars peaking at 60–80% consistency.

3. **Middle Left (c. Accuracy on Held-out Option Sets - All)**

- X-axis: "# Interactions" (0–5)

- Y-axis: "Accuracy (%)" (0–100)

- Data: Four lines, one per model: Gemma Original (blue), Gemma Oracle (yellow), Gemma Bayesian (orange), and Bayesian Assistant (gray).

4. **Middle Right (High Consistency Subset)**

- Same axes as Middle Left but filtered for high-consistency users.

5. **Bottom Left (c. Accuracy on Held-out Option Sets - All)**

- Same as Middle Left but extended to 5 rounds.

6. **Bottom Right (High Consistency Subset)**

- Same as Middle Right but extended to 5 rounds.

### Detailed Analysis

#### a. Human User Average Consistency

- **Trend**: Consistency starts at ~65% (Round 1), drops to ~58% (Round 2), then rises to ~62% (Round 3) and stabilizes (~63–64%) in Rounds 4–5.

- **Uncertainty**: Approximate values due to lack of gridlines; error bars not visible.

#### b. Accuracy on Human-annotated Option Sets

- **Distribution**: Most users (roughly 70%) fall within 60–80% consistency. The lower tail (0–40%) has negligible probability.

#### c. Accuracy on Held-out Option Sets (All)

- **Gemma Original (Blue)**: Starts at ~62% (0 interactions), dips to ~58% (5 interactions).

- **Gemma Oracle (Yellow)**: Starts at ~30% (0 interactions), rises to ~50% (5 interactions).

- **Gemma Bayesian (Orange)**: Starts at ~20% (0 interactions), surges to ~60% (5 interactions).

- **Bayesian Assistant (Gray)**: Starts at ~35% (0 interactions), reaches ~55% (5 interactions).
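
The start and end values listed for panel (c) can be compared directly. The following is a minimal sketch that computes each model's change in accuracy between 0 and 5 interactions. The numbers are the approximate readings from this description, not exact data, and the names `accuracy` and `accuracy_change` are illustrative only.

```python
# Approximate (start, end) accuracy in percent, read off the held-out "All" panel.
# These are visual estimates from the figure description, not exact values.
accuracy = {
    "Gemma Original": (62, 58),
    "Gemma Oracle": (30, 50),
    "Gemma Bayesian": (20, 60),
    "Bayesian Assistant": (35, 55),
}


def accuracy_change(start_end):
    """Return the change in percentage points from 0 to 5 interactions."""
    start, end = start_end
    return end - start


# Change per model, in percentage points.
changes = {model: accuracy_change(values) for model, values in accuracy.items()}

# Print models from largest gain to largest loss.
for model, delta in sorted(changes.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model}: {delta:+d} points")
```

Under these estimates, Gemma Bayesian gains about 40 points, Gemma Oracle and Bayesian Assistant gain about 20 points each, and Gemma Original loses about 4 points.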

#### High Consistency Subset

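- **Trends**: Accuracy is higher than on the full set. Gemma Bayesian rises from ~25% (0 interactions) to ~65% (5 interactions), the same ~40-point gain as on the full set (~20% to ~60%) but from a higher starting point.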

### Key Observations

1. **Consistency Stability**: Human users maintain roughly 58–65% consistency across rounds, with a small dip in Round 2.

2. **Model Performance**:

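- Gemma Bayesian reaches the highest accuracy after 5 interactions on the full held-out set (~60%) and climbs to ~65% with high-consistency users, although it starts lowest at 0 interactions (~20%).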

- Bayesian Assistant shows moderate improvement but lags behind Gemma Bayesian.

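3. **Interaction Impact**: Accuracy improves substantially with more interactions for Gemma Oracle, Gemma Bayesian, and Bayesian Assistant (gains of roughly 20 to 40 points), while Gemma Original declines slightly (~62% to ~58%).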

### Interpretation

- **Human Behavior**: Stable consistency suggests reliable user performance, though slight dips may indicate task fatigue or learning curves.

- **Model Efficacy**:

- Gemma Bayesian’s rapid improvement implies strong adaptability to user feedback.

- Oracle and Assistant models perform better with high-consistency users, highlighting the importance of data quality.

- **Practical Implications**: Bayesian models (Gemma Bayesian, Bayesian Assistant) gain accuracy as interactions accumulate, which suggests they suit dynamic settings that require iterative learning. High-consistency users appear to be the subset where models reach their highest accuracy.

*Note: All values are approximate due to the absence of gridlines or exact numerical labels in the image.*