TECHNICAL ASSET FINGERPRINT

dee216170bde4225546055aa

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

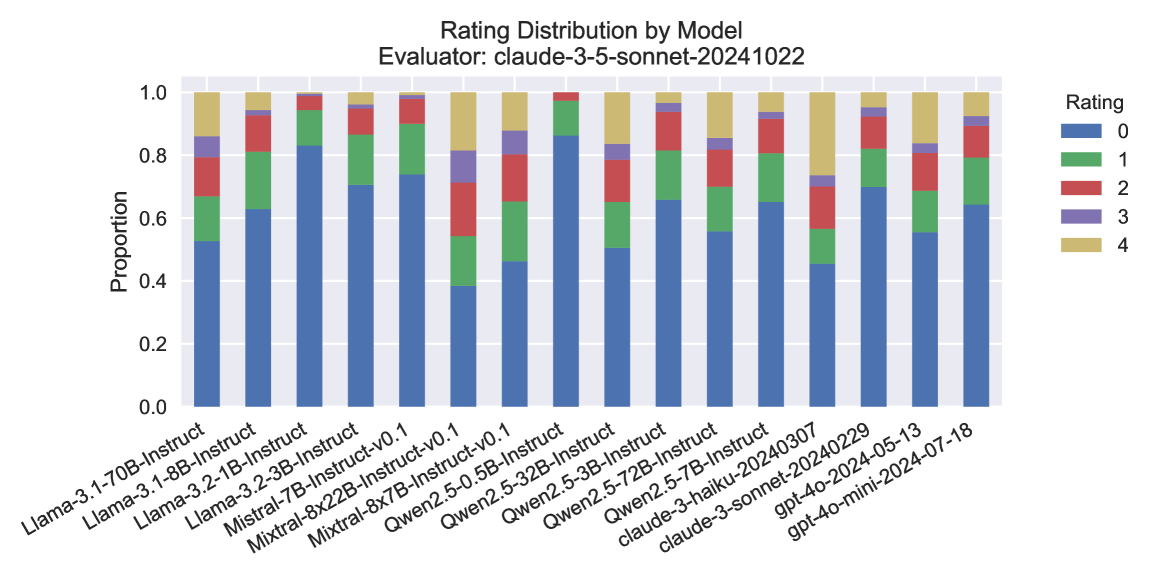

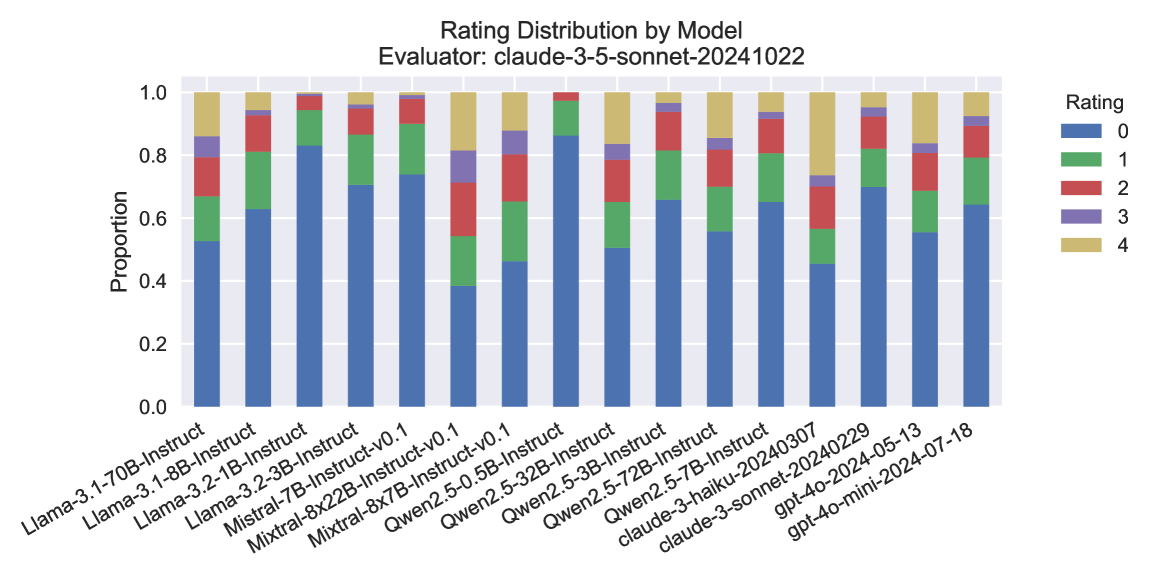

## Stacked Bar Chart: Rating Distribution by Model

### Overview

The image is a stacked bar chart illustrating the distribution of ratings for various language models. The x-axis represents different models, and the y-axis represents the proportion of each rating (0-4). The chart also specifies the evaluator used: "claude-3-5-sonnet-20241022".

### Components/Axes

* **Title:** Rating Distribution by Model

* **Subtitle:** Evaluator: claude-3-5-sonnet-20241022

* **X-axis:** Language Models (categorical):

* Llama-3.1-70B-Instruct

* Llama-3.1-8B-Instruct

* Llama-3.2-1B-Instruct

* Llama-3.2-3B-Instruct

* Mistral-7B-Instruct-v0.1

* Mixtral-8x22B-Instruct-v0.1

* Mixtral-8x7B-Instruct-v0.1

* Qwen2.5-0.5B-Instruct

* Qwen2.5-32B-Instruct

* Qwen2.5-3B-Instruct

* Qwen2.5-72B-Instruct

* Qwen2.5-7B-Instruct

* claude-3-haiku-20240307

* claude-3-sonnet-20240229

* gpt-4o-2024-05-13

* gpt-4o-mini-2024-07-18

* **Y-axis:** Proportion (numerical), ranging from 0.0 to 1.0 in increments of 0.2.

* **Legend:** Located on the top-right of the chart.

* Blue: 0

* Green: 1

* Red: 2

* Purple: 3

* Tan/Beige: 4

### Detailed Analysis

The chart displays the proportion of each rating (0 to 4) for each language model. Each bar represents a model, and the height of each colored segment within the bar indicates the proportion of that rating for the model.

* **Llama-3.1-70B-Instruct:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **Llama-3.1-8B-Instruct:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **Llama-3.2-1B-Instruct:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **Llama-3.2-3B-Instruct:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **Mistral-7B-Instruct-v0.1:**

* Rating 0 (Blue): ~0.5

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.15

* Rating 3 (Purple): ~0.1

* Rating 4 (Tan): ~0.05

* **Mixtral-8x22B-Instruct-v0.1:**

* Rating 0 (Blue): ~0.45

* Rating 1 (Green): ~0.25

* Rating 2 (Red): ~0.15

* Rating 3 (Purple): ~0.1

* Rating 4 (Tan): ~0.05

* **Mixtral-8x7B-Instruct-v0.1:**

* Rating 0 (Blue): ~0.4

* Rating 1 (Green): ~0.3

* Rating 2 (Red): ~0.15

* Rating 3 (Purple): ~0.1

* Rating 4 (Tan): ~0.05

* **Qwen2.5-0.5B-Instruct:**

* Rating 0 (Blue): ~0.7

* Rating 1 (Green): ~0.15

* Rating 2 (Red): ~0.05

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **Qwen2.5-32B-Instruct:**

* Rating 0 (Blue): ~0.8

* Rating 1 (Green): ~0.1

* Rating 2 (Red): ~0.05

* Rating 3 (Purple): ~0.02

* Rating 4 (Tan): ~0.03

* **Qwen2.5-3B-Instruct:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **Qwen2.5-72B-Instruct:**

* Rating 0 (Blue): ~0.7

* Rating 1 (Green): ~0.15

* Rating 2 (Red): ~0.05

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **Qwen2.5-7B-Instruct:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **claude-3-haiku-20240307:**

* Rating 0 (Blue): ~0.5

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.15

* Rating 3 (Purple): ~0.1

* Rating 4 (Tan): ~0.05

* **claude-3-sonnet-20240229:**

* Rating 0 (Blue): ~0.8

* Rating 1 (Green): ~0.1

* Rating 2 (Red): ~0.05

* Rating 3 (Purple): ~0.02

* Rating 4 (Tan): ~0.03

* **gpt-4o-2024-05-13:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05

* **gpt-4o-mini-2024-07-18:**

* Rating 0 (Blue): ~0.6

* Rating 1 (Green): ~0.2

* Rating 2 (Red): ~0.1

* Rating 3 (Purple): ~0.05

* Rating 4 (Tan): ~0.05
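Because each bar in a stacked proportion chart should total 1.0, the visual estimates above can be sanity-checked by summing them. The following is a minimal sketch, not part of the original analysis: the helper names `bar_total` and `is_complete_bar` and the 0.02 tolerance are illustrative assumptions, and the values are copied from three of the entries listed above.

```python
import math

# Estimates copied from the list above, ordered by rating 0..4.
estimates = {
    "Llama-3.1-70B-Instruct": [0.6, 0.2, 0.1, 0.05, 0.05],
    "Mistral-7B-Instruct-v0.1": [0.5, 0.2, 0.15, 0.1, 0.05],
    "Qwen2.5-32B-Instruct": [0.8, 0.1, 0.05, 0.02, 0.03],
}


def bar_total(proportions):
    """Sum the segment heights of one stacked bar."""
    return math.fsum(proportions)


def is_complete_bar(proportions, tol=0.02):
    """True when the segments total 1.0 within a tolerance (assumed value)."""
    return abs(bar_total(proportions) - 1.0) <= tol


for model, props in estimates.items():
    print(model, round(bar_total(props), 2), is_complete_bar(props))
```

A bar whose estimates fall well short of 1.0 (for example, one with a missing segment) would fail this check and is worth re-reading from the chart.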

### Key Observations

* Most models have a high proportion of rating 0.

* Ratings 3 and 4 have the lowest proportions across all models.

* The Qwen models tend to have a higher proportion of rating 0 compared to the other models.

* The Mixtral models have a relatively higher proportion of ratings 1, 2, and 3 compared to the other models.

### Interpretation

The chart provides a comparative overview of how different language models are rated by the "claude-3-5-sonnet-20241022" evaluator. The dominance of rating 0 suggests that, according to this evaluator, many of the models are frequently assigned the lowest rating. The variations in rating distributions among the models indicate differences in their performance or characteristics as perceived by the evaluator. The Mixtral models seem to receive more diverse ratings, while the Qwen models are more heavily skewed towards the lowest rating. Because the chart does not show the rubric behind the 0-4 scale, these distributions indicate how the models compare under this one evaluator rather than their absolute quality.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Stacked Bar Chart: Rating Distribution by Model

### Overview

This image displays a stacked bar chart showing the distribution of ratings (0 through 4) for various language models, as evaluated by "claude-3-5-sonnet-20241022". Each bar represents a specific model, and the segments within each bar indicate the proportion of responses that received each rating. The chart is designed to visually compare the rating profiles of different models.

### Components/Axes

* **Title:** "Rating Distribution by Model"

* **Subtitle:** "Evaluator: claude-3-5-sonnet-20241022"

* **Y-axis Title:** "Proportion"

* **Y-axis Scale:** Ranges from 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-axis Labels:** These are the names of the different models being evaluated. They are rotated for readability and include:

* Llama-3.1-70B-Instruct

* Llama-3.1-8B-Instruct

* Llama-3.2-1B-Instruct

* Llama-3.2-3B-Instruct

* Mistral-7B-Instruct

* Mixtral-8x22B-Instruct-v0.1

* Qwen2.5-0.5B-Instruct-v0.1

* Qwen2.5-2B-Instruct

* Qwen2.5-5.3B-Instruct

* Qwen2.5-72B-Instruct

* claude-3-haiku-20240307

* claude-3-sonnet-20240229

* gpt-4o-2024-05-13

* gpt-4o-mini-2024-07-18

* **Legend:** Located in the top-right corner of the chart. It maps colors to rating values:

* Blue: Rating 0

* Green: Rating 1

* Red: Rating 2

* Purple: Rating 3

* Yellow: Rating 4

### Detailed Analysis

The chart displays 14 distinct models. For each model, the bar is segmented from bottom to top, representing the proportion of ratings from 0 to 4.

* **Llama-3.1-70B-Instruct:**

* Rating 0 (Blue): Approximately 0.55 (55%)

* Rating 1 (Green): Approximately 0.15 (15%), cumulative proportion ~0.70

* Rating 2 (Red): Approximately 0.10 (10%), cumulative proportion ~0.80

* Rating 3 (Purple): Approximately 0.05 (5%), cumulative proportion ~0.85

* Rating 4 (Yellow): Approximately 0.15 (15%), cumulative proportion ~1.00

* **Llama-3.1-8B-Instruct:**

* Rating 0 (Blue): Approximately 0.98 (98%)

* Rating 1 (Green): Approximately 0.01 (1%), cumulative proportion ~0.99

* Rating 2 (Red): Negligible, cumulative proportion ~0.99

* Rating 3 (Purple): Negligible, cumulative proportion ~0.99

* Rating 4 (Yellow): Approximately 0.01 (1%), cumulative proportion ~1.00

* **Llama-3.2-1B-Instruct:**

* Rating 0 (Blue): Approximately 0.98 (98%)

* Rating 1 (Green): Approximately 0.01 (1%), cumulative proportion ~0.99

* Rating 2 (Red): Negligible, cumulative proportion ~0.99

* Rating 3 (Purple): Negligible, cumulative proportion ~0.99

* Rating 4 (Yellow): Approximately 0.01 (1%), cumulative proportion ~1.00

* **Llama-3.2-3B-Instruct:**

* Rating 0 (Blue): Approximately 0.98 (98%)

* Rating 1 (Green): Approximately 0.01 (1%), cumulative proportion ~0.99

* Rating 2 (Red): Negligible, cumulative proportion ~0.99

* Rating 3 (Purple): Negligible, cumulative proportion ~0.99

* Rating 4 (Yellow): Approximately 0.01 (1%), cumulative proportion ~1.00

* **Mistral-7B-Instruct:**

* Rating 0 (Blue): Approximately 0.68 (68%)

* Rating 1 (Green): Approximately 0.15 (15%), cumulative proportion ~0.83

* Rating 2 (Red): Approximately 0.08 (8%), cumulative proportion ~0.91

* Rating 3 (Purple): Approximately 0.03 (3%), cumulative proportion ~0.94

* Rating 4 (Yellow): Approximately 0.06 (6%), cumulative proportion ~1.00

* **Mixtral-8x22B-Instruct-v0.1:**

* Rating 0 (Blue): Approximately 0.42 (42%)

* Rating 1 (Green): Approximately 0.25 (25%), cumulative proportion ~0.67

* Rating 2 (Red): Approximately 0.15 (15%), cumulative proportion ~0.82

* Rating 3 (Purple): Approximately 0.08 (8%), cumulative proportion ~0.90

* Rating 4 (Yellow): Approximately 0.10 (10%), cumulative proportion ~1.00

* **Qwen2.5-0.5B-Instruct-v0.1:**

* Rating 0 (Blue): Approximately 0.40 (40%)

* Rating 1 (Green): Approximately 0.25 (25%), cumulative proportion ~0.65

* Rating 2 (Red): Approximately 0.15 (15%), cumulative proportion ~0.80

* Rating 3 (Purple): Approximately 0.08 (8%), cumulative proportion ~0.88

* Rating 4 (Yellow): Approximately 0.12 (12%), cumulative proportion ~1.00

* **Qwen2.5-2B-Instruct:**

* Rating 0 (Blue): Approximately 0.70 (70%)

* Rating 1 (Green): Approximately 0.15 (15%), cumulative proportion ~0.85

* Rating 2 (Red): Approximately 0.05 (5%), cumulative proportion ~0.90

* Rating 3 (Purple): Approximately 0.03 (3%), cumulative proportion ~0.93

* Rating 4 (Yellow): Approximately 0.07 (7%), cumulative proportion ~1.00

* **Qwen2.5-5.3B-Instruct:**

* Rating 0 (Blue): Approximately 0.65 (65%)

* Rating 1 (Green): Approximately 0.15 (15%), cumulative proportion ~0.80

* Rating 2 (Red): Approximately 0.08 (8%), cumulative proportion ~0.88

* Rating 3 (Purple): Approximately 0.05 (5%), cumulative proportion ~0.93

* Rating 4 (Yellow): Approximately 0.07 (7%), cumulative proportion ~1.00

* **Qwen2.5-72B-Instruct:**

* Rating 0 (Blue): Approximately 0.58 (58%)

* Rating 1 (Green): Approximately 0.18 (18%), cumulative proportion ~0.76

* Rating 2 (Red): Approximately 0.10 (10%), cumulative proportion ~0.86

* Rating 3 (Purple): Approximately 0.06 (6%), cumulative proportion ~0.92

* Rating 4 (Yellow): Approximately 0.08 (8%), cumulative proportion ~1.00

* **claude-3-haiku-20240307:**

* Rating 0 (Blue): Approximately 0.75 (75%)

* Rating 1 (Green): Approximately 0.10 (10%), cumulative proportion ~0.85

* Rating 2 (Red): Approximately 0.05 (5%), cumulative proportion ~0.90

* Rating 3 (Purple): Approximately 0.03 (3%), cumulative proportion ~0.93

* Rating 4 (Yellow): Approximately 0.07 (7%), cumulative proportion ~1.00

* **claude-3-sonnet-20240229:**

* Rating 0 (Blue): Approximately 0.60 (60%)

* Rating 1 (Green): Approximately 0.15 (15%), cumulative proportion ~0.75

* Rating 2 (Red): Approximately 0.10 (10%), cumulative proportion ~0.85

* Rating 3 (Purple): Approximately 0.05 (5%), cumulative proportion ~0.90

* Rating 4 (Yellow): Approximately 0.10 (10%), cumulative proportion ~1.00

* **gpt-4o-2024-05-13:**

* Rating 0 (Blue): Approximately 0.58 (58%)

* Rating 1 (Green): Approximately 0.18 (18%), cumulative proportion ~0.76

* Rating 2 (Red): Approximately 0.10 (10%), cumulative proportion ~0.86

* Rating 3 (Purple): Approximately 0.05 (5%), cumulative proportion ~0.91

* Rating 4 (Yellow): Approximately 0.09 (9%), cumulative proportion ~1.00

* **gpt-4o-mini-2024-07-18:**

* Rating 0 (Blue): Approximately 0.55 (55%)

* Rating 1 (Green): Approximately 0.20 (20%), cumulative proportion ~0.75

* Rating 2 (Red): Approximately 0.10 (10%), cumulative proportion ~0.85

* Rating 3 (Purple): Approximately 0.05 (5%), cumulative proportion ~0.90

* Rating 4 (Yellow): Approximately 0.10 (10%), cumulative proportion ~1.00
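The cumulative proportions quoted in the list above are running sums of the per-rating proportions, which is also where each segment's top edge sits in the stacked bar. As a minimal sketch (the function name `cumulative` and the rounding to two decimals are illustrative choices, not taken from the source), the running sums can be reproduced like this:

```python
from itertools import accumulate


def cumulative(proportions, ndigits=2):
    """Running sum of segment heights, i.e. the top edge of each segment."""
    return [round(total, ndigits) for total in accumulate(proportions)]


# Per-rating estimates (ratings 0..4) copied from two entries in the list above.
llama_70b = [0.55, 0.15, 0.10, 0.05, 0.15]
mistral_7b = [0.68, 0.15, 0.08, 0.03, 0.06]

print(cumulative(llama_70b))   # [0.55, 0.7, 0.8, 0.85, 1.0]
print(cumulative(mistral_7b))  # [0.68, 0.83, 0.91, 0.94, 1.0]
```

The last value of each running sum should be 1.0; any other value means the per-rating estimates for that bar do not account for the whole bar.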

### Key Observations

* **Dominance of Rating 0:** Most models show a significant proportion of Rating 0 responses, indicating that the lowest rating is the most frequent outcome for many of them.

* **Smaller Llama Models:** The Llama-3.1-8B, Llama-3.2-1B, and Llama-3.2-3B Instruct models exhibit an extremely high proportion of Rating 0 (around 98%) with almost no spread across the other ratings. This suggests consistently low-rated output or a specific failure mode for these models in this evaluation.

* **Qwen2.5 Models:** The Qwen2.5 models (0.5B, 2B, 5.3B, 72B) show a relatively consistent distribution pattern, with Rating 0 being the largest segment, followed by Rating 1, and then smaller proportions for Ratings 2, 3, and 4. The 72B model has a higher combined proportion of Ratings 1-4 (~0.42) than the 2B (~0.30) and 5.3B (~0.35) entries, though lower than the 0.5B entry (~0.60).

* **Claude Models:** Both Claude models (Haiku and Sonnet) show a substantial proportion of Rating 0, but also a more distributed pattern for higher ratings compared to the Llama small models. Claude-3-haiku-20240307 has a higher proportion of Rating 0 than Claude-3-sonnet-20240229.

* **GPT-4o Models:** The two GPT-4o models (gpt-4o-2024-05-13 and gpt-4o-mini-2024-07-18) have similar rating distributions, with Rating 0 being the largest segment, followed by Rating 1, and then a noticeable presence of Ratings 2 and 4.

* **Mistral and Mixtral Models:** Mistral-7B-Instruct has a higher proportion of Rating 0 (~0.68) than Mixtral-8x22B-Instruct-v0.1 (~0.42). The 8x22B model shows a more even distribution across ratings 0, 1, and 2, with a smaller but present proportion of 3 and 4.

### Interpretation

This stacked bar chart provides a comparative view of how different language models perform when evaluated by a specific instance of Claude 3.5 Sonnet. The prevalence of Rating 0 across most models suggests that the evaluation criteria or the nature of the tasks might be challenging, leading to frequent low scores.

The extreme concentration of Rating 0 for the smaller Llama models (3.1-8B, 3.2-1B, and 3.2-3B) is a significant finding. It implies that these specific versions might be highly prone to errors or not well-suited for the evaluated tasks. In contrast, models like the Qwen2.5 series and the GPT-4o variants show a more nuanced distribution, indicating a broader range of performance rather than a consistent failure.

The presence of higher ratings (1-4) in varying degrees across models suggests that some models are more capable of producing satisfactory or even excellent outputs. The evaluator's specific version ("claude-3-5-sonnet-20241022") is crucial context, as different evaluators or even different versions of the same model could yield different results. The chart allows for a direct comparison of these models' "rating profiles" under identical evaluation conditions. The data suggests that while many models struggle with consistently high ratings, there are clear differences in their ability to avoid the lowest rating and achieve better scores.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Stacked Bar Chart: Rating Distribution by Model

### Overview

The image presents a stacked bar chart visualizing the distribution of ratings (0 to 4) for various language models. The chart is titled "Rating Distribution by Model" and indicates the evaluator used was "claude-3-5-sonnet-20241022". The x-axis represents different language models, and the y-axis represents the proportion of ratings. Each bar is segmented to show the proportion of each rating level for that model.

### Components/Axes

* **Title:** Rating Distribution by Model

* **Evaluator:** claude-3-5-sonnet-20241022

* **X-axis:** Model Name (Categorical)

* Llama-3-70B-Instruct

* Llama-3-8B-Instruct

* Llama-3-2-1B-Instruct

* Llama-3-2-3B-Instruct

* Mistral-7B-Instruct

* Mixtral-8x22B-Instruct-v0.1

* Mixtral-8x7B-Instruct-v0.1

* Qwen2-3.5-0.5B-Instruct

* Qwen2-5-32B-Instruct

* Qwen2-5-3B-Instruct

* Qwen2-5-72B-Instruct

* Qwen2-5-7B-Instruct

* claude-3-haiku-20240307

* claude-3-sonnet-202405-13

* gpt-4o-mini-2024-07-18

* **Y-axis:** Proportion (Scale: 0.0 to 1.0)

* **Legend:** Rating (Categorical)

* 0 (Blue)

* 1 (Orange)

* 2 (Green)

* 3 (Red)

* 4 (Purple)

### Detailed Analysis

The chart displays the proportion of each rating for each model. The bars are stacked, meaning the total height of each bar represents the total proportion (which should ideally sum to 1.0, though minor rounding errors may exist).

Here's a breakdown of the approximate proportions for each model, based on visual estimation and color-matching to the legend:

* **Llama-3-70B-Instruct:** ~0.05 (0), ~0.1 (1), ~0.2 (2), ~0.4 (3), ~0.25 (4)

* **Llama-3-8B-Instruct:** ~0.1 (0), ~0.15 (1), ~0.25 (2), ~0.35 (3), ~0.15 (4)

* **Llama-3-2-1B-Instruct:** ~0.2 (0), ~0.2 (1), ~0.25 (2), ~0.25 (3), ~0.1 (4)

* **Llama-3-2-3B-Instruct:** ~0.15 (0), ~0.15 (1), ~0.25 (2), ~0.3 (3), ~0.15 (4)

* **Mistral-7B-Instruct:** ~0.1 (0), ~0.15 (1), ~0.2 (2), ~0.35 (3), ~0.2 (4)

* **Mixtral-8x22B-Instruct-v0.1:** ~0.05 (0), ~0.1 (1), ~0.2 (2), ~0.4 (3), ~0.25 (4)

* **Mixtral-8x7B-Instruct-v0.1:** ~0.05 (0), ~0.1 (1), ~0.15 (2), ~0.4 (3), ~0.3 (4)

* **Qwen2-3.5-0.5B-Instruct:** ~0.2 (0), ~0.2 (1), ~0.2 (2), ~0.25 (3), ~0.15 (4)

* **Qwen2-5-32B-Instruct:** ~0.05 (0), ~0.1 (1), ~0.15 (2), ~0.4 (3), ~0.3 (4)

* **Qwen2-5-3B-Instruct:** ~0.15 (0), ~0.15 (1), ~0.2 (2), ~0.3 (3), ~0.2 (4)

* **Qwen2-5-72B-Instruct:** ~0.05 (0), ~0.1 (1), ~0.15 (2), ~0.4 (3), ~0.3 (4)

* **Qwen2-5-7B-Instruct:** ~0.1 (0), ~0.15 (1), ~0.2 (2), ~0.35 (3), ~0.2 (4)

* **claude-3-haiku-20240307:** ~0.1 (0), ~0.1 (1), ~0.2 (2), ~0.3 (3), ~0.3 (4)

* **claude-3-sonnet-202405-13:** ~0.05 (0), ~0.05 (1), ~0.1 (2), ~0.3 (3), ~0.5 (4)

* **gpt-4o-mini-2024-07-18:** ~0.05 (0), ~0.05 (1), ~0.1 (2), ~0.2 (3), ~0.6 (4)
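One way to compare the bars in the breakdown above on a single scale is to collapse each distribution into a proportion-weighted mean rating. This is a sketch under the assumption that ratings 0-4 can be treated as evenly spaced numeric scores, which the chart itself does not state; the function name `mean_rating` is illustrative.

```python
def mean_rating(proportions):
    """Proportion-weighted average rating; index i is the rating value.

    Dividing by the total absorbs small rounding drift in the estimates.
    """
    total = sum(proportions)
    return sum(rating * p for rating, p in enumerate(proportions)) / total


# Estimates (ratings 0..4) copied from two entries in the breakdown above.
gpt_4o_mini = [0.05, 0.05, 0.1, 0.2, 0.6]
llama_3_2_1b = [0.2, 0.2, 0.25, 0.25, 0.1]

print(round(mean_rating(gpt_4o_mini), 2))   # 3.25
print(round(mean_rating(llama_3_2_1b), 2))  # 1.85
```

A single mean hides the shape of the distribution, so it complements the stacked bars rather than replacing them.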

### Key Observations

* **gpt-4o-mini-2024-07-18** and **claude-3-sonnet-202405-13** have the highest proportion of rating 4, indicating they received the most positive evaluations.

* **Llama-3-2-1B-Instruct** and **Qwen2-3.5-0.5B-Instruct** have the highest proportion of rating 0, suggesting they received the most negative evaluations.

* Most models have a significant proportion of ratings in the 2 and 3 range, indicating a mixed reception.

* There's a clear trend of larger models (e.g., Llama-3-70B-Instruct, Mixtral-8x7B-Instruct-v0.1) tending to receive higher ratings (more 3s and 4s) compared to smaller models.

### Interpretation

The chart provides a comparative assessment of the performance of different language models, as judged by the "claude-3-5-sonnet-20241022" evaluator. The stacked bar chart effectively visualizes the distribution of ratings, allowing for quick identification of models that consistently receive high or low scores.

The dominance of ratings 3 and 4 for models like gpt-4o-mini-2024-07-18 and claude-3-sonnet-202405-13 suggests these models are generally considered to be of higher quality or more useful. Conversely, the higher proportion of rating 0 for models like Llama-3-2-1B-Instruct and Qwen2-3.5-0.5B-Instruct indicates potential issues with their performance or usability.

The observed trend of larger models receiving higher ratings aligns with the general expectation that model capacity and complexity correlate with performance. However, it's important to note that this is just one evaluator's perspective, and the results may vary depending on the evaluation criteria and the specific tasks used. Further investigation with different evaluators and datasets would be necessary to draw more definitive conclusions. Under this evaluator, the data suggests a hierarchy of model performance, with the larger models generally outperforming the smaller ones.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Stacked Bar Chart: Rating Distribution by Model

### Overview

This image is a stacked bar chart titled "Rating Distribution by Model," with a subtitle indicating the evaluator is "claude-3-5-sonnet-20241022." The chart displays the proportional distribution of five distinct ratings (0 through 4) assigned to the 17 large language models listed below. The data is presented as proportions summing to 1.0 (or 100%) for each model, allowing for a direct comparison of rating distributions across models.

### Components/Axes

* **Chart Title:** "Rating Distribution by Model"

* **Subtitle/Evaluator:** "Evaluator: claude-3-5-sonnet-20241022"

* **Y-Axis:**

* **Label:** "Proportion"

* **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:**

* **Label:** Not explicitly labeled, but contains the names of 17 language models.

* **Model Names (from left to right):**

1. Llama-3.1-70B-Instruct

2. Llama-3.1-8B-Instruct

3. Llama-3.2-1B-Instruct

4. Llama-3.2-3B-Instruct

5. Mistral-7B-Instruct-v0.1

6. Mixtral-8x22B-Instruct-v0.1

7. Mixtral-8x7B-Instruct-v0.1

8. Qwen2.5-0.5B-Instruct

9. Qwen2.5-5B-Instruct

10. Qwen2.5-32B-Instruct

11. Qwen2.5-3B-Instruct

12. Qwen2.5-72B-Instruct

13. Qwen2.5-7B-Instruct

14. claude-3-haiku-20240307

15. claude-3-sonnet-20240229

16. gpt-4o-2024-05-13

17. gpt-4o-mini-2024-07-18

* **Legend:**

* **Title:** "Rating"

* **Position:** Centered on the right side of the chart.

* **Categories & Colors:**

* **0:** Blue

* **1:** Green

* **2:** Red

* **3:** Purple

* **4:** Gold/Yellow

### Detailed Analysis

The chart presents the rating distribution for each model as a vertical bar segmented by color. The height of each colored segment represents the proportion of that rating for the given model. The total height of each bar is 1.0.

**Trend Verification & Data Points (Approximate Proportions):**

The dominant trend across most models is a high proportion of Rating 0 (blue segment at the base). The proportions for other ratings vary significantly.

1. **Llama-3.1-70B-Instruct:** Rating 0 ~0.52, Rating 1 ~0.15, Rating 2 ~0.13, Rating 3 ~0.08, Rating 4 ~0.12.

2. **Llama-3.1-8B-Instruct:** Rating 0 ~0.63, Rating 1 ~0.18, Rating 2 ~0.12, Rating 3 ~0.02, Rating 4 ~0.05.

3. **Llama-3.2-1B-Instruct:** Rating 0 ~0.83, Rating 1 ~0.12, Rating 2 ~0.04, Rating 3 ~0.01, Rating 4 ~0.00.

4. **Llama-3.2-3B-Instruct:** Rating 0 ~0.70, Rating 1 ~0.16, Rating 2 ~0.08, Rating 3 ~0.02, Rating 4 ~0.04.

5. **Mistral-7B-Instruct-v0.1:** Rating 0 ~0.74, Rating 1 ~0.16, Rating 2 ~0.08, Rating 3 ~0.01, Rating 4 ~0.01.

6. **Mixtral-8x22B-Instruct-v0.1:** Rating 0 ~0.38, Rating 1 ~0.16, Rating 2 ~0.17, Rating 3 ~0.10, Rating 4 ~0.19. (Notable for a relatively low Rating 0 proportion).

7. **Mixtral-8x7B-Instruct-v0.1:** Rating 0 ~0.46, Rating 1 ~0.19, Rating 2 ~0.16, Rating 3 ~0.07, Rating 4 ~0.12.

8. **Qwen2.5-0.5B-Instruct:** Rating 0 ~0.86, Rating 1 ~0.09, Rating 2 ~0.04, Rating 3 ~0.01, Rating 4 ~0.00. (Very high Rating 0).

9. **Qwen2.5-5B-Instruct:** Rating 0 ~0.50, Rating 1 ~0.15, Rating 2 ~0.13, Rating 3 ~0.05, Rating 4 ~0.17.

10. **Qwen2.5-32B-Instruct:** Rating 0 ~0.65, Rating 1 ~0.16, Rating 2 ~0.10, Rating 3 ~0.05, Rating 4 ~0.04.

11. **Qwen2.5-3B-Instruct:** Rating 0 ~0.55, Rating 1 ~0.15, Rating 2 ~0.11, Rating 3 ~0.04, Rating 4 ~0.15.

12. **Qwen2.5-72B-Instruct:** Rating 0 ~0.65, Rating 1 ~0.15, Rating 2 ~0.10, Rating 3 ~0.03, Rating 4 ~0.07.

13. **Qwen2.5-7B-Instruct:** Rating 0 ~0.45, Rating 1 ~0.12, Rating 2 ~0.16, Rating 3 ~0.05, Rating 4 ~0.22. (Notable for a high Rating 4 proportion).

14. **claude-3-haiku-20240307:** Rating 0 ~0.70, Rating 1 ~0.12, Rating 2 ~0.10, Rating 3 ~0.02, Rating 4 ~0.06.

15. **claude-3-sonnet-20240229:** Rating 0 ~0.55, Rating 1 ~0.15, Rating 2 ~0.11, Rating 3 ~0.02, Rating 4 ~0.17.

16. **gpt-4o-2024-05-13:** Rating 0 ~0.64, Rating 1 ~0.15, Rating 2 ~0.11, Rating 3 ~0.03, Rating 4 ~0.07.

17. **gpt-4o-mini-2024-07-18:** Rating 0 ~0.64, Rating 1 ~0.15, Rating 2 ~0.11, Rating 3 ~0.03, Rating 4 ~0.07. (Distribution appears identical to gpt-4o-2024-05-13).

### Key Observations

1. **Dominance of Rating 0:** For all 17 listed models, Rating 0 (blue) is the largest single segment, and for 14 of them it makes up 50% or more of the bar. This suggests the evaluator (claude-3-5-sonnet-20241022) frequently assigns the lowest rating.

2. **Notable Outliers:**

* **Mixtral-8x22B-Instruct-v0.1** has the lowest proportion of Rating 0 (~0.38) and the second-highest proportion of Rating 4 (~0.19), behind only Qwen2.5-7B-Instruct, indicating a more favorable evaluation.

* **Qwen2.5-7B-Instruct** has the highest proportion of Rating 4 (~0.22) in the entire chart.

* **Qwen2.5-0.5B-Instruct** and **Llama-3.2-1B-Instruct** have the highest proportions of Rating 0 (~0.86 and ~0.83, respectively), suggesting very poor evaluations.

3. **Model Family Patterns:** Within the Qwen2.5 series, the smallest model (0.5B) performs worst, while the 7B model shows a relatively high Rating 4 proportion. The larger 32B and 72B models have more moderate distributions.

4. **Claude and GPT Models:** The two Claude models and two GPT-4o models show similar, moderate distributions with Rating 0 around 55-70% and a noticeable but not dominant Rating 4 segment.

### Interpretation

This chart provides a comparative snapshot of how a specific evaluator (likely another AI model, claude-3-5-sonnet) rates the outputs or performance of various other language models. The data suggests the evaluator has a strong bias toward assigning low ratings (0), which could indicate a strict evaluation rubric, a challenging task, or a systematic difference in capability between the evaluator and the models being evaluated.

The variation between models is meaningful. The relatively better performance of Mixtral-8x22B and Qwen2.5-7B might indicate these models are better aligned with the evaluator's criteria or possess superior capabilities for the specific task being rated. Conversely, the very low ratings for the smallest models (Qwen2.5-0.5B, Llama-3.2-1B) are expected, highlighting a clear performance gap based on scale.

The identical distributions for `gpt-4o-2024-05-13` and `gpt-4o-mini-2024-07-18` are striking and could imply one of two things: either the models performed identically on the evaluation task, or there may be a data plotting artifact where the values for one were duplicated. Without raw data, this remains an observation of visual identity.
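Whether two bars are "identical" can be stated numerically rather than visually. The sketch below uses total variation distance (half the sum of absolute differences between two distributions), which is a technique chosen here for illustration and not something the original analysis used; the function name `total_variation` is hypothetical, and the values are the approximate proportions listed earlier in this analysis.

```python
def total_variation(p, q):
    """Half the L1 distance between two rating distributions.

    0.0 means the bars are identical; 1.0 means they share no mass.
    """
    if len(p) != len(q):
        raise ValueError("distributions must cover the same ratings")
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


# Approximate proportions (ratings 0..4) from the data points listed above.
gpt_4o = [0.64, 0.15, 0.11, 0.03, 0.07]
gpt_4o_mini = [0.64, 0.15, 0.11, 0.03, 0.07]
mixtral_8x22b = [0.38, 0.16, 0.17, 0.10, 0.19]
qwen_0_5b = [0.86, 0.09, 0.04, 0.01, 0.00]

print(total_variation(gpt_4o, gpt_4o_mini))                 # 0.0
print(round(total_variation(mixtral_8x22b, qwen_0_5b), 2))  # 0.48
```

A distance of 0.0 on visually estimated values only confirms that the estimates match; it cannot distinguish genuinely identical results from a plotting artifact.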

**Peircean Investigation:** The chart is an *index* of the evaluator's judgment. The high frequency of Rating 0 is a sign pointing to a harsh or demanding evaluation context. The variation between models is a sign pointing to real differences in model capability or alignment as perceived by this specific evaluator. To fully understand the "why," one would need the *icon* (the actual prompts and responses) and the *symbol* (the detailed rating rubric used by claude-3-5-sonnet). The chart alone shows the "what" (the distribution) but not the underlying causes.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Rating Distribution by Model

### Overview

The chart displays the distribution of ratings (0-4) across multiple AI models evaluated by "claude-3-5-sonnet-20241022". Each bar represents a model, with stacked segments showing the proportion of ratings received. The y-axis measures proportion (0.0 to 1.0), while the x-axis lists model names.

### Components/Axes

- **X-axis (Models)**:

- Llama-3-1-70B-Instruct

- Llama-3-1-8B-Instruct

- Llama-3-2-3B-Instruct

- Llama-3-2-8B-Instruct

- Mistral-7B-Instruct-v0.1

- Mixtral-8x7B-Instruct-v0.1

- Qwen2-5-5B-Instruct

- Qwen2-5-72B-Instruct

- Claude-3-haiku-20240307

- Claude-3-sonnet-20240229

- GPT-4o-mini-2024-05-13

- GPT-4o-mini-2024-07-18

- **Y-axis (Proportion)**:

- Scale: 0.0 to 1.0 in increments of 0.2

- Labels: "Proportion"

- **Legend (Right)**:

- **Blue**: Rating 0

- **Green**: Rating 1

- **Red**: Rating 2

- **Purple**: Rating 3

- **Yellow**: Rating 4

### Detailed Analysis

- **Rating 0 (Blue)**:

- Dominates most bars (e.g., Llama-3-1-70B-Instruct: ~0.5, Mistral-7B-Instruct-v0.1: ~0.75).

- Claude-3-sonnet-20240229 has the lowest proportion (~0.45).

- **Rating 1 (Green)**:

- Significant in Mistral-7B-Instruct-v0.1 (~0.2) and Claude-3-haiku-20240307 (~0.15).

- Minimal in Qwen2-5-5B-Instruct (~0.05).

- **Rating 2 (Red)**:

- Notable in Llama-3-1-8B-Instruct (~0.15) and Qwen2-5-72B-Instruct (~0.1).

- Absent in Mixtral-8x7B-Instruct-v0.1.

- **Rating 3 (Purple)**:

- Small segments in Llama-3-1-70B-Instruct (~0.05) and Qwen2-5-72B-Instruct (~0.05).

- None in Mixtral-8x7B-Instruct-v0.1.

- **Rating 4 (Yellow)**:

- Consistently minimal across all models (~0.05-0.1).

- Highest in Qwen2-5-72B-Instruct (~0.1).

### Key Observations

1. **Rating 0 Dominance**: Rating 0 is the most common rating for most models, suggesting widespread underperformance or strict evaluation criteria.

2. **Variability in Rating 1**: Mistral and Claude models show moderate proportions of this rating, indicating mixed performance.

3. **Rare High Ratings**: Ratings 3 and 4 are nearly absent, with only Qwen2-5-72B-Instruct showing slight improvement.

4. **Model-Specific Trends**:

- Llama-3-1-70B-Instruct has a Rating 0 proportion of ~0.5, lower than that of Mistral-7B-Instruct-v0.1 (~0.75).

- GPT-4o-mini-2024-07-18 shows the most balanced distribution (Rating 0: ~0.6, Rating 1: ~0.2).

### Interpretation

The data suggests that most evaluated models struggle to meet expectations, with Rating 0 being the most common outcome. The presence of Rating 1 in some models (e.g., Mistral, Claude) indicates partial success, but no model achieves high ratings consistently. The evaluator "claude-3-5-sonnet-20241022" likely applied rigorous criteria, as high ratings (3-4) are rare. The slight improvement in Qwen2-5-72B-Instruct and GPT-4o-mini-2024-07-18 may reflect architectural or training advantages.

**Note**: Proportions are approximate due to visual estimation from the chart.
