
## Attention Mechanism Visualization: Word Alignment

### Overview

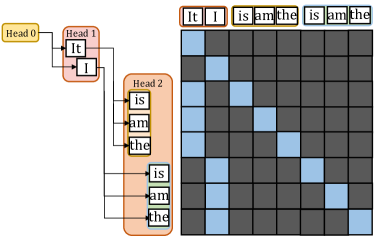

The image depicts a visualization of an attention mechanism, likely from a neural machine translation or similar sequence-to-sequence model. It shows the alignment weights between words in a source sentence ("It I") and a target sentence ("is am the is am the"). The visualization uses a heatmap to represent the attention weights, with darker shades indicating stronger alignment.

### Components/Axes

* **Source Sentence Heads:** Labeled "Head 0", "Head 1". These represent different attention heads focusing on the source sentence.

* **Target Sentence Heads:** Labeled "Head 2". This represents the attention head focusing on the target sentence.

* **Source Words:** "It", "I" are the words in the source sentence.

* **Target Words:** "is", "am", "the" are the words in the target sentence, repeated.

* **Heatmap:** A grid representing the attention weights between each source word and each target word. The color intensity indicates the strength of the attention.

* **Color Scale:** The heatmap uses a gradient from light blue (low attention) to dark gray (high attention).
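As a rough illustration of how a color scale like this could map a weight to a shade, here is a minimal sketch using linear interpolation. The function name `weight_to_rgb` and both RGB endpoints are assumptions chosen for illustration; the image does not specify exact colors.

```python
# Hypothetical endpoints: the exact colors in the image are not known.
LIGHT_BLUE = (173, 216, 230)  # assumed color for weight 0.0
DARK_GRAY = (64, 64, 64)      # assumed color for weight 1.0


def weight_to_rgb(weight, low=LIGHT_BLUE, high=DARK_GRAY):
    """Linearly interpolate an RGB color for an attention weight in [0, 1]."""
    w = min(max(weight, 0.0), 1.0)  # clamp out-of-range weights
    return tuple(round(lo + (hi - lo) * w) for lo, hi in zip(low, high))


print(weight_to_rgb(0.0))
print(weight_to_rgb(1.0))
```

Weights outside the range 0 to 1 are clamped, so the result always lies between the two endpoint colors.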

### Detailed Analysis

The heatmap grid is approximately 8x6 cells. Within it, the source words "It" and "I" are each aligned against six target positions: "is", "am", and "the", repeated twice.

Here's a breakdown of the attention weights, based on color intensity (approximate values):

* **"It" alignment:**

    * "is": ~0.3 (light blue)

    * "am": ~0.2 (very light blue)

    * "the": ~0.1 (almost white)

    * "is": ~0.2 (very light blue)

    * "am": ~0.2 (very light blue)

    * "the": ~0.1 (almost white)

* **"I" alignment:**

    * "is": ~0.5 (medium blue)

    * "am": ~0.7 (dark blue)

    * "the": ~0.4 (light-medium blue)

    * "is": ~0.4 (light-medium blue)

    * "am": ~0.6 (medium-dark blue)

    * "the": ~0.3 (light blue)
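The approximate values above can be collected into a small table and summarized per source word. This is only a sketch for checking the estimates against each other: the names `TARGET`, `WEIGHTS`, and `summarize` are illustrative, and the numbers are the color-based estimates listed above, not values read from the model.

```python
# Target positions in the order they are listed above.
TARGET = ["is", "am", "the", "is", "am", "the"]

# Approximate weights estimated from color intensity (not exact model output).
WEIGHTS = {
    "It": [0.3, 0.2, 0.1, 0.2, 0.2, 0.1],
    "I": [0.5, 0.7, 0.4, 0.4, 0.6, 0.3],
}


def summarize(weights, target):
    """Return the mean weight and the strongest target position per source word."""
    summary = {}
    for word, row in weights.items():
        best = max(range(len(row)), key=row.__getitem__)  # first index of the max
        summary[word] = {
            "mean": sum(row) / len(row),
            "strongest": (best, target[best], row[best]),
        }
    return summary


SUMMARY = summarize(WEIGHTS, TARGET)
for word, stats in SUMMARY.items():
    print(word, round(stats["mean"], 2), stats["strongest"])
```

Neither row sums to 1 (roughly 1.1 for "It" and 2.9 for "I"), so these estimates are better read as relative intensities than as normalized probabilities.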

The connections from "Head 0" to "Head 1" and "Head 2" are represented by lines.

### Key Observations

* The word "I" in the source sentence has its strongest attention weights on "am" (~0.7 and ~0.6), followed by "is" (~0.5 and ~0.4); "the" receives the weakest attention from "I" (~0.4 and ~0.3).

* The word "It" in the source sentence has relatively weak attention weights across all target words.

* The target sentence repeats the sequence "is am the", suggesting a potential pattern or structure in the translation process.

* The attention weights are not uniform, indicating that the model is selectively focusing on different parts of the target sentence when processing each source word.

### Interpretation

This visualization demonstrates how the attention mechanism allows the model to focus on relevant parts of the input sequence when generating the output sequence. The heatmap shows which source words are most strongly associated with each target word. The varying attention weights suggest that the model is learning to capture complex relationships between the source and target languages.
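For readers unfamiliar with where such weights come from, the following is a minimal sketch of scaled dot-product attention, one common way to compute them. It is an assumption that the visualized model works this way; the image does not say. The vectors and the name `attention_weights` are hypothetical.

```python
import math


def softmax(scores):
    """Convert raw scores to non-negative weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over a list of keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Hypothetical 2-dimensional vectors, chosen only for illustration.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
weights = attention_weights(query, keys)
print([round(w, 3) for w in weights])
```

The key most similar to the query receives the largest weight, which is the kind of selective focus a heatmap like this one is meant to show.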

The repetition in the target sentence ("is am the is am the") might indicate a simple translation task or a specific pattern in the training data. The relatively weak attention from "It" could suggest that this word is less important in the translation context or that the model is struggling to find a strong alignment for it.

The use of multiple attention heads ("Head 0", "Head 1", "Head 2") allows the model to capture different aspects of the relationship between the source and target sentences. Each head can learn to focus on different patterns or features, which can make the resulting translation more robust and accurate.
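The idea that different heads can attend to different positions can be sketched as follows. This is a simplified illustration, not the visualized model's implementation: the learned per-head projections are omitted, each head simply sees its own slice of the vectors, and the name `head_weights` and all values are hypothetical.

```python
import math


def head_weights(query, keys, num_heads):
    """Split vectors into equal slices, one per head, and return softmax weights per head."""
    d_head = len(query) // num_heads
    per_head = []
    for h in range(num_heads):
        lo, hi = h * d_head, (h + 1) * d_head
        # Scaled dot product between this head's slice of the query and each key.
        scores = [
            sum(q * k for q, k in zip(query[lo:hi], key[lo:hi])) / math.sqrt(d_head)
            for key in keys
        ]
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        per_head.append([e / total for e in exps])
    return per_head


# Hypothetical 4-dimensional vectors split across 2 heads.
query = [1.0, 0.0, 0.0, 1.0]
keys = [[2.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 2.0]]
HEADS = head_weights(query, keys, num_heads=2)
for index, row in enumerate(HEADS):
    print("head", index, [round(w, 3) for w in row])
```

With these inputs the first head puts more weight on the first key and the second head on the second key, showing how two heads over the same sequence can produce different alignments.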