

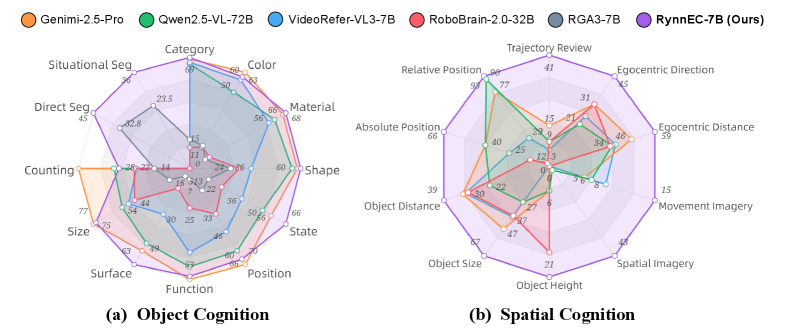

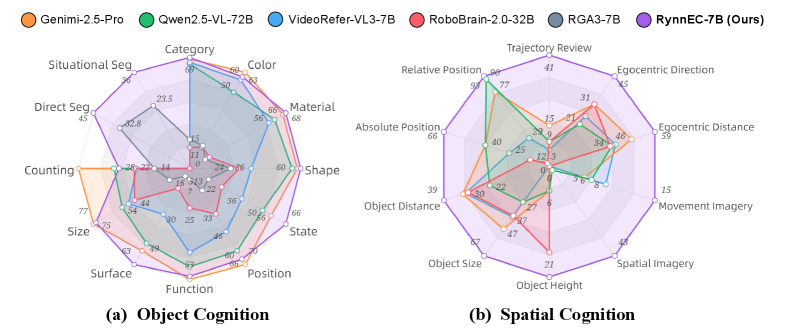

## [Composite Radar Charts]: Comparative Model Performance in Object and Spatial Cognition

### Overview

The image displays two radar charts (also known as spider charts) comparing the performance of six different AI models across two distinct cognitive domains: **Object Cognition** and **Spatial Cognition**. The charts are labeled (a) and (b) respectively. A shared legend at the top identifies each model by name and color. The charts plot model performance scores (presumably percentages or normalized scores) across multiple categorical axes radiating from a central point.

### Components/Axes

**Legend (Top Center):**

* **Gemini-2.5-Pro** (Orange)

* **Qwen2.5-VL-72B** (Green)

* **VideoRefer-VL3-7B** (Blue)

* **RoboBrain-2.0-32B** (Red)

* **RGA3-7B** (Gray)

* **RynnEC-7B (Ours)** (Purple)

**Chart (a) - Object Cognition (Left):**

* **Axes (12 categories, clockwise from top):** Category, Color, Material, Shape, State, Position, Function, Surface, Size, Counting, Direct Seg., Situational Seg.

* **Scale:** Concentric polygons represent score levels. The outermost ring is labeled "100" at the top (Category axis). Inner rings are labeled at intervals: 80, 60, 40, 20, with the center representing 0.

**Chart (b) - Spatial Cognition (Right):**

* **Axes (10 categories, clockwise from top):** Trajectory Review, Egocentric Direction, Egocentric Distance, Movement Imagery, Spatial Imagery, Object Height, Object Size, Object Distance, Absolute Position, Relative Position.

* **Scale:** Identical concentric polygon scale as chart (a), with the outermost ring labeled "100" at the top (Trajectory Review axis).
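The "clockwise from top" layout described above can be sketched as a small geometry helper. This is a minimal illustration, not the code that produced the figure: the function name `radar_vertices` and the all-100 score list are assumptions introduced here.

```python
import math

def radar_vertices(scores, max_score=100.0):
    """Return (x, y) vertices for scores plotted clockwise starting from the top axis."""
    n = len(scores)
    points = []
    for i, score in enumerate(scores):
        # Axis 0 points straight up (90 degrees); later axes step clockwise.
        angle = math.pi / 2 - 2 * math.pi * i / n
        radius = score / max_score  # 0 at the center, 1 on the outermost ring
        points.append((radius * math.cos(angle), radius * math.sin(angle)))
    return points

object_axes = ["Category", "Color", "Material", "Shape", "State", "Position",
               "Function", "Surface", "Size", "Counting", "Direct Seg.",
               "Situational Seg."]

# Hypothetical scores: a model scoring 100 on every axis traces the outermost ring.
outer_ring = radar_vertices([100] * len(object_axes))
```

With twelve equally spaced axes, each axis sits 30 degrees from its neighbors, so the fourth axis (Shape) points directly to the right.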

### Detailed Analysis

**Chart (a): Object Cognition**

* **Trend Verification & Data Points:**

* **RynnEC-7B (Purple):** Forms the largest, most expansive polygon, indicating the highest overall performance. It scores near or at 100 on **Category**, **Color**, and **Material**. It maintains high scores (>80) on **Shape**, **State**, **Position**, and **Function**. Its lowest scores appear to be on **Counting** (~77) and **Direct Seg.** (~45).

* **Gemini-2.5-Pro (Orange):** Shows a strong, broad performance profile, second only to RynnEC-7B. It excels in **Color** (~93), **Material** (~88), and **Shape** (~80). It has a notable dip in **Direct Seg.** (~45).

* **Qwen2.5-VL-72B (Green):** Performs well in **Color** (~83) and **Material** (~68) but shows a more contracted shape, with lower scores in **Counting** (~54), **Size** (~54), and **Surface** (~60).

* **VideoRefer-VL3-7B (Blue):** Has a distinct profile with a very high score in **Category** (~90) but lower scores in many other areas like **Material** (~38), **Shape** (~60), and **Function** (~46).

* **RoboBrain-2.0-32B (Red):** Exhibits the most contracted polygon, indicating the lowest overall performance in this set. Its highest score is in **Category** (~60), with many scores below 40 (e.g., **Material** ~22, **Shape** ~36, **Function** ~25).

* **RGA3-7B (Gray):** Shows a mid-range, somewhat irregular performance. It has a relatively high score in **Category** (~70) but dips significantly in **Direct Seg.** (~28) and **Situational Seg.** (~32).
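Reading polygon size as "overall performance" deserves some care, because the enclosed area depends on how the axes are ordered as well as on the scores. The sketch below computes the area of a radar polygon with equally spaced axes; the function name `radar_area` and both score lists are hypothetical and are not read from the figure.

```python
import math

def radar_area(scores):
    """Area of the radar polygon for equally spaced axes (scores used as radii)."""
    n = len(scores)
    wedge = math.sin(2 * math.pi / n)
    # Sum the triangles formed by the center and each pair of neighboring vertices.
    return 0.5 * wedge * sum(scores[i] * scores[(i + 1) % n] for i in range(n))

# Hypothetical scores on six axes: same values, different axis order.
alternating = [90, 30, 90, 30, 90, 30]
grouped = [90, 90, 90, 30, 30, 30]

print(radar_area(alternating))
print(radar_area(grouped))
```

Both lists have the same mean score, yet the grouped ordering encloses a larger area, so polygon size is best treated as a visual cue rather than a ranking metric.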

**Chart (b): Spatial Cognition**

* **Trend Verification & Data Points:**

* **RynnEC-7B (Purple):** Again demonstrates the strongest overall performance, forming the outermost polygon on most axes. It scores high on **Trajectory Review** (~90) and **Relative Position** (~90), and moderately on **Absolute Position** (~66). Its lowest score is on **Object Height** (~21).

* **Qwen2.5-VL-72B (Green):** Shows a very strong and specific performance spike, achieving the highest score on the chart in **Relative Position** (~97). It also scores well in **Absolute Position** (~60) and **Object Distance** (~67). Its performance is more variable, with lower scores in **Egocentric Distance** (~15) and **Movement Imagery** (~15).

* **Gemini-2.5-Pro (Orange):** Has a balanced, mid-to-high range profile. It performs well in **Trajectory Review** (~77) and **Relative Position** (~77), with a lower score in **Object Size** (~47).

* **RoboBrain-2.0-32B (Red):** Shows a more limited spatial capability profile. Its highest score is in **Egocentric Direction** (~31), with many scores in the 10-20 range (e.g., **Egocentric Distance** ~8, **Movement Imagery** ~15).

* **VideoRefer-VL3-7B (Blue):** Has a narrow profile, with a relatively high score in **Egocentric Direction** (~41) but low scores in **Object Height** (~21) and **Spatial Imagery** (~15).

* **RGA3-7B (Gray):** Displays a mid-range, somewhat flat profile, with most scores clustered between 20 and 40. Its highest point appears to be **Object Size** (~40).

### Key Observations

1. **Dominant Model:** **RynnEC-7B (Ours)** is the clear top performer in both cognitive domains, forming the outermost boundary on most axes of both charts.

2. **Specialized Strengths:** **Qwen2.5-VL-72B** exhibits a remarkable, specialized peak in **Relative Position** (Spatial Cognition), outperforming even the top model in that single category.

3. **Domain Variance:** Model rankings are not consistent across domains. For example, **VideoRefer-VL3-7B** is relatively strong in **Category** (Object) but weaker in many spatial tasks.

4. **Common Weakness:** **Direct Segmentation** (in Object Cognition) appears to be a challenging task for all models: the highest score reported above is only ~45, and RGA3-7B falls to ~28.

5. **Performance Clustering:** In Spatial Cognition, scores are generally more dispersed and lower on average compared to Object Cognition, suggesting this may be a more difficult domain for the evaluated models.
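Observations 1 and 2 can be checked against the approximate values quoted in the Detailed Analysis. The sketch below uses only the two spatial axes where both RynnEC-7B and Qwen2.5-VL-72B have a quoted score; the values are visual estimates from the chart, and the helper name `axis_leaders` is an assumption introduced here.

```python
# Approximate chart (b) scores quoted above (visual estimates, not exact results).
spatial_scores = {
    "Relative Position": {"RynnEC-7B": 90, "Qwen2.5-VL-72B": 97},
    "Absolute Position": {"RynnEC-7B": 66, "Qwen2.5-VL-72B": 60},
}

def axis_leaders(scores_by_axis):
    """Map each axis to the model with the highest quoted score on that axis."""
    return {axis: max(models, key=models.get)
            for axis, models in scores_by_axis.items()}

leaders = axis_leaders(spatial_scores)
for axis, model in leaders.items():
    print(f"{axis}: {model}")
```

On these estimates, Qwen2.5-VL-72B leads on Relative Position while RynnEC-7B leads on Absolute Position, which matches the "specialized peak" reading in observation 2.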

### Interpretation

This comparative analysis suggests that the **RynnEC-7B** model has a more general and robust grasp of both object properties and spatial relationships than the other models tested. The charts alone do not show why; its architecture or training may provide a better foundation for multimodal reasoning.

The data highlights that model capability is not monolithic. **Qwen2.5-VL-72B's** exceptional score in **Relative Position** may reflect a specialized mechanism or a training-data emphasis that favors object-to-object spatial relations, even though its overall spatial reasoning is less comprehensive.

The universally lower scores in tasks like **Direct Segmentation** and **Object Height** point to specific, persistent challenges in computer vision and spatial understanding that current models struggle to solve effectively. The charts effectively visualize the trade-offs between generalist performance (RynnEC-7B) and specialist peaks (Qwen2.5-VL-72B) within the current landscape of multimodal AI models.

**Language Note:** The model names in the legend contain alphanumeric identifiers (e.g., "2.5-Pro", "72B"). The term "(Ours)" next to RynnEC-7B indicates it is the model proposed by the authors of the study from which this image originates. All other text in the image is in English.
