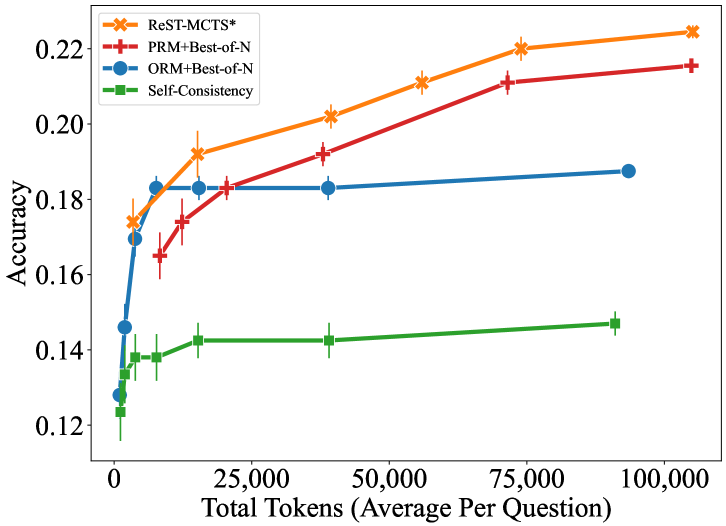

## Line Chart: Accuracy vs. Total Tokens for Different Reasoning Methods

### Overview

This line chart depicts the relationship between accuracy and the total number of tokens used by four different reasoning methods: ReST-MCTS*, PRM+Best-of-N, ORM+Best-of-N, and Self-Consistency. The x-axis represents the total tokens (average per question), and the y-axis represents the accuracy. Error bars are present for each data series, indicating the variability in accuracy.

### Components/Axes

* **X-axis Title:** "Total Tokens (Average Per Question)"

    * Scale: 0 to 100,000 tokens

    * Markers: 0, 25,000, 50,000, 75,000, 100,000

* **Y-axis Title:** "Accuracy"

    * Scale: 0.12 to 0.22

    * Markers: 0.12, 0.14, 0.16, 0.18, 0.20, 0.22

* **Legend:** Located at the top-right of the chart.

    * ReST-MCTS* (Orange line with asterisk marker)

    * PRM+Best-of-N (Red line with plus marker)

    * ORM+Best-of-N (Blue line with circle marker)

    * Self-Consistency (Green line with square marker)

### Detailed Analysis

* **ReST-MCTS\* (Orange):** The line slopes upward consistently from approximately 0.17 at 0 tokens to approximately 0.22 at 100,000 tokens.

    * (0, 0.17) ± ~0.01

    * (25,000, 0.19) ± ~0.01

    * (50,000, 0.21) ± ~0.01

    * (75,000, 0.215) ± ~0.01

    * (100,000, 0.22) ± ~0.01

* **PRM+Best-of-N (Red):** The line starts at approximately 0.16 at 0 tokens, rises sharply to approximately 0.21 at 25,000 tokens, and then plateaus, reaching approximately 0.215 at 100,000 tokens.

    * (0, 0.16) ± ~0.01

    * (25,000, 0.21) ± ~0.01

    * (50,000, 0.21) ± ~0.01

    * (75,000, 0.21) ± ~0.01

    * (100,000, 0.215) ± ~0.01

* **ORM+Best-of-N (Blue):** The line begins at approximately 0.18 at 0 tokens, rises to approximately 0.19 at 25,000 tokens, and then remains relatively flat, ending at approximately 0.19 at 100,000 tokens.

    * (0, 0.18) ± ~0.01

    * (25,000, 0.19) ± ~0.01

    * (50,000, 0.19) ± ~0.01

    * (75,000, 0.19) ± ~0.01

    * (100,000, 0.19) ± ~0.01

* **Self-Consistency (Green):** The line starts at approximately 0.13 at 0 tokens, rises slightly to approximately 0.145 at 25,000 tokens, and then remains relatively constant, ending at approximately 0.15 at 100,000 tokens.

    * (0, 0.13) ± ~0.01

    * (25,000, 0.145) ± ~0.01

    * (50,000, 0.14) ± ~0.01

    * (75,000, 0.14) ± ~0.01

    * (100,000, 0.15) ± ~0.01
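To make the per-method trends concrete, the readings above can be turned into step-by-step accuracy gains. The following is a minimal Python sketch: the `ACCURACY` table only transcribes the approximate values listed above (chart estimates, not exact experimental results), and the helper names `stepwise_gains` and `total_gain` are illustrative.

```python
# Approximate readings transcribed from the lists above. They are visual
# estimates from the chart, not exact experimental values.
TOKENS = [0, 25_000, 50_000, 75_000, 100_000]
ACCURACY = {
    "ReST-MCTS*": [0.17, 0.19, 0.21, 0.215, 0.22],
    "PRM+Best-of-N": [0.16, 0.21, 0.21, 0.21, 0.215],
    "ORM+Best-of-N": [0.18, 0.19, 0.19, 0.19, 0.19],
    "Self-Consistency": [0.13, 0.145, 0.14, 0.14, 0.15],
}


def stepwise_gains(values):
    """Accuracy change between consecutive token budgets, rounded to 3 places."""
    return [round(later - earlier, 3) for earlier, later in zip(values, values[1:])]


def total_gain(values):
    """Accuracy change from the first to the last token budget."""
    return round(values[-1] - values[0], 3)


if __name__ == "__main__":
    for method, values in ACCURACY.items():
        print(f"{method:17s} total={total_gain(values):+.3f} "
              f"steps={stepwise_gains(values)}")
```

Read this way, PRM+Best-of-N gets nearly all of its gain in the first 25,000-token step, while ReST-MCTS* spreads its gain over every step.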

### Key Observations

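* ReST-MCTS* reaches the highest accuracy at the largest token budgets (approximately 0.215 to 0.22 at 75,000 to 100,000 tokens). By the approximate readings listed under Detailed Analysis, however, it does not lead everywhere: ORM+Best-of-N is higher at the leftmost point, and PRM+Best-of-N is higher at 25,000 tokens.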

* PRM+Best-of-N shows a rapid initial increase in accuracy, but then plateaus.

* ORM+Best-of-N exhibits a modest increase in accuracy, followed by a plateau.

* Self-Consistency consistently has the lowest accuracy and shows minimal improvement with increasing tokens.

* The error bars suggest that the variability in accuracy is relatively consistent across different token counts for each method.
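Ranking claims like these can be cross-checked against the approximate readings listed under Detailed Analysis. The sketch below is a small Python check under that assumption. The `ACCURACY` values are chart estimates copied from those lists, and `leaders_at` is a hypothetical helper name.

```python
# Approximate readings from the Detailed Analysis lists (chart estimates).
TOKENS = [0, 25_000, 50_000, 75_000, 100_000]
ACCURACY = {
    "ReST-MCTS*": [0.17, 0.19, 0.21, 0.215, 0.22],
    "PRM+Best-of-N": [0.16, 0.21, 0.21, 0.21, 0.215],
    "ORM+Best-of-N": [0.18, 0.19, 0.19, 0.19, 0.19],
    "Self-Consistency": [0.13, 0.145, 0.14, 0.14, 0.15],
}


def leaders_at(index, tol=1e-9):
    """Return every method tied for the top accuracy at one token budget."""
    best = max(values[index] for values in ACCURACY.values())
    return sorted(
        method
        for method, values in ACCURACY.items()
        if abs(values[index] - best) <= tol
    )


def trailer_at(index):
    """Return the method with the lowest accuracy at one token budget."""
    return min(ACCURACY, key=lambda method: ACCURACY[method][index])


if __name__ == "__main__":
    for i, budget in enumerate(TOKENS):
        print(f"{budget:>7,} tokens: top={', '.join(leaders_at(i))}; "
              f"lowest={trailer_at(i)}")
```

With the readings as transcribed, the top position changes hands across budgets, while Self-Consistency is lowest at every budget. Because the values are eyeballed from the chart and the differences are within the stated error bars, the ordering at low budgets should be treated as tentative.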

### Interpretation

The data suggests that spending more tokens generally improves accuracy, but the rate of improvement differs by method. ReST-MCTS* keeps improving across the whole range (from roughly 0.17 to 0.22), which suggests that it makes effective use of additional compute. PRM+Best-of-N gains almost all of its improvement by 25,000 tokens and shows diminishing returns afterwards. ORM+Best-of-N and Self-Consistency improve only slightly, so they may be limited by factors other than token budget, such as the underlying reasoning algorithm. The plateaus of PRM+Best-of-N and ORM+Best-of-N could indicate a saturation point beyond which extra tokens add little.

The consistently lower accuracy of Self-Consistency suggests that it is less effective than the other methods for this task. The error bars (about ±0.01) indicate the uncertainty of each accuracy estimate. They are small relative to the gap between Self-Consistency and the other methods, but comparable to or larger than the roughly 0.005 gap between ReST-MCTS* and PRM+Best-of-N at high token counts, so the chart alone does not establish that this smaller difference is statistically significant. With that caveat, the data could inform the choice of reasoning method and the allocation of compute for question-answering tasks.
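One rough way to judge which differences the error bars can support is to check whether two methods' intervals overlap at a given budget. The sketch below does this for the 100,000-token readings. It assumes a symmetric half-width of 0.01 for every point, as estimated from the chart, and it does not know what the bars represent (standard deviation, standard error, or a confidence interval), so interval overlap is only a heuristic and not a formal significance test. The names `FINAL` and `intervals_overlap` are illustrative.

```python
from itertools import combinations

# Assumed symmetric error-bar half-width, estimated from the chart.
HALF_WIDTH = 0.01

# Approximate accuracy readings at 100,000 tokens (chart estimates).
FINAL = {
    "ReST-MCTS*": 0.22,
    "PRM+Best-of-N": 0.215,
    "ORM+Best-of-N": 0.19,
    "Self-Consistency": 0.15,
}


def intervals_overlap(mean_a, mean_b, half_width=HALF_WIDTH):
    """True when [mean - hw, mean + hw] for the two readings intersect."""
    return abs(mean_a - mean_b) <= 2 * half_width


if __name__ == "__main__":
    for (name_a, acc_a), (name_b, acc_b) in combinations(FINAL.items(), 2):
        verdict = "overlap" if intervals_overlap(acc_a, acc_b) else "separated"
        print(f"{name_a} vs {name_b}: {verdict}")
```

Under these assumptions, only the ReST-MCTS* and PRM+Best-of-N intervals overlap at 100,000 tokens. Every other pair is separated by more than the combined bar width.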