## Diagram: Ethical Reinforcement Learning Loop

### Overview

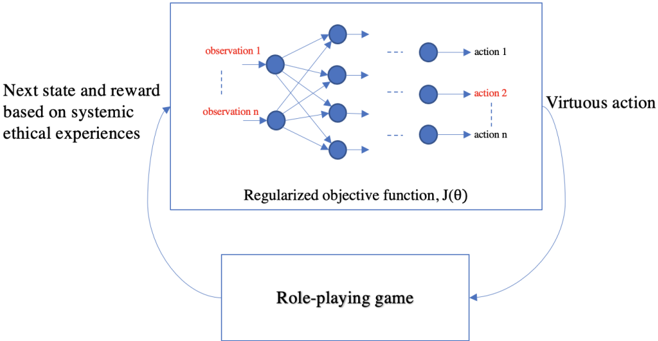

The figure depicts an ethical reinforcement learning loop. It shows how a role-playing game interacts with a regularized objective function, J(θ), to produce virtuous actions, which in turn determine the next state and reward within the game.

### Components

* **Top-Left:** "Next state and reward based on systemic ethical experiences"

* **Top-Center:** A rectangular box labeled "Regularized objective function, J(θ)". Inside this box are:

    * **Left:** A column of nodes labeled "observation 1" to "observation n" (in red).

    * **Center:** A network of connections between the observation nodes and the action nodes.

    * **Right:** A column of nodes labeled "action 1" to "action n" (in red).

* **Top-Right:** "Virtuous action"

* **Bottom-Center:** A rectangular box labeled "Role-playing game"

* **Arrows:** Two curved arrows connect the components:

    * One from "Next state and reward based on systemic ethical experiences" to the "Regularized objective function, J(θ)".

    * One from "Virtuous action" to the "Role-playing game".

### Detailed Analysis

The diagram illustrates a closed-loop system. The "Role-playing game" provides experiences that produce the "Next state and reward based on systemic ethical experiences." This information feeds into the "Regularized objective function, J(θ)", which processes the observations and selects an action. The selected action, labeled "Virtuous action", is passed back to the "Role-playing game", closing the feedback loop.
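
The data flow of this loop can be sketched in a few lines of Python. This is a minimal, hypothetical illustration: the `RolePlayingGame` class, its reward rule (action 0 is treated as the "virtuous" choice and earns +1, anything else earns -1), and the fixed policy are all assumptions made for the example, not details taken from the diagram. No learning happens here; the sketch only shows how state, reward, and action circulate.

```python
import random


class RolePlayingGame:
    """Hypothetical toy stand-in for the diagram's role-playing game.

    The state is an integer 'situation' index. Action 0 is assumed to be the
    virtuous choice (+1 reward); any other action is penalized (-1 reward).
    """

    def __init__(self, n_situations=3, seed=0):
        self.n_situations = n_situations
        self.rng = random.Random(seed)
        self.state = 0

    def reset(self):
        """Start a new episode in a random situation."""
        self.state = self.rng.randrange(self.n_situations)
        return self.state

    def step(self, action):
        """Apply an action; return (next state, reward)."""
        reward = 1.0 if action == 0 else -1.0
        self.state = self.rng.randrange(self.n_situations)
        return self.state, reward


def run_loop(env, policy, n_steps):
    """Game -> (next state, reward) -> policy -> action -> game, repeated."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(n_steps):
        action = policy(state)            # policy maps the observation to an action
        state, reward = env.step(action)  # game returns the next state and reward
        total_reward += reward
    return total_reward


if __name__ == "__main__":
    always_virtuous = lambda state: 0  # hypothetical fixed policy
    print(run_loop(RolePlayingGame(), always_virtuous, n_steps=10))
```

In a learning agent, the reward returned by `step` would also be used to update the policy's parameters θ rather than only being summed.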

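The "Regularized objective function, J(θ)" box contains a simplified neural network. Its input layer consists of the nodes "observation 1" through "observation n", which are connected to an output layer of nodes "action 1" through "action n". In standard reinforcement learning terms, this network presumably plays the role of the policy, whose parameters θ are adjusted to optimize J(θ).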
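
The observation-to-action network drawn inside the box can be approximated, for illustration, by a single fully connected layer followed by a softmax over actions. This is a sketch under stated assumptions: the diagram shows no hidden layers, activation functions, or output distribution, so the linear-softmax form, the Gaussian initialization, and the helper names (`init_weights`, `policy_probs`, `act`) are hypothetical choices.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def init_weights(n_obs, n_actions, seed=0):
    """Hypothetical theta: one weight row per action node, one column per observation node."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.1) for _ in range(n_obs)] for _ in range(n_actions)]


def policy_probs(theta, observation):
    """Connect every observation node to every action node, then normalize with softmax."""
    logits = [sum(w * x for w, x in zip(row, observation)) for row in theta]
    return softmax(logits)


def act(theta, observation, rng):
    """Sample an action index from the policy's probability distribution."""
    probs = policy_probs(theta, observation)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


if __name__ == "__main__":
    theta = init_weights(n_obs=4, n_actions=3)
    obs = [1.0, 0.0, 0.5, -0.2]
    print(policy_probs(theta, obs))
    print(act(theta, obs, random.Random(1)))
```

Sampling from the distribution, rather than always taking the highest-probability action, is a common way to let the agent keep exploring the game.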

### Key Observations

* The diagram emphasizes the iterative nature of the learning process.

* The "Regularized objective function, J(θ)" box, which encloses the observation-to-action network, acts as the core decision-making component.

* The "Role-playing game" provides the environment for ethical experiences.

* The loop suggests a continuous refinement of actions based on the ethical consequences within the game.
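
The diagram does not say how the actions are refined. One common technique that matches this description is a policy-gradient (REINFORCE) update, sketched below for a linear-softmax policy. The choice of REINFORCE, the L2 penalty `lam * ||theta||^2` standing in for the unspecified regularizer, and the learning rate are all assumptions for illustration. After a positive return, the update raises the probability of the action just taken; the penalty term shrinks the weights toward zero.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_step(theta, observation, action, ret, lr=0.1, lam=0.01):
    """One gradient-ascent step on ret * log pi(action | observation) - lam * ||theta||^2.

    For a linear-softmax policy, d log pi(a|s) / d theta[b][i]
    equals (1[b == a] - pi(b|s)) * s[i].
    """
    logits = [sum(w * x for w, x in zip(row, observation)) for row in theta]
    probs = softmax(logits)
    new_theta = []
    for b, row in enumerate(theta):
        indicator = 1.0 if b == action else 0.0
        new_row = []
        for i, w in enumerate(row):
            grad_logp = (indicator - probs[b]) * observation[i]
            grad = ret * grad_logp - 2.0 * lam * w  # policy-gradient term minus L2 penalty gradient
            new_row.append(w + lr * grad)            # ascend the regularized objective
        new_theta.append(new_row)
    return new_theta


if __name__ == "__main__":
    theta = [[0.0, 0.0], [0.0, 0.0]]
    updated = reinforce_step(theta, [1.0, 0.0], action=0, ret=1.0, lam=0.0)
    print(updated)
```

Repeating this step over many episodes of the game is what turns the one-way loop into a learning loop.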

### Interpretation

The diagram represents a system designed to train an agent to make ethical decisions within a simulated environment (the role-playing game). The agent learns by interacting with the environment, receiving rewards and punishments based on its actions. The label "Regularized objective function, J(θ)" most likely denotes the training objective for a model with parameters θ (the network drawn inside the box): the model is trained to maximize reward, while the regularization term presumably encourages adherence to ethical constraints. The feedback loop lets the agent continuously adapt its behavior toward virtuous actions, as defined by the systemic ethical experiences embedded in the game. The diagram thus highlights the importance of both the environment and the learning algorithm in shaping ethical behavior.
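
As a concrete reading of "maximize rewards" plus a regularizer, the sketch below estimates `J(theta) = E[sum_t gamma^t * r_t] - lam * ||theta||^2` from sampled episodes. The diagram does not define the regularization term; the L2 weight penalty, the discount factor `gamma`, and the coefficient `lam` are assumptions chosen for illustration, and an ethics-specific penalty could take the penalty's place.

```python
def discounted_return(rewards, gamma=0.99):
    """Sum of gamma**t * r_t over one episode, accumulated backward."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


def regularized_objective(episodes, theta, gamma=0.99, lam=0.01):
    """Monte Carlo estimate of J(theta): mean discounted return minus an L2 penalty.

    `episodes` is a list of per-episode reward lists sampled with the current
    policy; `theta` is the policy's weight matrix as a list of rows.
    """
    mean_return = sum(discounted_return(ep, gamma) for ep in episodes) / len(episodes)
    penalty = lam * sum(w * w for row in theta for w in row)  # assumed L2 regularizer
    return mean_return - penalty


if __name__ == "__main__":
    episodes = [[1.0, 1.0, -1.0], [1.0, 1.0, 1.0]]
    theta = [[0.2, -0.1], [0.05, 0.3]]
    print(regularized_objective(episodes, theta, gamma=0.9, lam=0.1))
```

A larger `lam` trades some reward for smaller weights; in an ethics-oriented setup the penalty would instead be designed to discourage undesirable behavior.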