
## Diagram: Systemic Ethical Experiences and Virtuous Action

### Overview

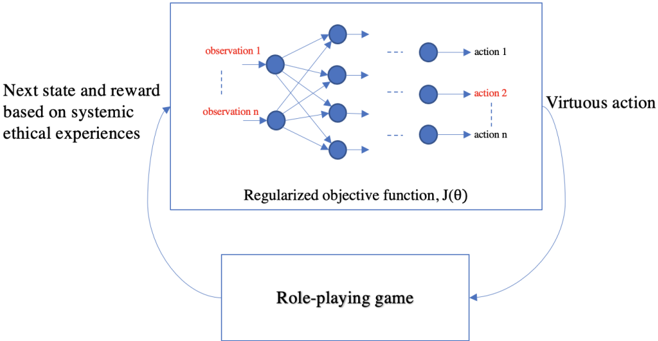

The image depicts a diagram illustrating the relationship between a role-playing game and a "Regularized objective function, J(θ)". Information flows from the role-playing game to the objective function and back, forming a feedback loop. The objective-function block contains a neural-network-like structure that connects observations to actions.

### Components/Axes

The diagram consists of two main rectangular blocks connected by curved arrows.

* **Top Block:** Contains a network of nodes labeled "observation 1" through "observation n" and "action 1" through "action n". Below this network is the text "Regularized objective function, J(θ)".

* **Bottom Block:** Contains the text "Role-playing game".

* **Arrows:** Two curved arrows connect the blocks. One arrow originates from the bottom block and points towards the top block, labeled "Next state and reward based on systemic ethical experiences". The other arrow originates from the top block and points towards the bottom block, labeled "Virtuous action".

### Detailed Analysis or Content Details

The top block represents a network. Its left side contains nodes labeled "observation 1" through "observation n", and these connect to nodes on the right side labeled "action 1" through "action n". The network appears to be fully connected: every observation node links to every action node. The exact number of nodes is not given; "n" denotes a variable count, and the diagram uses the same symbol for both observations and actions.
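As a rough illustration of what a fully connected observation-to-action mapping computes, here is a minimal sketch, assuming a single linear layer followed by a softmax so that the action nodes produce action probabilities. The diagram does not specify the layer type, activation, or output form; the names `init_weights` and `action_probabilities` are hypothetical.

```python
import math
import random

def init_weights(n_obs, n_act, seed=0):
    """Hypothetical initializer: weight matrix W[a][o] and biases b[a] for one fully connected layer."""
    rng = random.Random(seed)
    W = [[rng.gauss(0.0, 0.1) for _ in range(n_obs)] for _ in range(n_act)]
    b = [0.0] * n_act
    return W, b

def action_probabilities(observation, W, b):
    """Every observation feeds every action logit; a softmax (assumed) turns logits into probabilities."""
    logits = [sum(w * o for w, o in zip(row, observation)) + b_a
              for row, b_a in zip(W, b)]
    m = max(logits)  # subtract the max logit for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

n = 4  # same n for observations and actions, as in the diagram
W, b = init_weights(n_obs=n, n_act=n)
probs = action_probabilities([1.0, 0.0, 0.5, -0.2], W, b)
print(probs, sum(probs))
```

Because each row of `W` holds one weight per observation, every action logit depends on every observation, which is what "fully connected" means here.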

The text "Regularized objective function, J(θ)" is positioned below the network. J(θ) is a standard notation in machine learning, representing an objective function with parameters θ.
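The diagram does not define J(θ). One common form of a regularized reinforcement-learning objective, given here only as an assumed example, is the expected discounted return of the policy π_θ minus a weighted penalty Ω(θ), where γ is a discount factor, λ ≥ 0 sets the regularization strength, and Ω is, for instance, a squared L2 norm:

$$
J(\theta) = \mathbb{E}_{\pi_\theta}\!\left[\sum_{t=0}^{T} \gamma^{t} r_t\right] - \lambda\,\Omega(\theta),
\qquad \Omega(\theta) = \tfrac{1}{2}\lVert \theta \rVert_2^2 .
$$

Maximizing this J(θ) trades off reward collected in the game against keeping the parameters small; other choices of Ω (for example, an entropy bonus or a penalty tied to ethical constraints) would change what the regularizer encourages.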

The arrow from the "Role-playing game" to the "Regularized objective function" is labeled "Next state and reward based on systemic ethical experiences". This suggests the role-playing game provides input to the objective function in the form of a new state and a reward signal, informed by ethical considerations within the game.

The arrow from the "Regularized objective function" block to the "Role-playing game" is labeled "Virtuous action". This suggests that the network in the top block, whose parameters θ are optimized under J(θ), selects an action considered "virtuous", which is then carried out within the role-playing game.
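The two arrows together describe the standard agent-environment loop. The sketch below illustrates that loop under loud assumptions: `ToyRolePlayingGame` is a hypothetical stand-in with a handful of scenarios, and the rule that action 0 is always the "virtuous" choice (reward +1, otherwise -1) is purely illustrative. Nothing in the diagram specifies the states, actions, or reward values.

```python
import random

class ToyRolePlayingGame:
    """Hypothetical stand-in for the role-playing game: a few scenarios, each with one 'virtuous' choice."""

    def __init__(self, n_states=3, n_actions=2, seed=0):
        self.n_states = n_states
        self.n_actions = n_actions
        self.rng = random.Random(seed)
        # Assumption for illustration only: action 0 is the virtuous choice in every scenario.
        self.virtuous_action = {s: 0 for s in range(n_states)}
        self.state = 0

    def reset(self):
        self.state = self.rng.randrange(self.n_states)
        return self.state

    def step(self, action):
        """Return (next_state, reward): the arrow from the game back to the top block."""
        reward = 1.0 if action == self.virtuous_action[self.state] else -1.0
        self.state = self.rng.randrange(self.n_states)
        return self.state, reward

def run_episode(env, policy, steps=5):
    """Run the closed loop for a fixed number of steps and return the total reward."""
    state = env.reset()
    total = 0.0
    for _ in range(steps):
        action = policy(state)            # the "Virtuous action" arrow
        state, reward = env.step(action)  # the "Next state and reward" arrow
        total += reward
    return total

print(run_episode(ToyRolePlayingGame(), policy=lambda s: 0))  # 5.0
```

The `reset`/`step` split mirrors the interface commonly used by reinforcement-learning environment libraries, which keeps the game logic separate from the agent that chooses actions.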

### Key Observations

The diagram illustrates a closed-loop system: the role-playing game supplies ethical experiences, the top block turns them into virtuous actions, and those actions in turn shape the game state and subsequent ethical experiences. The network structure in the top block suggests a learning or decision-making process that maps observations to actions.

### Interpretation

This diagram likely represents a reinforcement learning system designed to train an agent (represented by the network in the top block and its objective function) to behave ethically within a role-playing game environment. The phrase "systemic ethical experiences" suggests that the game is designed to present the agent with complex ethical dilemmas. The objective function J(θ) is described as regularized, which usually means a penalty term is added to it; the diagram does not say what that term is, though it may be intended to encourage ethical behavior. The feedback loop allows the agent to learn from its actions and improve its ability to make virtuous decisions. Using a role-playing game as the environment points to a focus on complex, nuanced ethical scenarios that are hard to capture with traditional methods. The diagram provides no specific data or numerical values; it is a conceptual framework highlighting the interplay between game design, ethical considerations, and machine learning.
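To make the "learn from its actions" step concrete, here is a minimal sketch of gradient ascent on a regularized objective, assuming the REINFORCE (score-function) estimator, a tabular softmax policy, one-step episodes, and an L2 penalty, so that J(θ) = E[r] − (λ/2)‖θ‖². The diagram names none of these choices; `reward`, `policy_probs`, and `train` are hypothetical, and the reward rule (action 0 is "virtuous") is an illustrative assumption.

```python
import math
import random

N_STATES, N_ACTIONS = 3, 2

def reward(state, action):
    # Hypothetical reward: action 0 is treated as the "virtuous" choice in every state.
    return 1.0 if action == 0 else -1.0

def policy_probs(theta, state):
    """Softmax over the per-state action preferences theta[state]."""
    logits = theta[state]
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train(steps=2000, lr=0.1, lam=0.01, seed=0):
    """REINFORCE ascent on J(θ) = E[r] - (lam/2)·||θ||², using one-step episodes."""
    rng = random.Random(seed)
    theta = [[0.0] * N_ACTIONS for _ in range(N_STATES)]
    for _ in range(steps):
        s = rng.randrange(N_STATES)
        probs = policy_probs(theta, s)
        a = rng.choices(range(N_ACTIONS), weights=probs)[0]
        r = reward(s, a)
        # Score-function gradient of log softmax w.r.t. theta[s][b]: 1[b == a] - π(b|s).
        for b in range(N_ACTIONS):
            theta[s][b] += lr * r * ((1.0 if b == a else 0.0) - probs[b])
        # Then take a step along the L2 penalty's gradient, -lam·θ, for every parameter.
        for row in theta:
            for b in range(N_ACTIONS):
                row[b] -= lr * lam * row[b]
    return theta

theta = train()
for s in range(N_STATES):
    print(s, [round(p, 3) for p in policy_probs(theta, s)])
```

The penalty keeps the action preferences bounded: a larger `lam` holds θ closer to zero and the policy closer to uniform, while a small `lam` lets the policy commit strongly to the rewarded action.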