## Diagram: Ethical Reinforcement Learning System

### Overview

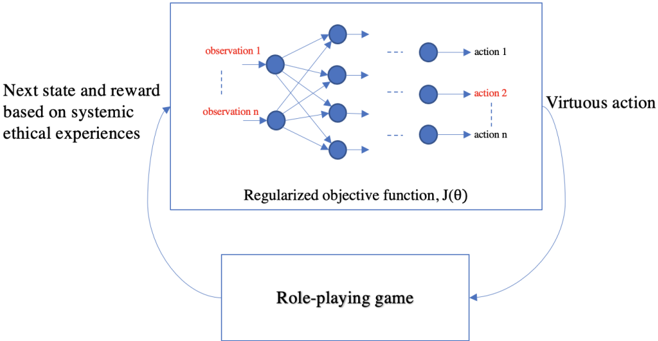

The image is a conceptual flowchart of a reinforcement learning system intended to produce "virtuous action" by learning from ethical experiences gathered in a role-playing game. It depicts a cycle: a neural network maps observations to actions, the actions are carried out in a simulated environment, and the resulting state and reward are fed back to update the network.

### Components

The diagram consists of several key components connected by directional arrows, indicating a flow of information and feedback.

1. **Central Processing Unit (Main Box):**

* **Label:** "Regularized objective function, J(θ)"

* **Content:** A schematic of a feedforward neural network.

* **Inputs (Left Side):** Labeled in red text as "observation 1" through "observation n", with a vertical ellipsis (⋮) indicating the intermediate observations. These are represented by blue circular nodes.

* **Hidden Layers:** Two columns of blue circular nodes, representing two hidden layers; the lines between nodes represent the network's weights.

* **Outputs (Right Side):** Labeled "action 1", "action 2", and "action n", with a vertical ellipsis between them; "action 2" is in red and the other labels are in black. These are also blue circular nodes.

* **Position:** This is the central and largest element, occupying the upper-middle portion of the diagram.

2. **Input Description (Left Side):**

* **Text:** "Next state and reward based on systemic ethical experiences"

* **Position:** Located to the left of the central box, with an arrow pointing from this text into the left side of the central box.

3. **Output Description (Right Side):**

* **Text:** "Virtuous action"

* **Position:** Located to the right of the central box, with an arrow pointing from the right side of the central box towards this text.

4. **Simulation Environment (Bottom Box):**

* **Label:** "Role-playing game"

* **Position:** A rectangular box centered below the main processing unit.

5. **Flow Arrows:**

* An arrow curves from the "Virtuous action" text down to the "Role-playing game" box.

* Another arrow curves from the "Role-playing game" box up to the left side of the central processing unit, completing the cycle.
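The network in the main box can be sketched as a plain forward pass. This is a minimal illustration only: the layer sizes, tanh activations, softmax output, random initialization, and the names `PolicyNetwork`, `action_probs`, and `select_action` are assumptions, since the diagram shows only the network's shape (observations in, two hidden layers, actions out). Picking the most probable action mirrors one reading of the red "action 2" label.

```python
import math
import random


def init_layer(n_in, n_out, rng):
    """Random weights (scaled by 1/sqrt(n_in)) and zero biases for one dense layer."""
    weights = [[rng.gauss(0.0, 1.0 / math.sqrt(n_in)) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(inputs, weights, biases):
    """Affine map: one weighted sum plus bias per output node."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def softmax(scores):
    """Turn raw action scores into probabilities (shifted by the max for stability)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class PolicyNetwork:
    """Hypothetical policy: observations -> two tanh hidden layers -> action probabilities."""

    def __init__(self, n_obs, n_hidden, n_actions, seed=0):
        rng = random.Random(seed)
        self.hidden = [init_layer(n_obs, n_hidden, rng),
                       init_layer(n_hidden, n_hidden, rng)]
        self.output = init_layer(n_hidden, n_actions, rng)

    def action_probs(self, observation):
        h = observation
        for weights, biases in self.hidden:
            h = [math.tanh(z) for z in dense(h, weights, biases)]
        return softmax(dense(h, *self.output))

    def select_action(self, observation):
        """Greedy choice: index of the most probable action."""
        probs = self.action_probs(observation)
        return max(range(len(probs)), key=probs.__getitem__)


# Usage: 4 observations in, 3 actions out (sizes chosen only for illustration).
net = PolicyNetwork(n_obs=4, n_hidden=5, n_actions=3)
obs = [0.2, -1.0, 0.5, 0.0]
probs = net.action_probs(obs)
chosen = net.select_action(obs)
```

During training, a system like this would more typically sample from `probs` rather than always taking the greedy choice, so that it keeps exploring alternatives.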

### Detailed Analysis

The diagram models a closed-loop system for training an AI agent.

* **Process Flow:**

1. The system begins with input described as the "Next state and reward based on systemic ethical experiences." This suggests that the reward signal, and therefore what the agent learns, is shaped by an ethical framework.

2. This input feeds a neural network whose parameters θ are trained to optimize a "Regularized objective function, J(θ)." The term "regularized" implies an added penalty term, which could serve to prevent overfitting or to encode constraints (potentially ethical ones).

3. The network passes the observations (1 to n) through its hidden layers to produce outputs for actions 1 to n. The label "action 2" is highlighted in red, possibly marking it as the action selected in this instance.

4. The selected output is labeled "Virtuous action," implying that the system aims to choose actions consistent with ethical or moral standards.

5. This action is then executed within a "Role-playing game" environment, which acts as a simulator or testbed.

6. The outcomes (new state and reward) from the role-playing game are fed back into the system as the next set of "systemic ethical experiences," closing the loop for iterative learning and improvement.

* **Visual Elements:** The neural network nodes are uniformly blue. Text is primarily black, with key labels ("observation 1...n", "action 2") in red for emphasis. The layout is hierarchical and cyclical, emphasizing the continuous learning process.
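The six-step loop can be sketched end to end in a few lines. This is a minimal illustration under assumptions that are not in the diagram: `ToyRolePlayGame` is a hypothetical stand-in for the role-playing game, its three actions and their ethical rewards are invented, each scene is a one-step episode, the policy is a table of softmax scores rather than a neural network, and the update is REINFORCE with an L2 penalty standing in for the regularizer in J(θ). Ethics enters here only through the reward, as the left-hand label suggests; the L2 term is generic regularization.

```python
import math
import random

ACTIONS = ["help", "ignore", "deceive"]  # hypothetical action set


class ToyRolePlayGame:
    """Hypothetical stand-in for the diagram's role-playing game.

    Each step is a one-scene episode; the reward is an invented ethical score.
    """

    ETHICAL_REWARD = {"help": 1.0, "ignore": 0.0, "deceive": -1.0}

    def __init__(self, n_scenes, seed=0):
        self.n_scenes = n_scenes
        self.rng = random.Random(seed)
        self.state = 0

    def reset(self):
        self.state = self.rng.randrange(self.n_scenes)
        return self.state

    def step(self, action):
        """Return (next_state, reward): the feedback arrow in the diagram."""
        reward = self.ETHICAL_REWARD[ACTIONS[action]]
        self.state = self.rng.randrange(self.n_scenes)
        return self.state, reward


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def train(n_scenes=3, steps=2000, lr=0.1, l2=0.01, seed=0):
    """Stochastic gradient ascent on J(theta) = E[reward] - (l2 / 2) * ||theta||^2.

    Uses REINFORCE; the L2 decay is applied only to the visited scene's row.
    """
    rng = random.Random(seed)
    env = ToyRolePlayGame(n_scenes, seed)
    theta = [[0.0] * len(ACTIONS) for _ in range(n_scenes)]  # one score per (scene, action)
    state = env.reset()
    for _ in range(steps):
        probs = softmax(theta[state])                                # observation -> action probabilities
        action = rng.choices(range(len(ACTIONS)), weights=probs)[0]  # sample an action
        next_state, reward = env.step(action)                        # act in the game
        for a in range(len(ACTIONS)):
            # Gradient of log pi(action | state) for a softmax policy: one_hot(action) - probs
            grad_log_pi = (1.0 if a == action else 0.0) - probs[a]
            theta[state][a] += lr * (reward * grad_log_pi - l2 * theta[state][a])
        state = next_state                                           # feed the next state back in
    return theta


theta = train()
greedy = [ACTIONS[max(range(len(ACTIONS)), key=row.__getitem__)] for row in theta]
```

Under these invented rewards the greedy policy picks "help" in every scene; different reward values or a different `l2` would change how strongly that preference is learned.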

### Key Observations

* The system explicitly integrates **ethics** at the input stage ("systemic ethical experiences") and defines its output goal as **"virtuous action."** This frames the entire learning process within a moral context.

* Using a **"Role-playing game"** as the environment suggests a controlled, simulated space where actions can be tested and their consequences observed without real-world risk, allowing safe exploration of ethical decision-making.

* The **regularized objective function, J(θ)**, is the core mathematical driver. The diagram does not show the form of its regularization term, but that term plausibly encodes the ethical constraints or principles that steer the agent's policy away from purely reward-driven behavior.

* The diagram shows a **single, clear feedback loop**, indicating the iterative, trial-and-error learning characteristic of reinforcement learning.
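The diagram gives no formula for J(θ). One common way to write a regularized reinforcement learning objective, offered here only as an assumption, subtracts a weighted penalty Ω(θ) from the expected discounted return. If Ω is taken to be the expected discounted sum of a hypothetical per-step ethical cost c(s, a), the objective resembles the Lagrangian relaxation used in constrained reinforcement learning, with the multiplier λ held fixed:

$$
J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\Big[\sum_{t=0}^{T} \gamma^t r_t\Big] - \lambda\,\Omega(\theta),
\qquad
\Omega(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\Big[\sum_{t=0}^{T} \gamma^t c(s_t, a_t)\Big]
$$

$$
\nabla_\theta J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\Big[\sum_{t=0}^{T} \nabla_\theta \log \pi_\theta(a_t \mid s_t) \sum_{k=t}^{T} \gamma^k \big(r_k - \lambda\, c(s_k, a_k)\big)\Big]
$$

Here π_θ is the policy computed by the network, τ is a trajectory of states s_t and actions a_t, γ ∈ [0, 1] is a discount factor, and λ ≥ 0 sets how strongly the ethical cost is weighted against reward; apart from J and θ, all of these symbols are introduced for illustration. The second line is the standard policy-gradient (REINFORCE) form of the gradient. A parameter-only penalty such as λ‖θ‖² would instead play the conventional role of preventing overfitting.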

### Interpretation

This diagram represents a proposed architecture for **Ethical AI or Moral AI training**. It moves beyond standard reinforcement learning, which optimizes for a given reward signal, by fundamentally structuring the learning process around ethical principles.

* **What it suggests:** The system is designed to learn how to act virtuously by continuously interacting with a simulated social or moral environment (the role-playing game). The "systemic ethical experiences" likely refer to a curated dataset or set of rules that define right and wrong within the simulation. The agent learns not just to maximize a score, but to align its behavior with these ethical norms.

* **How elements relate:** The neural network is the agent's "brain." The role-playing game is its "world." The ethical experiences are its "conscience" or "moral curriculum." The regularized objective function is the "learning rule" that binds them together: optimizing it adjusts the policy parameters θ so that the agent's actions are both effective (earning reward) and virtuous (satisfying the ethical regularization).

* **Notable implications:** This model implies that ethical behavior in AI can be achieved through a structured learning process that simulates moral dilemmas and consequences. The "virtuous action" is not pre-programmed but emerges from the interaction between the agent's learning algorithm, its ethical training data, and the simulated environment. The major challenge, not shown in the diagram, would be defining the "systemic ethical experiences" and the precise form of the regularization in J(θ) in a way that is robust, fair, and aligns with human values.
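The tension between "effective" and "virtuous" can be made concrete with a one-step toy example. The scene, the three candidate actions, and their reward and ethical-cost values are invented for illustration, and scoring each action as reward minus λ times cost is one assumed form of the trade-off, not something the diagram specifies.

```python
# Hypothetical candidate actions in one scene: (task reward, ethical cost).
CANDIDATES = {
    "deceive": (3.0, 2.0),
    "negotiate": (2.0, 0.5),
    "concede": (1.0, 0.0),
}


def best_action(lam):
    """Pick the action maximizing reward - lam * ethical_cost (lam >= 0 is the trade-off weight)."""
    return max(CANDIDATES, key=lambda a: CANDIDATES[a][0] - lam * CANDIDATES[a][1])


for lam in (0.0, 1.0, 3.0):
    print(lam, best_action(lam))
# prints:
# 0.0 deceive
# 1.0 negotiate
# 3.0 concede
```

With λ = 0 the choice is purely reward-driven; raising λ shifts it toward lower-cost actions. This is why the choice of λ and the definition of the cost matter as much as the learning algorithm itself.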