## Diagram: Ethical Decision-Making System Architecture

### Overview

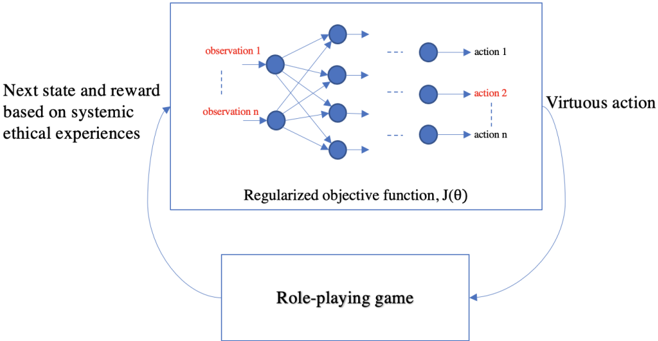

The diagram illustrates a feedback loop between a **Regularized objective function, J(θ)** and a **Role-playing game**. The objective function maps observations to actions and sends a **virtuous action** to the game; the game returns the **next state and reward**, which are based on **systematic ethical experiences**.

### Components/Axes

1. **Top Box**:

   - **Title**: "Regularized objective function, J(θ)"

   - **Elements**:

     - **Observations**: Labeled "observation 1" to "observation n" (n unspecified).

     - **Actions**: Labeled "action 1" to "action n" (n unspecified).

     - **Arrows**: Connect observations to actions, suggesting a mapping from inputs to outputs.

   - **Key Text**: "Regularized objective function, J(θ)" (centered).

2. **Bottom Box**:

   - **Title**: "Role-playing game"

   - **Arrows**:

     - **Input**: "Virtuous action" (right arrow pointing to the role-playing game).

     - **Output**: "Next state and reward based on systematic ethical experiences" (left arrow pointing to the objective function).

3. **Arrows**:

   - **Direction**:

     - From the objective function to the role-playing game (virtuous action).

     - From the role-playing game back to the objective function (next state/reward).
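
The two arrows described above can be sketched as a small interaction loop. This is only an illustration under stated assumptions: the `RolePlayingGame` class, its `reset`/`step` methods, the three-state scenario, and the reward values are all hypothetical and do not come from the diagram, which specifies only the direction of the data flow.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class RolePlayingGame:
    """Hypothetical stand-in for the "Role-playing game" box."""
    n_states: int = 3
    state: int = 0

    def reset(self) -> int:
        self.state = 0
        return self.state

    def step(self, action: int) -> Tuple[int, float]:
        """Return (next_state, reward) for the chosen action."""
        # Assumed rule for illustration: the action whose index matches
        # the current state counts as the "virtuous" one.
        reward = 1.0 if action == self.state else -1.0
        self.state = (self.state + 1) % self.n_states
        return self.state, reward


def policy(observation: int, theta: List[List[float]]) -> int:
    """Map an observation to an action by picking the highest score."""
    scores = theta[observation]
    return max(range(len(scores)), key=scores.__getitem__)


def run_episode(env: RolePlayingGame, theta: List[List[float]], steps: int = 6) -> float:
    """Run the loop: action goes to the game, state and reward come back."""
    observation = env.reset()
    total_reward = 0.0
    for _ in range(steps):
        action = policy(observation, theta)      # top box -> game
        observation, reward = env.step(action)   # game -> top box
        total_reward += reward
    return total_reward
```

With an identity-like `theta`, the policy always picks the action matching the state, so every step earns the positive reward; with an all-zero `theta` it always picks action 0 and is penalized on two of every three steps.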

### Detailed Analysis

- **Observations and Actions**:

  - Observations (1 to n) are inputs to the objective function, which maps them to actions (1 to n). The use of "n" implies a general formulation; the diagram gives no specific count.

  - The term "regularized" suggests constraints or penalties on the objective function, for example to prevent overfitting.

- **Role-Playing Game**:

  - Acts as the environment in the loop: it receives the **virtuous action** from the objective function and returns the next state and reward.

  - The bidirectional flow indicates a dynamic, iterative process where ethical experiences refine the objective function.

- **Systematic Ethical Experiences**:

  - Described as the basis for calculating "next state and reward," implying a focus on ethical alignment in decision-making.
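
The diagram names J(θ) but does not give its form. One common shape for a regularized reinforcement learning objective, shown here purely as an assumption, is an expected discounted return minus a weighted penalty. The policy $\pi_\theta$, discount factor $\gamma$, horizon $T$, weight $\lambda$, and penalty $\Omega$ are illustrative symbols that do not appear in the diagram:

$$
J(\theta) = \mathbb{E}_{\pi_\theta}\left[\sum_{t=0}^{T} \gamma^{t} r_t\right] - \lambda\,\Omega(\theta)
$$

Here $r_t$ would be the reward returned by the role-playing game at step $t$, and $\Omega(\theta)$ could be, for instance, an L2 norm on the parameters or an entropy-based term.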

### Key Observations

- **Feedback Loop**: The system is designed as a closed-loop architecture, where the role-playing game and objective function continuously influence each other.

- **Abstraction of Scale**: The use of "n" for observations and actions indicates a generalized framework rather than a fixed-size system.

- **Ethical Focus**: The explicit mention of "systematic ethical experiences" and "virtuous action" highlights the system’s purpose in ethical decision-making.

### Interpretation

This diagram represents a **reinforcement learning (RL) framework** with an ethical dimension. The **regularized objective function** likely optimizes for ethical outcomes, although the diagram does not specify the regularization term. The **role-playing game** serves as a simulated environment: it receives virtuous actions and returns the next state and reward, which feed back into the objective function. The bidirectional flow suggests that ethical experiences (e.g., past decisions, societal norms) dynamically shape the system’s behavior, with the aim of keeping it aligned with ethical principles.

The absence of numerical values or specific metrics implies the diagram is conceptual, emphasizing the **architecture** over implementation details. The regularization of J(θ) may address challenges like overfitting to biased ethical scenarios, promoting robustness. The game’s position in the feedback loop underscores the importance of iterative testing in ethical AI systems.
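
As a rough illustration of how a regularization term can discourage overfitting, the sketch below estimates an objective from sampled episode returns and subtracts an L2 penalty on the parameters. The function name `regularized_objective`, the weight `lam`, and the choice of an L2 penalty are assumptions made for this example; the diagram does not state which regularizer is used.

```python
from typing import List


def regularized_objective(returns: List[float], theta: List[float], lam: float = 0.1) -> float:
    """Mean sampled return minus an L2 penalty on the parameters.

    `returns` holds total rewards from episodes played in the game;
    `theta` is a flat list of parameters. Both are hypothetical inputs.
    """
    mean_return = sum(returns) / len(returns)
    l2_penalty = sum(w * w for w in theta)
    return mean_return - lam * l2_penalty


# Two parameter settings with identical returns: the one with larger
# weights scores lower because of the penalty.
small = regularized_objective([1.0, 1.0, -1.0, 1.0], [0.1, 0.2])
large = regularized_objective([1.0, 1.0, -1.0, 1.0], [1.0, 2.0])
```

With equal returns, the penalty alone separates the two settings, which is the sense in which regularization favors simpler parameterizations.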