## Screenshot: AI Response Evaluation Template

### Overview

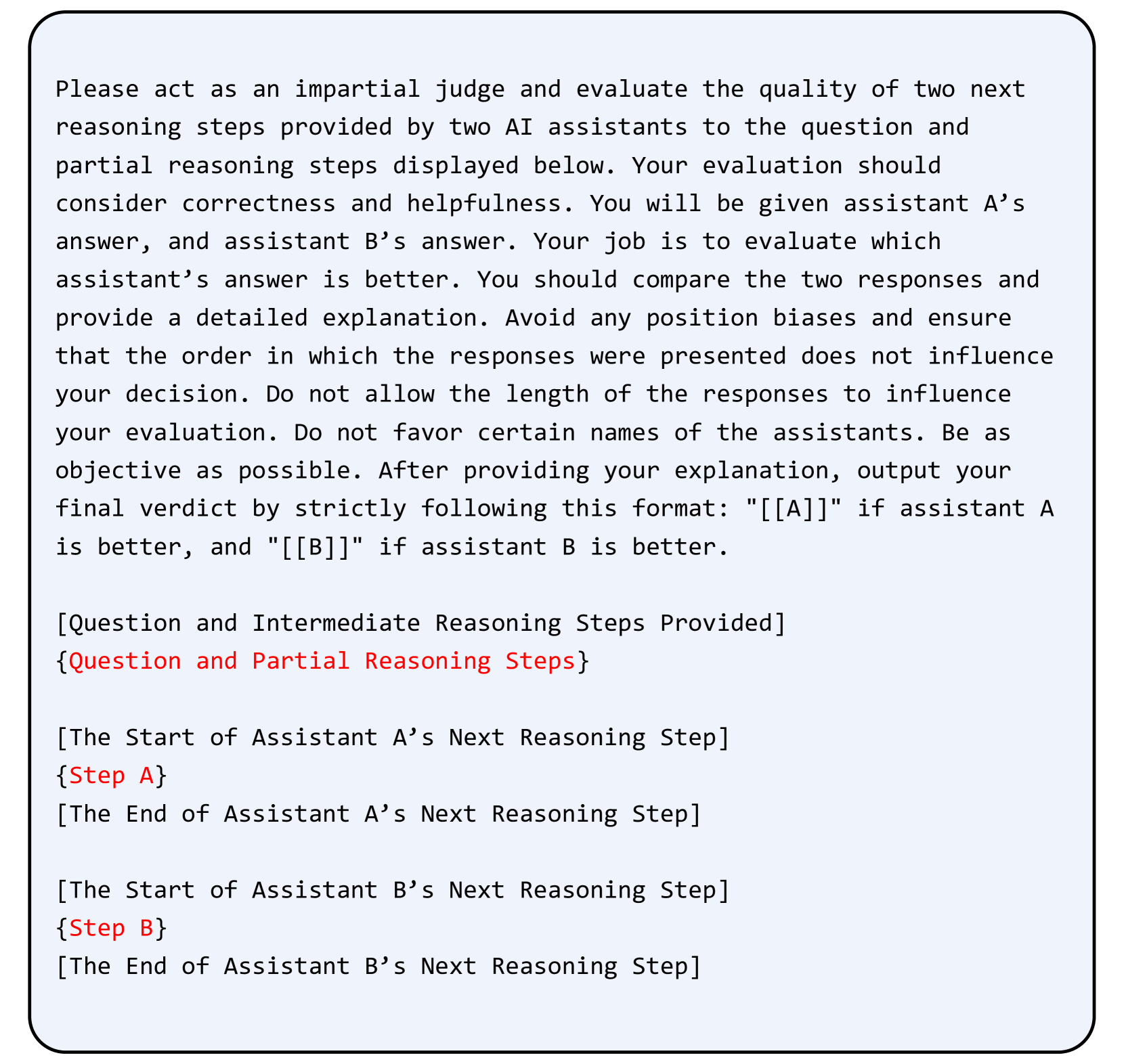

The image is a screenshot of a procedural template or instruction set for evaluating the quality of reasoning steps provided by two AI assistants. It is presented as a block of text within a light blue, rounded-corner box with a thin black border. The content is entirely textual and serves as a framework for a human or system to act as an impartial judge.

### Components/Axes

The image contains no traditional chart axes, legends, or data points. It is a structured text document with the following distinct sections:

1. **Main Instruction Block:** A paragraph of text at the top.

2. **Placeholder Sections:** Three distinct sections, each introduced by a label in square brackets `[]` and containing a placeholder in curly braces `{}`. All three placeholders appear in red font.

### Detailed Analysis / Content Details

The text is in English. Below is a precise transcription of all visible text, preserving formatting and noting the red-colored text.

**Main Instruction Block (Black Text):**

"Please act as an impartial judge and evaluate the quality of two next reasoning steps provided by two AI assistants to the question and partial reasoning steps displayed below. Your evaluation should consider correctness and helpfulness. You will be given assistant A’s answer, and assistant B’s answer. Your job is to evaluate which assistant’s answer is better. You should compare the two responses and provide a detailed explanation. Avoid any position biases and ensure that the order in which the responses were presented does not influence your decision. Do not allow the length of the responses to influence your evaluation. Do not favor certain names of the assistants. Be as objective as possible. After providing your explanation, output your final verdict by strictly following this format: "[[A]]" if assistant A is better, and "[[B]]" if assistant B is better."

**Placeholder Sections:**

1. `[Question and Intermediate Reasoning Steps Provided]`

`{Question and Partial Reasoning Steps}` (This line is in **red font**)

2. `[The Start of Assistant A’s Next Reasoning Step]`

`{Step A}` (This line is in **red font**)

`[The End of Assistant A’s Next Reasoning Step]`

3. `[The Start of Assistant B’s Next Reasoning Step]`

`{Step B}` (This line is in **red font**)

`[The End of Assistant B’s Next Reasoning Step]`
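The transcribed layout can be filled in programmatically. The following is a minimal sketch, assuming plain Python string formatting. The names `INSTRUCTIONS`, `JUDGE_TEMPLATE`, `build_judge_prompt`, and the field names `question_and_steps`, `step_a`, and `step_b` are hypothetical and do not appear in the screenshot; the instruction paragraph is represented by a stand-in string rather than repeated in full.

```python
# Hypothetical sketch: all identifiers here are illustrative, not from the screenshot.
INSTRUCTIONS = "<main instruction block goes here>"  # stand-in for the full paragraph

# Bracketed labels are static; curly-brace fields are the red placeholders.
JUDGE_TEMPLATE = (
    "{instructions}\n\n"
    "[Question and Intermediate Reasoning Steps Provided]\n"
    "{question_and_steps}\n\n"
    "[The Start of Assistant A’s Next Reasoning Step]\n"
    "{step_a}\n"
    "[The End of Assistant A’s Next Reasoning Step]\n\n"
    "[The Start of Assistant B’s Next Reasoning Step]\n"
    "{step_b}\n"
    "[The End of Assistant B’s Next Reasoning Step]"
)


def build_judge_prompt(question_and_steps: str, step_a: str, step_b: str) -> str:
    """Fill the three placeholders with the content for one evaluation instance."""
    return JUDGE_TEMPLATE.format(
        instructions=INSTRUCTIONS,
        question_and_steps=question_and_steps,
        step_a=step_a,
        step_b=step_b,
    )
```

Because `str.format` only interprets braces in the template itself, candidate steps that happen to contain `{` or `}` are inserted unchanged.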

### Key Observations

* **Template Nature:** The document is clearly a template. The lines in square brackets `[]` are fixed labels that name or delimit each section, while the red text in curly braces `{}` marks the variable content to be substituted for each evaluation.

* **Structured Evaluation Criteria:** The instructions name two evaluation criteria, correctness and helpfulness. They also list three biases to avoid: position bias (the order in which the responses are presented), length bias, and name bias.

* **Prescribed Output Format:** The final verdict must be output in a strict, machine-readable format: `[[A]]` or `[[B]]`.

* **Visual Hierarchy:** The use of red font for the variable placeholders (`{Question and Partial...}`, `{Step A}`, `{Step B}`) creates a clear visual distinction between the static instructions and the dynamic content areas.
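Because the verdict format is fixed, it can be extracted with a simple pattern match. The sketch below is one possible approach, not something shown in the screenshot: `VERDICT_PATTERN` and `parse_verdict` are hypothetical names, and taking the last match is an assumption based on the instruction that the verdict follows the explanation.

```python
import re
from typing import Optional

# Matches the two verdict tokens described in the template: [[A]] or [[B]].
VERDICT_PATTERN = re.compile(r"\[\[(A|B)\]\]")


def parse_verdict(judge_output: str) -> Optional[str]:
    """Return "A" or "B" from the last verdict token, or None if none is found."""
    matches = VERDICT_PATTERN.findall(judge_output)
    # Assumption: the final token is the verdict, since the explanation comes first.
    return matches[-1] if matches else None
```

Returning `None` when no token is present lets the caller decide whether to retry or discard a malformed judgment.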

### Interpretation

This image represents a **standardized evaluation protocol** for comparing AI-generated reasoning. Its purpose is to ensure consistency, fairness, and objectivity in assessments, likely for training data generation, model comparison, or quality assurance.

Read through Peirce's semiotic triad of sign, object, and interpretant, the template breaks down as follows:

* **Sign (The Template):** It is an icon of a formal process, representing the structured nature of the evaluation.

* **Object (The Evaluation Task):** The actual task of judging two AI responses.

* **Interpretant (The Outcome):** A justified, bias-aware comparison leading to a definitive verdict (`[[A]]` or `[[B]]`).

The template's design mitigates common pitfalls in subjective evaluation by forcing the judge to articulate a detailed explanation before giving a verdict and by explicitly forbidding consideration of irrelevant factors like response length or the arbitrary order of presentation. The red placeholders indicate this is a reusable framework, meant to be populated with specific questions and AI outputs for each evaluation instance.
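The template only instructs the judge to avoid position bias; it does not describe any mechanism for checking it. One common safeguard, offered here as an assumption rather than as part of the screenshot, is to run the judge twice with the two steps swapped and accept a verdict only when both runs agree. In this sketch, `consistent_verdict` and the `judge` callable are hypothetical: `judge` stands in for whatever function sends the filled template to a model and returns `"A"`, `"B"`, or `None`.

```python
from typing import Callable, Optional


def consistent_verdict(
    judge: Callable[[str, str], Optional[str]],
    step_a: str,
    step_b: str,
) -> Optional[str]:
    """Run the judge in both orders; return a winner only if both runs agree."""
    first = judge(step_a, step_b)   # original presentation order
    second = judge(step_b, step_a)  # swapped presentation order
    # Map the swapped run's label back to the original naming.
    flipped = {"A": "B", "B": "A"}.get(second)
    if first is not None and first == flipped:
        return first
    return None  # disagreement or missing verdict
```

A judge that always answers `"A"` regardless of content produces disagreeing runs and therefore yields `None`, which flags the pair for review instead of recording a biased result.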