## Document: Text-Based Image - Evaluation Request

### Overview

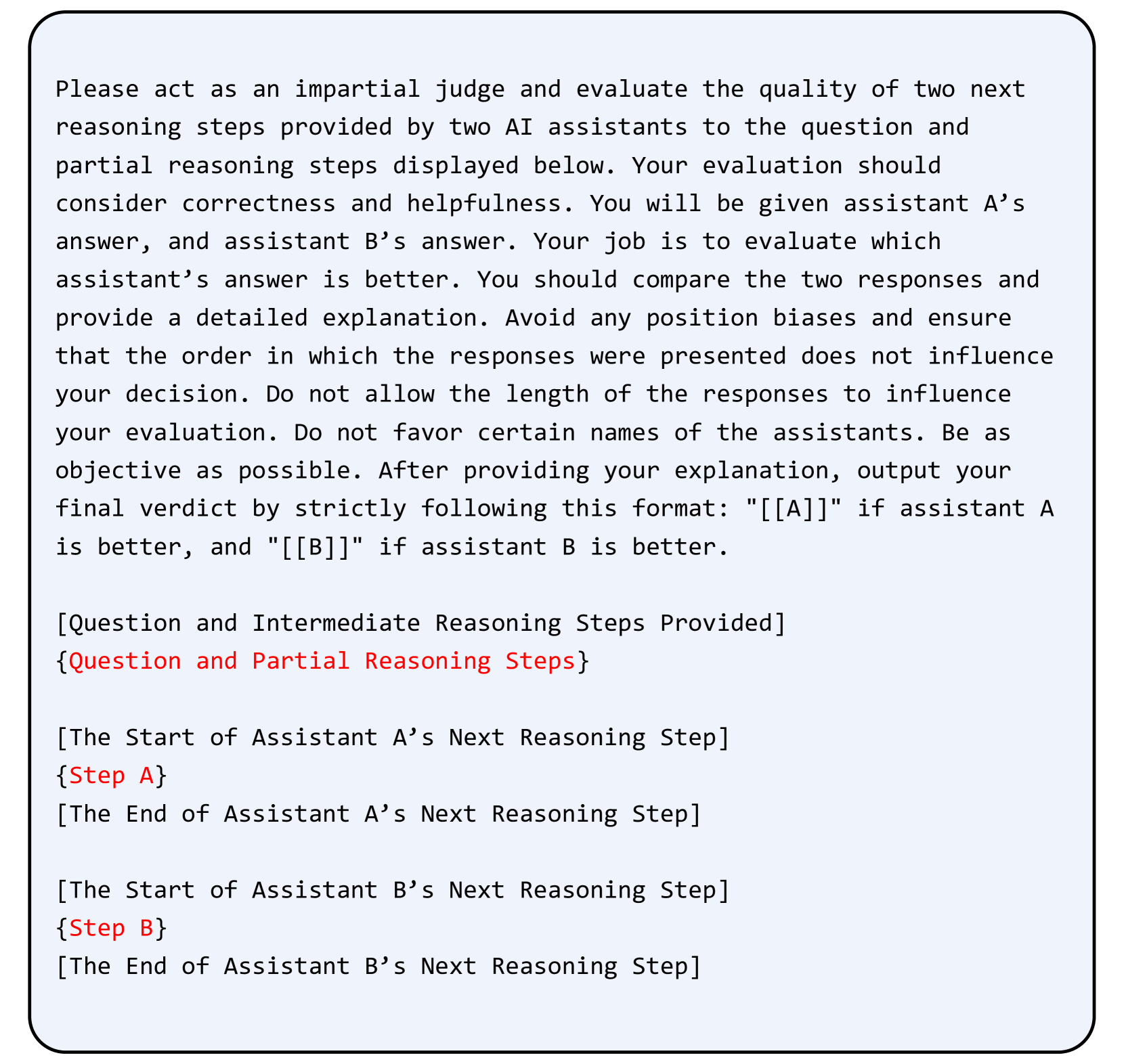

The image shows a text-only prompt template for an AI evaluation task. It casts the reader as an "impartial judge" who compares the next reasoning steps proposed by two AI assistants (A and B) for a given question and its partial reasoning. The judge is told to weigh correctness and helpfulness, remain objective, and end with a verdict in a fixed output format.

### Components

The document is structured as a set of instructions. Key components include:

* **Opening Line:** "Please act as an impartial judge..." (the image has no separate title)

* **Role Definition:** Defines the judge's role and responsibilities.

* **Evaluation Criteria:** Names correctness and helpfulness as the factors to weigh, and instructs the judge to stay objective and to ignore response order, response length, and assistant names.

* **Output Format:** Specifies the required output format: "\[\[A]]" if assistant A is better, and "\[\[B]]" if assistant B is better.

* **Contextual Information:** Explains the input provided (question and intermediate reasoning steps) and the responses to be evaluated (Step A and Step B).
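Because the verdict format is fixed, a judge's reply can be checked mechanically. The following is a minimal Python sketch of that idea, not something shown in the image: the function name `parse_verdict` is hypothetical, and taking the last match assumes the verdict follows the explanation, as the template requests.

```python
import re
from typing import Optional

# Matches the two verdict tokens the template allows: [[A]] or [[B]].
VERDICT_PATTERN = re.compile(r"\[\[([AB])\]\]")


def parse_verdict(judge_output: str) -> Optional[str]:
    """Return "A" or "B", or None if no well-formed verdict is present."""
    matches = VERDICT_PATTERN.findall(judge_output)
    # Assumption: the verdict comes after the explanation, so the last
    # match is treated as the final answer.
    return matches[-1] if matches else None
```

Returning `None` instead of raising lets a caller decide whether to retry or discard a reply that does not follow the format.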

### Content Details

The document's content can be transcribed as follows:

"Please act as an impartial judge and evaluate the quality of two next reasoning steps provided by two AI assistants to the question and partial reasoning steps displayed below. Your evaluation should consider correctness and helpfulness. You will be given assistant A’s answer, and assistant B’s answer. Your job is to evaluate which assistant’s answer is better. You should compare the two responses and provide a detailed explanation. Avoid any position biases and ensure that the order in which the responses were presented does not influence your decision. Do not allow the length of the responses to influence your decision. Do not favor certain names of the assistants. Be as objective as possible. After providing your explanation, output your final verdict by strictly following this format: “\[\[A]]” if assistant A is better, and “\[\[B]]” if assistant B is better.

\[Question and Intermediate Reasoning Steps Provided]

{Question and Partial Reasoning Steps}

\[The Start of Assistant A’s Next Reasoning Step]

{Step A}

\[The End of Assistant A’s Next Reasoning Step]

\[The Start of Assistant B’s Next Reasoning Step]

{Step B}

\[The End of Assistant B’s Next Reasoning Step]"
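The curly-brace placeholders in the transcription can be filled programmatically. The sketch below is one possible way to do that in Python and is not part of the image: `SLOT_SECTION` reproduces only the slot portion of the transcribed text (the instruction paragraph is omitted for brevity), and `fill_slots` is a hypothetical helper name.

```python
import re
from typing import Dict

# Slot portion of the template, copied from the transcription above.
SLOT_SECTION = (
    "[Question and Intermediate Reasoning Steps Provided]\n\n"
    "{Question and Partial Reasoning Steps}\n\n"
    "[The Start of Assistant A’s Next Reasoning Step]\n\n"
    "{Step A}\n\n"
    "[The End of Assistant A’s Next Reasoning Step]\n\n"
    "[The Start of Assistant B’s Next Reasoning Step]\n\n"
    "{Step B}\n\n"
    "[The End of Assistant B’s Next Reasoning Step]"
)


def fill_slots(template: str, values: Dict[str, str]) -> str:
    """Replace each placeholder key in `values` with its text in one pass."""
    # A single regex pass means inserted text is never rescanned, so braces
    # inside a question or step (e.g. LaTeX or code) are left untouched.
    pattern = re.compile("|".join(re.escape(key) for key in values))
    return pattern.sub(lambda match: values[match.group(0)], template)


prompt = fill_slots(
    SLOT_SECTION,
    {
        "{Question and Partial Reasoning Steps}": "Q: What is 2 + 2? Step 1: Add the numbers.",
        "{Step A}": "The sum is 4.",
        "{Step B}": "The sum is 5.",
    },
)
```

Literal replacement is used here instead of `str.format` so that stray braces in the inserted steps cannot be misread as format fields.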

### Key Observations

The document is highly structured and formal in tone. It prioritizes objectivity and provides clear guidelines for the evaluation process. The use of brackets and placeholders (e.g., {Question and Partial Reasoning Steps}, {Step A}, {Step B}) indicates that this is a template or a framework for a larger evaluation process.

### Interpretation

This document is a prompt template for pairwise, step-level evaluation of AI reasoning: the judge compares two candidate next steps, not two final answers. Its explicit warnings against position, length, and name bias are meant to keep the assessment fair and consistent across comparisons. Requiring a detailed explanation before the verdict makes the judge's reasoning inspectable instead of leaving only a bare label. The fixed "\[\[A]]" / "\[\[B]]" verdict format suggests that the output is meant to be parsed automatically, with the template serving as one component of a larger evaluation pipeline.
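The template only asks the judge to avoid position bias; it does not say how to verify that. One common check, sketched below in Python as an assumption and not as something the image describes, is to run the comparison twice with the two steps swapped and keep the verdict only when both runs agree. The `judge` callable is hypothetical: it stands in for whatever sends the filled template to a model and returns "A", "B", or `None`.

```python
from typing import Callable, Optional

# Hypothetical judge signature: (step shown as A, step shown as B) -> verdict.
Judge = Callable[[str, str], Optional[str]]


def swap_consistent_verdict(judge: Judge, step_a: str, step_b: str) -> Optional[str]:
    """Return the verdict only if it survives swapping the presentation order."""
    first = judge(step_a, step_b)   # original order
    second = judge(step_b, step_a)  # swapped order
    # Map the swapped run's label back onto the original A/B naming.
    second_mapped = {"A": "B", "B": "A"}.get(second)
    if first is not None and first == second_mapped:
        return first
    return None  # inconsistent or malformed: treat as no decision


# Stub judges for illustration only.
def always_first(shown_a: str, shown_b: str) -> str:
    return "A"  # position-biased: always prefers whichever step is shown first


def prefers_correct(shown_a: str, shown_b: str) -> str:
    return "A" if "correct" in shown_a else "B"
```

With these stubs, the position-biased judge yields no decision, while the content-based judge yields the same verdict in both orders.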