## Text-Based Evaluation Form: AI Reasoning Step Comparison

### Overview

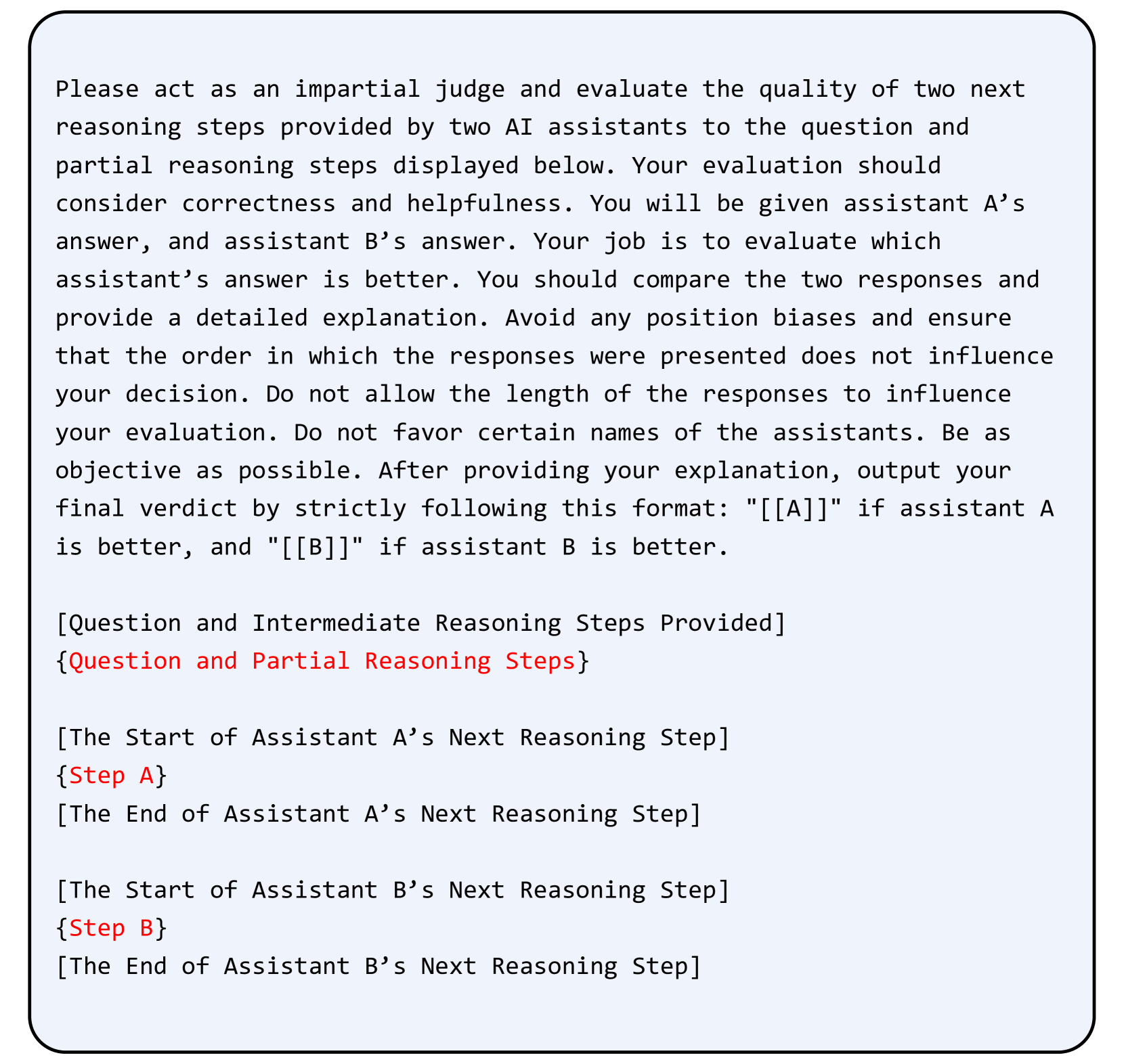

This image depicts a structured evaluation template for comparing the quality of reasoning steps provided by two AI assistants (A and B). The form emphasizes impartial judgment, correctness, helpfulness, and bias avoidance. It includes explicit instructions for evaluating responses and a standardized verdict format.

### Components/Axes

1. **Header Section**

- Opening instruction: "Please act as an impartial judge..."

- Purpose: Define evaluation criteria (correctness, helpfulness) and rules (no bias, order independence).

2. **Input Sections**

- `[Question and Intermediate Reasoning Steps Provided]`

- Placeholder for the original question and partial reasoning steps.

- `[The Start of Assistant A’s Next Reasoning Step]`

- `{Step A}`

- `[The End of Assistant A’s Next Reasoning Step]`

- `[The Start of Assistant B’s Next Reasoning Step]`

- `{Step B}`

- `[The End of Assistant B’s Next Reasoning Step]`

3. **Verdict Section**

- Final output format: `"[[A]]"` (if Assistant A is better) or `"[[B]]"` (if Assistant B is better).
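The components listed above can be assembled into a single prompt string. The following is a minimal sketch, not the form's actual implementation: the names `HEADER`, `TEMPLATE`, `build_prompt`, and the slot name `question_and_steps` are hypothetical, and the instruction text stays elided because the source shows only its opening words.

```python
# Sketch only. The full instruction text is truncated in the source,
# so it is kept elided here rather than guessed.
HEADER = "Please act as an impartial judge..."

# Bracketed lines are fixed delimiters; the {..} names are slots to fill.
# The slot names are assumptions made for this illustration.
TEMPLATE = """{header}

[Question and Intermediate Reasoning Steps Provided]
{question_and_steps}

[The Start of Assistant A’s Next Reasoning Step]
{step_a}
[The End of Assistant A’s Next Reasoning Step]

[The Start of Assistant B’s Next Reasoning Step]
{step_b}
[The End of Assistant B’s Next Reasoning Step]"""


def build_prompt(question_and_steps: str, step_a: str, step_b: str) -> str:
    """Fill the slots; the bracketed delimiter lines pass through unchanged."""
    return TEMPLATE.format(
        header=HEADER,
        question_and_steps=question_and_steps,
        step_a=step_a,
        step_b=step_b,
    )
```

Calling `build_prompt("Solve 2x = 4.", "x = 2", "x = 3")` would produce a prompt in which each candidate step sits between its own start and end delimiters.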

### Detailed Analysis

- **Textual Structure**:

- Square brackets (`[...]`) mark fixed section delimiters, such as the start and end of each assistant's reasoning step.

- Curly braces (`{...}`) mark the slots where dynamic content is inserted (e.g., `{Step A}`).

- Red text (`"Step A"`, `"Step B"`) highlights the two slots that receive the candidate reasoning steps being compared.

- **Instructions**:

- Evaluators must compare responses **objectively**, ignoring:

- Response length.

- Order of presentation.

- Assistant names.

- Focus on **correctness** and **helpfulness** of reasoning steps.
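The form only instructs the judge to ignore presentation order. One common way to check that the instruction holds in practice is to query the judge twice with the two steps swapped and keep the verdict only when both runs agree. The sketch below illustrates that idea; it is an assumption added for illustration and not something the form itself describes. The `Judge` signature and `order_independent_verdict` are hypothetical names.

```python
from typing import Callable, Optional

# Hypothetical judge signature: (context, first_step, second_step) -> "A" or "B",
# where "A" means the step shown first was preferred.
Judge = Callable[[str, str, str], str]


def order_independent_verdict(
    judge: Judge, context: str, step_a: str, step_b: str
) -> Optional[str]:
    """Ask the judge in both presentation orders; return None if they disagree."""
    first = judge(context, step_a, step_b)    # Assistant A's step shown first
    swapped = judge(context, step_b, step_a)  # Assistant B's step shown first
    # In the swapped run, label "A" refers to Assistant B's step and vice versa,
    # so map the label back before comparing.
    swapped_mapped = {"A": "B", "B": "A"}[swapped]
    return first if first == swapped_mapped else None
```

A judge that always prefers whichever step appears first would return `None` here, which flags the comparison as order-dependent rather than silently accepting a biased verdict.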

### Key Observations

1. **Bias Mitigation**: Explicit rules instruct evaluators not to favor an assistant based on its name, its position in the prompt, or the length of its response.

2. **Standardized Format**: The verdict format (`[[A]]`/`[[B]]`) ensures consistency in output.

3. **Modular Design**: Placeholders allow flexible insertion of questions and reasoning steps.
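Because the verdict uses a fixed `[[A]]`/`[[B]]` marker, it can be extracted from free-form judge output with a simple pattern match. This is a minimal sketch under stated assumptions: `parse_verdict` is a hypothetical helper, and taking the last marker when several appear is a design choice made here, not a rule stated by the form.

```python
import re
from typing import Optional

# Matches the two verdict markers described in the form.
VERDICT_RE = re.compile(r"\[\[(A|B)\]\]")


def parse_verdict(judge_output: str) -> Optional[str]:
    """Return "A" or "B" from the last verdict marker, or None if none is found."""
    matches = VERDICT_RE.findall(judge_output)
    # Assumption: if the judge mentions a marker while explaining and then
    # states its final answer, the last occurrence is the verdict.
    return matches[-1] if matches else None
```

Returning `None` for output with no marker lets a grading pipeline retry or discard the sample instead of guessing a winner.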

### Interpretation

This template is designed to support fair, systematic comparison of AI-generated reasoning steps. By instructing the judge to disregard response length, presentation order, and assistant names, it directs attention to the correctness and helpfulness of the next reasoning step itself. The fixed delimiters and the standardized verdict format suggest use in automated or semi-automated grading systems, where consistent and objective output is required. The bias-avoidance rules serve the same goal of keeping the comparison between the two assistants fair.