## Line Chart: Performance vs. Training Tokens for SWE-Bench Benchmarks

### Overview

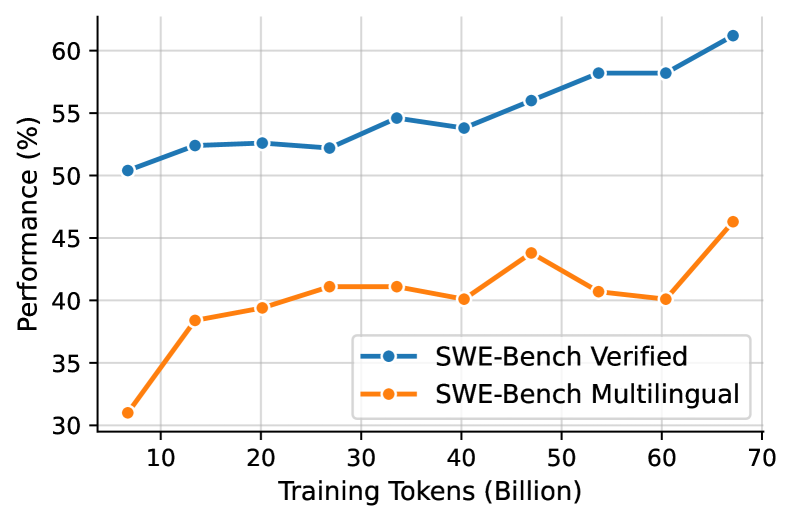

This line chart compares model performance on two benchmarks, "SWE-Bench Verified" and "SWE-Bench Multilingual," as a function of the number of training tokens (in billions). Both series trend upward overall, and scores on the "Verified" set are higher than scores on the "Multilingual" set at every measured training scale.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis:**

    * **Label:** "Training Tokens (Billion)"

    * **Scale:** Linear, ranging from approximately 5 to 70 billion.

    * **Major Tick Marks:** 10, 20, 30, 40, 50, 60, 70.

* **Y-Axis:**

    * **Label:** "Performance (%)"

    * **Scale:** Linear, ranging from 30% to slightly above 60% (the final "Verified" point sits above the top tick).

    * **Major Tick Marks:** 30, 35, 40, 45, 50, 55, 60.

* **Legend:**

    * **Position:** Bottom-right corner of the plot area.

    * **Entry 1:** Blue line with circular markers, labeled "SWE-Bench Verified".

    * **Entry 2:** Orange line with circular markers, labeled "SWE-Bench Multilingual".

### Detailed Analysis

**Data Series 1: SWE-Bench Verified (Blue Line)**

* **Trend:** The line shows a steady, generally upward trend with minor fluctuations. It starts just above 50% and ends above 60%.

* **Approximate Data Points (Training Tokens (B), Performance (%)):**

    * (7, 50.5)

    * (13, 52.5)

    * (20, 52.7)

    * (27, 52.3)

    * (33, 54.7)

    * (40, 53.9)

    * (47, 56.0)

    * (53, 58.2)

    * (60, 58.2)

    * (67, 61.2)

**Data Series 2: SWE-Bench Multilingual (Orange Line)**

* **Trend:** The line shows an overall upward trend but with more pronounced volatility compared to the blue line. It starts near 31% and ends near 46%.

* **Approximate Data Points (Training Tokens (B), Performance (%)):**

    * (7, 31.0)

    * (13, 38.5)

    * (20, 39.5)

    * (27, 41.0)

    * (33, 41.0)

    * (40, 40.0)

    * (47, 43.8)

    * (53, 40.7)

    * (60, 40.0)

    * (67, 46.3)
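
The two series above can be redrawn from the approximate data points. The following is a minimal sketch, assuming `matplotlib` is installed; the function name `plot_chart` and the output filename are hypothetical, and the values are the estimates listed above rather than exact source data.

```python
# Approximate data points read from the chart (estimates, not exact values).
TOKENS_B = [7, 13, 20, 27, 33, 40, 47, 53, 60, 67]
VERIFIED = [50.5, 52.5, 52.7, 52.3, 54.7, 53.9, 56.0, 58.2, 58.2, 61.2]
MULTILINGUAL = [31.0, 38.5, 39.5, 41.0, 41.0, 40.0, 43.8, 40.7, 40.0, 46.3]


def plot_chart(path="swe_bench_scaling.png"):
    """Redraw the chart and save it; `path` is a hypothetical filename."""
    # Imported lazily so the data above stays usable without matplotlib.
    import matplotlib
    matplotlib.use("Agg")  # render off-screen; no display required
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(7, 4.5))
    ax.plot(TOKENS_B, VERIFIED, marker="o", color="tab:blue",
            label="SWE-Bench Verified")
    ax.plot(TOKENS_B, MULTILINGUAL, marker="o", color="tab:orange",
            label="SWE-Bench Multilingual")
    ax.set_xlabel("Training Tokens (Billion)")
    ax.set_ylabel("Performance (%)")
    ax.set_xticks(range(10, 71, 10))  # major ticks: 10, 20, ..., 70
    ax.set_yticks(range(30, 61, 5))   # major ticks: 30, 35, ..., 60
    ax.legend(loc="lower right")
    fig.savefig(path, dpi=150, bbox_inches="tight")
    plt.close(fig)
    return path
```

Calling `plot_chart()` writes a PNG that should resemble the original figure; exact colors, sizes, and axis limits in the source image may differ.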

### Key Observations

1. **Performance Gap:** Scores on "SWE-Bench Verified" exceed scores on "SWE-Bench Multilingual" at every measured training token scale. Based on the approximate data points, the gap ranges from about 11 to 20 percentage points: it is widest at 7B tokens and narrowest at 27B tokens.

2. **Scaling Trend:** Both series show a positive correlation between the number of training tokens and performance, suggesting that model capability on these tasks improves with scale.

3. **Volatility Difference:** The "Multilingual" series is more volatile than the "Verified" series. It dips at 40B, 53B, and 60B tokens, with the largest drop (about 3 points) at 53B, whereas the "Verified" series dips by less than 1 point at 27B and 40B tokens.

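4. **Final Surge:** Both series rise sharply in the final segment, from 60B to 67B tokens. For "Verified," this is the steepest increase of any segment (about 3.0 points). For "Multilingual," the gain of about 6.3 points is second only to the initial jump from 7B to 13B tokens (about 7.5 points).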
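
The observations above can be checked directly against the approximate data points. The sketch below computes the per-point gap between the two series, the change over each segment, and the token counts at which a series dips. The helper names are illustrative, and the inputs are chart estimates, so results are only as precise as those readings.

```python
# Approximate data points read from the chart (estimates, not exact values).
TOKENS_B = [7, 13, 20, 27, 33, 40, 47, 53, 60, 67]
VERIFIED = [50.5, 52.5, 52.7, 52.3, 54.7, 53.9, 56.0, 58.2, 58.2, 61.2]
MULTILINGUAL = [31.0, 38.5, 39.5, 41.0, 41.0, 40.0, 43.8, 40.7, 40.0, 46.3]


def gaps(a, b):
    """Point-by-point difference a - b, in percentage points."""
    return [round(x - y, 1) for x, y in zip(a, b)]


def segment_deltas(series):
    """Change between each pair of consecutive measurements."""
    return [round(nxt - cur, 1) for cur, nxt in zip(series, series[1:])]


def steepest_segment(series):
    """Return (start_tokens, end_tokens, delta) for the largest increase."""
    deltas = segment_deltas(series)
    i = max(range(len(deltas)), key=deltas.__getitem__)
    return TOKENS_B[i], TOKENS_B[i + 1], deltas[i]


def dips(series):
    """Token counts at which the score is lower than at the previous point."""
    return [TOKENS_B[i + 1]
            for i, d in enumerate(segment_deltas(series)) if d < 0]


if __name__ == "__main__":
    g = gaps(VERIFIED, MULTILINGUAL)
    print("gap range:", min(g), "to", max(g))
    print("steepest (Verified):", steepest_segment(VERIFIED))
    print("steepest (Multilingual):", steepest_segment(MULTILINGUAL))
    print("dips (Verified):", dips(VERIFIED))
    print("dips (Multilingual):", dips(MULTILINGUAL))
```

Rounding to one decimal place matches the precision of the chart readings and avoids floating-point noise in the differences.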

### Interpretation

The chart illustrates a fundamental scaling relationship in machine learning: increasing the volume of training data (tokens) generally leads to better performance on downstream benchmarks. The consistent performance gap between "SWE-Bench Verified" and "SWE-Bench Multilingual" suggests that the multilingual variant of the task is inherently more challenging for the model, possibly due to the need to generalize across multiple programming languages or handle more diverse codebases.
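
One rough way to quantify this relationship is an ordinary least-squares line through each series. The sketch below is an illustration only: the chart does not specify any functional form, so the straight-line fit is an assumption made for simplicity, the helper name `linear_fit` is hypothetical, and the inputs are the approximate values read from the chart.

```python
from math import sqrt

# Approximate data points read from the chart (estimates, not exact values).
TOKENS_B = [7, 13, 20, 27, 33, 40, 47, 53, 60, 67]
VERIFIED = [50.5, 52.5, 52.7, 52.3, 54.7, 53.9, 56.0, 58.2, 58.2, 61.2]
MULTILINGUAL = [31.0, 38.5, 39.5, 41.0, 41.0, 40.0, 43.8, 40.7, 40.0, 46.3]


def linear_fit(xs, ys):
    """Ordinary least squares: return (slope, intercept, pearson_r)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx                    # percentage points per billion tokens
    intercept = mean_y - slope * mean_x  # fitted score at 0 tokens (extrapolated)
    r = sxy / sqrt(sxx * syy)            # Pearson correlation coefficient
    return slope, intercept, r


if __name__ == "__main__":
    for name, series in (("Verified", VERIFIED),
                         ("Multilingual", MULTILINGUAL)):
        slope, intercept, r = linear_fit(TOKENS_B, series)
        print(f"{name}: slope={slope:.3f} pts/B tokens, r={r:.3f}")
```

A positive slope and a correlation coefficient well above zero for both series are consistent with the upward trend described here; with only ten approximate points per series, the fit should not be extrapolated beyond the measured range.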

The higher volatility in the multilingual series could indicate that performance on this more complex task is more sensitive to the specific composition of the training data at different scales, or that the model's multilingual capability develops less monotonically. The sharp uptick at the end of both lines might signify a phase change in model capability or the effect of a specific training strategy employed at the largest scale, although with only one data point per series it could also reflect ordinary measurement variation.

**Language Note:** All text in the image is in English.