## Diagram: Neural Network Architecture with Skip Connections

### Overview

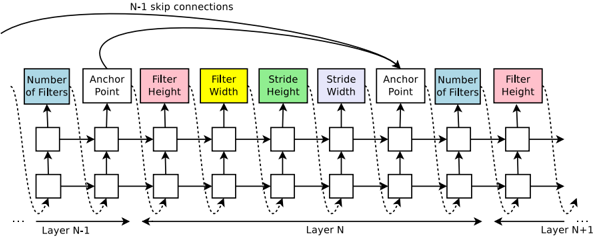

The diagram illustrates a multi-layer neural network architecture with explicit skip connections between layers. It shows data flowing through three sequential layers and how information is carried across non-adjacent layers via dashed "N-1 skip connections." Each layer is described by blocks for number of filters, filter height and width, stride height and width, and anchor points.

### Components

1. **Layers**:

   - Layer N-1 (leftmost)

   - Layer N (middle)

   - Layer N+1 (rightmost)

2. **Processing Units**:

   - Each layer contains identical processing blocks arranged horizontally

   - Blocks are color-coded with specific parameters:

     - **Blue**: Number of Filters

     - **White**: Anchor Point

     - **Pink**: Filter Height

     - **Yellow**: Filter Width

     - **Green**: Stride Height

     - **Purple**: Stride Width

     - **Dashed Lines**: N-1 Skip Connections

3. **Flow Direction**:

   - Primary data flow: Left to right through sequential layers

   - Skip connections: Dashed arrows from Layer N-1 to Layer N+1

### Detailed Analysis

1. **Layer Structure**:

   - All layers share identical block configurations

   - Block sequence per layer:

     1. Number of Filters (blue)

     2. Anchor Point (white)

     3. Filter Height (pink)

     4. Filter Width (yellow)

     5. Stride Height (green)

     6. Stride Width (purple)

     7. Anchor Point (white)

     8. Number of Filters (blue)

2. **Skip Connection Pattern**:

   - Dashed lines connect:

     - Layer N-1's "Number of Filters" → Layer N+1's "Number of Filters"

     - Layer N-1's "Anchor Point" → Layer N+1's "Anchor Point"

   - Together these connections form a pathway that bypasses Layer N

3. **Parameter Relationships**:

   - Filter dimensions and stride dimensions each appear as an adjacent height/width pair of blocks

   - Anchor points sit between the Number of Filters blocks and the filter/stride blocks, at both ends of the sequence

   - Number of Filters appears as both the first and the last block of each layer's sequence
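The layer parameters and the skip pattern described above can be written down as a small data structure. This is a minimal sketch, not the diagram's own notation: the names `LayerSpec`, `NetworkSpec`, `add_skip`, and `inputs_of` are hypothetical, the numeric values are placeholders, and anchor points are modeled only implicitly as the endpoints of skip connections.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class LayerSpec:
    """One layer's parameters, named after the diagram's blocks (hypothetical type)."""
    num_filters: int
    filter_height: int
    filter_width: int
    stride_height: int
    stride_width: int


@dataclass
class NetworkSpec:
    """A sequence of layers plus skip connections between non-adjacent layers."""
    layers: List[LayerSpec]
    # Each (src, dst) pair is a skip connection from layer index src to layer index dst.
    skips: List[Tuple[int, int]] = field(default_factory=list)

    def add_skip(self, src: int, dst: int) -> None:
        if not (0 <= src < dst < len(self.layers)):
            raise ValueError("skip must point forward to an existing layer")
        if dst - src < 2:
            raise ValueError("a skip must bypass at least one layer")
        self.skips.append((src, dst))

    def inputs_of(self, dst: int) -> List[int]:
        """Indices of layers feeding layer dst: the previous layer, then any skips."""
        sources = [dst - 1] if dst > 0 else []
        sources += [s for s, d in self.skips if d == dst]
        return sources


# Placeholder values; the diagram does not give concrete numbers.
spec = LayerSpec(num_filters=32, filter_height=3, filter_width=3,
                 stride_height=1, stride_width=1)
net = NetworkSpec(layers=[spec, spec, spec])  # Layer N-1, Layer N, Layer N+1
net.add_skip(0, 2)                            # dashed line from N-1 to N+1
print(net.inputs_of(2))  # [1, 0]
```

Keeping skips as explicit index pairs makes the bypass of Layer N visible in the data: Layer N+1 has two sources, while Layer N has only one.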

### Key Observations

1. **Architectural Symmetry**:

   - Identical block configurations across all layers suggest uniform processing

   - Skip connections link matching blocks in Layer N-1 and Layer N+1, creating diagonal shortcuts around Layer N

2. **Dimensionality Control**:

   - Stride parameters (green/purple) likely control how much the spatial resolution is reduced

   - Filter parameters (pink/yellow) set the kernel size; the resulting feature map size depends on kernel size, stride, and padding together

3. **Information Preservation**:

   - Skip connections give activations and gradients a direct path around Layer N

   - Such paths are commonly used to mitigate vanishing gradients when training deep networks
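The stride and filter observations can be made concrete with standard convolution arithmetic. The sketch below assumes an ordinary 2D convolution without dilation and with symmetric padding; the diagram itself does not show padding, and the function names and input sizes are illustrative only.

```python
def conv_output_size(in_size: int, kernel: int, stride: int, padding: int = 0) -> int:
    """Output length along one spatial axis for a convolution without dilation."""
    if kernel < 1 or stride < 1 or padding < 0:
        raise ValueError("kernel and stride must be >= 1, padding must be >= 0")
    if in_size + 2 * padding < kernel:
        raise ValueError("kernel does not fit inside the padded input")
    return (in_size + 2 * padding - kernel) // stride + 1


def conv_output_shape(in_h, in_w, filter_height, filter_width,
                      stride_height, stride_width, num_filters, padding=0):
    """(height, width, channels) of the feature map produced by one layer."""
    out_h = conv_output_size(in_h, filter_height, stride_height, padding)
    out_w = conv_output_size(in_w, filter_width, stride_width, padding)
    return (out_h, out_w, num_filters)


# Same 3x3 filter, different strides: the stride drives the resolution change.
print(conv_output_shape(32, 32, 3, 3, 1, 1, 16))  # (30, 30, 16)
print(conv_output_shape(32, 32, 3, 3, 2, 2, 16))  # (15, 15, 16)
```

With the filter size held fixed, doubling the stride roughly halves each spatial dimension, while the number of filters only sets the channel count.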

### Interpretation

This architecture demonstrates a hybrid approach combining:

1. **Sequential Processing**: Standard CNN-like feature extraction through filters and strides

2. **Residual Learning**: Skip connections allow networks to learn residual functions

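3. **Anchor Point Integration**: The diagram does not define anchor points. Because they are endpoints of the dashed skip connections, they may mark where skip connections attach; object detection or spatial anchoring is another possible reading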
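As a rough illustration of the residual pathway listed above, the following sketch runs three layers in sequence and merges the output of Layer N-1 back in before Layer N+1. The merge by elementwise addition is an assumption (the diagram does not say whether the skip is added or concatenated), and `forward_with_skip` and the toy layer functions are hypothetical stand-ins for real layers.

```python
from typing import Callable, List

Vector = List[float]
Layer = Callable[[Vector], Vector]


def forward_with_skip(x: Vector, layer_n_minus_1: Layer,
                      layer_n: Layer, layer_n_plus_1: Layer) -> Vector:
    """Run three layers in order, adding Layer N-1's output to Layer N+1's input."""
    a = layer_n_minus_1(x)
    b = layer_n(a)
    if len(a) != len(b):
        raise ValueError("additive skip requires matching shapes")
    # Assumed merge rule: elementwise addition of the skipped and the direct path.
    merged = [ai + bi for ai, bi in zip(a, b)]
    return layer_n_plus_1(merged)


# Toy layers: even if Layer N outputs zeros, Layer N-1's signal still reaches N+1.
def double(v: Vector) -> Vector:
    return [2.0 * t for t in v]


def zero(v: Vector) -> Vector:
    return [0.0 for _ in v]


def identity(v: Vector) -> Vector:
    return list(v)


out = forward_with_skip([1.0, 2.0], double, zero, identity)
print(out)  # [2.0, 4.0]
```

Because the skipped signal is added to whatever Layer N produces, Layer N only has to learn a correction on top of Layer N-1's output, which is the sense in which the block learns a residual function.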

The design prioritizes:

- **Training Stability**: Through skip connections maintaining gradient pathways

- **Feature Reusability**: Skip connections pass Layer N-1 features on to Layer N+1, and layer configurations are identical across depths

- **Spatial Control**: Explicit stride and filter dimension parameters

Notable patterns include the consistent parameter ordering across layers and the strategic placement of skip connections between equivalent processing stages. The architecture appears optimized for both feature extraction depth and information preservation across layers.