## Horizontal Bar Chart: Logical Consistency of AI Models

### Overview

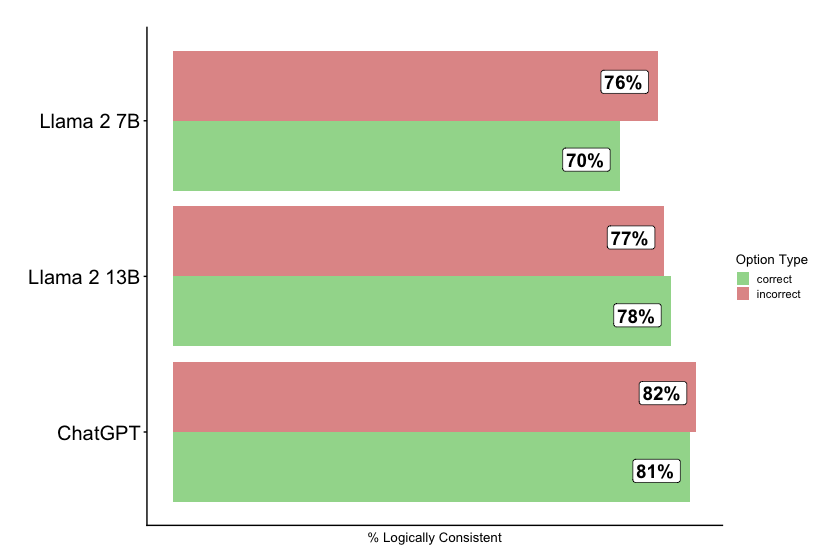

The image is a horizontal bar chart comparing the percentage of logically consistent outputs for three large language models: Llama 2 7B, Llama 2 13B, and ChatGPT. Each model's score is split into two option types: "correct" and "incorrect".

### Components/Axes

* **Chart Type:** Horizontal grouped bar chart.

* **Y-Axis (Vertical):** Lists the three AI models being compared. From top to bottom: "Llama 2 7B", "Llama 2 13B", "ChatGPT".

* **X-Axis (Horizontal):** Labeled "% Logically Consistent". It represents a percentage scale, though specific numerical markers on the axis are not visible. The bars extend from left to right.

* **Legend:** Positioned on the right side of the chart, titled "Option Type". It defines the two data series:

    * A green square labeled "correct".

    * A red/salmon square labeled "incorrect".

* **Data Labels:** Each bar segment has a white box with black text displaying its exact percentage value.

### Detailed Analysis

The chart presents two data points for each model, representing the percentage of responses deemed logically consistent within the "correct" and "incorrect" categories.

**1. Llama 2 7B (Top Group)**

* **Incorrect (Red Bar):** The top bar in this group. It extends further to the right and is labeled **76%**.

* **Correct (Green Bar):** The bottom bar in this group. It is shorter than the red bar and is labeled **70%**.

* **Trend:** The "incorrect" category has a higher logical consistency score than the "correct" category for this model.

**2. Llama 2 13B (Middle Group)**

* **Incorrect (Red Bar):** The top bar. It is labeled **77%**.

* **Correct (Green Bar):** The bottom bar. It is slightly longer than the red bar and is labeled **78%**.

* **Trend:** The scores are very close, with the "correct" category having a marginally higher logical consistency score.

**3. ChatGPT (Bottom Group)**

* **Incorrect (Red Bar):** The top bar. It is the longest red bar in the chart and is labeled **82%**.

* **Correct (Green Bar):** The bottom bar. It is slightly shorter than the red bar and is labeled **81%**.

* **Trend:** Both scores are the highest among the three models, with the "incorrect" category scoring slightly higher.

### Key Observations

1. **Performance Hierarchy:** ChatGPT demonstrates the highest logical consistency percentages in both categories (81-82%), followed by Llama 2 13B (77-78%), and then Llama 2 7B (70-76%).

2. **Category Comparison:** The direction of the gap is not uniform across models. The "incorrect" score is higher for Llama 2 7B (76% vs. 70%) and ChatGPT (82% vs. 81%), while the "correct" score is higher for Llama 2 13B (78% vs. 77%). Only the Llama 2 7B gap exceeds one point.

3. **Narrowing Gap:** The absolute difference between the "correct" and "incorrect" percentages drops from 6 points for Llama 2 7B to 1 point for Llama 2 13B, and stays at 1 point for ChatGPT. The gap therefore narrows only between the two Llama models; it does not shrink further for ChatGPT.

4. **High Baseline:** All logical consistency scores are relatively high, ranging from 70% to 82%, suggesting the evaluation metric or task may yield consistently high scores across these models.
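The arithmetic behind these observations can be checked with a short sketch. The percentages are the data labels read from the chart; the names `scores` and `signed_gap` are illustrative only and do not come from the source of the figure.

```python
# Percentages read from the chart's data labels (Option Type: correct / incorrect).
scores = {
    "Llama 2 7B": {"correct": 70, "incorrect": 76},
    "Llama 2 13B": {"correct": 78, "incorrect": 77},
    "ChatGPT": {"correct": 81, "incorrect": 82},
}


def signed_gap(model_scores):
    """Return the "incorrect" score minus the "correct" score, in percentage points."""
    return model_scores["incorrect"] - model_scores["correct"]


# Positive gap: "incorrect" is higher; negative gap: "correct" is higher.
gaps = {model: signed_gap(s) for model, s in scores.items()}

# Flatten all six values to find the overall range.
all_values = [value for s in scores.values() for value in s.values()]

for model, gap in gaps.items():
    print(f"{model}: gap = {gap:+d} points")
print(f"range: {min(all_values)}% to {max(all_values)}%")
```

A signed gap is used here rather than an absolute one so that the direction of the difference (which option type scores higher) stays visible alongside its size.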

### Interpretation

This chart likely visualizes results from a benchmark testing the logical reasoning or consistency of AI model outputs. The "correct" and "incorrect" labels probably refer to the model's final answer being right or wrong, while the "% Logically Consistent" metric evaluates the soundness of the reasoning steps provided, regardless of the final answer's correctness.

The data suggests a few key insights:

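* **Model Scaling Improves Consistency:** Moving from Llama 2 7B to the larger 13B version raises the logical consistency score for both option types, but unevenly: by 8 points for correct answers (70% to 78%) and by only 1 point for incorrect answers (76% to 77%). This suggests that model scale contributes to more coherent reasoning, mainly where the final answer is correct.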

* **ChatGPT Leads in Reasoning Coherence:** ChatGPT exhibits the highest level of logical consistency in its reasoning processes, whether its final answer is correct or not.

* **The "Incorrect" Paradox:** The fact that "incorrect" answers can have high logical consistency (e.g., 82% for ChatGPT) is significant. It implies that models can construct logically sound arguments that lead to wrong conclusions. This highlights a critical challenge in AI evaluation: a model can be persuasive and logically structured yet factually wrong.

* **Benchmark Design:** The high scores across the board (all 70% or higher) may indicate that the specific benchmark used is not highly discriminative for these models, or that logical consistency is a relative strength of current LLMs. The smaller gap between correct and incorrect consistency in Llama 2 13B and ChatGPT might suggest that their errors become more subtle and logically defended.