## Heatmap Grid: AI Model Head Importance by Cognitive Task

### Overview

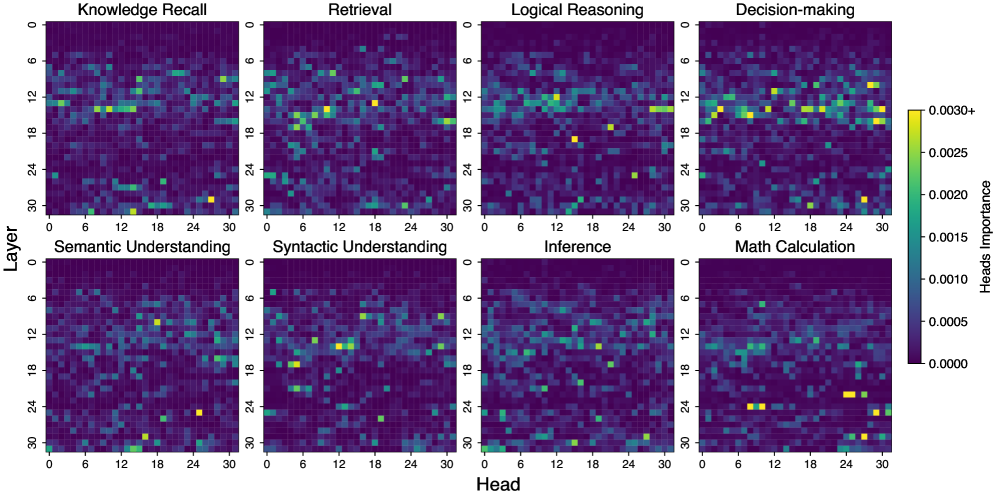

The image displays a grid of eight heatmaps arranged in two rows and four columns. Each heatmap visualizes the "importance" of different attention heads (across layers) within an AI model for a specific cognitive task. The overall purpose is to show which parts of the model (specific layer-head combinations) are most critical for different types of reasoning and understanding.

### Components/Axes

* **Grid Structure:** 8 individual heatmaps in a 2x4 layout.

* **Subplot Titles (Top Row, Left to Right):** "Knowledge Recall", "Retrieval", "Logical Reasoning", "Decision-making".

* **Subplot Titles (Bottom Row, Left to Right):** "Semantic Understanding", "Syntactic Understanding", "Inference", "Math Calculation".

* **Common Y-Axis (Leftmost plots):** Labeled "Layer". Scale runs from 0 at the top to 30 at the bottom, with major ticks at 0, 6, 12, 18, 24, 30.

* **Common X-Axis (Bottom plots):** Labeled "Head". Scale runs from 0 on the left to 30 on the right, with major ticks at 0, 6, 12, 18, 24, 30.

* **Color Scale/Legend (Far Right):** A vertical color bar titled "Heads Importance". The scale is continuous:

  * Dark Purple/Black: 0.0000

  * Teal/Green: ~0.0010 to 0.0020

  * Yellow: 0.0025 to 0.0030+ (brightest yellow indicates highest importance).

### Detailed Analysis

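Each heatmap is a layer-by-head grid. The tick labels span indices 0 to 30 on both axes, which suggests roughly 31x31 cells, although the exact dimensions cannot be confirmed from the ticks alone. The color of each cell represents the importance value of that layer-head pair for the given task.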

**General Pattern Across All Heatmaps:**

* The background is predominantly dark purple, indicating most layer-head pairs have very low importance (~0.0000) for any given task.

* Importance is highly localized. Scattered "hotspots" of higher importance (teal to yellow) appear, but they are sparse and do not form large, continuous regions.

* The distribution of hotspots varies significantly between tasks, suggesting functional specialization within the model.
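
The sparsity described above can be made concrete by counting how many layer-head cells exceed an importance threshold. The following is a minimal sketch, assuming the scores are available as a nested list indexed as `importance[layer][head]`; the function name `fraction_above`, the threshold, and the toy matrix are illustrative assumptions, not values read from the figure.

```python
def fraction_above(importance, threshold):
    """Return the share of layer-head cells whose score exceeds threshold."""
    cells = [value for row in importance for value in row]
    return sum(value > threshold for value in cells) / len(cells)


# Synthetic 4x4 example (rows = layers, columns = heads).
# These numbers are made up for illustration only.
toy = [
    [0.0, 0.0,    0.0,    0.0],
    [0.0, 0.0028, 0.0,    0.0],
    [0.0, 0.0,    0.0012, 0.0],
    [0.0, 0.0,    0.0,    0.0],
]

print(fraction_above(toy, threshold=0.001))  # 2 of 16 cells -> 0.125
```

A small fraction at a threshold near the teal range of the color bar would match the visual impression of a mostly dark background with isolated hotspots.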

**Task-Specific Observations** (coordinates are approximate (Layer, Head) positions read from the image):

1. **Knowledge Recall:** Hotspots are scattered. Notable yellow spots appear around (Layer ~12, Head ~12) and (Layer ~28, Head ~24).

2. **Retrieval:** Shows a cluster of moderate-to-high importance (teal/yellow) in the central region, roughly between Layers 12-18 and Heads 6-18.

3. **Logical Reasoning:** Has several distinct yellow hotspots. One prominent spot is near (Layer 15, Head 18). Another is around (Layer 12, Head 28).

4. **Decision-making:** Exhibits a higher density of teal and yellow spots than the other panels, particularly in the upper half of the plot (Layers 0-15, the earlier layers). A bright yellow spot is visible at approximately (Layer 12, Head 6).

5. **Semantic Understanding:** Hotspots are sparse. A clear yellow spot is located near (Layer 10, Head 12). Another is at (Layer 24, Head 24).

6. **Syntactic Understanding:** Shows a notable concentration of activity in the center-left. A bright yellow spot is at (Layer 15, Head 12).

7. **Inference:** Appears to have the fewest high-importance (yellow) spots. Most activity is low-level (teal), with a slightly denser region around Layers 12-18.

8. **Math Calculation:** Displays a very distinct pattern. High-importance yellow spots are concentrated toward the bottom of the plot, that is, in the deeper layers (higher layer indices), specifically around (Layer 24, Head 9) and (Layer 27, Head 27). This makes it a clear outlier in spatial distribution compared to the more distributed patterns of the other tasks.
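
The hotspot coordinates listed above were read off the image by eye. If the underlying scores were available, the same locations could be extracted programmatically. Here is a minimal sketch, assuming a nested list indexed as `importance[layer][head]`; the helper name `top_k_heads` and the toy values are hypothetical.

```python
import heapq


def top_k_heads(importance, k):
    """Return the k highest-scoring cells as (layer, head, score), best first."""
    cells = [
        (score, layer, head)
        for layer, row in enumerate(importance)
        for head, score in enumerate(row)
    ]
    best = heapq.nlargest(k, cells)  # compares by score first
    return [(layer, head, score) for score, layer, head in best]


# Synthetic 3x3 example; values are made up, not taken from the figure.
toy = [
    [0.0,    0.0001, 0.0],
    [0.0,    0.0029, 0.0],
    [0.0015, 0.0,    0.0],
]

for layer, head, score in top_k_heads(toy, k=2):
    print(f"Layer {layer}, Head {head}: {score:.4f}")
```

Running this on each task's matrix would give an exact list of (Layer, Head) pairs to compare against the approximate positions above.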

### Key Observations

* **Functional Specialization:** Different cognitive tasks rely on distinct, sparse sets of attention heads. No single region stands out as important across all eight tasks, so the figure shows no obvious "general reasoning" area.

* **Layer-Head Specificity:** Importance is not uniform across a layer or a head index; it is highly specific to the combination (e.g., Layer 12, Head 12 is important for Knowledge Recall but not necessarily for Math Calculation).

* **Math Calculation Anomaly:** The importance pattern for math is uniquely concentrated in the deeper layers (higher layer indices, plotted lower in each heatmap), whereas other tasks show more mid-layer (Layers 10-20) activity.

* **Decision-making Density:** The "Decision-making" task appears to engage a broader set of heads more intensely than tasks like "Inference."
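
Two of these observations lend themselves to simple numerical checks: how much the important heads of two tasks overlap, and where along the layer axis a task's importance is concentrated. The sketch below is one possible way to quantify both, assuming each task has a nested list `importance[layer][head]`. The function names, the choice of Jaccard overlap, and the toy matrices are assumptions for illustration, not the method used to produce the figure.

```python
def top_set(importance, k):
    """Return the set of (layer, head) pairs with the k largest scores."""
    cells = [
        (score, layer, head)
        for layer, row in enumerate(importance)
        for head, score in enumerate(row)
    ]
    cells.sort(reverse=True)
    return {(layer, head) for _, layer, head in cells[:k]}


def jaccard(a, b):
    """Overlap of two sets: 0.0 means disjoint, 1.0 means identical."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def mean_layer(importance):
    """Importance-weighted average layer index, or None if all scores are 0."""
    total = sum(sum(row) for row in importance)
    if total == 0:
        return None
    weighted = sum(layer * sum(row) for layer, row in enumerate(importance))
    return weighted / total


# Synthetic 4-layer, 2-head examples; values are made up.
task_a = [[0.0, 0.0], [0.003, 0.0], [0.0, 0.0], [0.0, 0.0]]  # early hotspot
task_b = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0], [0.0, 0.003]]  # late hotspot

print(jaccard(top_set(task_a, 1), top_set(task_b, 1)))
print(mean_layer(task_a), mean_layer(task_b))
```

A low overlap between tasks would support the specialization reading, and a noticeably higher weighted mean layer for Math Calculation than for the other tasks would match the concentration at higher layer numbers noted above.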

### Interpretation

This visualization provides a Peircean map of the model's internal functional organization. The **icon** is the heatmap grid itself, representing the model's architecture. The **index** is the spatial location of the hotspots, pointing to specific computational units (layer-head pairs). The **symbol** is the assigned task label (e.g., "Logical Reasoning").

The data suggests that the model has developed **modular, distributed expertise**. Rather than a monolithic processor, it uses specialized micro-circuits (specific heads in specific layers) for different cognitive operations. The stark difference in the "Math Calculation" pattern implies that mathematical processing may rely on a fundamentally different or more localized computational pathway within the model compared to linguistic or reasoning tasks.

The sparsity of the hotspots points to strong **specialization**: only a tiny fraction of the model's attention heads is critically important for any single task. This has implications for model interpretability and editing: to influence a specific capability, one might target these identified sparse components rather than the entire model. The variation in patterns across tasks underscores the complexity of artificial cognition and is at odds with the notion of a single, unified "reasoning engine" within such models.
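
As an illustration of what targeting these sparse components could look like, the sketch below builds a layers-by-heads mask that zeroes out a chosen set of heads while leaving the rest untouched. It is a hedged example only: `build_head_mask` is a hypothetical helper, the dimensions and the ablated pair are arbitrary, and applying the mask inside a model's attention computation is framework-specific and not shown.

```python
def build_head_mask(n_layers, n_heads, heads_to_ablate):
    """Return an n_layers x n_heads mask: 0.0 for ablated heads, 1.0 otherwise."""
    mask = [[1.0] * n_heads for _ in range(n_layers)]
    for layer, head in heads_to_ablate:
        if not (0 <= layer < n_layers and 0 <= head < n_heads):
            raise ValueError(f"head ({layer}, {head}) is out of range")
        mask[layer][head] = 0.0
    return mask


# Arbitrary toy dimensions and target; not taken from the figure.
mask = build_head_mask(n_layers=3, n_heads=4, heads_to_ablate=[(1, 2)])
for row in mask:
    print(row)
```

Comparing task performance with and without such a mask is one common way to test whether the heads flagged as important actually matter for that task.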