
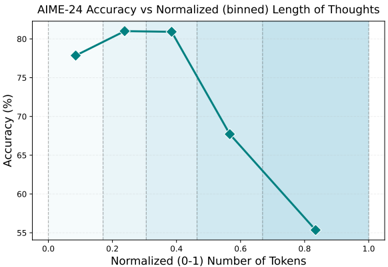

## Line Chart: AIME-24 Accuracy vs Normalized Length of Thoughts

### Overview

This image presents a line chart of AIME-24 accuracy against the normalized (binned) length of thoughts, measured in tokens. It shows how accuracy changes as the normalized length of thought increases from 0.0 to 1.0.

### Components/Axes

* **Title:** AIME-24 Accuracy vs Normalized (binned) Length of Thoughts

* **X-axis:** Normalized (0-1) Number of Tokens. The axis ranges from 0.0 to 1.0, with markers at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Y-axis:** Accuracy (%). The axis ranges from approximately 55% to 82%, with markers at 55%, 60%, 65%, 70%, 75%, 80%, and 82%.

* **Data Series:** A single teal line representing AIME-24 accuracy.

* **Background:** A light blue shaded region covers the majority of the chart area, from approximately x=0.4 to x=1.0.
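The chart does not say how token counts were normalized or binned. The sketch below shows one way an accuracy-per-bin curve like this could be produced. It assumes min-max normalization of each response's token count into [0, 1], followed by equal-width bins. The helper `binned_accuracy` and its parameters are hypothetical and are not taken from the source.

```python
from typing import List, Sequence, Tuple


def binned_accuracy(
    lengths: Sequence[int],
    correct: Sequence[bool],
    num_bins: int = 5,
) -> List[Tuple[float, float, int]]:
    """Return (bin_left_edge, accuracy_percent, count) for each non-empty bin.

    Hypothetical helper: token counts are min-max normalized to [0, 1] and
    split into equal-width bins. The chart's actual procedure is not stated.
    """
    if len(lengths) != len(correct) or not lengths:
        raise ValueError("lengths and correct must be non-empty and of equal length")
    lo, hi = min(lengths), max(lengths)
    span = hi - lo
    hits = [0] * num_bins
    totals = [0] * num_bins
    for n, ok in zip(lengths, correct):
        # Min-max normalization; if every length is equal, map all of them to 0.0.
        x = (n - lo) / span if span else 0.0
        # Equal-width bins; x == 1.0 is placed in the last bin instead of overflowing.
        b = min(int(x * num_bins), num_bins - 1)
        totals[b] += 1
        hits[b] += int(ok)
    # Report accuracy as a percentage, skipping bins that received no responses.
    return [
        (b / num_bins, 100.0 * hits[b] / totals[b], totals[b])
        for b in range(num_bins)
        if totals[b]
    ]
```

Equal-width bins over the normalized range keep the x-axis comparable across runs. However, bins can end up with very different sample counts, so the helper also returns each bin's count alongside its accuracy.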

### Detailed Analysis

The line representing AIME-24 accuracy exhibits a non-linear trend.

* **Initial Increase:** From x=0.0 to x=0.2, the line slopes upward.
  * At x=0.0, the accuracy is approximately 77%.
  * At x=0.2, the accuracy reaches a peak of approximately 81%.

* **Plateau:** From x=0.2 to x=0.4, the line remains relatively flat, indicating stable accuracy.
  * At x=0.4, the accuracy is approximately 81%.

* **Rapid Decrease:** From x=0.4 to x=0.8, the line slopes downward sharply.
  * At x=0.6, the accuracy decreases to approximately 68%.
  * At x=0.8, the accuracy drops to approximately 55%.

* **Continued Decrease:** From x=0.8 to x=1.0, the line continues to slope downward, but at a slower rate.
  * At x=1.0, the accuracy is approximately 54%.
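The approximate readings listed above can be tabulated and differenced to check the described segments. The values are the approximate chart readings from this description, not underlying data. The function names are illustrative.

```python
# Approximate readings from the chart description:
# x = normalized length of thought, y = AIME-24 accuracy in %.
readings = [(0.0, 77), (0.2, 81), (0.4, 81), (0.6, 68), (0.8, 55), (1.0, 54)]


def segment_changes(points):
    """Return (x_start, x_end, change_in_percentage_points) for consecutive readings."""
    return [
        (x0, x1, y1 - y0)
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    ]


def peak(points):
    """Return the first (x, y) reading with the highest accuracy."""
    # max() returns the first maximal element, so ties resolve to the smaller x.
    return max(points, key=lambda p: p[1])


if __name__ == "__main__":
    for x0, x1, dy in segment_changes(readings):
        print(f"{x0:.1f} -> {x1:.1f}: {dy:+d} pp")
    print("peak:", peak(readings))
```

The per-segment changes come out to +4, 0, -13, -13 and -1 percentage points. This matches the description: a short rise, a plateau, a steep drop between 0.4 and 0.8, and a much gentler decline from 0.8 to 1.0. The peak resolves to x=0.2 only because it is the first of two readings at approximately 81%.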

### Key Observations

* The highest accuracy (approximately 81%) is reached at a normalized token length of about 0.2 and holds through about 0.4.

* Accuracy falls sharply once the normalized token length exceeds 0.4, from approximately 81% to approximately 55% at 0.8.

* There appears to be an optimal length of thought on AIME-24, beyond which performance degrades.

### Interpretation

The data suggests that the model performs best on AIME-24 when its thoughts are relatively short, at a normalized length of around 0.2 to 0.4. These values are positions on the normalized 0-1 scale, not raw token counts. As the length of thought grows beyond that range, accuracy declines, potentially indicating that the model struggles with longer, more complex reasoning chains. The initial increase in accuracy could reflect a minimum amount of reasoning the model needs to work through a problem. The subsequent decrease might be caused by issues such as information overload, increased noise, or difficulty maintaining coherence over longer sequences.

The shaded background may mark a region where the model's performance is particularly sensitive to changes in token length. Taken together, the chart suggests that keeping the length of thought concise can lead to better results on AIME-24.