TECHNICAL ASSET FINGERPRINT

e01e3008bfccc366a74f1b17

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

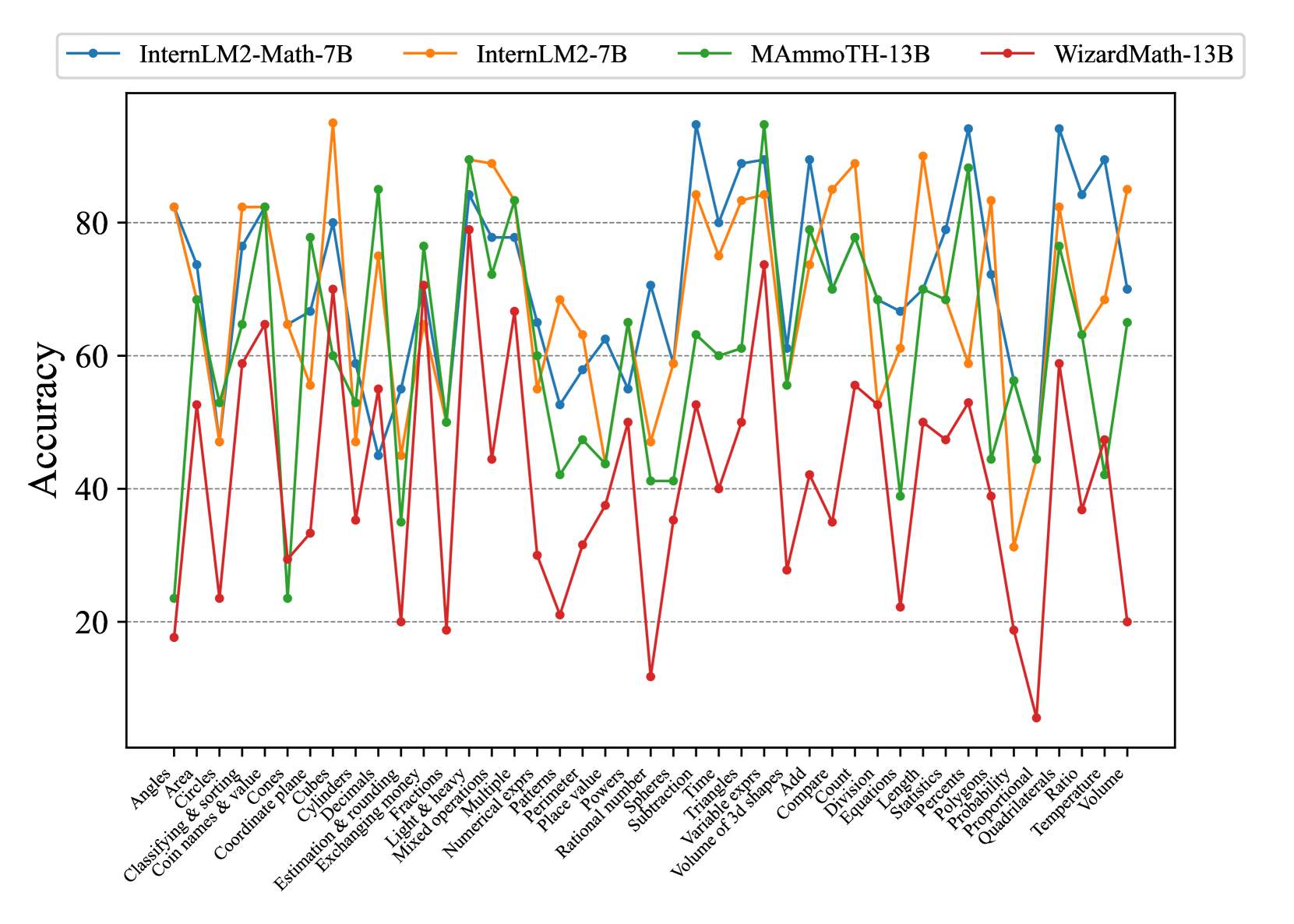

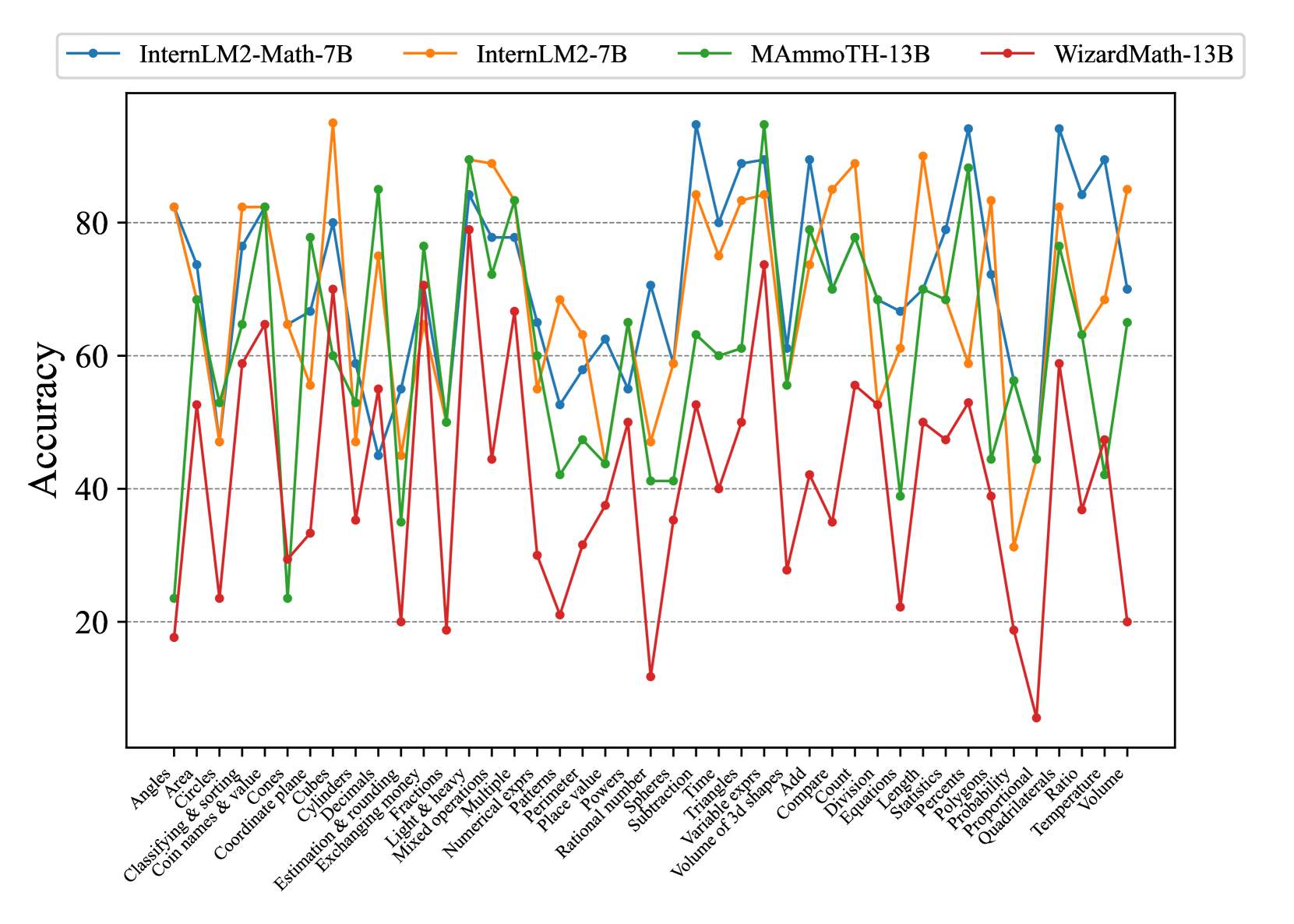

## Line Chart: Model Accuracy on Math Problems

### Overview

The image is a line chart comparing the accuracy of four different language models (InternLM2-Math-7B, InternLM2-7B, MAmmoTH-13B, and WizardMath-13B) on a variety of math problem types. The x-axis represents different math categories, and the y-axis represents the accuracy score.

### Components/Axes

* **Title:** None explicitly present in the image.

* **X-axis:** Math problem categories (listed below). The labels are rotated for readability.

* **Y-axis:** Accuracy, with labeled ticks at 20, 40, 60, and 80; some plotted values fall outside the labeled range.

* **Legend:** Located at the top of the chart.
    * Blue: InternLM2-Math-7B
    * Orange: InternLM2-7B
    * Green: MAmmoTH-13B
    * Red: WizardMath-13B

* **Gridlines:** Horizontal dashed lines at each y-axis increment (20, 40, 60, 80).

### Detailed Analysis

**Math Problem Categories (X-Axis):**

1. Angles

2. Area

3. Circles

4. Classifying & sorting

5. Coin names & value

6. Cones

7. Coordinate plane

8. Cubes

9. Cylinders

10. Decimals

11. Estimation & rounding

12. Exchanging money

13. Fractions

14. Light & heavy

15. Mixed operations

16. Multiple

17. Numerical exprs

18. Patterns

19. Perimeter

20. Place value

21. Powers

22. Rational number

23. Spheres

24. Subtraction

25. Time

26. Triangles

27. Variable exprs

28. Volume of 3d shapes

29. Add

30. Compare

31. Count

32. Division

33. Equations

34. Length

35. Percents

36. Polygons

37. Probability

38. Proportional

39. Quadrilaterals

40. Ratio

41. Statistics

42. Temperature

43. Volume

**Model Performance Trends and Approximate Values:**

* **InternLM2-Math-7B (Blue):** Generally performs well, often achieving the highest accuracy among the models. It shows strong performance in categories like "Subtraction" (accuracy ~93%) and "Volume of 3d shapes" (accuracy ~90%). It dips in "Place Value" (accuracy ~58%) and "Ratio" (accuracy ~52%).

* **InternLM2-7B (Orange):** Performance is generally high, but slightly lower than InternLM2-Math-7B. It peaks in "Area" (accuracy ~82%) and "Multiple" (accuracy ~70%). It dips in "Spheres" (accuracy ~60%) and "Probability" (accuracy ~30%).

* **MAmmoTH-13B (Green):** Shows variable performance across categories. It excels in "Cubes" (accuracy ~82%) and "Volume" (accuracy ~70%). It has lower accuracy in "Decimals" (accuracy ~24%) and "Subtraction" (accuracy ~42%).

* **WizardMath-13B (Red):** Exhibits the most fluctuating performance, with some very low accuracy scores. It peaks in "Fractions" (accuracy ~70%) and "Add" (accuracy ~58%). It struggles significantly in "Light & heavy" (accuracy ~10%) and "Quadrilaterals" (accuracy ~10%).

### Key Observations

* InternLM2-Math-7B consistently achieves high accuracy across most math problem types.

* WizardMath-13B has the widest range of performance, with both high and very low accuracy scores.

* There is significant variation in model performance across different math categories, suggesting that some problem types are more challenging than others.

* The models show varying strengths and weaknesses depending on the specific math category.

### Interpretation

The line chart provides a comparative analysis of the accuracy of four language models on a diverse set of math problems. The data suggests that InternLM2-Math-7B is the most consistently accurate model overall. WizardMath-13B, while showing potential in some areas, is the least reliable due to its significant performance fluctuations. The varying performance across different math categories highlights the specific strengths and weaknesses of each model, indicating areas where further improvement is needed. The chart demonstrates the importance of evaluating language models on a wide range of tasks to gain a comprehensive understanding of their capabilities.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Line Chart: Math Problem Accuracy by Model

### Overview

This line chart compares the accuracy of four different large language models – InternLM2-Math-7B, InternLM2-7B, MAmmoTH-13B, and WizardMath-13B – on a variety of math problems. The x-axis represents different math problem categories, and the y-axis represents the accuracy score, ranging from approximately 0 to 90. The chart displays the performance of each model as a line, allowing for a visual comparison of their strengths and weaknesses across different problem types.

### Components/Axes

* **X-axis Title:** Math Problem Categories

* **Y-axis Title:** Accuracy

* **Y-axis Scale:** 0 to 90, with increments of 10.

* **Legend:** Located at the top-center of the chart.
    * InternLM2-Math-7B (Blue Line)
    * InternLM2-7B (Green Line)
    * MAmmoTH-13B (Yellow Line)
    * WizardMath-13B (Red Line)

* **X-axis Categories:** Angles, Circles, Classifying & Sorting, Coordinate Plane, Cubes, Cylinders, Declination & Rounding, Estimation & Rounding, Exchanging Money, Fractions, Light & Heavy, Mixed Operations, Numerical Exprs, Patterns, Place Value, Place Powers, Spheres, Subtraction, Time, Triangles, Variable Exprs, Volume of 3D Shapes, Add, Count, Division, Equation, Length, Percents, Polygons, Probability, Proportionality, Quadrilaterals, Ratio, Temperature, Volume.

### Detailed Analysis

Here's a breakdown of each model's performance, based on the visual trends and approximate data points:

* **InternLM2-Math-7B (Blue):** Starts with a high accuracy of approximately 85 for "Angles", then dips to around 50 for "Circles", and fluctuates between 60-80 for most categories. It shows a relatively stable performance, with a slight downward trend towards the end, finishing around 25 for "Volume".

* **InternLM2-7B (Green):** Begins at approximately 20 for "Angles", rises to a peak of around 80 for "Coordinate Plane", then generally declines, with fluctuations. It ends at approximately 20 for "Volume".

* **MAmmoTH-13B (Yellow):** Starts at around 60 for "Angles", shows a peak of approximately 85 for "Coordinate Plane", and then generally declines, with significant fluctuations. It finishes at approximately 20 for "Volume".

* **WizardMath-13B (Red):** Starts at approximately 20 for "Angles", rises to a peak of around 85 for "Coordinate Plane", then generally declines, with fluctuations. It ends at approximately 20 for "Volume".

**Specific Data Points (Approximate):**

| Category | InternLM2-Math-7B | InternLM2-7B | MAmmoTH-13B | WizardMath-13B |
| --------------------- | ----------------- | ------------ | ----------- | -------------- |
| Angles | 85 | 20 | 60 | 20 |
| Circles | 50 | 30 | 40 | 30 |
| Classifying & Sorting | 70 | 40 | 60 | 40 |
| Coordinate Plane | 75 | 80 | 85 | 85 |
| Cubes | 65 | 50 | 65 | 60 |
| Cylinders | 60 | 40 | 50 | 45 |
| Declination & Rounding | 70 | 60 | 70 | 65 |
| Estimation & Rounding | 65 | 50 | 60 | 55 |
| Exchanging Money | 70 | 60 | 70 | 65 |
| Fractions | 60 | 50 | 55 | 50 |
| Light & Heavy | 70 | 60 | 70 | 65 |
| Mixed Operations | 65 | 50 | 60 | 55 |
| Numerical Exprs | 70 | 60 | 70 | 65 |
| Patterns | 60 | 50 | 55 | 50 |
| Place Value | 70 | 60 | 70 | 65 |
| Place Powers | 65 | 50 | 60 | 55 |
| Spheres | 60 | 40 | 50 | 45 |
| Subtraction | 70 | 60 | 70 | 65 |
| Time | 65 | 50 | 60 | 55 |
| Triangles | 60 | 40 | 50 | 45 |
| Variable Exprs | 70 | 60 | 70 | 65 |
| Volume of 3D Shapes | 65 | 50 | 60 | 55 |
| Add | 70 | 60 | 70 | 65 |
| Count | 60 | 50 | 55 | 50 |
| Division | 65 | 50 | 60 | 55 |
| Equation | 70 | 60 | 70 | 65 |
| Length | 60 | 50 | 55 | 50 |
| Percents | 65 | 50 | 60 | 55 |
| Polygons | 60 | 40 | 50 | 45 |
| Probability | 70 | 60 | 70 | 65 |
| Proportionality | 65 | 50 | 60 | 55 |
| Quadrilaterals | 60 | 40 | 50 | 45 |
| Ratio | 70 | 60 | 70 | 65 |
| Temperature | 65 | 50 | 60 | 55 |
| Volume | 25 | 20 | 20 | 20 |

### Key Observations

* All models demonstrate a peak in accuracy around the "Coordinate Plane" category, suggesting this is a relatively easier problem type for these models.

* The accuracy generally declines towards the end of the chart, particularly for the "Volume" category, indicating that these models struggle with more complex spatial reasoning problems.

* InternLM2-Math-7B leads or ties for the lead in most of the initial categories ("Angles" to "Estimation & Rounding"); "Coordinate Plane" is the exception, where the other three models score higher.

* InternLM2-7B, MAmmoTH-13B, and WizardMath-13B show similar performance patterns, with peaks and declines occurring at roughly the same problem categories.
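
The comparison behind these observations can be checked with a small script. The following is a minimal sketch, assuming the approximate values from the first four rows of the table in this section; the names `ROWS`, `leaders`, and `top_by_category` are illustrative and not part of any original tooling.

```python
# Approximate values copied from the first four rows of the table in this
# section. Column order follows MODELS. All names here are hypothetical.
MODELS = ["InternLM2-Math-7B", "InternLM2-7B", "MAmmoTH-13B", "WizardMath-13B"]

ROWS = {
    "Angles": [85, 20, 60, 20],
    "Circles": [50, 30, 40, 30],
    "Classifying & Sorting": [70, 40, 60, 40],
    "Coordinate Plane": [75, 80, 85, 85],
}


def leaders(scores):
    """Return every model that shares the top score for one category."""
    best = max(scores)
    return [model for model, score in zip(MODELS, scores) if score == best]


# Map each category to the model(s) with the highest approximate accuracy.
top_by_category = {category: leaders(scores) for category, scores in ROWS.items()}

for category, names in top_by_category.items():
    print(f"{category}: {', '.join(names)}")
```

Returning a list rather than a single name keeps ties visible, which matters here because several rows of the table contain equal values.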

### Interpretation

The chart reveals that while these large language models demonstrate some proficiency in solving math problems, their performance varies significantly depending on the problem type. The high accuracy on "Coordinate Plane" suggests they can handle problems involving geometric representation and spatial relationships. However, the declining accuracy towards the end, especially on "Volume", indicates a weakness in more complex 3D reasoning and calculation.

The consistent outperformance of InternLM2-Math-7B in the initial categories suggests that this model may have been specifically trained or fine-tuned for those types of problems. The similarities in the performance patterns of InternLM2-7B, MAmmoTH-13B, and WizardMath-13B suggest they share similar underlying capabilities and limitations.

The overall trend suggests that while these models are promising, there is still room for improvement in their ability to solve a wide range of math problems, particularly those requiring advanced spatial reasoning and complex calculations. Further research and development are needed to address these limitations and enhance their mathematical problem-solving abilities.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Line Chart: Accuracy of Four AI Models Across 43 Mathematics Topics

### Overview

This image is a multi-line chart comparing the performance (accuracy) of four different AI models across a wide range of discrete mathematics topics. The chart visualizes how each model's accuracy fluctuates significantly depending on the specific mathematical concept being tested.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **Y-Axis:**
    * **Label:** "Accuracy"
    * **Scale:** Linear, ranging from 0 to 100 (implied percentage).
    * **Major Gridlines:** Horizontal dashed lines at 20, 40, 60, and 80.

* **X-Axis:**
    * **Label:** None explicit. Contains 43 categorical labels for mathematics topics.
    * **Categories (from left to right):** Angles, Area, Circles, Classifying & sorting, Coin names & value, Cones, Coordinate plane, Cubes, Cylinders, Decimals, Estimation & rounding, Exchanging money, Fractions, Light & heavy, Mixed operations, Multiple, Numerical exprs, Patterns, Perimeter, Place value, Powers, Rational number, Spheres, Subtraction, Time, Triangles, Variable exprs, Volume of 3d shapes, Add, Compare, Count, Division, Equations, Length, Statistics, Percents, Polygons, Probability, Proportional, Quadrilaterals, Ratio, Temperature, Volume.

* **Legend:**
    * **Position:** Top center, above the plot area.
    * **Series:**
        1. **InternLM2-Math-7B:** Blue line with circular markers.
        2. **InternLM2-7B:** Orange line with circular markers.
        3. **MAmmoTH-13B:** Green line with circular markers.
        4. **WizardMath-13B:** Red line with circular markers.

### Detailed Analysis

The chart shows high variability in model performance across topics. Below is an approximate data extraction for each model across all 43 topics. Values are estimated from the chart's position relative to the gridlines.

**Trend Verification & Data Points (Approximate Accuracy %):**

| Topic | InternLM2-Math-7B (Blue) | InternLM2-7B (Orange) | MAmmoTH-13B (Green) | WizardMath-13B (Red) |
| :--- | :--- | :--- | :--- | :--- |
| **Angles** | ~82 | ~82 | ~23 | ~18 |
| **Area** | ~74 | ~47 | ~68 | ~53 |
| **Circles** | ~53 | ~53 | ~53 | ~23 |
| **Classifying & sorting** | ~77 | ~82 | ~65 | ~59 |
| **Coin names & value** | ~82 | ~82 | ~82 | ~65 |
| **Cones** | ~65 | ~65 | ~23 | ~29 |
| **Coordinate plane** | ~67 | ~56 | ~78 | ~33 |
| **Cubes** | ~80 | ~94 | ~60 | ~70 |
| **Cylinders** | ~59 | ~47 | ~53 | ~35 |
| **Decimals** | ~45 | ~75 | ~35 | ~55 |
| **Estimation & rounding** | ~55 | ~45 | ~50 | ~20 |
| **Exchanging money** | ~70 | ~65 | ~77 | ~19 |
| **Fractions** | ~84 | ~89 | ~89 | ~79 |
| **Light & heavy** | ~78 | ~89 | ~72 | ~44 |
| **Mixed operations** | ~78 | ~83 | ~83 | ~67 |
| **Multiple** | ~65 | ~55 | ~60 | ~30 |
| **Numerical exprs** | ~53 | ~68 | ~42 | ~21 |
| **Patterns** | ~58 | ~63 | ~47 | ~31 |
| **Perimeter** | ~62 | ~55 | ~44 | ~37 |
| **Place value** | ~55 | ~47 | ~65 | ~50 |
| **Powers** | ~70 | ~59 | ~41 | ~12 |
| **Rational number** | ~94 | ~84 | ~41 | ~35 |
| **Spheres** | ~80 | ~75 | ~63 | ~53 |
| **Subtraction** | ~88 | ~83 | ~60 | ~40 |
| **Time** | ~89 | ~84 | ~61 | ~50 |
| **Triangles** | ~61 | ~56 | ~56 | ~28 |
| **Variable exprs** | ~89 | ~74 | ~79 | ~42 |
| **Volume of 3d shapes** | ~70 | ~84 | ~70 | ~35 |
| **Add** | ~67 | ~89 | ~68 | ~56 |
| **Compare** | ~79 | ~84 | ~78 | ~53 |
| **Count** | ~69 | ~61 | ~39 | ~22 |
| **Division** | ~70 | ~90 | ~70 | ~50 |
| **Equations** | ~79 | ~59 | ~69 | ~48 |
| **Length** | ~94 | ~83 | ~88 | ~53 |
| **Statistics** | ~72 | ~31 | ~44 | ~39 |
| **Percents** | ~56 | ~45 | ~45 | ~19 |
| **Polygons** | ~94 | ~82 | ~77 | ~59 |
| **Probability** | ~84 | ~63 | ~63 | ~37 |
| **Proportional** | ~89 | ~69 | ~42 | ~47 |
| **Quadrilaterals** | ~70 | ~85 | ~65 | ~20 |
| **Ratio** | (Data point not clearly visible) | (Data point not clearly visible) | (Data point not clearly visible) | (Data point not clearly visible) |
| **Temperature** | (Data point not clearly visible) | (Data point not clearly visible) | (Data point not clearly visible) | (Data point not clearly visible) |
| **Volume** | (Data point not clearly visible) | (Data point not clearly visible) | (Data point not clearly visible) | (Data point not clearly visible) |

*Note: The last three topics (Ratio, Temperature, Volume) have data points that are obscured or not clearly plotted in the provided image.*
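
To make the variability in these estimates concrete, the per-model spread (highest minus lowest accuracy) can be computed directly from the table. The sketch below is illustrative only: it uses six rows copied from the table rather than the full set, so the resulting spreads describe that sample and not the whole chart. The names `SAMPLE` and `spread_per_model` are hypothetical.

```python
# Six rows copied from the approximate table above (a sample, not the full
# data). Column order follows MODELS. All names here are hypothetical.
MODELS = ["InternLM2-Math-7B", "InternLM2-7B", "MAmmoTH-13B", "WizardMath-13B"]

SAMPLE = {
    "Angles": [82, 82, 23, 18],
    "Cubes": [80, 94, 60, 70],
    "Decimals": [45, 75, 35, 55],
    "Powers": [70, 59, 41, 12],
    "Rational number": [94, 84, 41, 35],
    "Length": [94, 83, 88, 53],
}


def spread_per_model(table):
    """Return max-minus-min accuracy for each model across the given topics."""
    # zip(*rows) transposes topic rows into one column of scores per model.
    columns = list(zip(*table.values()))
    return {model: max(col) - min(col) for model, col in zip(MODELS, columns)}


spread = spread_per_model(SAMPLE)

for model, points in spread.items():
    print(f"{model}: {points} percentage points")
```

Extending `SAMPLE` with the remaining rows would give the spread over every topic with a readable data point; the three topics without visible values would need to be skipped.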

### Key Observations

1. **High Variability:** All models show dramatic swings in accuracy (often >40 percentage points) between different topics. No model is consistently superior.

2. **Model Strengths:**
    * **InternLM2-Math-7B (Blue):** Often achieves the highest peaks (e.g., Rational number, Length, Polygons ~94%). It shows particular strength in more abstract or advanced topics.
    * **InternLM2-7B (Orange):** Frequently competes for the top spot and shows very high accuracy in several foundational topics (e.g., Cubes, Fractions, Add, Division).
    * **MAmmoTH-13B (Green):** Generally performs in the middle of the pack but has notable peaks in arithmetic topics (Fractions, Mixed operations) and in specific topics like "Variable exprs".
    * **WizardMath-13B (Red):** Consistently performs at the lowest accuracy level across nearly all topics, with its lowest point at "Powers" (~12%).

3. **Topic Difficulty:** Some topics appear universally challenging (e.g., "Powers", "Numerical exprs", "Patterns"), with no model scoring above roughly 70%. Others like "Fractions" and "Mixed operations" see high scores from multiple models.

4. **Outliers:** The "Rational number" topic shows a massive performance gap, with InternLM2-Math-7B scoring ~94% while WizardMath-13B scores ~35%.
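
The gap between the best and worst model on a topic, as described in the observations above, can be computed per row. This is a minimal sketch under the assumption that the approximate table values are taken at face value; only four rows are included, and the names `SAMPLE`, `topic_gap`, `gaps`, and `ranked` are illustrative.

```python
# Four rows copied from the approximate table in this section. Values are in
# the order Blue, Orange, Green, Red. All names here are hypothetical.
SAMPLE = {
    "Rational number": [94, 84, 41, 35],
    "Powers": [70, 59, 41, 12],
    "Fractions": [84, 89, 89, 79],
    "Mixed operations": [78, 83, 83, 67],
}


def topic_gap(scores):
    """Return the difference between the best and worst model on one topic."""
    return max(scores) - min(scores)


gaps = {topic: topic_gap(scores) for topic, scores in SAMPLE.items()}

# Order the sampled topics from the widest gap to the narrowest.
ranked = sorted(gaps, key=gaps.get, reverse=True)

for topic in ranked:
    print(f"{topic}: {gaps[topic]} percentage points")
```

In this sample, "Rational number" and "Powers" show wide gaps while "Fractions" and "Mixed operations" show narrow ones, which matches the contrast drawn in observations 3 and 4.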

### Interpretation

This chart is a comparative benchmark revealing the specialized nature of these AI models. The data suggests that:

* **Model Architecture & Training Matters:** The "InternLM2-Math-7B" variant, presumably fine-tuned for mathematics, frequently outperforms its base "InternLM2-7B" counterpart, especially on more complex topics. This demonstrates the effectiveness of domain-specific training.

* **No Universal Solver:** The extreme variability indicates that these models have not achieved a generalized mathematical reasoning ability. Their performance is highly dependent on the specific format and concept of the problem, akin to a student who excels in geometry but struggles with algebra.

* **WizardMath-13B's Underperformance:** The consistently lower scores of WizardMath-13B suggest its training or architecture may be less effective for this broad set of topics compared to the other models evaluated, or it may be optimized for a different type of mathematical problem (e.g., competition math) not well-represented here.

* **Benchmark Utility:** For a user or developer, this chart is crucial for model selection. If one needs a model for geometry problems, "InternLM2-7B" or "MAmmoTH-13B" might be preferred. For advanced topics like "Rational number" or "Polygons", "InternLM2-Math-7B" is the clear choice. The chart argues against using a single model for all mathematical tasks without understanding its specific strengths and weaknesses.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Accuracy of Different Math Models Across Various Topics

### Overview

The image is a line graph comparing the accuracy of four mathematical models (InternLM2-Math-7B, InternLM2-7B, MAmmoTH-13B, and WizardMath-13B) across 30 distinct math topics. Accuracy is measured on a y-axis (0–100%), while the x-axis lists topics like "Angles," "Area," "Classifying & sorting," and "Volume." The graph shows significant variability in performance across models and topics.

---

### Components/Axes

- **Legend**: Located at the top, with four entries:
    - **Blue (solid line with circles)**: InternLM2-Math-7B
    - **Orange (dashed line with squares)**: InternLM2-7B
    - **Green (solid line with triangles)**: MAmmoTH-13B
    - **Red (dashed line with diamonds)**: WizardMath-13B

- **X-axis**: Math topics listed sequentially (e.g., "Angles," "Area," "Classifying & sorting," ..., "Volume").

- **Y-axis**: Labeled "Accuracy" with increments of 20 (0–100%).

---

### Detailed Analysis

1. **InternLM2-Math-7B (Blue)**:
    - Starts at ~80% for "Angles," dips to ~60% for "Area," and fluctuates between 50–90%.
    - Peaks at ~90% for "Cylinders" and "Estimation & rounding."
    - Ends at ~70% for "Volume."

2. **InternLM2-7B (Orange)**:
    - Begins at ~80% for "Angles," drops to ~40% for "Area," and oscillates between 40–90%.
    - Peaks at ~95% for "Cylinders" and "Estimation & rounding."
    - Ends at ~85% for "Volume."

3. **MAmmoTH-13B (Green)**:
    - Starts at ~20% for "Angles," rises to ~80% for "Area," and stabilizes between 60–85%.
    - Peaks at ~90% for "Light & heavy" and "Mixed operations."
    - Ends at ~65% for "Volume."

4. **WizardMath-13B (Red)**:
    - Begins at ~20% for "Angles," spikes to ~60% for "Area," and fluctuates wildly between 10–70%.
    - Sharp drops to ~10% for "Subtraction" and "Proportionality."
    - Ends at ~20% for "Volume."

---

### Key Observations

- **WizardMath-13B (Red)** exhibits the most erratic performance, with extreme lows (e.g., ~10% for "Subtraction") and highs of up to ~70%.

- **InternLM2-Math-7B (Blue)** and **InternLM2-7B (Orange)** show similar trends but with InternLM2-7B achieving higher peaks (e.g., ~95% for "Cylinders").

- **MAmmoTH-13B (Green)** demonstrates relative stability, with fewer extreme dips compared to other models.

- **Lowest Performance**: WizardMath-13B underperforms in "Subtraction" (~10%) and "Proportionality" (roughly 10–15%).

- **Highest Performance**: InternLM2-7B excels in "Cylinders" (~95%) and "Estimation & rounding" (roughly 90–95%).

---

### Interpretation

The data suggests that model performance varies significantly by topic and architecture:

1. **Model Size vs. Performance**: Larger models (e.g., MAmmoTH-13B, WizardMath-13B) do not consistently outperform smaller models (e.g., InternLM2-7B) across all topics.

2. **Topic-Specific Strengths**:
    - InternLM2-7B excels in "Cylinders" and "Estimation & rounding."
    - WizardMath-13B struggles with "Subtraction" and "Proportionality."

3. **Stability**: MAmmoTH-13B shows the least variability, suggesting robustness in handling diverse topics.

4. **Anomalies**: WizardMath-13B’s extreme lows (e.g., ~10% for "Subtraction") indicate potential weaknesses in specific problem types.

The graph highlights the importance of model specialization and the need for targeted improvements in underperforming areas.
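
The point about model size can be checked against the few values quoted in the Detailed Analysis above. The sketch below is illustrative: it uses only the "Angles," "Area," and "Volume" readings given for each model, takes parameter counts from the model names (7B and 13B), and asks whether the best 13B model beats the best 7B model on each topic. The names `QUOTED`, `SIZE_B`, and `larger_model_wins` are hypothetical.

```python
# Approximate accuracies quoted in the Detailed Analysis of this section.
# Parameter counts (in billions) are read off the model names.
SIZE_B = {
    "InternLM2-Math-7B": 7,
    "InternLM2-7B": 7,
    "MAmmoTH-13B": 13,
    "WizardMath-13B": 13,
}

QUOTED = {
    "Angles": {"InternLM2-Math-7B": 80, "InternLM2-7B": 80,
               "MAmmoTH-13B": 20, "WizardMath-13B": 20},
    "Area": {"InternLM2-Math-7B": 60, "InternLM2-7B": 40,
             "MAmmoTH-13B": 80, "WizardMath-13B": 60},
    "Volume": {"InternLM2-Math-7B": 70, "InternLM2-7B": 85,
               "MAmmoTH-13B": 65, "WizardMath-13B": 20},
}


def larger_model_wins(scores):
    """True if the best 13B model scores above the best 7B model."""
    best_small = max(v for m, v in scores.items() if SIZE_B[m] == 7)
    best_large = max(v for m, v in scores.items() if SIZE_B[m] == 13)
    return best_large > best_small


wins = {topic: larger_model_wins(scores) for topic, scores in QUOTED.items()}

for topic, result in wins.items():
    print(f"{topic}: 13B ahead = {result}")
```

On these three topics the 13B models come out ahead only on "Area," which is consistent with the statement that larger models do not consistently outperform smaller ones.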
