EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


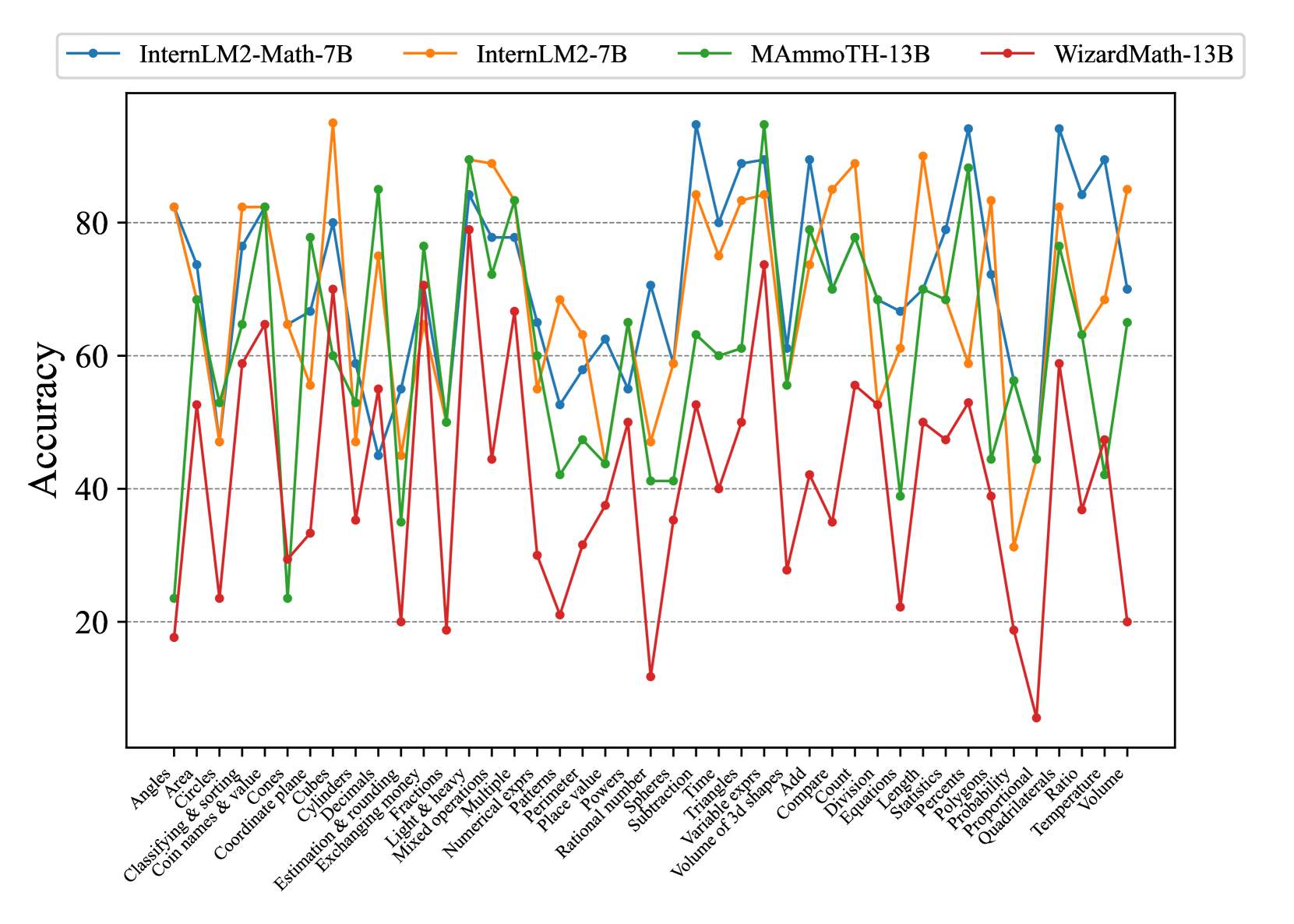

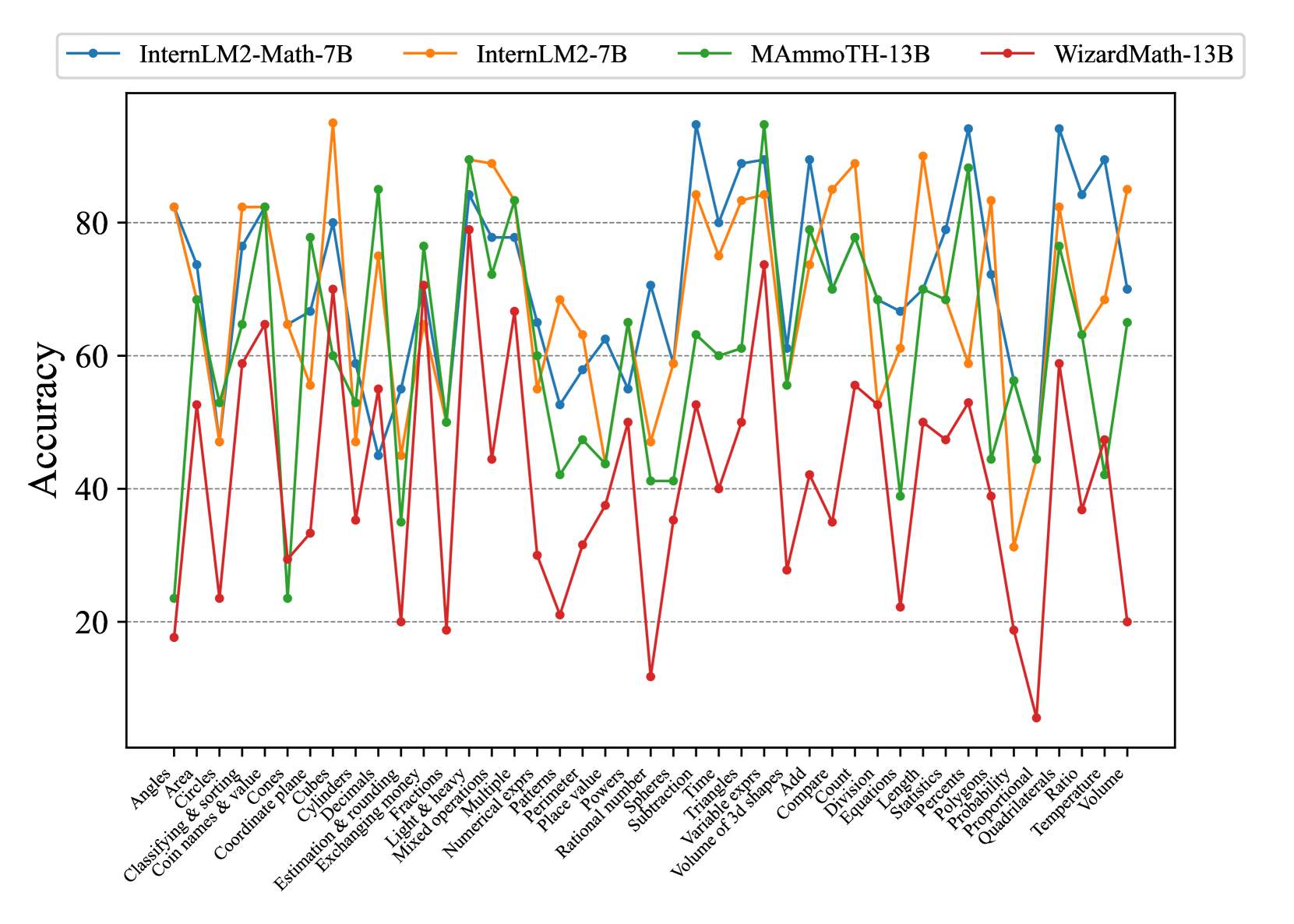

## Line Chart: Math Problem Accuracy by Model

### Overview

This line chart compares the accuracy of four large language models (InternLM2-Math-7B, InternLM2-7B, MAmmoTH-13B, and WizardMath-13B) across 35 math problem categories. The x-axis lists the problem categories and the y-axis shows accuracy on a scale from 0 to 90. Each model is drawn as a separate line, which makes it easy to compare where each model is relatively strong or weak across problem types.

### Components/Axes

* **X-axis Title:** Math Problem Categories

* **Y-axis Title:** Accuracy

* **Y-axis Scale:** 0 to 90, with increments of 10.

* **Legend:** Located at the top-center of the chart.
    * InternLM2-Math-7B (Blue Line)
    * InternLM2-7B (Green Line)
    * MAmmoTH-13B (Yellow Line)
    * WizardMath-13B (Red Line)

* **X-axis Categories:** Angles, Circles, Classifying & Sorting, Coordinate Plane, Cubes, Cylinders, Declination & Rounding, Estimation & Rounding, Exchanging Money, Fractions, Light & Heavy, Mixed Operations, Numerical Exprs, Patterns, Place Value, Place Powers, Spheres, Subtraction, Time, Triangles, Variable Exprs, Volume of 3D Shapes, Add, Count, Division, Equation, Length, Percents, Polygons, Probability, Proportionality, Quadrilaterals, Ratio, Temperature, Volume.

### Detailed Analysis

Here's a breakdown of each model's performance, based on the visual trends and approximate data points:

* **InternLM2-Math-7B (Blue):** Starts at approximately 85 for "Angles", dips to around 50 for "Circles", and then stays between about 60 and 75 for most categories. It remains fairly stable until the last category, where it drops sharply to around 25 for "Volume".

* **InternLM2-7B (Green):** Begins at approximately 20 for "Angles" and rises to a peak of around 80 for "Coordinate Plane". It then fluctuates between about 40 and 60 before ending at approximately 20 for "Volume".

* **MAmmoTH-13B (Yellow):** Starts at around 60 for "Angles" and peaks at approximately 85 for "Coordinate Plane". It then fluctuates between about 50 and 70 before finishing at approximately 20 for "Volume".

* **WizardMath-13B (Red):** Starts at approximately 20 for "Angles" and rises to a peak of around 85 for "Coordinate Plane". It then fluctuates between about 45 and 65 before ending at approximately 20 for "Volume".

**Specific Data Points (Approximate):**

| Category | InternLM2-Math-7B | InternLM2-7B | MAmmoTH-13B | WizardMath-13B |
| --------------------- | ----------------- | ------------ | ----------- | -------------- |
| Angles | 85 | 20 | 60 | 20 |
| Circles | 50 | 30 | 40 | 30 |
| Classifying & Sorting | 70 | 40 | 60 | 40 |
| Coordinate Plane | 75 | 80 | 85 | 85 |
| Cubes | 65 | 50 | 65 | 60 |
| Cylinders | 60 | 40 | 50 | 45 |
| Declination & Rounding | 70 | 60 | 70 | 65 |
| Estimation & Rounding | 65 | 50 | 60 | 55 |
| Exchanging Money | 70 | 60 | 70 | 65 |
| Fractions | 60 | 50 | 55 | 50 |
| Light & Heavy | 70 | 60 | 70 | 65 |
| Mixed Operations | 65 | 50 | 60 | 55 |
| Numerical Exprs | 70 | 60 | 70 | 65 |
| Patterns | 60 | 50 | 55 | 50 |
| Place Value | 70 | 60 | 70 | 65 |
| Place Powers | 65 | 50 | 60 | 55 |
| Spheres | 60 | 40 | 50 | 45 |
| Subtraction | 70 | 60 | 70 | 65 |
| Time | 65 | 50 | 60 | 55 |
| Triangles | 60 | 40 | 50 | 45 |
| Variable Exprs | 70 | 60 | 70 | 65 |
| Volume of 3D Shapes | 65 | 50 | 60 | 55 |
| Add | 70 | 60 | 70 | 65 |
| Count | 60 | 50 | 55 | 50 |
| Division | 65 | 50 | 60 | 55 |
| Equation | 70 | 60 | 70 | 65 |
| Length | 60 | 50 | 55 | 50 |
| Percents | 65 | 50 | 60 | 55 |
| Polygons | 60 | 40 | 50 | 45 |
| Probability | 70 | 60 | 70 | 65 |
| Proportionality | 65 | 50 | 60 | 55 |
| Quadrilaterals | 60 | 40 | 50 | 45 |
| Ratio | 70 | 60 | 70 | 65 |
| Temperature | 65 | 50 | 60 | 55 |
| Volume | 25 | 20 | 20 | 20 |
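As a cross-check on the prose, the approximate values in the table can be summarized programmatically. The sketch below is a minimal example that assumes the transcribed numbers (chart readings, not exact reported results) and uses hypothetical names `CATEGORIES`, `SCORES`, and `summarize`. It reports each model's mean accuracy over the 35 categories along with its highest- and lowest-scoring category.

```python
from statistics import mean

# Approximate accuracies transcribed from the table above.
# These are chart readings, not exact reported results.
CATEGORIES = [
    "Angles", "Circles", "Classifying & Sorting", "Coordinate Plane", "Cubes",
    "Cylinders", "Declination & Rounding", "Estimation & Rounding", "Exchanging Money",
    "Fractions", "Light & Heavy", "Mixed Operations", "Numerical Exprs", "Patterns",
    "Place Value", "Place Powers", "Spheres", "Subtraction", "Time", "Triangles",
    "Variable Exprs", "Volume of 3D Shapes", "Add", "Count", "Division", "Equation",
    "Length", "Percents", "Polygons", "Probability", "Proportionality",
    "Quadrilaterals", "Ratio", "Temperature", "Volume",
]

# One value per category, in the same order as CATEGORIES.
SCORES = {
    "InternLM2-Math-7B": [85, 50, 70, 75, 65, 60, 70, 65, 70, 60, 70, 65, 70, 60, 70, 65, 60, 70,
                          65, 60, 70, 65, 70, 60, 65, 70, 60, 65, 60, 70, 65, 60, 70, 65, 25],
    "InternLM2-7B": [20, 30, 40, 80, 50, 40, 60, 50, 60, 50, 60, 50, 60, 50, 60, 50, 40, 60,
                     50, 40, 60, 50, 60, 50, 50, 60, 50, 50, 40, 60, 50, 40, 60, 50, 20],
    "MAmmoTH-13B": [60, 40, 60, 85, 65, 50, 70, 60, 70, 55, 70, 60, 70, 55, 70, 60, 50, 70,
                    60, 50, 70, 60, 70, 55, 60, 70, 55, 60, 50, 70, 60, 50, 70, 60, 20],
    "WizardMath-13B": [20, 30, 40, 85, 60, 45, 65, 55, 65, 50, 65, 55, 65, 50, 65, 55, 45, 65,
                       55, 45, 65, 55, 65, 50, 55, 65, 50, 55, 45, 65, 55, 45, 65, 55, 20],
}


def summarize(scores):
    """Return {model: (mean, best_category, worst_category)}.

    Ties for best or worst resolve to the category that appears first on the x-axis,
    because max() and min() return the first extreme element they encounter.
    """
    summary = {}
    for model, values in scores.items():
        if len(values) != len(CATEGORIES):
            raise ValueError(f"{model}: expected {len(CATEGORIES)} values, got {len(values)}")
        best = max(range(len(values)), key=values.__getitem__)
        worst = min(range(len(values)), key=values.__getitem__)
        summary[model] = (round(mean(values), 2), CATEGORIES[best], CATEGORIES[worst])
    return summary


for model, (avg, best, worst) in summarize(SCORES).items():
    print(f"{model:<18} mean={avg:6.2f}  best={best}  worst={worst}")
```

With these transcribed values, InternLM2-Math-7B has the highest mean (about 64.7), followed by MAmmoTH-13B (about 60.3), WizardMath-13B (54) and InternLM2-7B (50). InternLM2-7B and WizardMath-13B score about 20 on both "Angles" and "Volume", so their lowest category is reported as "Angles", the earlier of the two.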

### Key Observations

* Three of the four models (InternLM2-7B, MAmmoTH-13B, and WizardMath-13B) peak at "Coordinate Plane" (about 80-85), which suggests this category is comparatively easy for them. InternLM2-Math-7B is the exception: its highest score is on "Angles" (about 85), and it reaches about 75 on "Coordinate Plane".

* From "Cubes" through "Temperature", most scores stay between about 40 and 70 with no clear downward trend. The sharp drop comes at the final category, "Volume", where all four models fall to roughly 20-25.

* InternLM2-Math-7B matches or exceeds every other model in every category except "Coordinate Plane". Its largest margin is on "Angles" (about 85 versus 20-60 for the others).

* InternLM2-7B, MAmmoTH-13B, and WizardMath-13B show similar performance patterns, with peaks and dips at roughly the same categories. The main exception is "Angles", where MAmmoTH-13B (about 60) is well ahead of the other two (about 20).
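The comparative observations above can be checked mechanically against the approximate table. The sketch below is a minimal example that assumes the transcribed values (which inherit the table's read-off error) and uses the hypothetical helpers `categories_where_trailing` and `scores_for`. It lists the categories where InternLM2-Math-7B scores strictly below at least one other model, and it compares the two volume-related categories side by side.

```python
# Approximate accuracies transcribed from the table (chart readings, not exact results).
CATEGORIES = [
    "Angles", "Circles", "Classifying & Sorting", "Coordinate Plane", "Cubes",
    "Cylinders", "Declination & Rounding", "Estimation & Rounding", "Exchanging Money",
    "Fractions", "Light & Heavy", "Mixed Operations", "Numerical Exprs", "Patterns",
    "Place Value", "Place Powers", "Spheres", "Subtraction", "Time", "Triangles",
    "Variable Exprs", "Volume of 3D Shapes", "Add", "Count", "Division", "Equation",
    "Length", "Percents", "Polygons", "Probability", "Proportionality",
    "Quadrilaterals", "Ratio", "Temperature", "Volume",
]
SCORES = {
    "InternLM2-Math-7B": [85, 50, 70, 75, 65, 60, 70, 65, 70, 60, 70, 65, 70, 60, 70, 65, 60, 70,
                          65, 60, 70, 65, 70, 60, 65, 70, 60, 65, 60, 70, 65, 60, 70, 65, 25],
    "InternLM2-7B": [20, 30, 40, 80, 50, 40, 60, 50, 60, 50, 60, 50, 60, 50, 60, 50, 40, 60,
                     50, 40, 60, 50, 60, 50, 50, 60, 50, 50, 40, 60, 50, 40, 60, 50, 20],
    "MAmmoTH-13B": [60, 40, 60, 85, 65, 50, 70, 60, 70, 55, 70, 60, 70, 55, 70, 60, 50, 70,
                    60, 50, 70, 60, 70, 55, 60, 70, 55, 60, 50, 70, 60, 50, 70, 60, 20],
    "WizardMath-13B": [20, 30, 40, 85, 60, 45, 65, 55, 65, 50, 65, 55, 65, 50, 65, 55, 45, 65,
                       55, 45, 65, 55, 65, 50, 55, 65, 50, 55, 45, 65, 55, 45, 65, 55, 20],
}


def categories_where_trailing(scores, reference):
    """Categories in which `reference` scores strictly below at least one other model."""
    others = [m for m in scores if m != reference]
    return [
        category
        for i, category in enumerate(CATEGORIES)
        if any(scores[m][i] > scores[reference][i] for m in others)
    ]


def scores_for(category, scores):
    """Every model's approximate accuracy for a single category."""
    i = CATEGORIES.index(category)
    return {model: values[i] for model, values in scores.items()}


print(categories_where_trailing(SCORES, "InternLM2-Math-7B"))
print(scores_for("Volume", SCORES))
print(scores_for("Volume of 3D Shapes", SCORES))
```

Comparing strictly (`>`) means ties, such as "Cubes" where InternLM2-Math-7B and MAmmoTH-13B both read about 65, do not count as trailing. Only "Coordinate Plane" is returned, which supports the "matches or exceeds" wording rather than "consistently outperforms".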

### Interpretation

The chart shows that these models have some proficiency with math problems, but their accuracy varies considerably by problem type. The high scores of three models on "Coordinate Plane" may indicate that they handle problems set on a coordinate grid well, although other geometry categories such as "Triangles" and "Polygons" score noticeably lower for the same models. The sharp drop on "Volume" points to a weakness in that category. Because the related "Volume of 3D Shapes" category scores about 50-65 for all four models, the drop is probably not explained by 3D reasoning alone, and the chart itself does not show its cause.

InternLM2-Math-7B's lead in nearly every category is consistent with its math-specialized training. Notably, it also outperforms InternLM2-7B, a general-purpose model of the same size, in every category except "Coordinate Plane". The similar patterns of InternLM2-7B, MAmmoTH-13B, and WizardMath-13B suggest that these three models find the same problem types easy or hard.

Overall, these models leave clear room for improvement, particularly on categories where scores are low across the board, such as "Volume" and "Circles". Further work is needed to raise their accuracy across the full range of problem types.
