
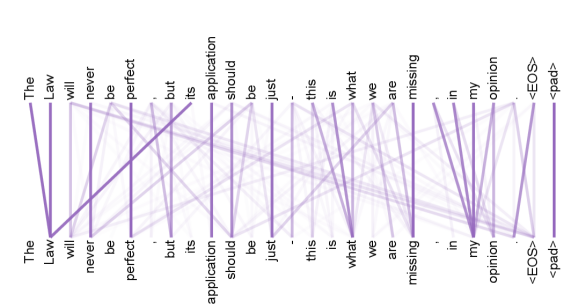

## Text Alignment Visualization: Attention Weights Between Identical Sequences

### Overview

The image visualizes word-level alignment, or attention, weights between two identical sequences of text. It shows two parallel lines of text, one above the other, joined by a network of connecting lines (edges) between their tokens. The visualization most likely shows the output of an attention mechanism in a natural language processing model, indicating how strongly each token in the top sequence "attends to" (aligns with) each token in the bottom sequence.
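
In a Transformer, the weights that a figure like this draws are usually computed by scaled dot-product attention, softmax(QK^T/√d_k), where the queries Q and keys K are two learned projections of the same token embeddings. The pure-Python sketch below assumes that the top row plays the query role and the bottom row the key role (the figure does not say which). The embeddings and projection matrices are invented, and `self_attention_weights` is a hypothetical helper, not the API of any library.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(p * r for p, r in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(s - m) for s in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention_weights(x, w_q, w_k):
    """Return softmax(Q K^T / sqrt(d_k)) with Q = x @ w_q and K = x @ w_k.

    Row i holds the weights that query token i places on every key token j,
    so each row sums to 1.
    """
    q = matmul(x, w_q)
    k = matmul(x, w_k)
    d_k = len(k[0])
    scores = [[sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k] for qi in q]
    return [softmax(row) for row in scores]

# Hypothetical 2-d embeddings for three tokens and made-up projection matrices.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w_q = [[1.0, 0.5], [0.0, 1.0]]
w_k = [[1.0, 0.0], [0.5, 1.0]]
A = self_attention_weights(x, w_q, w_k)
for row in A:
    print([round(w, 3) for w in row])
```

Each printed row is one top-row token's distribution of attention over the bottom row; in the figure, each such row would become a fan of edges leaving one word.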

### Components/Axes

* **Text Sequences:** Two identical lines of English text are displayed.

    * **Top Sequence (Source):** "The Law will never be perfect, but its application should be just. This is what we are missing, in my opinion. <EOS> <pad>"

    * **Bottom Sequence (Target):** "The Law will never be perfect, but its application should be just. This is what we are missing, in my opinion. <EOS> <pad>"

* **Connecting Lines (Edges):** A dense network of purple lines connects words from the top sequence to words in the bottom sequence.

    * **Color:** All connecting lines are a shade of purple/violet.

    * **Opacity/Thickness:** The lines vary significantly in opacity (transparency). Darker, more opaque lines indicate a stronger alignment or higher attention weight between the connected words. Lighter, more transparent lines indicate weaker connections.

* **Spatial Layout:**

    * The source text is aligned horizontally across the top third of the image.

    * The target text is aligned horizontally across the bottom third of the image.

    * The network of connecting lines occupies the central third of the image, creating a complex web between the two text lines.
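
The figure does not state how attention weights were mapped to line opacity. One common choice, assumed in this sketch, is to scale each weight linearly by the largest weight and to drop edges too faint to see. `attention_edges` and `min_alpha` are hypothetical names, and the weights are made up. Edges are keyed by token position rather than by string because a word can repeat (this sentence contains "be" twice).

```python
def attention_edges(weights, min_alpha=0.05):
    """Convert an attention matrix into (i, j, alpha) edges for drawing.

    i indexes the top (source) token and j the bottom (target) token. Opacity
    is the weight divided by the largest weight, so the strongest edge gets
    alpha = 1.0; edges fainter than min_alpha are dropped.
    """
    peak = max(max(row) for row in weights)
    edges = []
    for i, row in enumerate(weights):
        for j, w in enumerate(row):
            alpha = w / peak
            if alpha >= min_alpha:
                edges.append((i, j, alpha))
    return edges

# Made-up weights for three tokens (each row sums to 1).
tokens = ["The", "Law", "<EOS>"]
weights = [[0.80, 0.15, 0.05],
           [0.10, 0.85, 0.05],
           [0.30, 0.30, 0.40]]
edges = attention_edges(weights, min_alpha=0.1)
for i, j, alpha in edges:
    print(f"{tokens[i]:>6} -> {tokens[j]:<6} alpha={alpha:.2f}")
```

Normalizing by the peak rather than plotting raw weights keeps the strongest self-alignment fully opaque regardless of sequence length. The trade-off is that absolute weights are no longer readable from the image.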

### Detailed Analysis

* **Text Transcription:** The complete, identical text in both sequences is:

    `The Law will never be perfect, but its application should be just. This is what we are missing, in my opinion. <EOS> <pad>`

* **Language:** English.

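* **Special Tokens:** The sequence ends with `<EOS>` (end of sequence) and `<pad>` (padding), standard special tokens in machine learning text processing: `<EOS>` marks where the input ends, and `<pad>` fills sequences out to a common length so they can be batched together.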

* **Connection Pattern Analysis:**

    * **Self-Alignment Dominance:** The strongest, darkest purple lines connect each word in the top sequence directly to the *same* word in the bottom sequence (e.g., "The" to "The", "Law" to "Law"). This creates a strong, near-vertical line for each word pair.

    * **Cross-Alignment Weaker Connections:** Lighter, more transparent lines connect words to other, non-identical words in the opposite sequence. For example, there are faint connections from "perfect" to "application" or from "missing" to "opinion".

    * **Token Connections:** The special tokens `<EOS>` and `<pad>` at the end of each sequence show strong self-alignment and also have multiple weaker connections fanning out to various words in the opposite sequence, particularly to the final content words like "opinion".
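
To show how a sequence comes to end in `<EOS> <pad>`, the sketch below appends an end token and right-pads to a fixed length. It also returns a mask that marks the padding positions, which is the usual form in which padding information reaches an attention layer. Splitting on whitespace is a simplifying assumption, since the tokenizer behind the figure is unknown. `add_eos_and_pad` is a hypothetical helper, and `max_len=23` was chosen only so that exactly one `<pad>` appears, as in the figure.

```python
EOS, PAD = "<EOS>", "<pad>"

def add_eos_and_pad(words, max_len):
    """Append <EOS>, then right-pad with <pad> up to max_len tokens.

    Returns the tokens and a mask that is True for real tokens (including
    <EOS>) and False for padding positions.
    """
    tokens = list(words) + [EOS]
    if len(tokens) > max_len:
        raise ValueError(f"{len(tokens)} tokens exceed max_len={max_len}")
    n_pad = max_len - len(tokens)
    return tokens + [PAD] * n_pad, [True] * len(tokens) + [False] * n_pad

# Whitespace splitting stands in for a real tokenizer (an assumption).
sentence = ("The Law will never be perfect, but its application should be just. "
            "This is what we are missing, in my opinion.")
tokens, mask = add_eos_and_pad(sentence.split(), max_len=23)
print(tokens[-3:])       # ['opinion.', '<EOS>', '<pad>']
print(mask.count(False))  # 1
```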

### Key Observations

1. **Strong Self-Alignment:** The primary visual feature is the set of dark purple, near-vertical lines formed by the self-alignments (the counterpart of a strong diagonal in an attention heatmap), indicating that the model assigns the highest attention weight to matching tokens.

2. **Attention Diffusion:** Despite the strong self-attention, a significant amount of diffuse attention appears as the web of lighter lines. This suggests that the model also weighs relationships between different words, though with much smaller weights.

3. **End-Token Behavior:** The `<EOS>` and `<pad>` tokens act as hubs, sending and receiving many weak connections. Special tokens are often observed to behave this way in Transformer models, where they can serve as "attention sinks" or aggregate sequence-level information.

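4. **Symmetry:** The connection pattern appears largely symmetrical around the central horizontal axis. Identical sequences alone do not guarantee this: queries and keys typically use different learned projections, and each token's weights are normalized separately, so the weight from token *i* to token *j* generally differs from the weight from *j* to *i*.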
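
Observations 1 and 4 can be checked on a toy case. This sketch assumes, purely for illustration, that queries and keys are the same unit-length vectors, with no learned projections. Under that assumption, x_i · x_j ≤ 1 = x_i · x_i (Cauchy-Schwarz), so every row of weights peaks on its own token. Even so, although the raw scores are exactly symmetric, normalizing each row separately makes the weights asymmetric. Trained models use distinct query and key projections, so neither property is guaranteed there. The embeddings and helper names are hypothetical.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(s - m) for s in row]
    total = sum(exps)
    return [e / total for e in exps]

def tied_self_attention(x):
    """softmax(X X^T / sqrt(d)): queries and keys are both X (an illustrative assumption)."""
    d = len(x[0])
    return [softmax([sum(a * b for a, b in zip(xi, xj)) / math.sqrt(d) for xj in x])
            for xi in x]

def max_asymmetry(a):
    """Largest |A[i][j] - A[j][i]| over all token pairs."""
    n = len(a)
    return max(abs(a[i][j] - a[j][i]) for i in range(n) for j in range(n))

# Hypothetical unit-length embeddings for four tokens.
x = [normalize(v) for v in [[1, 0, 0], [1, 1, 0], [0, 1, 1], [0, 0, 1]]]
A = tied_self_attention(x)

# Every row peaks on its own token, since x_i . x_j <= 1 = x_i . x_i.
print(all(row.index(max(row)) == i for i, row in enumerate(A)))  # True
# The raw scores are symmetric, but per-row normalization breaks the symmetry.
print(max_asymmetry(A) > 0)  # True
```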

### Interpretation

This visualization most likely shows the behavior of a **self-attention mechanism** (probably from a Transformer model) applied to a single input sequence, here two sentences followed by special tokens. The data suggests:

* **Primary Function:** The model appears to be aligning (or reconstructing) the sequence with itself. The dominant dark self-connections indicate that the strongest relationship for each token is with its own position in the sequence.

* **Contextual Understanding:** The weaker cross-connections indicate that the model is not merely copying. Each token's representation is informed by its relationship to other tokens in the sentence (e.g., "application" is weakly connected to "just", the adjective that describes it). This kind of mixing is how attention layers can capture syntactic and semantic relationships.

* **Model Confidence:** The variance in line opacity is a visual proxy for the magnitude of the attention weights, which is sometimes loosely read as the model's "confidence". The stark contrast between the dark self-connections and the faint cross-connections suggests a model that weights each token's own position heavily while still performing broad, low-magnitude contextual integration.

* **Technical Context:** The `<EOS>` and `<pad>` tokens mark this as output from a neural network's processing pipeline rather than a hand-drawn diagram. It exposes one internal step of how the model processes the input text, not a complete account of its "reasoning".
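
The contextual-integration point can be made concrete. In standard attention, each token's output is the attention-weighted sum of the value vectors, so even faint edges pull some information in from other tokens. The sketch below uses made-up weights (strong self-attention, weak cross-attention) and one-hot value vectors, so each output simply equals its row of weights. `mix_values` is a hypothetical helper name.

```python
def mix_values(weights, values):
    """Return one output vector per row: the attention-weighted sum of value vectors."""
    dim = len(values[0])
    return [[sum(w * v[k] for w, v in zip(row, values)) for k in range(dim)]
            for row in weights]

# Hypothetical weights: each token keeps most weight on itself.
weights = [[0.8, 0.2],
           [0.1, 0.9]]
# One-hot values make each token's contribution easy to read off.
values = [[1.0, 0.0],
          [0.0, 1.0]]
outputs = mix_values(weights, values)
print(outputs)  # [[0.8, 0.2], [0.1, 0.9]]
```

Token 0's output is 80% its own value and 20% token 1's, which is the numerical counterpart of one dark vertical line plus one faint cross line in the figure.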

**In essence, the image is a window into how a neural network "reads" a sentence: it focuses first and foremost on each word itself, while simultaneously, more faintly, weaving a web of connections between all words to build a unified understanding.**