## Dual Line Charts: Model Scaling vs. Test Loss

### Overview

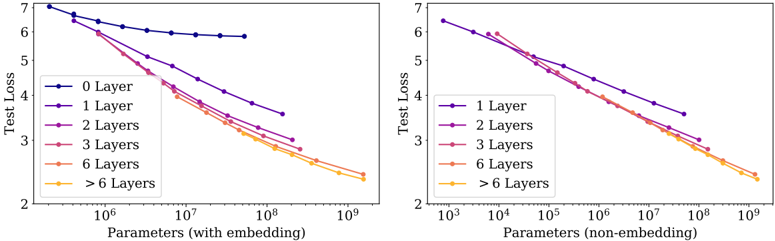

The image displays two side-by-side line charts analyzing the relationship between model size (measured in parameters) and test loss. The left chart uses total parameters including embeddings, while the right chart uses non-embedding parameters. Both charts show that as the number of parameters increases, test loss decreases, with deeper models (more layers) achieving lower loss for a given parameter count.

### Components/Axes

**Common Elements:**

* **Chart Type:** Two line charts with logarithmic x-axes.

* **Y-Axis (Both Charts):** Label: "Test Loss". Scale: Linear, ranging from 2 to 7.

* **Legend (Both Charts):** Located in the top-left corner of each plot area. Contains colored lines and labels for different model depths.

* **Line Colors & Labels:**

    * Dark Blue: "0 Layer" (Left chart only)

    * Purple: "1 Layer"

    * Magenta: "2 Layers"

    * Red: "3 Layers"

    * Orange: "6 Layers"

    * Yellow: "> 6 Layers"

**Left Chart Specifics:**

* **Title/Context:** Implicitly compares models using total parameter count.

* **X-Axis:** Label: "Parameters (with embedding)". Scale: Logarithmic, with major ticks at 10⁶, 10⁷, 10⁸, 10⁹.

**Right Chart Specifics:**

* **Title/Context:** Implicitly compares models using non-embedding parameter count.

* **X-Axis:** Label: "Parameters (non-embedding)". Scale: Logarithmic, with major ticks at 10³, 10⁴, 10⁵, 10⁶, 10⁷, 10⁸, 10⁹.

### Detailed Analysis

**Left Chart (Parameters with embedding):**

* **0 Layer (Dark Blue):** Starts at ~7.0 loss for ~10⁵ parameters. Shows a shallow, nearly flat decline, ending at ~6.0 loss for ~10⁸ parameters. This line is the highest (worst loss) and has the shallowest slope.

* **1 Layer (Purple):** Starts near 7.0 loss for ~10⁵ parameters. Slopes downward more steeply than the 0-layer line, ending at ~3.5 loss for ~10⁹ parameters.

* **2 Layers (Magenta):** Follows a path below the 1-layer line. Starts near 7.0 loss, ends at ~2.8 loss for ~10⁹ parameters.

* **3 Layers (Red):** Follows a path below the 2-layer line. Ends at ~2.6 loss for ~10⁹ parameters.

* **6 Layers (Orange):** Follows a path below the 3-layer line. Ends at ~2.5 loss for ~10⁹ parameters.

* **> 6 Layers (Yellow):** Follows the lowest path, nearly overlapping with the 6-layer line at the high-parameter end. Ends at ~2.4 loss for ~10⁹ parameters.

* **Trend:** Every line slopes downward: loss falls as parameter count grows. For a fixed parameter count (e.g., 10⁸), test loss decreases as the number of layers increases (loss ordering: 0 Layer > 1 Layer > 2 Layers, and so on).
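The difference in steepness between the lines can be put into numbers as an average change in loss per tenfold increase in parameters. The sketch below uses only the approximate endpoints quoted above for the 0 Layer and 1 Layer lines; these are visual estimates from the chart, and the helper name `loss_per_decade` is introduced here purely for illustration.

```python
import math


def loss_per_decade(n_start, loss_start, n_end, loss_end):
    """Average change in loss per tenfold increase in parameter count."""
    decades = math.log10(n_end) - math.log10(n_start)
    return (loss_end - loss_start) / decades


# Approximate endpoints read off the left chart (visual estimates, not raw data).
zero_layer = loss_per_decade(1e5, 7.0, 1e8, 6.0)
one_layer = loss_per_decade(1e5, 7.0, 1e9, 3.5)

print(f"0 Layer: {zero_layer:.2f} loss per decade")
print(f"1 Layer: {one_layer:.2f} loss per decade")
```

Under these estimates the 1 Layer line falls more than twice as fast per decade as the 0 Layer line, which matches the description of the 0 Layer line as the shallowest.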

**Right Chart (Parameters non-embedding):**

* **1 Layer (Purple):** Starts at ~6.5 loss for ~10³ parameters. Slopes downward, ending at ~3.5 loss for ~10⁸ parameters.

* **2 Layers (Magenta):** Follows a path below the 1-layer line. Ends at ~2.8 loss for ~10⁹ parameters.

* **3 Layers (Red):** Follows a path below the 2-layer line. Ends at ~2.6 loss for ~10⁹ parameters.

* **6 Layers (Orange):** Follows a path below the 3-layer line. Ends at ~2.5 loss for ~10⁹ parameters.

* **> 6 Layers (Yellow):** Follows the lowest path, nearly overlapping with the 6-layer line. Ends at ~2.4 loss for ~10⁹ parameters.

* **Trend:** As in the left chart, loss falls as parameter count grows. The lines for 2+ layers are tightly clustered, especially at higher parameter counts, suggesting diminishing returns from adding layers beyond a certain point when controlling for non-embedding parameters.

### Key Observations

1. **The "0 Layer" Baseline:** The 0-layer model (left chart only) performs significantly worse and scales poorly compared to any model with at least one layer.

2. **Layer Efficiency:** For the same total parameter budget (left chart), adding layers consistently improves performance (lowers loss). The gap between lines is most pronounced at lower parameter counts.

3. **Convergence at Scale:** In both charts, the performance lines for models with 3, 6, and >6 layers converge as parameter count increases towards 10⁹. This suggests that at very large scales, the advantage of extreme depth diminishes.

4. **Parameter Accounting:** The right chart shifts all curves to the left (lower parameter values) because it excludes embedding parameters, which can be a large portion of the total in language models. This reveals the scaling behavior of the core transformer layers.
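To make the parameter accounting concrete, the following sketch splits a model's parameters into embedding and non-embedding parts. It assumes a standard decoder-only transformer with an MLP hidden width of four times the model width, for which roughly `12 * n_layer * d_model**2` is a common approximation of the non-embedding count (biases and layer norms ignored). The vocabulary size, context length, and model shapes are hypothetical values chosen for illustration; none of them come from the figure.

```python
def non_embedding_params(n_layer, d_model):
    """Approximate non-embedding parameter count.

    Assumes ~4*d_model^2 for the attention projections plus ~8*d_model^2
    for an MLP with hidden width 4*d_model, per layer.
    """
    return 12 * n_layer * d_model ** 2


def embedding_params(n_vocab, n_ctx, d_model):
    """Token embeddings plus learned positional embeddings."""
    return (n_vocab + n_ctx) * d_model


# Hypothetical settings, not taken from the figure.
N_VOCAB, N_CTX = 50_000, 1024
shapes = [(0, 128), (2, 128), (6, 512), (24, 1024)]  # (n_layer, d_model)

for n_layer, d_model in shapes:
    core = non_embedding_params(n_layer, d_model)
    emb = embedding_params(N_VOCAB, N_CTX, d_model)
    share = emb / (core + emb)
    print(f"{n_layer:>2} layers, d_model={d_model:>4}: "
          f"non-embedding={core:>12,}  embedding={emb:>12,}  "
          f"embedding share={share:.1%}")
```

With these assumed shapes, embeddings dominate the total for small models and become a minor share for large ones. A model with no layers has zero non-embedding parameters under this approximation, which would be consistent with the 0 Layer line appearing only on the left chart.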

### Interpretation

These charts illustrate how the test loss of neural language models scales with parameter count. The data suggests:

* **Depth is Crucial:** Moving from a 0-layer to a 1-layer model provides a massive performance leap. Further depth continues to yield benefits, but with diminishing returns.

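* **Scaling Efficiency:** The near-linear decline on a semi-log plot (log x, linear y) means that loss falls by a roughly constant amount for each tenfold increase in parameters. A power-law relationship between parameters and loss would instead appear as a straight line on log-log axes, so confirming one requires checking the trend with a logarithmic y-axis as well.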

* **Architectural Insight:** The convergence of deeper models at high parameter counts implies that given enough capacity, models of varying depths can achieve similar final performance. The choice between a very deep vs. a moderately deep but wider model may then depend on other factors like inference cost or training stability.

* **Practical Implication:** For a fixed parameter budget, spending part of it on depth is more beneficial than building a very shallow model of the same size, although the gain shrinks beyond a few layers. The charts plot parameter count rather than compute, so they guide architectural trade-offs at a given model size rather than at a given training cost.
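One way to check whether a parameter-loss trend follows a power law is to compare straight-line fits on semi-log and log-log axes. The sketch below does this on synthetic points generated from a made-up power law `L = (N_C / N) ** ALPHA`; the constants `ALPHA` and `N_C` are illustrative assumptions and the points are not measurements from the figure.

```python
import math


def linear_fit(xs, ys):
    """Ordinary least squares for y = a + b*x. Returns (a, b, r_squared)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = mean_y - b * mean_x
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return a, b, 1.0 - ss_res / ss_tot


# Synthetic data from an assumed power law (illustrative constants only).
ALPHA, N_C = 0.1, 1e14
params = [10 ** k for k in range(5, 10)]
losses = [(N_C / n) ** ALPHA for n in params]

log_n = [math.log10(n) for n in params]
log_loss = [math.log10(loss) for loss in losses]

# Semi-log axes: loss against log10(N).
_, slope_semilog, r2_semilog = linear_fit(log_n, losses)
# Log-log axes: log10(loss) against log10(N); the slope estimates -ALPHA.
_, slope_loglog, r2_loglog = linear_fit(log_n, log_loss)

print(f"semi-log: slope={slope_semilog:.3f}, R^2={r2_semilog:.4f}")
print(f"log-log:  slope={slope_loglog:.3f}, R^2={r2_loglog:.4f}")
```

For power-law data the log-log fit is essentially exact and its slope recovers the exponent, while the semi-log fit is only approximately linear. Over a few decades with a small exponent the two can look similar by eye, which is why a numerical fit is more reliable than visual inspection of a chart.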