## Diagram: Evolution of NLP Models (2018-2023)

### Overview

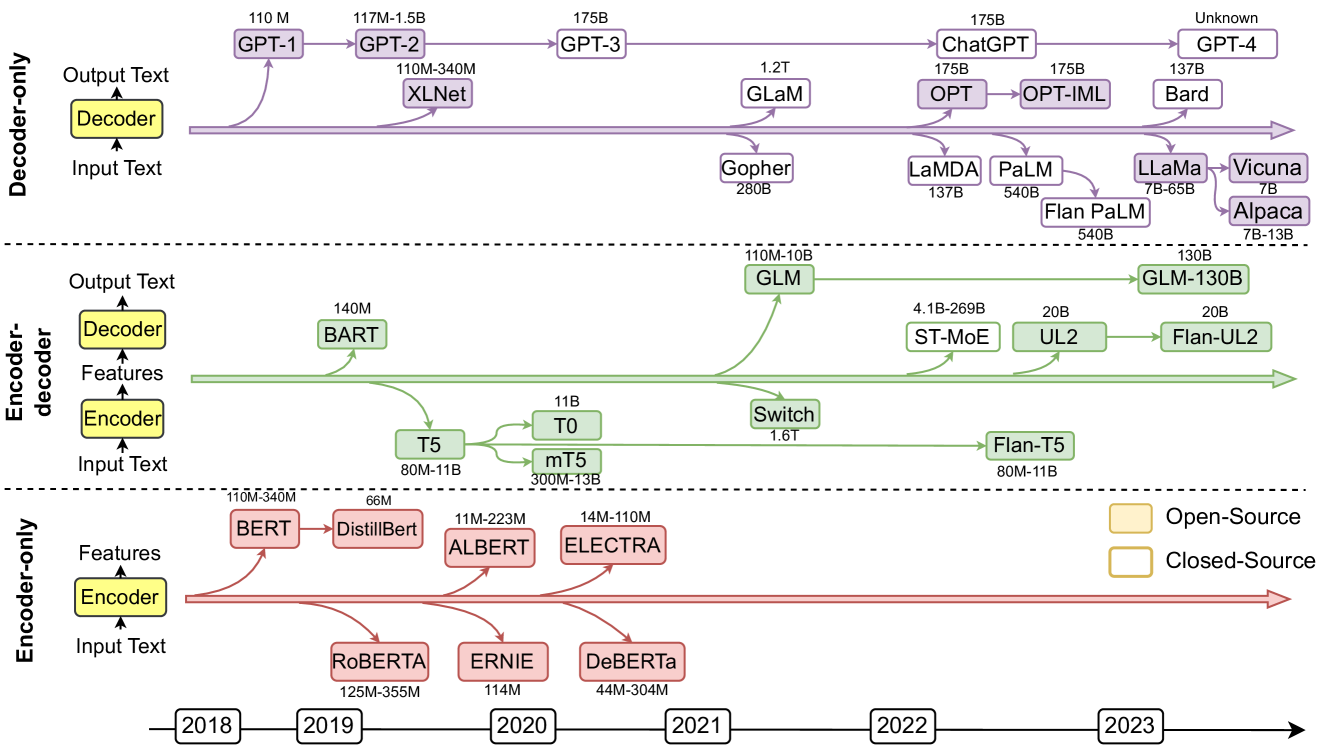

The diagram illustrates the progression of natural language processing (NLP) models across three architectural categories: **Decoder-only**, **Encoder-decoder**, and **Encoder-only**. Models are positioned by release year along the x-axis and grouped into horizontal bands by architecture; each label gives the model's parameter count. Color indicates licensing: yellow for open-source and orange for closed-source. Arrows indicate temporal progression, and dashed lines separate the architectural categories.

---

### Components/Axes

- **X-axis**: Years (2018–2023), labeled at yearly intervals.

- **Y-axis**: Model names with parameter sizes (e.g., "GPT-3 (175B)").

- **Legend**:

  - Yellow = Open-Source

  - Orange = Closed-Source

- **Key Elements**:

  - Three horizontal sections separated by dashed lines (Decoder-only, Encoder-decoder, Encoder-only).

  - Arrows connecting models to their release years.

  - Listed parameter sizes range from 11M (ALBERT) to 1.6T (Switch).
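Because the labels mix three units (M, B, T), comparing sizes is easier after converting them to plain numbers. The following is a minimal sketch of such a conversion; the helper names `parse_params` and `parse_range` are hypothetical and only illustrate how labels in the form used here (e.g., "175B" or "110M–340M") could be read.

```python
import re
from typing import Tuple

# Multipliers for the suffixes used in the labels:
# M = million, B = billion, T = trillion.
_UNITS = {"M": 1e6, "B": 1e9, "T": 1e12}


def parse_params(label: str) -> float:
    """Convert a size label such as '175B' or '1.6T' into a parameter count."""
    match = re.fullmatch(r"(\d+(?:\.\d+)?)([MBT])", label.strip())
    if match is None:
        raise ValueError(f"unrecognized size label: {label!r}")
    value, unit = match.groups()
    return float(value) * _UNITS[unit]


def parse_range(label: str) -> Tuple[float, float]:
    """Convert '110M–340M' (en dash or hyphen) or a single size into (low, high)."""
    sizes = [parse_params(part) for part in re.split(r"[–-]", label)]
    return min(sizes), max(sizes)
```

With this, `parse_range("110M–340M")` yields the lower and upper bounds of BERT's listed range, and a single label such as "20B" yields the same value for both bounds.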

---

### Detailed Analysis

#### Decoder-only Models (Top Section)

- **GPT-1** (110M, 2018, Open-Source)

- **GPT-2** (117M–1.5B, 2019, Open-Source)

- **GPT-3** (175B, 2020, Closed-Source)

- **XLNet** (110M–340M, 2019, Open-Source)

- **GLaM** (1.2T, 2021, Closed-Source)

- **OPT** (175B, 2022, Open-Source)

- **OPT-IML** (175B, 2022, Open-Source)

- **Bard** (137B, 2023, Closed-Source)

- **Unknown** (Unlabeled, 2023, Open-Source)

#### Encoder-decoder Models (Middle Section)

- **BART** (140M, 2019, Open-Source)

- **T5** (66M–11B, 2019, Open-Source)

- **T0** (11B, 2020, Open-Source)

- **Switch** (1.6T, 2021, Open-Source)

- **Flan-T5** (80M–11B, 2022, Open-Source)

- **GLM** (110M–10B, 2021, Open-Source)

- **GLM-130B** (130B, 2022, Open-Source)

- **ST-MoE** (4.1B–269B, 2022, Open-Source)

- **UL2** (20B, 2022, Open-Source)

- **Flan-UL2** (20B, 2022, Open-Source)

#### Encoder-only Models (Bottom Section)

- **BERT** (110M–340M, 2018, Open-Source)

- **DistilBERT** (66M, 2019, Open-Source)

- **ALBERT** (11M–223M, 2020, Open-Source)

- **ELECTRA** (14M–110M, 2020, Open-Source)

- **RoBERTa** (125M–355M, 2019, Open-Source)

- **ERNIE** (114M, 2020, Open-Source)

- **DeBERTa** (44M–304M, 2021, Open-Source)
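The lists above can be treated as a small table, which makes it possible to check size comparisons directly. The sketch below encodes a subset of the listed models (using the upper end of each size range) and finds the largest model per architecture and per license. The tuple layout and the helper `largest_by` are assumptions made for illustration, not part of the diagram.

```python
# (name, architecture, largest listed size in parameters, year, license)
# A subset of the entries listed above, with values copied from those lists.
MODELS = [
    ("GLaM", "decoder-only", 1.2e12, 2021, "closed"),
    ("OPT", "decoder-only", 175e9, 2022, "open"),
    ("Bard", "decoder-only", 137e9, 2023, "closed"),
    ("T5", "encoder-decoder", 11e9, 2019, "open"),
    ("Switch", "encoder-decoder", 1.6e12, 2021, "open"),
    ("UL2", "encoder-decoder", 20e9, 2022, "open"),
    ("BERT", "encoder-only", 340e6, 2018, "open"),
    ("RoBERTa", "encoder-only", 355e6, 2019, "open"),
    ("DeBERTa", "encoder-only", 304e6, 2021, "open"),
]


def largest_by(field_index: int) -> dict:
    """Return {field value: (name, size)} for the largest model in each group."""
    best = {}
    for row in MODELS:
        key, name, size = row[field_index], row[0], row[2]
        if key not in best or size > best[key][1]:
            best[key] = (name, size)
    return best


largest_per_architecture = largest_by(1)  # group by architecture
largest_per_license = largest_by(4)       # group by license
```

On this subset, the largest open-source entry (Switch) is larger than the largest closed-source entry (GLaM), which is worth keeping in mind when reading claims about license and size.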

---

### Key Observations

1. **Size Trends**:

   - The two largest models both date from 2021: **Switch (1.6T)**, an encoder-decoder model, and **GLaM (1.2T)**, a decoder-only model.

   - Encoder-only models are far smaller (e.g., **BERT: 110M–340M**) but foundational (2018–2021).

   - Closed-source models are not uniformly larger than open-source ones: **GLaM (1.2T)** is closed-source, but the open-source **Switch (1.6T)** is larger, and the open-source **OPT (175B)** exceeds the closed-source **Bard (137B)**.

2. **Temporal Progression**:

   - **2018–2019**: Encoder-only models (BERT, RoBERTa) and early decoder-only models (GPT-1, GPT-2).

   - **2020–2021**: Sharp growth in decoder-only model sizes (GPT-3, GLaM).

   - **2022–2023**: Open-source decoder-only releases (OPT, OPT-IML), encoder-decoder models (Flan-T5, Flan-UL2), and the closed-source Bard.

3. **Licensing**:

   - Open-source models dominate the early years (2018–2021).

   - Closed-source models appear mainly in the later years; **GLaM (1.2T, 2021)** is the largest of them and **Bard (2023)** the most recent.

4. **Architectural Diversity**:

   - Encoder-decoder models (e.g., T5, Flan-T5) pair an encoder with a decoder, which suits sequence-to-sequence tasks.

   - Encoder-only models include variants built for efficiency (e.g., DistilBERT, ALBERT).

---

### Interpretation

The diagram shows a clear trend toward **larger models** over time, driven by both open-source and closed-source work. Open-source models established the foundational architectures (e.g., BERT, GPT-1, T5), and trillion-parameter scale was reached in 2021 by both the closed-source GLaM (1.2T) and the open-source Switch (1.6T). The encoder-decoder line (e.g., T5, Flan-T5) continues through 2022, which suggests sustained interest in that architecture. Closed-source models are concentrated in the later years and at large scales, but they do not hold the largest size overall. Their scarcity in the early years may reflect a more recent shift in industry practice, possibly tied to the cost of training at scale or to strategic IP management; the diagram itself does not indicate the cause.