## Multi-Panel Line Chart: Impact of Ablating Attention Heads on Model Performance

### Overview

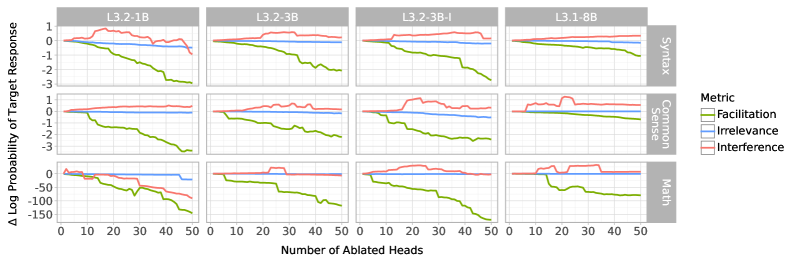

The image displays a 3x4 grid of line charts (3 rows, 4 columns, 12 subplots total) illustrating how the performance of different Large Language Models (LLMs) changes as an increasing number of attention heads is "ablated" (likely disabled or removed). Performance is measured as the change in log probability of the target response. The charts are organized by model variant (columns) and evaluation domain (rows).
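The y-axis quantity can be illustrated with a minimal sketch. It assumes, without confirmation from the figure, that the plotted value is the summed per-token log probability of the target response under the ablated model minus the same sum under the unablated model, so that negative values mean ablation hurt the target. The function names and the token probabilities are hypothetical.

```python
import math


def sequence_log_prob(token_probs):
    """Sum of per-token log probabilities for one target response."""
    return sum(math.log(p) for p in token_probs)


def delta_log_prob(baseline_token_probs, ablated_token_probs):
    """Ablated minus baseline log probability of the same target response.

    Sign convention is an assumption: negative means the ablated model
    assigns less probability to the target than the unablated model.
    """
    return sequence_log_prob(ablated_token_probs) - sequence_log_prob(baseline_token_probs)


# Hypothetical per-token probabilities for a three-token target response.
baseline = [0.9, 0.8, 0.7]
ablated = [0.6, 0.5, 0.4]

delta = delta_log_prob(baseline, ablated)
print(f"delta log probability: {delta:.3f}")
```

Because the quantity is a sum over tokens under this assumption, longer target responses can produce larger absolute values for the same per-token degradation.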

### Components/Axes

* **Grid Structure:**
    * **Columns (Model Variants):** Labeled at the top of each column. From left to right: `L3.2-1B`, `L3.2-3B`, `L3.2-3B-I`, `L3.1-8B`.
    * **Rows (Evaluation Domains):** Labeled on the right side of the grid. From top to bottom: `Syntax`, `Common Sense`, `Math`.

* **Axes:**
    * **X-axis (All subplots):** `Number of Ablated Heads`. Linear scale from 0 to 50, with major ticks at 0, 10, 20, 30, 40, 50.
    * **Y-axis (All subplots):** `Δ Log Probability of Target Response`. The scale varies by row:
        * **Syntax & Common Sense Rows:** Linear scale from approximately -3.5 to +1.5. Major ticks at -3, -2, -1, 0, 1.
        * **Math Row:** Linear scale from approximately -160 to +10. Major ticks at -150, -100, -50, 0.

* **Legend:** Located on the far right of the image. Title: `Metric`. Contains three entries with corresponding line colors:
    * `Facilitation` - **Green line**
    * `Irrelevance` - **Blue line**
    * `Interference` - **Red line**
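The x-axis sweep can be sketched as a cumulative ablation loop. The figure does not say how heads are ablated or ranked, so this example assumes zero-ablation (replacing a head's output with zeros) and a precomputed ranking; summing head outputs stands in for the concatenation and output projection of a real attention layer. All names and values are hypothetical.

```python
def ablate_heads(head_outputs, ablated):
    """Zero the output vector of every head whose index is in `ablated`."""
    return [
        [0.0] * len(vec) if i in ablated else list(vec)
        for i, vec in enumerate(head_outputs)
    ]


def combine(head_outputs):
    """Sum head outputs elementwise (stand-in for concat + output projection)."""
    return [sum(vals) for vals in zip(*head_outputs)]


def cumulative_ablation(head_outputs, ranked_heads, max_k):
    """Combined output after ablating the first k ranked heads, for k = 0..max_k."""
    return [
        combine(ablate_heads(head_outputs, set(ranked_heads[:k])))
        for k in range(max_k + 1)
    ]


# Three toy heads with 2-dimensional outputs, and an assumed ablation order.
heads = [[1.0, 0.0], [0.5, 0.5], [0.0, 2.0]]
ranking = [2, 0, 1]

curve = cumulative_ablation(heads, ranking, max_k=3)
for k, combined in enumerate(curve):
    print(k, combined)
```

Mean-ablation (replacing a head's output with its average over a dataset) is another common choice and would change only `ablate_heads`.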

### Detailed Analysis

**Trends and approximate values read from each subplot:**

**Row 1: Syntax**

* **L3.2-1B:** Green (Facilitation) starts near 0, declines steadily to ~-3.2 at 50 heads. Blue (Irrelevance) remains near 0 throughout. Red (Interference) fluctuates slightly above 0, peaking near +1 at ~15 heads, ending near 0.

* **L3.2-3B:** Green declines to ~-2.2. Blue stays near 0. Red stays near 0 with minor fluctuations.

* **L3.2-3B-I:** Green declines to ~-2.8. Blue stays near 0. Red shows a slight positive bump between 20-40 heads, peaking near +0.8.

* **L3.1-8B:** Green declines to ~-1.2. Blue stays near 0. Red stays near 0.

**Row 2: Common Sense**

* **L3.2-1B:** Green declines to ~-3.0. Blue stays near 0. Red shows a small positive bump, peaking near +0.5 at ~25 heads.

* **L3.2-3B:** Green declines to ~-2.0. Blue stays near 0. Red shows a positive bump, peaking near +1.0 at ~25 heads.

* **L3.2-3B-I:** Green declines to ~-2.5. Blue stays near 0. Red shows a pronounced positive bump, peaking near +1.5 at ~20 heads.

* **L3.1-8B:** Green declines to ~-1.0. Blue stays near 0. Red shows a positive bump, peaking near +1.2 at ~20 heads.

**Row 3: Math**

* **L3.2-1B:** Green shows a dramatic, steep decline, reaching ~-150 at 50 heads. Blue shows a slight negative trend, ending near -10. Red stays near 0.

* **L3.2-3B:** Green declines steeply to ~-100. Blue stays near 0. Red stays near 0.

* **L3.2-3B-I:** Green declines steeply to ~-150. Blue stays near 0. Red stays near 0.

* **L3.1-8B:** Green declines to ~-80. Blue shows a slight positive bump early on. Red shows a positive bump, peaking near +20 at ~25 heads.
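To compare the rows on a common footing, the approximate `Facilitation` endpoints listed above can be put in a small table and divided. This is a sketch over values read off the chart, not the underlying data, and the helper names are hypothetical.

```python
# Approximate Facilitation values at 50 ablated heads, read off the chart.
FACILITATION_AT_50 = {
    "Syntax": {"L3.2-1B": -3.2, "L3.2-3B": -2.2, "L3.2-3B-I": -2.8, "L3.1-8B": -1.2},
    "Common Sense": {"L3.2-1B": -3.0, "L3.2-3B": -2.0, "L3.2-3B-I": -2.5, "L3.1-8B": -1.0},
    "Math": {"L3.2-1B": -150.0, "L3.2-3B": -100.0, "L3.2-3B-I": -150.0, "L3.1-8B": -80.0},
}


def ratio_to_math(domain):
    """Per-model ratio of the Math decline to the decline in `domain`."""
    return {
        model: FACILITATION_AT_50["Math"][model] / value
        for model, value in FACILITATION_AT_50[domain].items()
    }


def least_affected(domain):
    """Model whose Facilitation value at 50 heads is closest to zero."""
    values = FACILITATION_AT_50[domain]
    return max(values, key=values.get)


for domain in ("Syntax", "Common Sense"):
    ratios = ratio_to_math(domain)
    print(domain, {model: round(r, 1) for model, r in ratios.items()})

print({domain: least_affected(domain) for domain in FACILITATION_AT_50})
```

With these readings, the Math decline is roughly 45 to 80 times the decline in the other two rows, and `L3.1-8B` is the least affected model in each domain.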

### Key Observations

1. **Dominant Negative Trend for Facilitation:** In every single subplot, the green line (`Facilitation`) shows a clear downward trend as more heads are ablated. This negative impact is most severe in the `Math` domain, where the declines (about -80 to -150) are roughly 50 times larger than in the other two rows (about -1 to -3).

2. **Stability of Irrelevance:** The blue line (`Irrelevance`) remains close to the zero baseline across nearly all conditions, indicating that ablating heads has minimal effect on this metric.

3. **Variable Impact on Interference:** The red line (`Interference`) shows varied behavior. It often remains near zero but exhibits notable positive bumps (an increase in log probability) in all four `Common Sense` subplots, in the `L3.2-1B` and `L3.2-3B-I` `Syntax` subplots, and in the `L3.1-8B` `Math` subplot. It does not show a sustained negative trend in any subplot.

4. **Model Size & Variant Differences:** The largest model (`L3.1-8B`) shows the least severe decline in `Facilitation` in all three domains (about -1.2, -1.0, and -80). The `L3.2-3B-I` variant shows the most pronounced positive `Interference` bump in `Common Sense` (about +1.5), although in `Syntax` its bump (about +0.8) is slightly smaller than that of `L3.2-1B` (about +1).

5. **Domain Sensitivity:** The `Math` domain is uniquely sensitive to head ablation for the `Facilitation` metric, with performance dropping catastrophically compared to `Syntax` and `Common Sense`.
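The `Interference` observation can be checked against the approximate peak values from the detailed analysis. In this sketch, "near 0" is encoded as `0.0` and the `0.5` cutoff for counting a bump is a hypothetical choice. The `Math` row uses a much larger y-axis scale, so the same cutoff is far less strict there.

```python
from collections import Counter

# Approximate Interference peaks read off the chart; "near 0" is encoded as 0.0.
INTERFERENCE_PEAK = {
    ("Syntax", "L3.2-1B"): 1.0,
    ("Syntax", "L3.2-3B"): 0.0,
    ("Syntax", "L3.2-3B-I"): 0.8,
    ("Syntax", "L3.1-8B"): 0.0,
    ("Common Sense", "L3.2-1B"): 0.5,
    ("Common Sense", "L3.2-3B"): 1.0,
    ("Common Sense", "L3.2-3B-I"): 1.5,
    ("Common Sense", "L3.1-8B"): 1.2,
    ("Math", "L3.2-1B"): 0.0,
    ("Math", "L3.2-3B"): 0.0,
    ("Math", "L3.2-3B-I"): 0.0,
    ("Math", "L3.1-8B"): 20.0,
}

BUMP_THRESHOLD = 0.5  # hypothetical cutoff for calling a peak a "bump"


def subplots_with_bump(peaks, threshold):
    """Return the (domain, model) keys whose peak meets the threshold."""
    return [key for key, peak in peaks.items() if peak >= threshold]


bumps = subplots_with_bump(INTERFERENCE_PEAK, BUMP_THRESHOLD)
per_domain = Counter(domain for domain, _ in bumps)
print(dict(per_domain))
```

Under these assumptions the bumps concentrate in `Common Sense`, where every model crosses the cutoff.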

### Interpretation

This chart investigates the functional specialization of attention heads in LLMs by measuring the effect of their removal (ablation) on tasks from three domains: syntax, common sense, and math.

* **What the data suggests:** The consistent, steep decline of the `Facilitation` metric indicates that a significant number of attention heads are crucial for the model to correctly generate or support the target response. Their removal directly harms performance. This effect is dramatically amplified for mathematical reasoning, suggesting that math tasks rely on a more fragile or distributed set of attentional mechanisms.

* **Relationship between elements:** The `Irrelevance` metric acts as a control, showing that the ablation procedure itself doesn't randomly affect all predictions. The `Interference` metric's occasional positive bumps are intriguing; they suggest that in some contexts (especially common sense reasoning), removing certain heads might actually *reduce* the model's tendency to generate incorrect or interfering information, thereby improving the relative probability of the correct target.

* **Notable anomalies:** The extreme scale of the `Math` y-axis is the most striking feature. It implies that the probability of a correct mathematical response is highly vulnerable to perturbation of the model's attention mechanism. The positive `Interference` bumps in `Common Sense`, largest for the `L3.2-3B-I` and `L3.1-8B` models, could indicate the presence of "counter-productive" heads that the model has learned to use for certain types of distractors.

**In summary, the visualization suggests that attention heads are not uniformly important: their necessity varies by task domain, with mathematical reasoning depending most heavily on the ablated heads for facilitation, while common sense reasoning may involve heads that sometimes introduce interference.**