## Line Chart: Impact of Ablated Attention Heads on Model Performance Across Tasks

### Overview

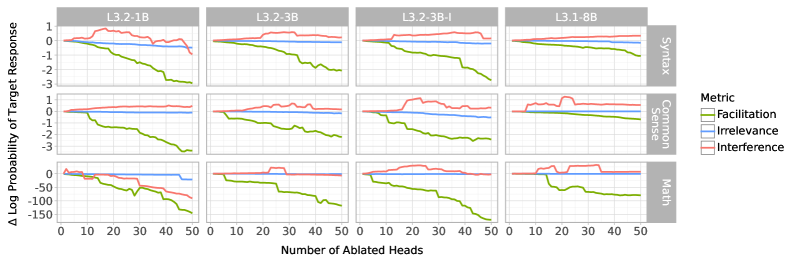

The image is a multi-panel line chart comparing the effects of ablating attention heads in four language models (L3.2-1B, L3.2-3B, L3.2-3B-I, L3.1-8B) on three tasks: Syntax, Common Sense, and Math. Each panel has three lines, "Facilitation" (green), "Irrelevance" (blue), and "Interference" (red), plotted against the number of ablated heads (0–50). The y-axis in every panel is labeled Δ Log Probability of Target Response, and it is drawn at two different scales.

---

### Components/Axes

- **X-axis**: "Number of Ablated Heads" (0–50, integer increments).

- **Y-axes** (both labeled "Δ Log Probability of Target Response"):

  - **Top**: range -150 to 1.

  - **Bottom**: range -3 to 1.

- **Legend**:

  - Green: Facilitation

  - Blue: Irrelevance

  - Red: Interference

- **Subplots**:

  - **Rows**: Syntax (top row), Common Sense (middle row), Math (bottom row).

  - **Columns**: Models (L3.2-1B, L3.2-3B, L3.2-3B-I, L3.1-8B).
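The y-axis quantity can be illustrated with a short sketch. The figure does not define it, so this assumes that the log probability of a target response is the sum of its per-token log probabilities and that Δ is the ablated score minus the unablated baseline; under that assumption, negative values mean ablation made the target response less likely. The helper names and the token probabilities are hypothetical.

```python
import math

def target_log_prob(token_probs):
    """Log probability of a whole target response, assumed here to be the
    sum of per-token log probabilities (log of their product)."""
    return sum(math.log(p) for p in token_probs)

def delta_log_prob(baseline_probs, ablated_probs):
    """Hypothetical helper: log p_ablated(target) - log p_baseline(target).
    Negative values mean the ablation made the target less likely."""
    return target_log_prob(ablated_probs) - target_log_prob(baseline_probs)

# Made-up per-token probabilities for a 3-token target response.
baseline = [0.9, 0.8, 0.7]
ablated = [0.9, 0.4, 0.7]  # only the second token's probability halves

print(round(delta_log_prob(baseline, ablated), 4))  # → -0.6931
```

Because the per-token terms add up, halving one token's probability contributes log(0.5) ≈ -0.69 to Δ regardless of the other tokens.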

---

### Detailed Analysis

#### Syntax Metric

- **L3.2-1B**:

  - Green (Facilitation): Starts near 0, declines sharply to ~-3 by 50 heads.

  - Blue (Irrelevance): Flat near 0.

  - Red (Interference): Peaks at ~0.5, then declines to ~-0.5.

- **L3.2-3B**:

  - Green: Declines to ~-2.5.

  - Blue: Slightly negative (~-0.2).

  - Red: Peaks at ~0.3, then stabilizes.

- **L3.2-3B-I**:

  - Green: Declines to ~-2.8.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~0.4, then stabilizes.

- **L3.1-8B**:

  - Green: Declines to ~-2.2.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~0.5, then stabilizes.

#### Common Sense Metric

- **L3.2-1B**:

  - Green: Declines to ~-2.5.

  - Blue: Flat near 0.

  - Red: Peaks at ~0.3, then declines to ~-0.2.

- **L3.2-3B**:

  - Green: Declines to ~-2.2.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~0.4, then stabilizes.

- **L3.2-3B-I**:

  - Green: Declines to ~-2.5.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~0.5, then stabilizes.

- **L3.1-8B**:

  - Green: Declines to ~-2.0.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~0.6, then stabilizes.

#### Math Metric

- **L3.2-1B**:

  - Green: Declines to ~-50.

  - Blue: Flat near 0.

  - Red: Peaks at ~-20, then declines to ~-30.

- **L3.2-3B**:

  - Green: Declines to ~-45.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~-15, then declines to ~-25.

- **L3.2-3B-I**:

  - Green: Declines to ~-48.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~-10, then declines to ~-20.

- **L3.1-8B**:

  - Green: Declines to ~-40.

  - Blue: Slightly negative (~-0.1).

  - Red: Peaks at ~-5, then declines to ~-15.

---

### Key Observations

1. **Facilitation (Green)**:

   - Consistently declines across all models and metrics as more heads are ablated.

   - Most pronounced in the Math metric (e.g., L3.2-1B drops to ~-50).

2. **Irrelevance (Blue)**:

   - Stays close to 0 across all models and metrics.

3. **Interference (Red)**:

   - Peaks early (at ~10–20 ablated heads) and then declines.

   - Largest magnitude in the Math metric (e.g., L3.2-1B peaks at ~-20).
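Observation 1 can be checked against the numbers in the Detailed Analysis with a few lines of Python. The sketch only reuses the approximate end values of the green line that the analysis reads off the chart by eye; it introduces no new data, and the variable names are illustrative.

```python
# Approximate end values of the green (Facilitation) line at 50 ablated heads,
# as listed in the Detailed Analysis (eyeballed from the chart).
facilitation_end = {
    "Syntax": {"L3.2-1B": -3.0, "L3.2-3B": -2.5, "L3.2-3B-I": -2.8, "L3.1-8B": -2.2},
    "Common Sense": {"L3.2-1B": -2.5, "L3.2-3B": -2.2, "L3.2-3B-I": -2.5, "L3.1-8B": -2.0},
    "Math": {"L3.2-1B": -50.0, "L3.2-3B": -45.0, "L3.2-3B-I": -48.0, "L3.1-8B": -40.0},
}

def mean(values):
    values = list(values)
    return sum(values) / len(values)

# Average end value per task across the four models.
task_means = {task: mean(by_model.values()) for task, by_model in facilitation_end.items()}
for task, m in task_means.items():
    print(f"{task:<13} {m:7.2f}")

# Task whose Facilitation line ends lowest on average.
print(min(task_means, key=task_means.get))  # → Math
```

The Math average (about -45.75) is more than an order of magnitude larger than the Syntax and Common Sense averages, which matches the "most pronounced in Math" observation.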

---

### Interpretation

The data suggests that ablating attention heads in these models has distinct effects on task performance:

- **Facilitation** (green) decreases as more heads are removed, indicating that certain heads are critical for task-specific processing.

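- **Interference** (red) rises early in the Syntax and Common Sense panels (peaking at ~0.3–0.6) and then stabilizes or declines; in the Math panels it stays negative throughout. The early rise suggests that removing some heads may disrupt the model's ability to avoid irrelevant or conflicting information, but this effect diminishes with further ablation.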

- **Irrelevance** (blue) remains stable, implying that the models' ability to ignore irrelevant information is less sensitive to head ablation.

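- The **Math metric** shows the most drastic changes, particularly in Facilitation, where the green line falls to between ~-40 and ~-50 compared with ~-2 to ~-3 for Syntax and Common Sense. This highlights the task's sensitivity to attention head configurations.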

The trends align with the hypothesis that attention heads play specialized roles, and their removal disproportionately impacts task-specific performance, especially in complex tasks like Math.
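The interpretation rests on what "ablating" a head does. The figure does not say which ablation method was used, so the toy sketch below assumes zero-ablation: a head's output is set to zero before the output projection. It uses a single NumPy self-attention layer with 8 heads and no causal mask, and ablates heads in index order. All of these choices, along with the function name `multi_head_attention` and its `ablated` parameter, are illustrative assumptions and not the setup behind the chart, where up to 50 heads are ablated across a full model.

```python
import numpy as np

def multi_head_attention(x, w_q, w_k, w_v, w_o, n_heads, ablated=()):
    """Toy multi-head self-attention (no causal mask) with zero-ablation.

    x: (seq, d_model); w_q, w_k, w_v, w_o: (d_model, d_model).
    Heads whose indices are in `ablated` have their output zeroed before
    the output projection (an assumed ablation method).
    """
    seq, d_model = x.shape
    d_head = d_model // n_heads

    def split(t):
        # (seq, d_model) -> (n_heads, seq, d_head)
        return t.reshape(seq, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)  # (n_heads, seq, seq)
    scores -= scores.max(axis=-1, keepdims=True)         # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)       # softmax over keys
    heads = weights @ v                                  # (n_heads, seq, d_head)
    for h in ablated:
        heads[h] = 0.0                                   # zero-ablate head h
    concat = heads.transpose(1, 0, 2).reshape(seq, d_model)
    return concat @ w_o

rng = np.random.default_rng(0)
seq, d_model, n_heads = 4, 16, 8
x = rng.normal(size=(seq, d_model))
w_q, w_k, w_v, w_o = (rng.normal(size=(d_model, d_model)) / np.sqrt(d_model) for _ in range(4))

full = multi_head_attention(x, w_q, w_k, w_v, w_o, n_heads)
# Ablate an increasing number of heads, loosely mirroring the chart's x-axis.
for n in range(n_heads + 1):
    out = multi_head_attention(x, w_q, w_k, w_v, w_o, n_heads, ablated=range(n))
    print(n, round(float(np.linalg.norm(out - full)), 3))
```

With every head ablated the layer's output is exactly zero. In a real model, the effect on Δ log probability depends on which heads are removed and in what order, which is what the chart's three lines compare.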