## Diagram: System Architecture for Agent-Environment Interaction and Training

### Overview

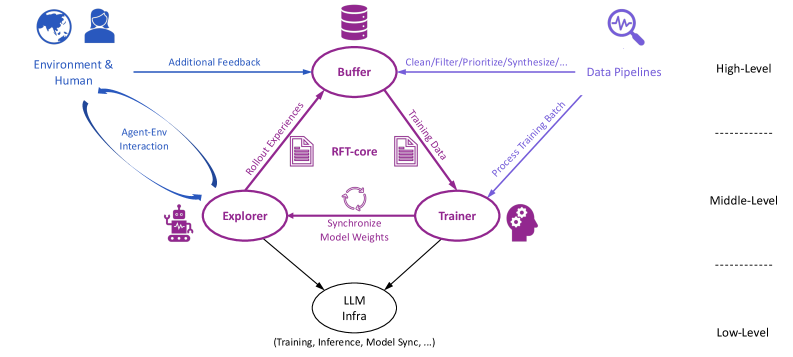

The diagram illustrates a multi-component system architecture for agent-environment interaction, training, and inference. It depicts data flow between components such as the Buffer, Explorer, Trainer, RFT-core, and LLM Infra, with feedback loops and synchronization mechanisms.

### Components

- **Key Components**:

  - **Buffer**: Central node that receives "Additional Feedback" from Environment & Human and processes data through "Clean/Filter/Prioritize/Synthesize..." pipelines.

  - **Explorer**: Receives "Rollout Experiences" from the Buffer and interacts with Environment & Human through the "Agent-Env Interaction" loop.

  - **Trainer**: Receives "Training Data" from the Buffer and a "Process Training Batch" flow from the Explorer, and synchronizes model weights with LLM Infra.

  - **RFT-core**: Central to the feedback loops; connected to both Explorer and Trainer.

  - **LLM Infra**: Lowest layer, handling "Training, Inference, Model Sync..."; connected to both Explorer and Trainer.

- **Data Flow**:

  - Arrows indicate directional processes (e.g., "Rollout Experiences" from Buffer to Explorer).

  - Feedback loops (e.g., "Agent-Env Interaction" between Explorer and Environment & Human).

  - Synchronization mechanisms (e.g., "Synchronize Model Weights" between Trainer and LLM Infra).
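
The labeled arrows listed above can be recorded as a small edge list, which makes the described topology easy to inspect. This is only a sketch of the description, not code from the system itself: the names `EDGES`, `outgoing`, and `components` are hypothetical, unlabeled connections (for example those of RFT-core) are left out, and the "Agent-Env Interaction" loop is stored as a single edge for brevity.

```python
from collections import defaultdict

# (source, target, label) triples transcribed from the description above.
# Assumption: only arrows with an explicit label are included.
EDGES = [
    ("Environment & Human", "Buffer", "Additional Feedback"),
    ("Buffer", "Explorer", "Rollout Experiences"),
    ("Explorer", "Environment & Human", "Agent-Env Interaction"),
    ("Buffer", "Trainer", "Training Data"),
    ("Explorer", "Trainer", "Process Training Batch"),
    ("Trainer", "LLM Infra", "Synchronize Model Weights"),
]


def outgoing(edges):
    """Group (target, label) pairs by source component."""
    out = defaultdict(list)
    for src, dst, label in edges:
        out[src].append((dst, label))
    return dict(out)


def components(edges):
    """Return the sorted names of all components that appear on any edge."""
    return sorted({name for src, dst, _ in edges for name in (src, dst)})
```

Calling `outgoing(EDGES)["Buffer"]` lists the two flows that leave the Buffer, toward the Explorer and the Trainer.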

### Detailed Analysis

- **Buffer**: Acts as a central hub for data preprocessing and prioritization, feeding into both Explorer and Trainer.

- **Explorer**: Engages in active exploration of the environment, generating experiences that inform training.

- **Trainer**: Processes training data and batches, ensuring model updates are synchronized with LLM Infra.

- **RFT-core**: Likely the core of a reinforcement fine-tuning (RFT) framework, tying the Explorer and Trainer together so that learning proceeds iteratively through feedback.

- **LLM Infra**: Provides the foundational infrastructure for training and inference, ensuring model consistency.
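
The Buffer's "Clean/Filter/Prioritize/Synthesize..." label suggests a chain of stages applied in order. A minimal sketch of such a chain follows, assuming a hypothetical `Experience` record with a text payload and a scalar reward; the stage functions and their rules (strip whitespace, drop empty items, sort by reward) are illustrative assumptions, not the behavior of the depicted system, and the "Synthesize" stage is omitted.

```python
from dataclasses import dataclass


@dataclass
class Experience:
    # Hypothetical record; the diagram does not specify the data format.
    text: str
    reward: float


def clean(exps):
    """Normalize each item (here: strip surrounding whitespace)."""
    return [Experience(e.text.strip(), e.reward) for e in exps]


def keep_nonempty(exps):
    """Filter out items whose text is empty."""
    return [e for e in exps if e.text]


def prioritize(exps):
    """Order items so that higher-reward experiences come first."""
    return sorted(exps, key=lambda e: e.reward, reverse=True)


def run_pipeline(exps, stages=(clean, keep_nonempty, prioritize)):
    """Apply each stage in order and return the resulting list."""
    for stage in stages:
        exps = stage(exps)
    return exps
```

Because each stage takes a list and returns a list, stages can be reordered or swapped without touching the others, which matches the modular role the diagram gives the Buffer.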

### Key Observations

- **Feedback Loops**: Multiple feedback mechanisms (e.g., "Agent-Env Interaction," "Rollout Experiences") suggest iterative learning and adaptation.

- **Synchronization**: The explicit "Synchronize Model Weights" link between Trainer and LLM Infra indicates that the weights used elsewhere in the system must be kept consistent with the trained ones.

- **Modular Design**: Components are decoupled but interconnected, allowing scalability and specialization (e.g., Buffer handles data pipelines, LLM Infra handles training, inference, and model sync).
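
One common way to keep weights consistent across decoupled components is a version counter: the training side bumps the version on every publish, and the inference side copies weights only when its version is stale. The diagram does not say how "Synchronize Model Weights" is implemented, so the sketch below is an assumption; `WeightStore` and `InferenceWorker` are hypothetical names, and weights are plain dictionaries rather than real tensors.

```python
class WeightStore:
    """Holds the latest published weights and a monotonically increasing version."""

    def __init__(self, weights):
        self.weights = dict(weights)
        self.version = 0

    def publish(self, weights):
        """Called by the training side after an update."""
        self.weights = dict(weights)
        self.version += 1


class InferenceWorker:
    """Keeps a local copy of the weights and refreshes it only when stale."""

    def __init__(self):
        self.weights = {}
        self.version = -1  # guarantees the first sync copies the weights

    def sync(self, store):
        """Copy weights if the store is newer; return True if a copy happened."""
        if store.version == self.version:
            return False
        self.weights = dict(store.weights)
        self.version = store.version
        return True
```

The version check keeps repeated `sync` calls cheap when nothing has changed, so the inference side can poll as often as it likes.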

### Interpretation

This architecture represents a closed-loop system for training and deploying intelligent agents. The Buffer cleans, filters, and prioritizes data before distributing it, the Explorer gathers experience by interacting with the environment and humans, and the Trainer turns that data into model updates. RFT-core and LLM Infra supply the learning and inference machinery, and the feedback loops allow continuous improvement. The system’s modularity suggests adaptability to different environments and tasks, with the Buffer and its "Clean/Filter/Prioritize/Synthesize..." pipelines acting as the main control point for data quality.