## Line Graph: LM Loss vs. Number of Hybrid Full Layers

### Overview

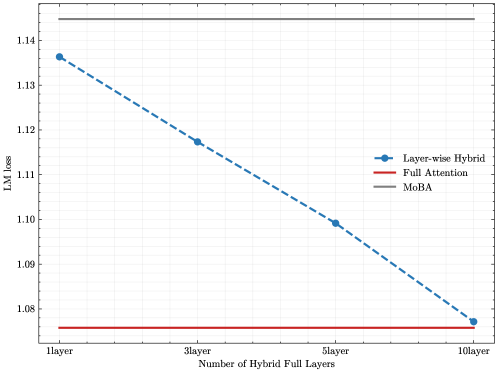

The image is a line graph comparing the Language Model (LM) Loss of three attention configurations against the number of "Hybrid Full Layers" used. Only one method ("Layer-wise Hybrid") varies with that number; the other two ("Full Attention" and "MoBA") are drawn as horizontal lines and serve as constant baselines.

### Components/Axes

* **Chart Type:** Line graph with markers.

* **X-Axis:**

    * **Title:** "Number of Hybrid Full Layers"

    * **Scale/Markers:** Categorical with four discrete points: "1 layer", "3 layer", "5 layer", "10 layer".

* **Y-Axis:**

    * **Title:** "LM Loss"

    * **Scale:** Linear, ranging from approximately 1.075 to 1.145. Major tick marks are at 1.08, 1.09, 1.10, 1.11, 1.12, 1.13, and 1.14.

* **Legend:**

    * **Position:** Center-right of the plot area.

    * **Series 1:** "Layer-wise Hybrid" - Represented by a blue dashed line with circular markers.

    * **Series 2:** "Full Attention" - Represented by a solid red line.

    * **Series 3:** "MoBA" - Represented by a solid gray line.

* **Background:** White with a light gray grid.

### Detailed Analysis

**1. Layer-wise Hybrid (Blue Dashed Line with Circles):**

* **Trend:** Shows a clear, consistent downward (improving) trend as the number of hybrid full layers increases.

* **Data Points (Approximate):**

    * At **1 layer**: LM Loss ≈ 1.136

    * At **3 layers**: LM Loss ≈ 1.118

    * At **5 layers**: LM Loss ≈ 1.109

    * At **10 layers**: LM Loss ≈ 1.077

* **Visual Check:** The line slopes downward from left to right. The blue color and circular markers match the legend entry for "Layer-wise Hybrid".

**2. Full Attention (Solid Red Line):**

* **Trend:** Perfectly horizontal (constant). This indicates its performance is independent of the "Number of Hybrid Full Layers" parameter, serving as a fixed baseline.

* **Value:** LM Loss is constant at approximately **1.075**. This is the lowest (best) loss value on the chart.

* **Visual Check:** The red line is positioned at the very bottom of the plot area, matching the "Full Attention" legend.

**3. MoBA (Solid Gray Line):**

* **Trend:** Perfectly horizontal (constant). Also serves as a fixed baseline.

* **Value:** LM Loss is constant at approximately **1.145**. This is the highest (worst) loss value on the chart.

* **Visual Check:** The gray line is positioned at the very top of the plot area, matching the "MoBA" legend.
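The three series described above can be redrawn with a short matplotlib sketch. This is an approximate reconstruction, not the original plotting code: the loss values are the rounded readings listed in this section, and the function name, output file name, figure size, and exact colors are illustrative assumptions.

```python
# Approximate values read off the chart (assumptions, not exact source data).
LAYER_LABELS = ["1 layer", "3 layer", "5 layer", "10 layer"]
HYBRID_LOSS = [1.136, 1.118, 1.109, 1.077]
FULL_ATTENTION_LOSS = 1.075
MOBA_LOSS = 1.145


def redraw_figure(path="lm_loss_vs_hybrid_layers.png"):
    """Save an approximate re-creation of the chart to `path` (hypothetical name)."""
    # Imported lazily so the data above can be used without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # non-interactive backend that only writes files
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(6, 4))
    # The x-axis is categorical, so the four points are evenly spaced.
    positions = list(range(len(LAYER_LABELS)))

    # Variable series: blue dashed line with circular markers.
    ax.plot(positions, HYBRID_LOSS, linestyle="--", marker="o",
            color="tab:blue", label="Layer-wise Hybrid")
    # Constant baselines: horizontal lines spanning the plot.
    ax.axhline(FULL_ATTENTION_LOSS, color="tab:red", label="Full Attention")
    ax.axhline(MOBA_LOSS, color="gray", label="MoBA")

    ax.set_xticks(positions)
    ax.set_xticklabels(LAYER_LABELS)
    ax.set_xlabel("Number of Hybrid Full Layers")
    ax.set_ylabel("LM Loss")
    ax.grid(True, color="lightgray")
    ax.legend(loc="center right")

    fig.savefig(path, dpi=150, bbox_inches="tight")
    plt.close(fig)
    return path
```

Calling `redraw_figure()` writes a PNG to the given path. Because the tick positions are evenly spaced, the gap between "5 layer" and "10 layer" looks the same as the gap between "1 layer" and "3 layer", as it does on a categorical axis.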

### Key Observations

1. **Inverse Relationship:** For the "Layer-wise Hybrid" method, LM Loss decreases at every step as the number of hybrid full layers increases, from ≈1.136 at 1 layer to ≈1.077 at 10 layers (a total drop of ≈0.059).

2. **Performance Convergence:** At 1 layer, "Layer-wise Hybrid" (≈1.136) is only ≈0.009 better than "MoBA" (≈1.145) and well short of "Full Attention" (≈1.075). At 10 layers (≈1.077) it is within ≈0.002 of the "Full Attention" baseline.

3. **Baseline Spread:** There is a substantial performance gap (≈0.07 in LM Loss) between the two constant baselines, "Full Attention" (best) and "MoBA" (worst).

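4. **Uneven Rate of Improvement:** The per-layer improvement for "Layer-wise Hybrid" is largest at the start. The drop from 1 to 3 layers is ≈0.018 (≈0.009 per layer), from 3 to 5 layers ≈0.009 (≈0.0045 per layer), and from 5 to 10 layers ≈0.032 (≈0.0064 per layer). The last segment has the largest absolute drop only because it spans five layers rather than two. Since the x-axis is categorical, the visual slope does not reflect these per-layer rates, and the approximate readings do not show a strictly diminishing pattern.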
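The segment-by-segment arithmetic for the "Layer-wise Hybrid" line can be checked with a few lines of Python. This is a minimal sketch that assumes the approximate readings listed under Detailed Analysis; `segment_stats` is a hypothetical helper name introduced here for illustration.

```python
# Approximate readings from the chart; not exact figures from the source.
layers = [1, 3, 5, 10]
loss = [1.136, 1.118, 1.109, 1.077]


def segment_stats(layers, loss):
    """Return (start, end, total_drop, drop_per_layer) for each adjacent pair."""
    stats = []
    for i in range(len(layers) - 1):
        span = layers[i + 1] - layers[i]   # number of layers added in this segment
        drop = loss[i] - loss[i + 1]       # positive when loss improves
        stats.append((layers[i], layers[i + 1], round(drop, 4), round(drop / span, 4)))
    return stats


for start, end, drop, rate in segment_stats(layers, loss):
    print(f"{start} -> {end} layers: drop {drop:.3f}, {rate:.4f} per layer")
```

With these readings the per-layer rates come out to about 0.009, 0.0045, and 0.0064, so the first segment improves fastest per added layer even though the last segment has the largest total drop.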

### Interpretation

This graph presents a technical evaluation likely from a machine learning research paper. It demonstrates the efficacy of a "Layer-wise Hybrid" attention mechanism.

* **What the data suggests:** The "Layer-wise Hybrid" approach is a tunable method where increasing a specific architectural parameter (hybrid full layers) directly improves model performance (reduces LM Loss). Its goal appears to be to approximate the performance of the "Full Attention" mechanism, which is often considered a gold standard but may be computationally expensive.

* **How elements relate:** The "Full Attention" and "MoBA" lines act as reference points that bracket the experiment: "Full Attention" marks the best (lowest) loss and "MoBA" the worst (highest). The "Layer-wise Hybrid" line is the variable under test, showing its trajectory between these bounds.

* **Notable implications:** The key takeaway is that adding hybrid full layers steadily closes the loss gap to "Full Attention". At 10 layers, "Layer-wise Hybrid" reaches ≈1.077, within ≈0.002 of "Full Attention" (≈1.075), suggesting it could be a viable, potentially more efficient alternative, although the chart itself reports only loss and no compute cost. "MoBA" has the highest loss in this specific comparison. The graph argues for the value of increasing hybrid layers in this architecture.
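One way to quantify the trajectory between the two baselines is the fraction of the MoBA-to-Full-Attention gap that "Layer-wise Hybrid" closes at each setting. The sketch below assumes the approximate readings from the chart; `gap_closed` is a hypothetical helper, not a metric named in the source.

```python
# Approximate readings from the chart; not exact figures from the source.
MOBA_LOSS = 1.145            # worst (highest) baseline
FULL_ATTENTION_LOSS = 1.075  # best (lowest) baseline
HYBRID_LOSS = {1: 1.136, 3: 1.118, 5: 1.109, 10: 1.077}


def gap_closed(loss, worst=MOBA_LOSS, best=FULL_ATTENTION_LOSS):
    """Fraction of the worst-to-best gap recovered: 0.0 at MoBA, 1.0 at Full Attention."""
    return (worst - loss) / (worst - best)


for n_layers, loss in HYBRID_LOSS.items():
    print(f"{n_layers} hybrid full layers: {gap_closed(loss):.1%} of the gap closed")
```

Under these readings the hybrid closes roughly 13% of the gap at 1 layer, 39% at 3, 51% at 5, and 97% at 10. The percentages inherit the uncertainty of the visually estimated data points.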