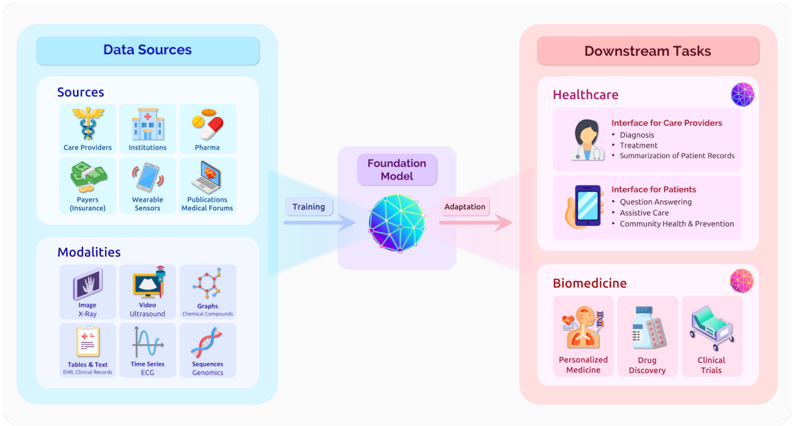

## Diagram: Foundation Model for Healthcare and Biomed

| Category | Description | Examples |
|---|---|---|
| **Data Sources** | Diverse data types used to train the model. | Electronic Health Records (EHR), Medical Imaging (X-rays, CT scans, MRIs), Genomic Data, Biomedical Literature (PubMed), Clinical Trials Data, Real-World Evidence (RWE), Wearable Sensor Data |
| **Foundation Model** | The core AI model, typically a large language model (LLM) or multimodal model. | BERT, GPT-3, PaLM, Med-PaLM, BioBERT, ClinicalBERT, GatorTron, TriLM |
| **Downstream Tasks** | Specific healthcare applications built on top of the foundation model. | Diagnosis Prediction, Drug Discovery, Personalized Medicine, Clinical Decision Support, Patient Risk Stratification, Medical Summarization, Report Generation, Anomaly Detection |
| **Evaluation Metrics** | Measures used to assess the performance of the model. | Accuracy, Precision, Recall, F1-score, AUC-ROC, BLEU score (for text generation), ROUGE score (for summarization), Clinical Utility |
| **Challenges** | Obstacles to overcome for successful implementation. | Data Privacy & Security (HIPAA compliance), Data Bias & Fairness, Model Interpretability & Explainability, Generalizability & Robustness, Computational Cost & Scalability, Regulatory Approval |
| **Key Technologies** | Technologies enabling the development and deployment of foundation models. | Transfer Learning, Self-Supervised Learning, Few-Shot Learning, Federated Learning, Distributed Training, Cloud Computing, GPUs, TPUs |
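
The classification metrics listed under **Evaluation Metrics** can be made concrete with a small sketch. The code below is illustrative only: the function names `binary_metrics` and `auc_roc`, the 0.5 decision threshold, and the toy labels and scores are all assumptions introduced here, not part of the diagram. A real evaluation would more likely rely on an established library.

```python
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives

    def ratio(num: float, den: float) -> float:
        # Return 0.0 instead of dividing by zero when a metric is undefined.
        return num / den if den else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
    }


def auc_roc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC-ROC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a correct pair.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative label")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data (made up for illustration): true labels and model scores.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
scores = [0.9, 0.2, 0.4, 0.8, 0.6, 0.1, 0.7, 0.3]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

print(binary_metrics(y_true, y_pred))
print(auc_roc(y_true, scores))
```

Accuracy, precision, recall, and F1 depend on the chosen threshold, while AUC-ROC summarizes ranking quality across all thresholds, which is one reason both kinds of metric appear in the table.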

**Diagram Components:**

* **Input Layer:** Represents the various data sources feeding into the foundation model.

* **Foundation Model Layer:** Illustrates the core AI model processing the data.

* **Output Layer:** Shows the downstream tasks and applications utilizing the model's output.

* **Feedback Loop:** Indicates the iterative process of model refinement based on evaluation metrics and real-world feedback.
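
The four components above can be sketched as a minimal data flow. Everything in this example is hypothetical: `toy_encoder` is a hand-written stand-in for a foundation model, `risk_head` is an arbitrary fixed scoring rule rather than a trained task head, and the record fields `age` and `note` are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Embedding = List[float]


def toy_encoder(record: dict) -> Embedding:
    """Stand-in for the foundation model layer: raw record -> feature vector."""
    age_feature = record["age"] / 100.0
    note_feature = len(record["note"].split()) / 10.0
    return [age_feature, note_feature]


def risk_head(embedding: Embedding) -> float:
    """Hypothetical downstream task head: fixed linear score clipped to [0, 1]."""
    score = 0.5 * embedding[0] + 0.5 * embedding[1]
    return max(0.0, min(1.0, score))


@dataclass
class Pipeline:
    encoder: Callable[[dict], Embedding]             # foundation model layer
    heads: Dict[str, Callable[[Embedding], float]]   # output layer (one head per task)
    feedback_log: List[dict] = field(default_factory=list)  # feedback loop storage

    def predict(self, record: dict) -> Dict[str, float]:
        # Input layer -> shared embedding -> one prediction per downstream task.
        embedding = self.encoder(record)
        return {task: head(embedding) for task, head in self.heads.items()}

    def record_feedback(self, task: str, predicted: float, observed: float) -> None:
        # Store the absolute error so later refinement can be driven by it.
        self.feedback_log.append({"task": task, "error": abs(predicted - observed)})

    def mean_abs_error(self, task: str) -> float:
        errors = [e["error"] for e in self.feedback_log if e["task"] == task]
        return sum(errors) / len(errors) if errors else 0.0


pipeline = Pipeline(encoder=toy_encoder, heads={"risk": risk_head})
prediction = pipeline.predict({"age": 60, "note": "chest pain on exertion"})
pipeline.record_feedback("risk", prediction["risk"], observed=1.0)
print(prediction, pipeline.mean_abs_error("risk"))
```

The point of the sketch is the shape of the architecture: one shared encoder serves several task heads, and logged errors provide the signal that a refinement step would consume.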

**Additional Notes:**

* Foundation models are pre-trained on massive datasets and can be adapted to a wide range of downstream tasks.

* Multimodal models can process and integrate data from multiple sources (e.g., text, images, genomics).

* Addressing challenges related to data privacy, bias, and interpretability is crucial for responsible AI in healthcare.

* Continuous monitoring and evaluation are essential to ensure the model's performance and safety over time.
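
One simple way to picture continuous monitoring is a rolling accuracy check over the most recent predictions. The sketch below is an assumption-laden illustration, not a recommended clinical safeguard: the class name `RollingAccuracyMonitor`, the window size, the baseline accuracy, and the tolerance are all hypothetical values chosen for the example.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Flags the model for review when recent accuracy drops below a threshold."""

    def __init__(self, window: int = 100, baseline: float = 0.90, tolerance: float = 0.05):
        self.outcomes = deque(maxlen=window)  # True where prediction matched outcome
        self.baseline = baseline              # accuracy measured at validation time
        self.tolerance = tolerance            # allowed drop before raising a flag

    def accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self) -> bool:
        # Wait for a full window so a few early errors do not trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.baseline - self.tolerance

    def update(self, predicted: int, observed: int) -> bool:
        """Record one prediction/outcome pair and return the current alert state."""
        self.outcomes.append(predicted == observed)
        return self.needs_review()


monitor = RollingAccuracyMonitor(window=4, baseline=0.9, tolerance=0.1)
for predicted, observed in [(1, 1), (0, 0), (1, 1), (0, 0), (1, 0)]:
    alert = monitor.update(predicted, observed)
print(monitor.accuracy(), alert)
```

In practice the same pattern could track any metric from the table above, and could be kept per patient subgroup to surface the bias and fairness concerns noted earlier.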