TECHNICAL ASSET FINGERPRINT

e1b1e151da339a462dc51b9d

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Process Diagram: Chain-of-Thought (CoT) Training and Fine-tuning

### Overview

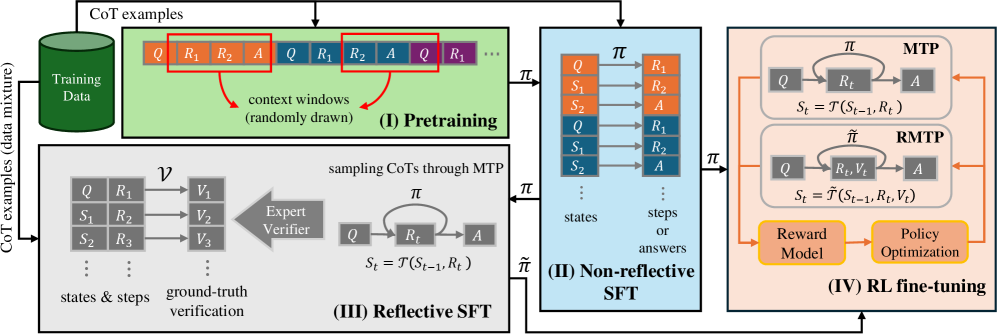

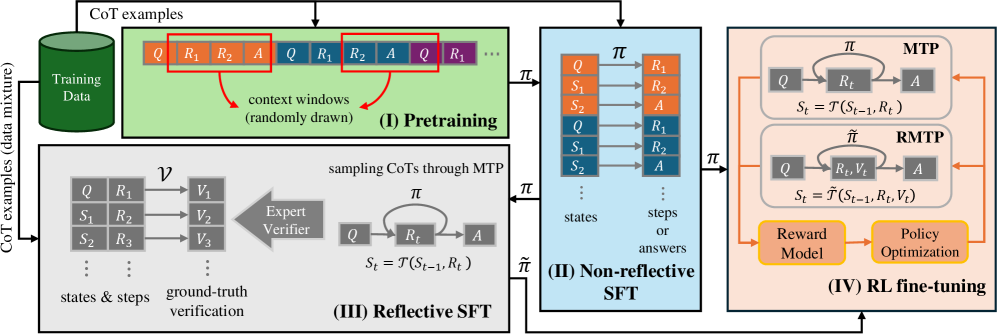

The image is a process diagram illustrating a four-stage approach for training and fine-tuning a model using Chain-of-Thought (CoT) examples. The stages are Pretraining, Non-reflective SFT (Supervised Fine-Tuning), Reflective SFT, and RL (Reinforcement Learning) fine-tuning. The diagram shows the flow of data and the interactions between different components in each stage.

### Components/Axes

* **Stage I: Pretraining (Top-Left, Green Box)**

* Input: "CoT examples (data mixture)" from a "Training Data" cylinder.

* Process: "context windows (randomly drawn)" from the training data.

* Data Representation: A sequence of blocks labeled "Q", "R1", "R2", "A", representing Question, Reasoning Step 1, Reasoning Step 2, and Answer, respectively. These blocks are arranged linearly, with red boxes highlighting example context windows.

* **Stage II: Non-reflective SFT (Center, Blue Box)**

* Input: Policy π from Pretraining.

* Process: A series of states (S1, S2, Q) and steps/answers (R1, R2, A) are processed.

* Data Representation: States are represented as "Q", "S1", "S2", and steps/answers are represented as "R1", "R2", "A". Arrows indicate the flow of information.

* **Stage III: Reflective SFT (Bottom-Left, Gray Box)**

* Input: "CoT examples (data mixture)".

* Process: "sampling CoTs through MTP" and "ground-truth verification" using an "Expert Verifier".

* Data Representation: States & steps are represented as "Q", "R1", "S1", "R2", "S2", "R3". These are mapped via ν to ground-truth verifications "V1", "V2", "V3". The policy π is used to transition between Q, R_t, and A, with the state `S_t = T(S_{t-1}, R_t)`.

* **Stage IV: RL fine-tuning (Right, Peach Box)**

* Input: Policy π from Non-reflective SFT and Reflective SFT.

* Process: Two parallel processes, MTP (top) and RMTP (bottom), followed by "Reward Model" and "Policy Optimization".

* Data Representation:

* MTP: Transitions between Q, R_t, and A, with the state `S_t = T(S_{t-1}, R_t)`.

* RMTP: Transitions between Q, R_t, V_t, and A, with the state `S_t = T̃(S_{t-1}, R_t, V_t)`.

* **Arrows**: Arrows indicate the flow of data and control between stages and components.

* **Labels**: "CoT examples (data mixture)", "Training Data", "context windows (randomly drawn)", "Pretraining", "Non-reflective SFT", "Reflective SFT", "RL fine-tuning", "Expert Verifier", "Reward Model", "Policy Optimization", "MTP", "RMTP".

### Detailed Analysis

* **Pretraining (I)**: The model is initially trained on a mixture of CoT examples. Context windows are randomly drawn from this data.

* **Non-reflective SFT (II)**: The model is fine-tuned using supervised learning, without explicit reflection on the reasoning process. The policy π guides the transitions between states and answers.

* **Reflective SFT (III)**: This stage introduces reflection by sampling CoTs through MTP and verifying them against ground truth using an expert verifier. The policy π is used to transition between Q, R_t, and A, with the state `S_t = T(S_{t-1}, R_t)`.

* **RL fine-tuning (IV)**: The model is further fine-tuned using reinforcement learning. Two processes, MTP and RMTP, are used. The reward model provides feedback, and the policy is optimized based on this feedback.
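The feedback step in the RL fine-tuning bullet can be illustrated with a small sketch. The diagram does not say how the reward model scores a trajectory or which optimizer is used, so everything here is an assumption: `outcome_reward` is a hypothetical binary reward on the final answer, and `mean_baseline_advantages` is one common way to turn rewards into a signal for policy optimization.

```python
def outcome_reward(final_answer, reference_answer):
    """Hypothetical reward model: 1.0 for a correct final answer, else 0.0."""
    return 1.0 if final_answer == reference_answer else 0.0


def mean_baseline_advantages(rewards):
    """Subtract the mean reward so above-average samples get a positive signal."""
    baseline = sum(rewards) / len(rewards)
    return [r - baseline for r in rewards]


# Four answers sampled for the same question; the reference answer is 7.
sampled_answers = [7, 7, 5, 7]
rewards = [outcome_reward(a, 7) for a in sampled_answers]
advantages = mean_baseline_advantages(rewards)
print(rewards)     # [1.0, 1.0, 0.0, 1.0]
print(advantages)  # [0.25, 0.25, -0.75, 0.25]
```

A policy-gradient update would then raise the likelihood of trajectories with positive advantage and lower it for the others; that update is omitted because the diagram gives no detail about it.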

### Key Observations

* The diagram illustrates a multi-stage training process that incorporates both supervised and reinforcement learning.

* The "Reflective SFT" stage introduces a mechanism for verifying the reasoning process against ground truth.

* The "RL fine-tuning" stage uses two parallel processes, MTP and RMTP, which may represent different reward structures or training objectives.

### Interpretation

The diagram presents a comprehensive approach to training and fine-tuning models using Chain-of-Thought reasoning. The process starts with pretraining on a mixture of CoT examples, followed by supervised fine-tuning, reflective fine-tuning, and finally, reinforcement learning fine-tuning. The inclusion of an "Expert Verifier" in the "Reflective SFT" stage suggests an attempt to improve the quality of the reasoning process by comparing it against ground truth. The use of two parallel processes (MTP and RMTP) in the "RL fine-tuning" stage may indicate an exploration of different reward structures or training objectives. Overall, the diagram highlights the importance of both data quality and training methodology in developing effective CoT models.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Training Pipeline for Conversational Agents

### Overview

The image depicts a four-stage training pipeline for conversational agents, starting with pretraining on a mixture of CoT (Chain-of-Thought) examples and culminating in reinforcement learning (RL) fine-tuning. The pipeline uses MTP (Model-based Trajectory Planning), RMTP (Reward-Model-based Trajectory Planning), and an Expert Verifier. The diagram illustrates the flow of data and the transformations applied at each stage.

### Components/Axes

The diagram is divided into four main sections, labeled (I) through (IV), representing the stages of the training process. Each section contains boxes and arrows illustrating data flow and processing. Key elements include:

* **CoT examples (data mixture):** The initial training data, consisting of question-reasoning-answer sequences.

* **Q:** Represents a question.

* **R1, R2, R3...:** Represents reasoning steps.

* **A:** Represents the answer.

* **S1, S2, S3...:** Represents states.

* **π:** Represents a policy.

* **ν:** Represents ground-truth verification.

* **V1, V2, V3...:** Represents verification scores.

* **MTP:** Model-based Trajectory Planning.

* **RMTP:** Reward-Model-based Trajectory Planning.

* **Reward Model:** A component used in RL fine-tuning.

* **Policy Optimization:** A component used in RL fine-tuning.

### Detailed Analysis

**(I) Pretraining:**

* The process begins with a "Training Data" block containing CoT examples. These examples are represented as sequences of Q, R1, R2, A, etc.

* "Context windows (randomly drawn)" are extracted from the CoT examples. These windows are fed into the next stage.

**(II) Non-reflective SFT:**

* The output of the Pretraining stage is fed into the "Non-reflective SFT" (Supervised Fine-Tuning) stage.

* The input is a sequence of states and steps/answers.

* The policy π generates the next step or answer (R<sub>t</sub> or A) from the current state S.

* The state transitions to S<sub>t</sub> = τ(S<sub>t-1</sub>, R<sub>t</sub>).

**(III) Reflective SFT:**

* This stage introduces an "Expert Verifier" that evaluates the generated reasoning steps.

* The Expert Verifier provides verification scores (V1, V2, V3...) for the ground-truth verification.

* The policy π generates the next step or answer (R<sub>t</sub> or A) from the current state S.

* The state transitions to S<sub>t</sub> = τ(S<sub>t-1</sub>, R<sub>t</sub>).

* Sampling CoTs is done through MTP.

**(IV) RL fine-tuning:**

* This stage uses both MTP and RMTP.

* MTP takes the policy π and generates the answer A from the question Q and the reasoning steps R1, R2.

* RMTP incorporates a "Reward Model" to evaluate the generated responses.

* The state transitions to S<sub>t</sub> = τ(S<sub>t-1</sub>, R<sub>t</sub>, V<sub>t</sub>).

* "Policy Optimization" is performed based on the rewards received from the Reward Model.

* An arrow indicates feedback from the RL fine-tuning stage back to the Non-reflective SFT stage.

### Key Observations

* The pipeline progressively refines the conversational agent's capabilities.

* The introduction of the Expert Verifier in stage (III) adds a layer of quality control.

* The use of MTP and RMTP in stage (IV) enables reinforcement learning based on both model-based and reward-based feedback.

* The feedback loop from RL fine-tuning to Non-reflective SFT suggests iterative refinement of the model.

### Interpretation

The diagram illustrates a training pipeline for building high-quality conversational agents. The pipeline moves from initial pretraining on a large dataset of CoT examples to supervised fine-tuning, then to a reflective SFT stage that incorporates expert verification, and finally to reinforcement learning fine-tuning. The use of MTP and RMTP suggests a focus on planning and reward maximization, and the feedback loop indicates iterative improvement. The Expert Verifier suggests an intent to keep the generated reasoning steps accurate and reliable. Overall, the architecture combines supervised learning and reinforcement learning to produce an agent that can reason through problems and explain its reasoning, rather than simply providing answers.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Diagram: Multi-Stage Training Pipeline for Reasoning Models

### Overview

The image is a technical flowchart illustrating a four-stage training pipeline for a language model designed to perform reasoning tasks, likely using Chain-of-Thought (CoT) methods. The pipeline progresses from left to right, showing data flow and transformations through Pretraining, Non-reflective Supervised Fine-Tuning (SFT), Reflective SFT, and Reinforcement Learning (RL) fine-tuning. The diagram uses color-coded boxes, arrows, and mathematical notation to represent processes, data structures, and model components.

### Components/Axes

The diagram is segmented into four primary, labeled stages:

1. **(I) Pretraining (Green Box, Top-Left):**

* **Input:** "Training Data" (depicted as a green cylinder) and "CoT examples" (arrow from top).

* **Process:** Shows sequences of tokens (Q, R₁, R₂, A, etc.) being processed. Red boxes highlight "context windows (randomly drawn)".

* **Output:** A policy model denoted by π.

2. **(II) Non-reflective SFT (Blue Box, Center):**

* **Input:** The policy model π from stage (I).

* **Process:** Depicts a sequence of "states" (Q, S₁, S₂, ...) mapping to "steps or answers" (R₁, R₂, A, ...). The transition is governed by the policy π.

* **Output:** A refined policy model π.

3. **(III) Reflective SFT (Gray Box, Bottom-Left):**

* **Input:** "CoT examples (data mixture)" and the policy model π from stage (II).

* **Process:** Involves "sampling CoTs through MTP" (Model-Thought-Process, inferred). Shows a matrix with columns Q, S₁, S₂, ... and rows R₁, R₂, R₃, ... leading to "ground-truth verification" (V₁, V₂, V₃, ...). An "Expert Verifier" block processes this.

* **Output:** A policy model denoted by π̃ (π-tilde).

4. **(IV) RL fine-tuning (Orange Box, Right):**

* **Input:** The policy model π̃ from stage (III).

* **Process:** Contains two sub-modules:

* **MTP:** Shows a loop: `Q -> [π] -> R_t -> A`. State update: `S_t = T(S_{t-1}, R_t)`.

* **RMTP:** Shows a loop: `Q -> [π] -> (R_t, V_t) -> A`. State update: `S_t = T(S_{t-1}, R_t, V_t)`.

* **Components:** Includes a "Reward Model" and "Policy Optimization" block, with feedback loops (orange arrows) connecting them to the MTP/RMTP processes.

**Flow Arrows:** Black arrows indicate the primary data/model flow from (I) -> (II) -> (III) -> (IV). An additional arrow feeds "CoT examples" from the start into stage (III).

### Detailed Analysis

**Stage (I) Pretraining:**

* **Data Structure:** Sequences consist of a Question (Q), reasoning steps (R₁, R₂, ...), and an Answer (A). The diagram shows two example sequences: `Q, R₁, R₂, A` and `Q, R₁, A, Q, R₁, R₂, A, Q, R₁...`.

* **Key Mechanism:** "Context windows (randomly drawn)" are highlighted, suggesting the model is pretrained on variable-length segments of these reasoning chains.
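A minimal sketch of how randomly drawn context windows might be produced from concatenated CoT sequences. The diagram does not specify the window length, the tokenization, or the sampling scheme, so `flatten_cot_examples`, `draw_context_window`, and the window size of 5 are illustrative assumptions.

```python
import random


def flatten_cot_examples(examples):
    """Concatenate CoT examples (each a list of tokens) into one stream."""
    stream = []
    for example in examples:
        stream.extend(example)
    return stream


def draw_context_window(stream, window_len, rng):
    """Return a contiguous window of `window_len` tokens at a random offset."""
    if len(stream) <= window_len:
        return list(stream)
    start = rng.randrange(len(stream) - window_len + 1)
    return stream[start:start + window_len]


# Toy data mirroring the Q / R_t / A blocks in the diagram.
examples = [["Q", "R1", "R2", "A"], ["Q", "R1", "A"], ["Q", "R1", "R2", "A"]]
stream = flatten_cot_examples(examples)
rng = random.Random(0)  # seeded so the draw is reproducible
window = draw_context_window(stream, 5, rng)
print(window)
```

Because the window is drawn from the concatenated stream, it can start mid-example and span an example boundary, which is consistent with the red boxes in the diagram covering partial sequences.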

**Stage (II) Non-reflective SFT:**

* **Mapping:** Establishes a direct mapping from a sequence of states (starting with Q, then S₁, S₂...) to a sequence of steps/answers (R₁, R₂, A). This represents standard supervised fine-tuning on reasoning traces.

**Stage (III) Reflective SFT:**

* **Verification Process:** Introduces a verification step. For a given question (Q) and state sequence (S₁, S₂...), multiple reasoning steps (R₁, R₂, R₃...) are generated and paired with verification scores or labels (V₁, V₂, V₃...). An "Expert Verifier" evaluates these against ground truth.

* **Model Update:** This process uses the policy π to sample CoTs and produces an updated policy π̃, incorporating reflective or verified reasoning.
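The verification process described above can be sketched on a toy task. This is an illustration under stated assumptions, not the method behind the diagram: the state is a running sum, each step is a hypothetical `(addend, claimed_sum)` pair, `expert_verifier` stands in for the Expert Verifier, and `transition` stands in for `T`.

```python
def expert_verifier(state, step):
    """Toy ground-truth check: the step is valid iff claimed_sum == state + addend."""
    addend, claimed_sum = step
    return claimed_sum == state + addend


def transition(state, step):
    """Toy T: the next state is whatever sum the step claims, right or wrong."""
    return step[1]


def label_sampled_cot(initial_state, steps):
    """Pair each sampled step R_t with a verification label V_t."""
    labelled = []
    state = initial_state
    for step in steps:
        labelled.append((step, expert_verifier(state, step)))
        state = transition(state, step)  # the sampled chain continues regardless
    return labelled


# A sampled chain starting from state 1; the second step contains an arithmetic slip.
steps = [(2, 3), (4, 8), (1, 9)]
labelled = label_sampled_cot(1, steps)
print([ok for _, ok in labelled])  # [True, False, True]
```

The resulting `(R_t, V_t)` pairs are the kind of data a reflective fine-tuning stage could train on, so that the model learns to emit a verification alongside each step.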

**Stage (IV) RL fine-tuning:**

* **MTP (Model-Thought-Process):** A basic reactive model where the action (A) is taken based on the current reasoning step (R_t), and the state (S_t) is updated based only on the previous state and the new reasoning step.

* **RMTP (Reflective/Reinforced MTP):** An enhanced model where the action (A) is based on both a reasoning step (R_t) and its associated verification/value (V_t). The state update incorporates both R_t and V_t.

* **Optimization Loop:** The Reward Model provides feedback, which drives Policy Optimization. This optimized policy is then used in the MTP/RMTP modules, creating a reinforcement learning loop.
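The two state updates can be compared in a short sketch. Only the update forms `S_t = T(S_{t-1}, R_t)` and `S_t = T(S_{t-1}, R_t, V_t)` come from the diagram. The counting task, the separate `verify` callable (in the diagram the policy may emit V_t itself), and the rule that a rejected step leaves the state unchanged are assumptions made for illustration.

```python
def mtp_rollout(question, policy, transition, max_steps=10):
    """MTP: apply S_t = T(S_{t-1}, R_t) until the policy emits an answer."""
    state, trace = question, []
    for _ in range(max_steps):
        step, is_answer = policy(state)
        trace.append(step)
        if is_answer:
            break
        state = transition(state, step)
    return trace


def rmtp_rollout(question, policy, verify, reflective_transition, max_steps=10):
    """RMTP: each step carries a verdict V_t that also feeds the state update."""
    state, trace = question, []
    for _ in range(max_steps):
        step, is_answer = policy(state)
        verdict = verify(state, step)
        trace.append((step, verdict))
        if is_answer and verdict:
            break
        state = reflective_transition(state, step, verdict)
    return trace


def make_flaky_policy():
    """Toy policy that counts upward by 1 but errs on its second proposal."""
    calls = {"n": 0}

    def policy(state):
        calls["n"] += 1
        step = state + 2 if calls["n"] == 2 else state + 1
        return step, step >= 3  # reaching 3 is treated as the answer

    return policy


mtp_trace = mtp_rollout(0, lambda s: (s + 1, s + 1 >= 3), lambda s, r: r)
rmtp_trace = rmtp_rollout(
    0,
    make_flaky_policy(),
    verify=lambda s, r: r == s + 1,
    reflective_transition=lambda s, r, v: r if v else s,  # rejected steps are discarded
)
print(mtp_trace)   # [1, 2, 3]
print(rmtp_trace)  # [(1, True), (3, False), (2, True), (3, True)]
```

In the RMTP trace the faulty proposal `3` is rejected, the state stays at `1`, and the rollout recovers. Plain MTP has no verdict to act on, so it would have accepted that step as the answer.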

### Key Observations

1. **Progressive Complexity:** The pipeline evolves from simple sequence modeling (I) to supervised step-by-step reasoning (II), then adds verification (III), and finally integrates reinforcement learning with value feedback (IV).

2. **Notation Consistency:** The policy model is consistently denoted by π, with a tilde (π̃) used after the reflective stage to indicate a modified version.

3. **State Representation:** The state `S_t` is explicitly defined as a function `T` of previous states and reasoning steps/values, formalizing the reasoning trajectory.

4. **Dual RL Paths:** The RL stage explicitly contrasts a basic MTP with an enhanced RMTP, highlighting the integration of verification signals (V_t) into the decision and state-update process.

### Interpretation

This diagram outlines a sophisticated methodology for training AI models to perform complex reasoning. The pipeline's core innovation lies in its multi-stage approach that moves beyond standard pretraining and SFT.

* **From Imitation to Reflection:** Stages (I) and (II) teach the model to mimic reasoning patterns from data. Stage (III) introduces a critical reflective component, where the model's outputs are verified, likely teaching it to distinguish between valid and invalid reasoning paths.

* **From Supervision to Optimization:** Stage (IV) transitions from learning from static examples (SFT) to learning from outcomes via RL. The inclusion of the RMTP module suggests that the verification signal (V_t) from stage (III) is not just used for filtering data but is integrated as a core component of the decision-making process during RL, potentially guiding the model towards more reliable and high-reward reasoning strategies.

* **Overall Goal:** The pipeline aims to produce a model (final policy π) that doesn't just generate plausible-sounding reasoning chains but can engage in a verifiable, step-by-step thought process (`S_t = T(...)`) that is optimized for correctness, as determined by a reward model. This is a common framework for developing "reasoning" or "chain-of-thought" capabilities in large language models.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Multi-Stage Model Training Process

### Overview

The diagram illustrates a four-phase technical workflow for training a machine learning model, combining pretraining, reflective/non-reflective supervised fine-tuning (SFT), and reinforcement learning (RL) fine-tuning. It emphasizes context window sampling, ground-truth verification, and reward-driven optimization.

### Components/Axes

- **Phases**:

- (I) Pretraining (green)

- (II) Non-reflective SFT (blue)

- (III) Reflective SFT (gray)

- (IV) RL fine-tuning (orange)

- **Key Elements**:

- **Training Data**: Green cylinder labeled "Training Data (data mixture)"

- **Context Windows**: Red boxes highlighting sequences like `Q, R1, R2, A`

- **States/Steps**: Labeled `S1, S2, S3` (states) and `R1, R2, R3` (steps)

- **Verification**: "Expert Verifier" block with ground-truth checks

- **Policy Optimization**: Arrows connecting "Reward Model" and "Policy Optimization" in RL phase

- **Flow Direction**: Left-to-right progression with feedback loops (e.g., `π` and `π̃` symbols)

### Detailed Analysis

1. **Pretraining (I)**:

- Input: Training data mixture → CoT examples

- Process: Randomly drawn context windows (e.g., `Q, R1, R2, A`)

- Output: Labeled `π` (policy parameter)

2. **Reflective SFT (III)**:

- Input: States (`S1, S2, S3`) and steps (`R1, R2, R3`)

- Process: Ground-truth verification via "Expert Verifier"

- Output: Transformed states `S_t = T(S_{t-1}, R_t)`

3. **Non-reflective SFT (II)**:

- Input: States and steps/answers

- Process: Direct mapping to `π` (policy parameter)

- Output: Labeled `π̃` (modified policy)

4. **RL Fine-tuning (IV)**:

- Input: `π` and `π̃` from prior phases

- Process:

- MTP (Model-based Training Process) with `Q → R_t → A`

- RMTP (Reward-based MTP) with `Q → R_t, V_t → A`

- Output: Reward Model and Policy Optimization

### Key Observations

- **Color Coding**:

- Green (Training Data), Red (Context Windows), Blue (Non-reflective SFT), Orange (RL Phase)

- **Feedback Loops**: Arrows indicate iterative refinement between phases (e.g., `π` → `π̃` → RL optimization).

- **Verification Integration**: Ground-truth checks in Reflective SFT ensure data quality before RL fine-tuning.

### Interpretation

This workflow demonstrates a hybrid approach to model training:

1. **Pretraining** establishes foundational knowledge using context-aware examples.

2. **Reflective SFT** introduces iterative state-step transformations with human/expert validation, ensuring alignment with ground-truth.

3. **Non-reflective SFT** focuses on direct policy parameterization without intermediate verification.

4. **RL Fine-tuning** optimizes policies using reward signals, balancing exploration (MTP) and exploitation (RMTP).

The separation of reflective/non-reflective SFT suggests a strategy to handle uncertainty: reflective SFT validates intermediate steps, while non-reflective SFT accelerates training. The final RL phase prioritizes real-world applicability through reward-driven adjustments, likely improving robustness in dynamic environments.
