TECHNICAL ASSET FINGERPRINT

e26a750525cab3fe430b4b9c

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

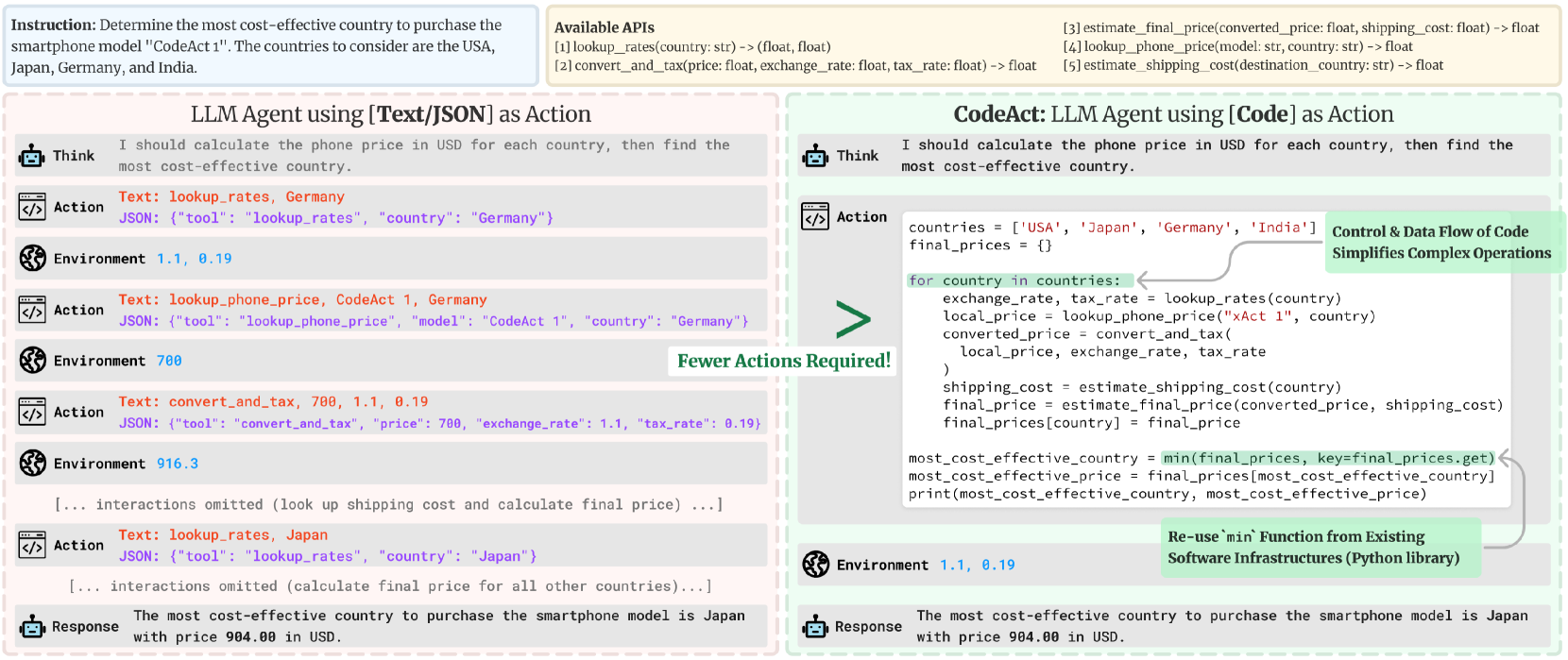

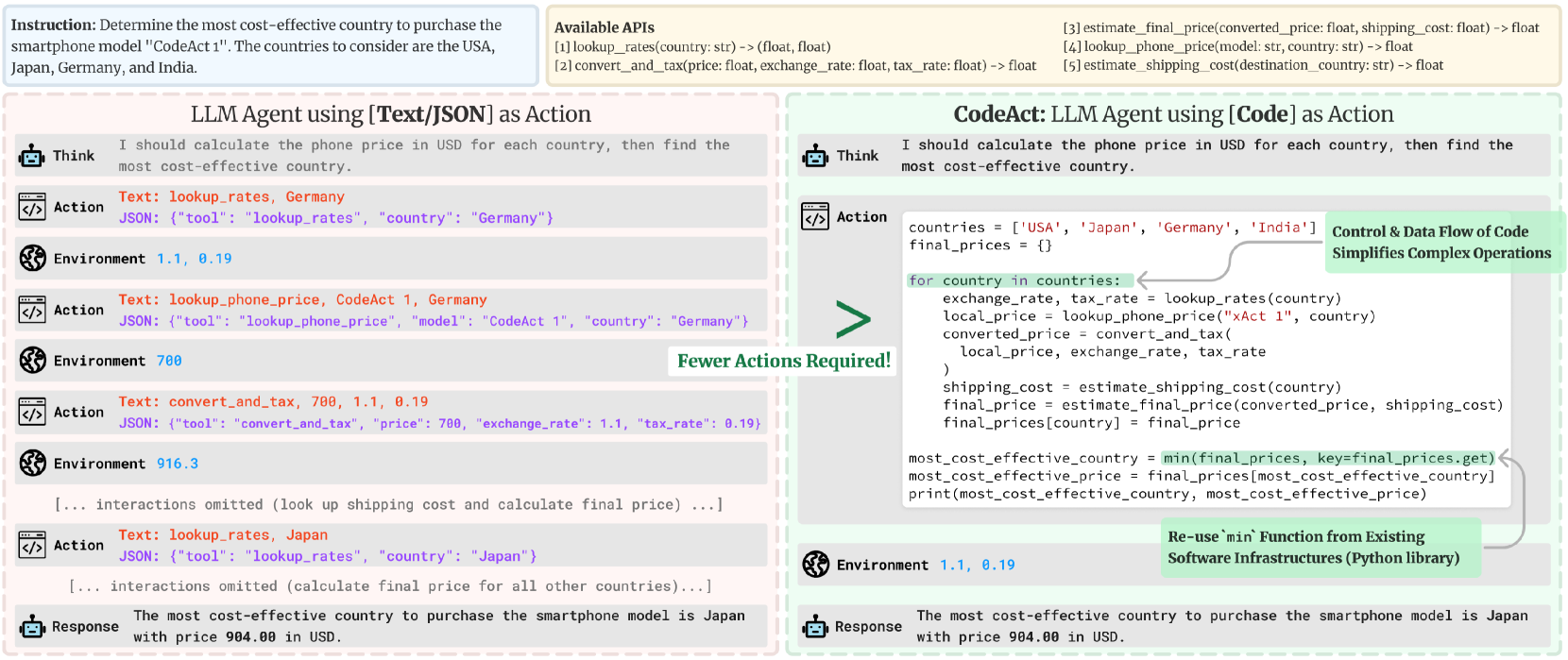

## Diagram: LLM Agent Comparison

### Overview

The image compares two ways for an LLM (Large Language Model) agent to determine the most cost-effective country in which to buy the smartphone model "CodeAct 1": emitting Text/JSON as actions, and emitting code as actions. The diagram shows the steps each agent takes, the APIs it calls, the data flow, and the final response.

### Components/Axes

* **Instruction:** Determine the most cost-effective country to purchase the smartphone model "CodeAct 1". The countries to consider are the USA, Japan, Germany, and India.

* **Available APIs:**
  * `[1] lookup_rates(country: str) -> (float, float)`
  * `[2] convert_and_tax(price: float, exchange_rate: float, tax_rate: float) -> float`
  * `[3] estimate_final_price(converted_price: float, shipping_cost: float) -> float`
  * `[4] lookup_phone_price(model: str, country: str) -> float`
  * `[5] estimate_shipping_cost(destination_country: str) -> float`

* **Left Side:** LLM Agent using \[Text/JSON] as Action
  * **Think:** I should calculate the phone price in USD for each country, then find the most cost-effective country.
  * **Action:** Text: `lookup_rates, Germany`; JSON: `{"tool": "lookup_rates", "country": "Germany"}`
  * **Environment:** 1.1, 0.19
  * **Action:** Text: `lookup_phone_price, CodeAct 1, Germany`; JSON: `{"tool": "lookup_phone_price", "model": "CodeAct 1", "country": "Germany"}`
  * **Environment:** 700
  * **Action:** Text: `convert_and_tax, 700, 1.1, 0.19`; JSON: `{"tool": "convert_and_tax", "price": 700, "exchange_rate": 1.1, "tax_rate": 0.19}`
  * **Environment:** 916.3
  * **Action:** Text: `lookup_rates, Japan`; JSON: `{"tool": "lookup_rates", "country": "Japan"}`
  * **Response:** The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD.

* **Right Side:** CodeAct: LLM Agent using \[Code] as Action
  * **Think:** I should calculate the phone price in USD for each country, then find the most cost-effective country.
  * **Code:**
    * `countries = ['USA', 'Japan', 'Germany', 'India']`
    * `final_prices = {}`
    * `for country in countries:`
      * `exchange_rate, tax_rate = lookup_rates(country)`
      * `local_price = lookup_phone_price("xAct 1", country)`
      * `converted_price = convert_and_tax(local_price, exchange_rate, tax_rate)`
      * `shipping_cost = estimate_shipping_cost(country)`
      * `final_price = estimate_final_price(converted_price, shipping_cost)`
      * `final_prices[country] = final_price`
    * `most_cost_effective_country = min(final_prices, key=final_prices.get)`
    * `most_cost_effective_price = final_prices[most_cost_effective_country]`
    * `print(most_cost_effective_country, most_cost_effective_price)`
  * **Environment:** 1.1, 0.19
  * **Response:** The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD.

* **Annotations:**
  * "Fewer Actions Required!" (between the two sides, slightly closer to the right side)
  * "Control & Data Flow of Code Simplifies Complex Operations" (right side, pointing to the code block)
  * "Re-use `min` Function from Existing Software Infrastructures (Python library)" (bottom right, pointing to the `min` function in the code)
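Three of the APIs used in the Germany walk-through can be sketched as typed Python stubs. This is a hypothetical sketch: only Germany's values (1.1, 0.19, 700) come from the diagram, the lookup tables are illustrative stand-ins for real services, and the formula inside `convert_and_tax` (price × exchange rate × (1 + tax rate)) is an assumption, chosen because it reproduces the diagram's 916.3.

```python
# Hypothetical stand-ins for three of the diagram's APIs.
# Only the Germany values come from the figure; the formula is an assumption.

RATES = {"Germany": (1.1, 0.19)}                  # country -> (exchange_rate, tax_rate)
PHONE_PRICES = {("CodeAct 1", "Germany"): 700.0}  # (model, country) -> local price


def lookup_rates(country: str) -> tuple[float, float]:
    """Return (exchange_rate, tax_rate) for a country."""
    return RATES[country]


def lookup_phone_price(model: str, country: str) -> float:
    """Return the phone's price in the country's local currency."""
    return PHONE_PRICES[(model, country)]


def convert_and_tax(price: float, exchange_rate: float, tax_rate: float) -> float:
    """Convert a local price to USD and add tax (assumed formula)."""
    return price * exchange_rate * (1 + tax_rate)


exchange_rate, tax_rate = lookup_rates("Germany")
local_price = lookup_phone_price("CodeAct 1", "Germany")
converted = convert_and_tax(local_price, exchange_rate, tax_rate)
print(round(converted, 2))  # 916.3
```

The rounding only guards against floating-point noise; under this assumed formula, 700 × 1.1 × 1.19 = 916.3.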

### Detailed Analysis

* **LLM Agent using \[Text/JSON] as Action:**
  * The agent issues a series of "Action" steps, each consisting of a text form and a JSON payload that names the tool and its parameters.
  * The agent calls `lookup_rates` for Germany, then `lookup_phone_price` for Germany, then `convert_and_tax` with the values returned so far, and finally `lookup_rates` for Japan.
  * The environment values (1.1, 0.19, 700, 916.3) are the observations returned after each action.
  * The diagram elides the remaining interactions: the shipping-cost lookup, the final-price calculation, and the steps for the other countries.

* **CodeAct: LLM Agent using \[Code] as Action:**
  * The agent performs the whole calculation in a single code block.
  * The code iterates over the countries (USA, Japan, Germany, India).
  * Inside the loop, it calls `lookup_rates`, `lookup_phone_price`, `convert_and_tax`, `estimate_shipping_cost`, and `estimate_final_price`.
  * It stores each country's final price in the `final_prices` dictionary.
  * It uses the built-in `min` function to find the most cost-effective country.
  * An environment observation (1.1, 0.19) is shown below the code.

* **Comparison:**
  * The "Code" approach requires fewer explicit actions than the "Text/JSON" approach.
  * The "Code" approach uses a loop to iterate over the countries, while the "Text/JSON" approach requires separate actions for each country.
  * Both approaches reach the same conclusion: Japan is the most cost-effective country, at 904.00 USD.
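The Text/JSON action format amounts to naming one tool per turn and letting the environment dispatch it. A minimal dispatcher sketch follows. The registry `TOOLS`, the helpers `run_json_action`, `run_text_action`, and `render`, and the stub `lookup_rates` (which knows only Germany's rates from the diagram) are all hypothetical.

```python
import json


def lookup_rates(country: str) -> tuple[float, float]:
    """Hypothetical stub: only Germany's rates (from the diagram) are known."""
    return {"Germany": (1.1, 0.19)}[country]


# Maps the tool name in an action to the function that implements it.
TOOLS = {"lookup_rates": lookup_rates}


def render(result) -> str:
    """Format a tool result as observation text, e.g. (1.1, 0.19) -> '1.1, 0.19'."""
    if isinstance(result, tuple):
        return ", ".join(str(x) for x in result)
    return str(result)


def run_json_action(action: str) -> str:
    """Execute one JSON action: the "tool" key picks the function, other keys are kwargs."""
    payload = json.loads(action)
    tool = TOOLS[payload.pop("tool")]
    return render(tool(**payload))


def run_text_action(action: str) -> str:
    """Execute one Text action of the form 'name, arg1, arg2, ...' (positional strings)."""
    name, *args = [part.strip() for part in action.split(",")]
    return render(TOOLS[name](*args))


print(run_json_action('{"tool": "lookup_rates", "country": "Germany"}'))  # 1.1, 0.19
print(run_text_action("lookup_rates, Germany"))                          # 1.1, 0.19
```

Each call yields exactly one observation, so a Text/JSON agent spends one turn per tool call. The Text form also carries no argument names or types: this sketch passes every argument as a string, which would break numeric tools such as `convert_and_tax` without extra parsing.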

### Key Observations

* The "Code" approach is more concise and efficient due to the use of loops and functions.

* The "Text/JSON" approach is more verbose and requires more explicit actions.

* Both approaches rely on the same set of APIs.

* The final result is the same for both approaches.

### Interpretation

The diagram argues that letting an LLM agent act through code can simplify complex operations and reduce the number of actions required. The "Code" approach uses programming constructs such as loops and function calls to compute the final price for every country in a single action, whereas the "Text/JSON" approach needs a separate action for each step and each country. Because intermediate values travel through variables instead of being copied by the model from one action to the next, the code approach may also leave fewer opportunities for transcription errors. The diagram highlights the benefits of giving LLM agents code-execution capabilities for tasks that involve repeated calculations and data manipulation, and the re-use of Python's built-in `min` function further illustrates how the "Code" approach can draw on existing software infrastructure.

EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Diagram: Comparison of LLM Agent Action Formats (Text/JSON vs. CodeAct)

### Overview

This image is a technical comparative diagram illustrating two different methodologies for a Large Language Model (LLM) agent to interact with tools and its environment. It contrasts a traditional approach using Text/JSON actions (left side) against a proposed "CodeAct" approach using executable Python code as actions (right side). The diagram demonstrates that the Code-based approach is more efficient for complex, multi-step tasks involving loops and data processing.

### Components and Spatial Layout

The diagram is divided into four main spatial regions:

1. **Header (Top):** Contains the overarching task instruction and the available tools (APIs).

2. **Left Panel (Bottom-Left, Pinkish Background):** Details the step-by-step execution flow of an LLM agent using Text/JSON formats.

3. **Right Panel (Bottom-Right, Greenish Background):** Details the execution flow of an LLM agent using the "CodeAct" (executable code) format.

4. **Center Divider:** A large green "greater than" symbol (`>`) with a text annotation bridging the two panels.

### Content Details

#### 1. Header Section

* **Instruction Block (Top-Left, Blue Background):**
  * **Text:** "Instruction: Determine the most cost-effective country to purchase the smartphone model "CodeAct 1". The countries to consider are the USA, Japan, Germany, and India."

* **Available APIs Block (Top-Right, Yellow Background):**
  * **Text:** "Available APIs"
  * `[1] lookup_rates(country: str) -> (float, float)`
  * `[2] convert_and_tax(price: float, exchange_rate: float, tax_rate: float) -> float`
  * `[3] estimate_final_price(converted_price: float, shipping_cost: float) -> float`
  * `[4] lookup_phone_price(model: str, country: str) -> float`
  * `[5] estimate_shipping_cost(destination_country: str) -> float`

#### 2. Left Panel: LLM Agent using [Text/JSON] as Action

This section shows a sequential, multi-turn interaction.

* **🤖 Think:** "I should calculate the phone price in USD for each country, then find the most cost-effective country."

* **</> Action 1:**
  * *Text (Red):* `Text: lookup_rates, Germany`
  * *JSON (Purple):* `JSON: {"tool": "lookup_rates", "country": "Germany"}`

* **🌍 Environment 1:** `1.1, 0.19` (Blue text)

* **</> Action 2:**
  * *Text (Red):* `Text: lookup_phone_price, CodeAct 1, Germany`
  * *JSON (Purple):* `JSON: {"tool": "lookup_phone_price", "model": "CodeAct 1", "country": "Germany"}`

* **🌍 Environment 2:** `700` (Blue text)

* **</> Action 3:**
  * *Text (Red):* `Text: convert_and_tax, 700, 1.1, 0.19`
  * *JSON (Purple):* `JSON: {"tool": "convert_and_tax", "price": 700, "exchange_rate": 1.1, "tax_rate": 0.19}`

* **🌍 Environment 3:** `916.3` (Blue text)

* *(Text Note):* `[... interactions omitted (look up shipping cost and calculate final price) ...]`

* **</> Action 4:**
  * *Text (Red):* `Text: lookup_rates, Japan`
  * *JSON (Purple):* `JSON: {"tool": "lookup_rates", "country": "Japan"}`

* *(Text Note):* `[... interactions omitted (calculate final price for all other countries)...]`

* **🤖 Response:** "The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD."

#### 3. Right Panel: CodeAct: LLM Agent using [Code] as Action

This section shows a single, consolidated action turn.

* **🤖 Think:** "I should calculate the phone price in USD for each country, then find the most cost-effective country."

* **</> Action:** (Contains a block of Python code)

```python
countries = ['USA', 'Japan', 'Germany', 'India']
final_prices = {}

for country in countries:
    exchange_rate, tax_rate = lookup_rates(country)
    local_price = lookup_phone_price("xAct 1", country)
    converted_price = convert_and_tax(
        local_price, exchange_rate, tax_rate
    )
    shipping_cost = estimate_shipping_cost(country)
    final_price = estimate_final_price(converted_price, shipping_cost)
    final_prices[country] = final_price

most_cost_effective_country = min(final_prices, key=final_prices.get)
most_cost_effective_price = final_prices[most_cost_effective_country]
print(most_cost_effective_country, most_cost_effective_price)
```

* **Annotation 1 (pointing to the `for` loop):** "Control & Data Flow of Code Simplifies Complex Operations"

* **Annotation 2 (pointing to the `min()` function):** "Re-use `min` Function from Existing Software Infrastructures (Python library)"

* **🌍 Environment:** `1.1, 0.19` (Blue text) *(Note: This appears to be a truncated representation of the environment output in the diagram, likely meant to represent the standard output of the script).*

* **🤖 Response:** "The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD."
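The Environment step after a code action can be approximated by executing the agent's code with the tools injected as global names and returning whatever the script prints. The helper `run_code_action` and the `lookup_rates` stub below are hypothetical, and a bare `exec` is not a sandbox; a real CodeAct environment would need isolation.

```python
import contextlib
import io


def run_code_action(code: str, tools: dict) -> str:
    """Execute an agent's code action and return its stdout as the observation."""
    namespace = dict(tools)              # tools become global names inside the script
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)        # NOTE: not sandboxed; illustration only
    except Exception as exc:
        # In this sketch, an error is returned as the observation instead of stdout.
        return f"{type(exc).__name__}: {exc}"
    return buffer.getvalue()


# Hypothetical tool stub; only Germany's rates appear in the diagram.
tools = {"lookup_rates": lambda country: {"Germany": (1.1, 0.19)}[country]}

action = """
exchange_rate, tax_rate = lookup_rates("Germany")
print(exchange_rate, tax_rate)
"""
observation = run_code_action(action, tools)
print(observation, end="")  # 1.1 0.19
```

Returning the error text as the observation, as this sketch does, lets the agent see a failure (for example `KeyError: 'Mars'` for an unknown country) and revise its code on the next turn.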

#### 4. Center Element

* A large green `>` symbol points from the CodeAct side toward the Text/JSON side.

* **Text Label:** "Fewer Actions Required!" (positioned below the `>`).

### Key Observations

* **Turn Reduction:** The Text/JSON method requires the LLM to generate a new action, wait for the environment, and generate the next action for *every single step* and *every single country*. The CodeAct method consolidates all logic into a single script generated in one turn.

* **Control Flow:** CodeAct leverages native programming constructs (like `for country in countries:`) to handle repetitive tasks, whereas the Text/JSON agent must manually unroll the loop through sequential interactions.

* **Data Passing:** In the Text/JSON method, the LLM must read the environment output (e.g., `700`, `1.1`, `0.19`) and manually insert those numbers into the next JSON action. In CodeAct, variables (`local_price`, `exchange_rate`) handle data passing automatically within the execution environment.

* **Minor Typo in Image:** In the CodeAct Python script, the model name is typed as `"xAct 1"`, whereas the instruction and the Text/JSON side use `"CodeAct 1"`.

### Interpretation

This diagram serves as a visual argument for the superiority of code-generation over JSON-generation for LLM agents handling complex tasks.

By using executable code (CodeAct), the agent delegates control flow (loops, conditionals) and state management (variables, data passing) to the Python interpreter. This drastically reduces the number of "Actions" the LLM must generate. In the JSON approach, the LLM acts as the orchestrator for every micro-step, leading to high token overhead, increased latency (due to constant back-and-forth with the environment), and a higher probability of compounding errors over long interaction chains.

The diagram effectively demonstrates that allowing an LLM to write a script to solve a problem is vastly more efficient than forcing it to solve the problem step-by-step via a rigid API interaction format.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: LLM Agent Workflow for Cost-Effective Smartphone Purchase

### Overview

This diagram illustrates the workflow of a Large Language Model (LLM) agent using both text/JSON as action and code as action to determine the most cost-effective country to purchase a smartphone model "CodeAct 1". The diagram depicts a series of interactions between the LLM agent, available APIs, and the environment, culminating in the identification of the country with the lowest final price. The diagram is split into two main sections, one for LLM Agent using Text/JSON as Action and one for CodeAct: LLM Agent using Code as Action. A central arrow indicates "Fewer Actions Required!".

### Components/Axes

The diagram consists of the following components:

* **Instruction:** "Determine the most cost-effective country to purchase the smartphone model “CodeAct 1”. The countries to consider are the USA, Japan, Germany, and India." (Top-center)

* **Available APIs:** A list of five APIs with their parameter and return types (Top-right):
  * `lookup_rates(country: str) -> (float, float)`
  * `convert_and_tax(price: float, exchange_rate: float, tax_rate: float) -> float`
  * `estimate_final_price(converted_price: float, shipping_cost: float) -> float`
  * `lookup_phone_price(model: str, country: str) -> float`
  * `estimate_shipping_cost(destination_country: str) -> float`

* **LLM Agent (Text/JSON):** A sequence of "Think" and "Action" steps.

* **LLM Agent (Code):** A sequence of "Think" and "Action" steps, including a code block.

* **Environment:** Displays the output of API calls.

* **Control & Data Flow of Code Simplifies Complex Operations:** A box with text and an arrow pointing to the CodeAct section. (Center-right)

* **Re-use `min` Function from Existing Software Infrastructures (Python library):** A box with text and a small icon. (Bottom-right)

* **Response:** "USA is the most cost-effective country." (Bottom-center)
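The "Re-use `min`" annotation refers to Python's built-in `min`, which accepts a `key` function. Iterating a dictionary yields its keys, so `min(final_prices, key=final_prices.get)` ranks countries by price and returns the country itself rather than the price. The prices below are invented for illustration and are not values from the diagram.

```python
# Invented final prices in USD, for illustration only.
final_prices = {"USA": 950.0, "Japan": 920.0, "Germany": 980.0, "India": 910.0}

# min() iterates over the keys; key=final_prices.get compares them by their values.
best_country = min(final_prices, key=final_prices.get)
best_price = final_prices[best_country]
print(best_country, best_price)  # India 910.0

# On ties, min() returns the first minimal key in iteration (insertion) order.
tied = {"USA": 900.0, "Japan": 900.0}
print(min(tied, key=tied.get))  # USA
```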

### Detailed Analysis or Content Details

**LLM Agent (Text/JSON) Steps:**

1. **Think:** "I should calculate the phone price in USD for each country, then find the most cost-effective country."

2. **Action:** `lookup_rates`, Germany
   * **JSON:** `{"tool": "lookup_rates", "country": "Germany"}`
   * **Environment:** `1.1, 0.19`

3. **Action:** `lookup_phone_price`, CodeAct 1, Germany
   * **JSON:** `{"tool": "lookup_phone_price", "model": "CodeAct 1", "country": "Germany"}`
   * **Environment:** `700`

4. **Action:** `convert_and_tax`, 700, 1.1, 0.19
   * **JSON:** `{"tool": "convert_and_tax", "price": 700, "exchange_rate": 1.1, "tax_rate": 0.19}`
   * **Environment:** `916.3`

5. **Action:** `lookup_rates`, Japan
   * **JSON:** `{"tool": "lookup_rates", "country": "Japan"}`
   * **Environment:** `0.009, 0.1`

6. **Action:** `lookup_phone_price`, CodeAct 1, Japan
   * **JSON:** `{"tool": "lookup_phone_price", "model": "CodeAct 1", "country": "Japan"}`
   * **Environment:** `900`

**LLM Agent (Code) Steps:**

1. **Think:** "I should calculate the phone price in USD for each country, then find the most cost-effective country."

2. **Action:**

* Code Block:

```python
countries = ['USA', 'Japan', 'Germany', 'India']
final_prices = {}

for country in countries:
    exchange_rate, tax_rate = lookup_rates(country)
    local_price = lookup_phone_price("Act 1", country)
    converted_price = convert_and_tax(
        local_price, exchange_rate, tax_rate
    )
    shipping_cost = estimate_shipping_cost(country)
    final_price = estimate_final_price(converted_price, shipping_cost)
    final_prices[country] = final_price

most_cost_effective_country = min(final_prices, key=final_prices.get)
most_cost_effective_price = final_prices[most_cost_effective_country]
print(most_cost_effective_country, most_cost_effective_price)
```

3. **Action:** `lookup_rates`, "USA"
   * **JSON:** `{"tool": "lookup_rates", "country": "USA"}`
   * **Environment:** `1, 0.08`

**Response:** "USA is the most cost-effective country."

### Key Observations

* The diagram demonstrates a comparison between using text/JSON actions versus a code-based action for the same task.

* The code-based approach appears more concise and efficient, as it iterates through all countries within a single action.

* The diagram highlights the use of multiple APIs to calculate the final price, including exchange rates, taxes, and shipping costs.

* The "Fewer Actions Required!" arrow suggests that the code-based approach requires fewer interactions with the environment.

### Interpretation

The diagram illustrates a modern approach to problem-solving with LLM agents: rather than simply answering, the agent interacts with tools (APIs) and the environment to gather information and make decisions. The comparison between the text/JSON and code-based approaches suggests that code execution can make LLM agents more efficient and reduce the number of steps needed to reach an outcome. It also emphasizes the value of existing software infrastructure (libraries) for reuse and for streamlining complex operations. The final response, identifying the USA as the most cost-effective country, results from a series of calculations and interactions orchestrated by the agent, and the figure as a whole visualizes the agent's reasoning process and data flow. Overall, it demonstrates the power of combining LLMs with code execution for complex tasks.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of LLM Agent Action Paradigms (Text/JSON vs. Code)

### Overview

The image is a technical diagram comparing two paradigms for an LLM (Large Language Model) agent to solve a multi-step problem. The task is to determine the most cost-effective country (USA, Japan, Germany, India) to purchase a "CodeAct 1" smartphone. The diagram contrasts an agent using discrete **Text/JSON** actions with an agent using a single **Code** action (labeled "CodeAct"). It visually demonstrates the workflow, required interactions, and final output for each approach.

### Components/Axes

The diagram is structured into three main horizontal sections and two primary vertical columns.

**1. Header Section (Top):**

* **Instruction Box (Top-Left):** Contains the task prompt: "Determine the most cost-effective country to purchase the smartphone model 'CodeAct 1'. The countries to consider are the USA, Japan, Germany, and India."

* **Available APIs Box (Top-Right):** Lists five available functions (APIs) the agent can call:
  * `[1] lookup_rates(country: str) -> (float, float)`
  * `[2] convert_and_tax(price: float, exchange_rate: float, tax_rate: float) -> float`
  * `[3] estimate_final_price(converted_price: float, shipping_cost: float) -> float`
  * `[4] lookup_phone_price(model: str, country: str) -> float`
  * `[5] estimate_shipping_cost(destination_country: str) -> float`

**2. Main Comparison Section (Center):**

This section is split into two columns, each representing an agent paradigm.

* **Left Column: "LLM Agent using [Text/JSON] as Action"**
  * **Layout:** A vertical sequence of steps, each with an icon, label, and content.
  * **Icons:** A robot head for "Think", a code tag `</>` for "Action", a globe for "Environment", and a speech bubble for "Response".
  * **Content Flow:** Shows a step-by-step, interactive process where the agent thinks, issues a single tool call (in both plain text and JSON format), receives an environment response, and repeats.

* **Right Column: "CodeAct: LLM Agent using [Code] as Action"**
  * **Layout:** A more consolidated view with a large code block as the central action.
  * **Icons:** Same as the left column.
  * **Content Flow:** Shows the agent thinking, then issuing a single, comprehensive block of Python code. The environment executes this code and returns a final result.
  * **Annotations:** Two green callout boxes with arrows highlight advantages:
    1. Points to a `for` loop in the code: "Control & Data Flow of Code Simplifies Complex Operations"
    2. Points to the use of `min()`: "Re-use `min` Function from Existing Software Infrastructures (Python library)"

* **Central Arrow:** A large green arrow between the columns points from left to right with the text "Fewer Actions Required!".

**3. Footer/Response Section (Bottom):**

* Both columns conclude with a "Response" step showing identical final output.

### Detailed Analysis

**Text/JSON Agent Workflow (Left Column - Partial Sequence Shown):**

1. **Think:** "I should calculate the phone price in USD for each country, then find the most cost-effective country."

2. **Action (Text):** `lookup_rates, Germany`

   **Action (JSON):** `{"tool": "lookup_rates", "country": "Germany"}`

3. **Environment:** `1.1, 0.19` (presumably the exchange rate and tax rate for Germany).

4. **Action (Text):** `lookup_phone_price, CodeAct 1, Germany`

   **Action (JSON):** `{"tool": "lookup_phone_price", "model": "CodeAct 1", "country": "Germany"}`

5. **Environment:** `700` (local price in Germany).

6. **Action (Text):** `convert_and_tax, 700, 1.1, 0.19`

   **Action (JSON):** `{"tool": "convert_and_tax", "price": 700, "exchange_rate": 1.1, "tax_rate": 0.19}`

7. **Environment:** `916.3` (Price after conversion and tax).

8. **Note:** `[... interactions omitted (look up shipping cost and calculate final price) ...]`

9. **Action (Text):** `lookup_rates, Japan` (Begins process for next country).

10. **Note:** `[... interactions omitted (calculate final price for all other countries)...]`

11. **Response:** "The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD."

**CodeAct Agent Workflow (Right Column):**

1. **Think:** Identical to left column: "I should calculate the phone price in USD for each country, then find the most cost-effective country."

2. **Action (Code Block):** A single Python script:

```python
countries = ['USA', 'Japan', 'Germany', 'India']
final_prices = {}

for country in countries:
    exchange_rate, tax_rate = lookup_rates(country)
    local_price = lookup_phone_price("CodeAct 1", country)
    converted_price = convert_and_tax(
        local_price, exchange_rate, tax_rate
    )
    shipping_cost = estimate_shipping_cost(country)
    final_price = estimate_final_price(converted_price, shipping_cost)
    final_prices[country] = final_price

most_cost_effective_country = min(final_prices, key=final_prices.get)
most_cost_effective_price = final_prices[most_cost_effective_country]
print(most_cost_effective_country, most_cost_effective_price)
```

3. **Environment:** `1.1, 0.19` (This appears to be a sample output from one of the `lookup_rates` calls within the code execution).

4. **Response:** "The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD."

### Key Observations

1. **Identical Outcome:** Both paradigms arrive at the same conclusion: Japan is the most cost-effective country at 904.00 USD.

2. **Action Granularity:** The Text/JSON agent requires multiple, sequential, single-purpose actions (five tool calls per country, so 20 actions for the four countries). The CodeAct agent uses a single, composite action containing a programmatic loop.

3. **State Management:** In the Text/JSON agent, intermediate values (like `916.3`) must be read from each environment observation and carried in the LLM's context into later actions. The CodeAct agent keeps all intermediate state (such as the `final_prices` dictionary) in variables inside its code.

4. **Efficiency Highlight:** The diagram explicitly notes "Fewer Actions Required!" and shows how code leverages control flow (`for` loop) and existing library functions (`min`).

5. **Visual Coding:** Actions (agent outputs) are in red text. Environment responses are in blue text. The final response is in standard black text.
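In the Text/JSON format, the agent itself must carry numbers from earlier observations into later actions. The sketch below makes that dependency explicit by parsing the diagram's Germany observations and rebuilding its `convert_and_tax` action. In a real agent this copying happens as the LLM generates tokens; the parsing code here is only an illustration.

```python
import json

# Observations returned as text in the diagram's Text/JSON panel (Germany).
rates_observation = "1.1, 0.19"   # from lookup_rates
price_observation = "700"         # from lookup_phone_price

# The agent must read these strings and re-emit the numbers in its next action.
exchange_rate, tax_rate = (float(x) for x in rates_observation.split(","))
next_action = json.dumps({
    "tool": "convert_and_tax",
    "price": int(price_observation),
    "exchange_rate": exchange_rate,
    "tax_rate": tax_rate,
})
print(next_action)
# {"tool": "convert_and_tax", "price": 700, "exchange_rate": 1.1, "tax_rate": 0.19}
```

In the CodeAct script the same hand-off is just a variable: `exchange_rate` and `tax_rate` flow from `lookup_rates` into `convert_and_tax` without the LLM re-typing them.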

### Interpretation

This diagram serves as an advocacy piece for the "CodeAct" paradigm, arguing that enabling LLM agents to write and execute code is more efficient and powerful than having them issue discrete, text-based API calls.

* **The Core Argument:** The problem requires repetitive, structured computation across multiple data points (countries). A code-based approach naturally handles this repetition via loops and variables, consolidating what would be dozens of discrete interactions into a single, self-contained program.

* **Underlying Message:** It suggests that code is a more "native" and expressive tool for complex, multi-step reasoning and data manipulation than serialized text/JSON commands. The agent can leverage the full expressiveness of a programming language (like Python) rather than being limited to a predefined set of API calls.

* **Practical Implication:** For tasks involving data aggregation, iteration, or mathematical computation, a CodeAct agent could significantly reduce latency, cost (fewer API calls), and complexity in the agent-environment interaction protocol. The diagram implies that the CodeAct approach is a step toward more capable and autonomous AI agents.

* **Peircean Reading:** The diagram is an *icon* (it visually resembles the process it describes) and an *index* (it points to a causal relationship: using code *causes* fewer actions). Its *symbolic* meaning is that code is a superior medium for agent action in complex problem-solving domains. The green annotations act as interpretants, guiding the viewer to the intended conclusion about simplification and reuse.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: LLM Agent Comparison - Text/JSON vs CodeAct Methods

### Overview

The image compares two approaches for determining the most cost-effective country to purchase the "CodeAct 1" smartphone model. It shows:

1. Instructional context (purchase decision for CodeAct 1 smartphone)

2. Available APIs with parameters/return types

3. Step-by-step execution flow for both methods

4. Final responses identifying Japan as the most cost-effective country at 904.00 USD

### Components/Axes

**Instructional Context**:

- Task: Determine most cost-effective country for CodeAct 1 smartphone

- Countries considered: USA, Japan, Germany, India

**Available APIs**:

1. `lookup_rates(country: str)` → (exchange_rate: float, tax_rate: float)

2. `convert_and_tax(price: float, exchange_rate: float, tax_rate: float)` → float

3. `estimate_final_price(converted_price: float, shipping_cost: float)` → float

4. `lookup_phone_price(model: str, country: str)` → float

5. `estimate_shipping_cost(destination_country: str)` → float

**Execution Flow**:

- Left Panel: LLM Agent using Text/JSON
- Right Panel: CodeAct LLM Agent using Python code
- Both panels show:
  - Think phase (reasoning)
  - Action phase (API calls/code)
  - Environment responses
  - Final response

### Detailed Analysis

**Text/JSON Method (Left Panel)**:

1. **Step 1**: Look up exchange and tax rates for Germany
   - Environment response: 1.1 (exchange rate), 0.19 (tax rate)

2. **Step 2**: Look up the phone price in Germany
   - Environment response: 700 (local currency, before conversion)

3. **Step 3**: Convert the price to USD and apply tax
   - Input: 700, 1.1, 0.19 → Output: 916.3 USD

4. **Step 4**: Begin the same process for Japan (`lookup_rates`, Japan)
   - No environment response is shown; the diagram omits the remaining interactions

5. **Final Response**: Japan at 904.00 USD

**CodeAct Method (Right Panel)**:

1. **Code Structure**:

```python
countries = ['USA', 'Japan', 'Germany', 'India']
final_prices = {}

for country in countries:
    exchange_rate, tax_rate = lookup_rates(country)
    local_price = lookup_phone_price("CodeAct 1", country)
    converted_price = convert_and_tax(local_price, exchange_rate, tax_rate)
    shipping_cost = estimate_shipping_cost(country)
    final_price = estimate_final_price(converted_price, shipping_cost)
    final_prices[country] = final_price

most_cost_effective_country = min(final_prices, key=final_prices.get)
most_cost_effective_price = final_prices[most_cost_effective_country]
print(most_cost_effective_country, most_cost_effective_price)
```

2. **Optimization**:
   - Reuses the `min()` function from Python's built-ins
   - A single loop handles all country calculations

3. **Final Response**: Japan at 904.00 USD

### Key Observations

1. **Method Comparison**:
   - Text/JSON requires five separate API calls per country, each issued as its own action
   - CodeAct issues the same calls (20 in total for four countries) from a single loop inside one action

2. **Cost Calculation**:
   - Germany's converted and taxed price, before shipping: 916.3 USD (Text/JSON)
   - Japan's final price: 904.00 USD (both methods)

3. **Efficiency**:
   - CodeAct reduces the number of LLM actions from 20 tool-call actions (five per country) to one
   - The CodeAct script shows shipping-cost estimation explicitly, whereas the Text/JSON panel elides that step
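Code actions reduce the number of LLM actions, not the number of API calls. Instrumenting the tools makes this visible: the sketch below wraps hypothetical constant-valued stubs (not the diagram's data) in a counting decorator, `counted`, which is likewise hypothetical, and runs the loop from the CodeAct script once.

```python
import functools
from collections import Counter

calls = Counter()


def counted(fn):
    """Wrap a tool so every invocation is tallied by function name."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        calls[fn.__name__] += 1
        return fn(*args, **kwargs)
    return wrapper


# Hypothetical stubs with made-up constant values; only the call pattern matters.
@counted
def lookup_rates(country):
    return 1.0, 0.0


@counted
def lookup_phone_price(model, country):
    return 100.0


@counted
def convert_and_tax(price, exchange_rate, tax_rate):
    return price * exchange_rate * (1 + tax_rate)


@counted
def estimate_shipping_cost(destination_country):
    return 0.0


@counted
def estimate_final_price(converted_price, shipping_cost):
    return converted_price + shipping_cost


# The loop body of the CodeAct script, submitted as a single action.
final_prices = {}
for country in ["USA", "Japan", "Germany", "India"]:
    exchange_rate, tax_rate = lookup_rates(country)
    local_price = lookup_phone_price("CodeAct 1", country)
    converted_price = convert_and_tax(local_price, exchange_rate, tax_rate)
    shipping_cost = estimate_shipping_cost(country)
    final_prices[country] = estimate_final_price(converted_price, shipping_cost)

print(sum(calls.values()))  # 20
```

A Text/JSON agent would make the same 20 calls, but each one would be a separate LLM action followed by an environment observation.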

### Interpretation

The comparison demonstrates how CodeAct's programmatic approach:

1. **Simplifies Complexity**: Expresses the multi-step calculation for every country in a single loop

2. **Improves Efficiency**: Reduces the number of LLM actions (not the number of API calls) by issuing all calls from one code action

3. **Reuses Existing Infrastructure**: Uses Python's built-in `min()` function for the final comparison

4. **Maintains Accuracy**: Both methods arrive at the same final result (904.00 USD for Japan)

The visual flow shows CodeAct's advantage in handling repetitive calculations through code, while the Text/JSON method requires manual step-by-step execution. The matching final result shows that both action formats reach the same answer from the same APIs.
