EXPERT: gemini-3.1-pro-preview VERSION 1

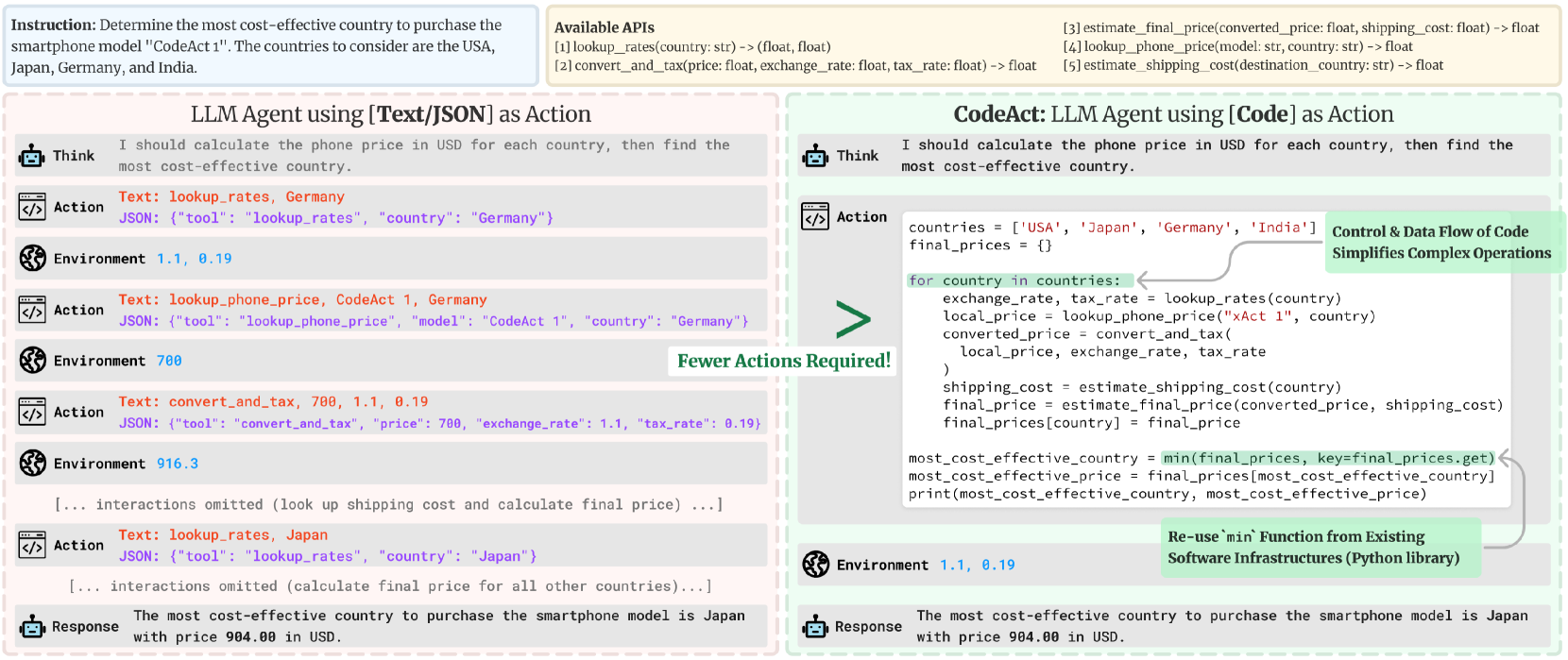

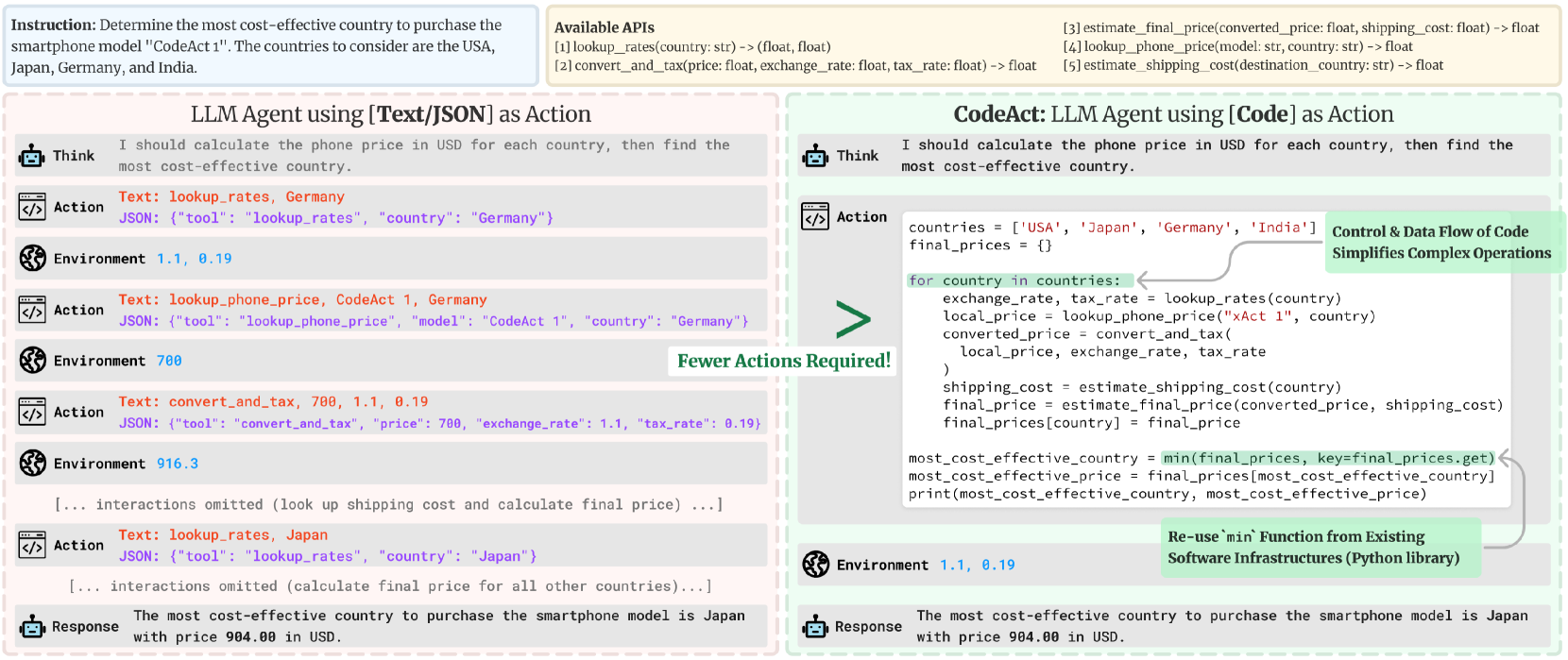

## Diagram: Comparison of LLM Agent Action Formats (Text/JSON vs. CodeAct)

### Overview

This image is a comparative diagram of two ways a Large Language Model (LLM) agent can interact with tools and its environment. It contrasts a traditional approach using Text/JSON actions (left side) with the proposed "CodeAct" approach, which uses executable Python code as actions (right side). For the example task, which requires looping over several countries and chaining tool calls, the diagram shows the code-based approach finishing in fewer actions.

### Components and Spatial Layout

The diagram is divided into four main spatial regions:

1. **Header (Top):** Contains the overarching task instruction and the available tools (APIs).

2. **Left Panel (Bottom-Left, Pinkish Background):** Details the step-by-step execution flow of an LLM agent using Text/JSON formats.

3. **Right Panel (Bottom-Right, Greenish Background):** Details the execution flow of an LLM agent using the "CodeAct" (executable code) format.

4. **Center Divider:** A large green "greater than" symbol (`>`) with a text annotation bridging the two panels.

### Content Details

#### 1. Header Section

* **Instruction Block (Top-Left, Blue Background):**

    * **Text:** "Instruction: Determine the most cost-effective country to purchase the smartphone model "CodeAct 1". The countries to consider are the USA, Japan, Germany, and India."

* **Available APIs Block (Top-Right, Yellow Background):**

    * **Text:** "Available APIs"

    * `[1] lookup_rates(country: str) -> (float, float)`

    * `[2] convert_and_tax(price: float, exchange_rate: float, tax_rate: float) -> float`

    * `[3] estimate_final_price(converted_price: float, shipping_cost: float) -> float`

    * `[4] lookup_phone_price(model: str, country: str) -> float`

    * `[5] estimate_shipping_cost(destination_country: str) -> float`
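To make the five signatures above concrete, here is a minimal sketch of stand-in implementations. The diagram shows only the signatures and a few input/output values, so the function bodies, the lookup tables, the tax formula, and the zero shipping cost are all assumptions made for illustration; only the Germany values (`1.1`, `0.19`, `700`, `916.3`) come from the diagram.

```python
from typing import Tuple

# Values for Germany are taken from the diagram; the table layout is hypothetical.
RATES = {"Germany": (1.1, 0.19)}             # (exchange_rate, tax_rate)
PRICES = {("CodeAct 1", "Germany"): 700.0}   # local price
SHIPPING = {"Germany": 0.0}                  # placeholder: the diagram omits this value


def lookup_rates(country: str) -> Tuple[float, float]:
    return RATES[country]


def convert_and_tax(price: float, exchange_rate: float, tax_rate: float) -> float:
    # Assumed formula; it reproduces the diagram's 700, 1.1, 0.19 -> 916.3.
    return price * exchange_rate * (1 + tax_rate)


def estimate_final_price(converted_price: float, shipping_cost: float) -> float:
    # Assumed: final price is the converted price plus shipping.
    return converted_price + shipping_cost


def lookup_phone_price(model: str, country: str) -> float:
    return PRICES[(model, country)]


def estimate_shipping_cost(destination_country: str) -> float:
    return SHIPPING[destination_country]


rate, tax = lookup_rates("Germany")
converted = convert_and_tax(lookup_phone_price("CodeAct 1", "Germany"), rate, tax)
print(round(converted, 2))
```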

#### 2. Left Panel: LLM Agent using [Text/JSON] as Action

This section shows a sequential, multi-turn interaction.

* **🤖 Think:** "I should calculate the phone price in USD for each country, then find the most cost-effective country."

* **</> Action 1:**

    * *Text (Red):* `Text: lookup_rates, Germany`

    * *JSON (Purple):* `JSON: {"tool": "lookup_rates", "country": "Germany"}`

* **🌍 Environment 1:** `1.1, 0.19` (Blue text)

* **</> Action 2:**

    * *Text (Red):* `Text: lookup_phone_price, CodeAct 1, Germany`

    * *JSON (Purple):* `JSON: {"tool": "lookup_phone_price", "model": "CodeAct 1", "country": "Germany"}`

* **🌍 Environment 2:** `700` (Blue text)

* **</> Action 3:**

    * *Text (Red):* `Text: convert_and_tax, 700, 1.1, 0.19`

    * *JSON (Purple):* `JSON: {"tool": "convert_and_tax", "price": 700, "exchange_rate": 1.1, "tax_rate": 0.19}`

* **🌍 Environment 3:** `916.3` (Blue text)

* *(Text Note):* `[... interactions omitted (look up shipping cost and calculate final price) ...]`

* **</> Action 4:**

    * *Text (Red):* `Text: lookup_rates, Japan`

    * *JSON (Purple):* `JSON: {"tool": "lookup_rates", "country": "Japan"}`

* *(Text Note):* `[... interactions omitted (calculate final price for all other countries)...]`

* **🤖 Response:** "The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD."
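The turn-by-turn flow above can be sketched as a small dispatcher that executes one JSON action per call. This is an illustrative assumption about how such an environment might work, not something the diagram specifies: `run_json_action`, the `TOOLS` registry, and the stub tool bodies are hypothetical, and the stubs are hard-coded to the Germany values shown in the diagram.

```python
import json


# Hypothetical stubs returning the Germany values shown in the diagram.
def lookup_rates(country):
    return (1.1, 0.19)


def lookup_phone_price(model, country):
    return 700


def convert_and_tax(price, exchange_rate, tax_rate):
    # Assumed formula; it matches the diagram's 916.3 for these inputs.
    return price * exchange_rate * (1 + tax_rate)


TOOLS = {
    "lookup_rates": lookup_rates,
    "lookup_phone_price": lookup_phone_price,
    "convert_and_tax": convert_and_tax,
}


def run_json_action(action: str):
    """Parse one JSON action and call the named tool with the remaining fields."""
    call = json.loads(action)
    tool = TOOLS[call.pop("tool")]
    return tool(**call)


# Actions 1 and 2: each is a separate turn with its own observation.
obs1 = run_json_action('{"tool": "lookup_rates", "country": "Germany"}')
obs2 = run_json_action(
    '{"tool": "lookup_phone_price", "model": "CodeAct 1", "country": "Germany"}'
)

# Action 3: the observed values must be copied into the next action by hand.
action3 = json.dumps(
    {"tool": "convert_and_tax", "price": obs2, "exchange_rate": obs1[0], "tax_rate": obs1[1]}
)
obs3 = run_json_action(action3)
print(obs1, obs2, round(obs3, 2))
```

Each call to `run_json_action` corresponds to one Action/Environment pair in the left panel, which is why the turn count grows with every tool call and every country.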

#### 3. Right Panel: CodeAct: LLM Agent using [Code] as Action

This section shows a single, consolidated action turn.

* **🤖 Think:** "I should calculate the phone price in USD for each country, then find the most cost-effective country."

* **</> Action:** (Contains a block of Python code)

```python
countries = ['USA', 'Japan', 'Germany', 'India']
final_prices = {}

for country in countries:
    exchange_rate, tax_rate = lookup_rates(country)
    local_price = lookup_phone_price("xAct 1", country)
    converted_price = convert_and_tax(
        local_price, exchange_rate, tax_rate
    )
    shipping_cost = estimate_shipping_cost(country)
    final_price = estimate_final_price(converted_price, shipping_cost)
    final_prices[country] = final_price

most_cost_effective_country = min(final_prices, key=final_prices.get)
most_cost_effective_price = final_prices[most_cost_effective_country]
print(most_cost_effective_country, most_cost_effective_price)
```

* **Annotation 1 (pointing to the `for` loop):** "Control & Data Flow of Code Simplifies Complex Operations"

* **Annotation 2 (pointing to the `min()` function):** "Re-use `min` Function from Existing Software Infrastructures (Python library)"

* **🌍 Environment:** `1.1, 0.19` (Blue text) *(Note: This appears to be a truncated representation of the environment output in the diagram, likely meant to represent the standard output of the script).*

* **🤖 Response:** "The most cost-effective country to purchase the smartphone model is Japan with price 904.00 in USD."
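For a code action to produce an environment observation, something has to execute the generated script and return its output. The sketch below shows one way this could work, under stated assumptions: `run_code_action` is a hypothetical helper, the tools are injected as plain Python callables, and the observation is taken to be the script's captured standard output. The diagram does not describe the executor, and a real environment would run untrusted code in an isolated sandbox rather than with a bare `exec`.

```python
import contextlib
import io


def run_code_action(code: str, tools: dict) -> str:
    """Execute a code action with the tools in scope and return its stdout."""
    namespace = dict(tools)  # copy so the script cannot mutate the caller's registry
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, namespace)  # no sandboxing: acceptable only for a trusted demo
    return buffer.getvalue()


# Hypothetical stub returning the Germany rates shown in the diagram.
def lookup_rates(country):
    return (1.1, 0.19)


action = """
exchange_rate, tax_rate = lookup_rates("Germany")
print(exchange_rate, tax_rate)
"""

observation = run_code_action(action, {"lookup_rates": lookup_rates})
print(observation, end="")
```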

#### 4. Center Element

* A large green `>` symbol points from the CodeAct side toward the Text/JSON side.

* **Text Label:** "Fewer Actions Required!" (positioned below the `>`).

### Key Observations

* **Turn Reduction:** The Text/JSON method requires the LLM to generate a new action, wait for the environment, and generate the next action for *every single step* and *every single country*. The CodeAct method consolidates all logic into a single script generated in one turn.

* **Control Flow:** CodeAct leverages native programming constructs (like `for country in countries:`) to handle repetitive tasks, whereas the Text/JSON agent must manually unroll the loop through sequential interactions.

* **Data Passing:** In the Text/JSON method, the LLM must read the environment output (e.g., `700`, `1.1`, `0.19`) and manually insert those numbers into the next JSON action. In CodeAct, variables (`local_price`, `exchange_rate`) handle data passing automatically within the execution environment.

* **Minor Typo in Image:** In the CodeAct Python script, the model name is typed as `"xAct 1"`, whereas the instruction and the Text/JSON side use `"CodeAct 1"`.
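The turn reduction can be estimated with simple arithmetic. The count below assumes one tool call per Text/JSON action, no retries, and that every country needs all five APIs; the diagram omits most of these turns, so the total is an inference rather than a figure stated in the image.

```python
countries = ["USA", "Japan", "Germany", "India"]

# The five tool calls the CodeAct loop body makes for each country.
calls_per_country = [
    "lookup_rates",
    "lookup_phone_price",
    "convert_and_tax",
    "estimate_shipping_cost",
    "estimate_final_price",
]

# Text/JSON: one action per tool call per country (assumed, no retries).
text_json_actions = len(countries) * len(calls_per_country)

# CodeAct: the whole loop is emitted as a single action.
code_actions = 1

print(text_json_actions, code_actions)
```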

### Interpretation

This diagram serves as a visual argument that code generation suits LLM agents better than JSON generation on complex, multi-step tasks.

By using executable code (CodeAct), the agent delegates control flow (loops, conditionals) and state management (variables, data passing) to the Python interpreter. In the example, this collapses one action per tool call per country into a single action. In the JSON approach, the LLM orchestrates every micro-step itself, which adds token overhead, increases latency (each step is a round trip to the environment), and raises the chance that an error in one step carries into the steps that follow over a long interaction chain.
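The compounding-error point can be illustrated with a simple model. This is an assumption introduced for illustration, not something the diagram quantifies: it treats each action as succeeding independently with the same probability `p`, so a chain of `n` actions succeeds with probability `p ** n`. The value `0.95` and the chain length of 20 are hypothetical.

```python
def chain_success(p: float, n: int) -> float:
    """Probability that n independent actions all succeed, each with probability p."""
    return p ** n


# Hypothetical per-action success rate; 20 actions stands in for a long
# Text/JSON chain, 1 action for a single code action.
long_chain = chain_success(0.95, 20)
single_action = chain_success(0.95, 1)
print(round(long_chain, 3), single_action)
```

The comparison is deliberately rough: a single long script may be harder to write correctly than one short tool call, so the per-action success rates need not be equal in practice.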

The diagram's central claim is that letting an LLM write a script to solve a problem takes fewer actions than making it solve the same problem one call at a time through a fixed API interaction format.
