# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Header Information

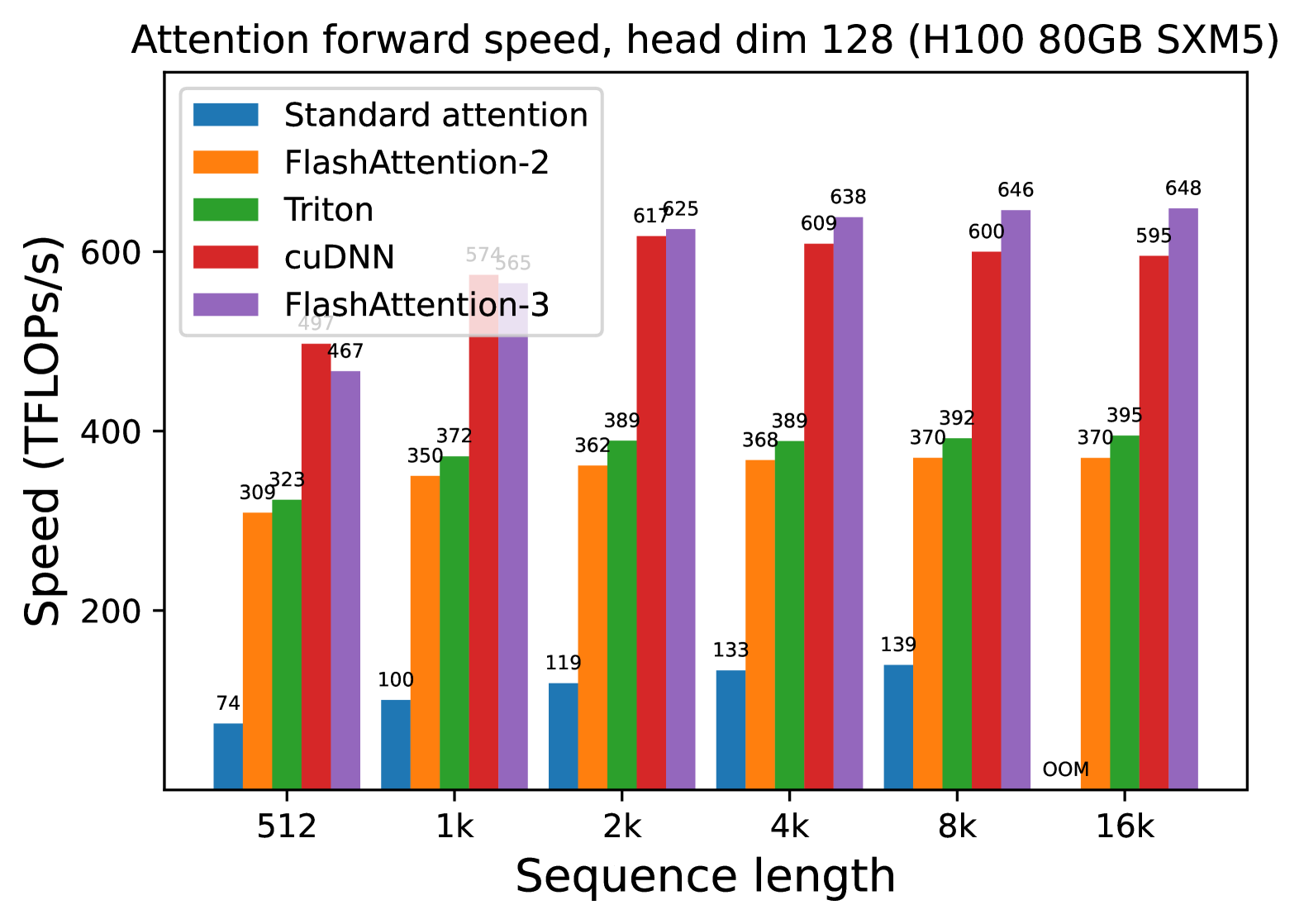

* **Title:** Attention forward speed, head dim 128 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Parameter Context:** Head dimension is fixed at 128.

## 2. Chart Structure and Metadata

* **Chart Type:** Grouped Bar Chart.

* **X-Axis (Independent Variable):** Sequence length.

    * **Categories:** 512, 1k, 2k, 4k, 8k, 16k.

* **Y-Axis (Dependent Variable):** Speed (TFLOPS/s).

    * **Scale:** Linear, ranging from 0 to 600+ (markers at 200, 400, 600).

* **Legend:**

    1. **Standard attention** (Blue)

    2. **FlashAttention-2** (Orange)

    3. **Triton** (Green)

    4. **cuDNN** (Red)

    5. **FlashAttention-3** (Purple)

## 3. Data Table Reconstruction

The following table extracts the numerical values (TFLOPS/s) labeled above each bar in the chart.

| Sequence Length | Standard attention (Blue) | FlashAttention-2 (Orange) | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :---: | :---: | :---: | :---: | :---: |
| **512** | 74 | 309 | 323 | 497 | 467 |
| **1k** | 100 | 350 | 372 | 574 | 565 |
| **2k** | 119 | 362 | 389 | 617 | 625 |
| **4k** | 133 | 368 | 389 | 609 | 638 |
| **8k** | 139 | 370 | 392 | 600 | 646 |
| **16k** | OOM* | 370 | 395 | 595 | 648 |

*\*OOM: Out of Memory*
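For readers who want to work with these numbers programmatically, the table can be transcribed into a small Python structure. This is a minimal sketch: the names `SEQ_LENS`, `SPEEDS`, `speed`, and `fastest` are illustrative choices, not part of the source chart, and the OOM entry is represented as `None` by assumption.

```python
# Values transcribed from the table above, in TFLOPS/s.
# None marks the out-of-memory (OOM) entry for standard attention at 16k.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Standard attention": [74, 100, 119, 133, 139, None],
    "FlashAttention-2": [309, 350, 362, 368, 370, 370],
    "Triton": [323, 372, 389, 389, 392, 395],
    "cuDNN": [497, 574, 617, 609, 600, 595],
    "FlashAttention-3": [467, 565, 625, 638, 646, 648],
}


def speed(method, seq_len):
    """Return the tabulated speed, or None where the run was out of memory."""
    return SPEEDS[method][SEQ_LENS.index(seq_len)]


def fastest(seq_len):
    """Return the (method, speed) pair with the highest speed at seq_len."""
    i = SEQ_LENS.index(seq_len)
    # Skip methods that did not complete at this sequence length.
    candidates = {m: v[i] for m, v in SPEEDS.items() if v[i] is not None}
    best = max(candidates, key=candidates.get)
    return best, candidates[best]
```

With this layout, `fastest("512")` returns `("cuDNN", 497)` and `fastest("16k")` returns `("FlashAttention-3", 648)`, matching the tallest bars in the first and last groups.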

## 4. Trend Analysis and Component Isolation

### Series Trends

* **Standard attention (Blue):** Rises slowly from 74 TFLOPS/s at 512 to 139 TFLOPS/s at 8k, then runs out of memory (OOM) at 16k. It is the slowest method at every sequence length where it completes.

* **FlashAttention-2 (Orange):** Rises from 309 TFLOPS/s at 512 and plateaus at about 370 TFLOPS/s from 8k onward; the total gain beyond 2k is only 8 TFLOPS/s.

* **Triton (Green):** Follows a similar trend to FlashAttention-2 and stays ahead of it at every sequence length, by 14 TFLOPS/s at 512 and by 21 to 27 TFLOPS/s from 1k onward, plateauing near 395 TFLOPS/s.

* **cuDNN (Red):** Shows a sharp upward trend, peaking at 2k (617 TFLOPS/s), then exhibits a slight downward trend as sequence length increases to 16k (595 TFLOPS/s).

* **FlashAttention-3 (Purple):** Shows the strongest scaling. It starts behind cuDNN at 512 (467 vs. 497 TFLOPS/s) and 1k (565 vs. 574 TFLOPS/s), overtakes all other methods at 2k, and continues to rise, reaching the highest recorded value of 648 TFLOPS/s at 16k.
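The per-series figures quoted above can be rechecked directly from the table values. The sketch below recomputes Triton's lead over FlashAttention-2 at each sequence length and locates the peak of a series; the variable and function names are illustrative, not taken from the source.

```python
# Table values in TFLOPS/s, ordered by sequence length.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
FA2 = [309, 350, 362, 368, 370, 370]
TRITON = [323, 372, 389, 389, 392, 395]
CUDNN = [497, 574, 617, 609, 600, 595]

# Triton's lead over FlashAttention-2 at each sequence length.
triton_lead = [t - f for t, f in zip(TRITON, FA2)]
print(dict(zip(SEQ_LENS, triton_lead)))
# {'512': 14, '1k': 22, '2k': 27, '4k': 21, '8k': 22, '16k': 25}


def peak(series):
    """Return (sequence length, value) at the maximum of a series."""
    best = max(range(len(series)), key=lambda i: series[i])
    return SEQ_LENS[best], series[best]


print(peak(CUDNN))  # ('2k', 617)
```

The lead is smallest at 512 and otherwise stays between 21 and 27 TFLOPS/s, while cuDNN is the only one of these three series whose peak falls before the longest sequence length.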

### Comparative Observations

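* **Efficiency Gap:** There is a large performance gap between "Standard attention" and all optimized kernels. At 8k, FlashAttention-3 (646 TFLOPS/s) is approximately 4.6x faster than Standard attention (139 TFLOPS/s), and even FlashAttention-2, the slowest optimized kernel at that length, is approximately 2.7x faster.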

* **Crossover Point:** FlashAttention-3 overtakes cuDNN as the fastest implementation at the 2k sequence length mark.

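* **Scalability:** FlashAttention-3 is the only implementation whose throughput increases at every step across the full tested range (512 to 16k), although the gain shrinks to 2 TFLOPS/s between 8k and 16k. FlashAttention-2 and Triton plateau without regressing, cuDNN declines after 2k, and Standard attention does not complete at 16k.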
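The three observations above reduce to simple arithmetic on the table. The following sketch, using hypothetical helper names (`speedup`, `first_crossover`, `strictly_increasing_over_full_range`) and `None` as an assumed stand-in for the OOM entry, shows one way to reproduce them.

```python
# Table values in TFLOPS/s; None marks the OOM entry.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Standard attention": [74, 100, 119, 133, 139, None],
    "FlashAttention-2": [309, 350, 362, 368, 370, 370],
    "Triton": [323, 372, 389, 389, 392, 395],
    "cuDNN": [497, 574, 617, 609, 600, 595],
    "FlashAttention-3": [467, 565, 625, 638, 646, 648],
}


def speedup(method, baseline, seq_len):
    """Ratio of two methods' speeds at one sequence length.

    Raises TypeError if either entry is None (OOM).
    """
    i = SEQ_LENS.index(seq_len)
    return SPEEDS[method][i] / SPEEDS[baseline][i]


def first_crossover(challenger, incumbent):
    """First sequence length at which challenger is faster, else None."""
    for label, c, inc in zip(SEQ_LENS, SPEEDS[challenger], SPEEDS[incumbent]):
        if c > inc:
            return label
    return None


def strictly_increasing_over_full_range(method):
    """True only if the method completes everywhere and rises at every step."""
    values = SPEEDS[method]
    if None in values:
        return False
    return all(a < b for a, b in zip(values, values[1:]))


print(round(speedup("FlashAttention-3", "Standard attention", "8k"), 1))  # 4.6
print(first_crossover("FlashAttention-3", "cuDNN"))  # 2k
print([m for m in SPEEDS if strictly_increasing_over_full_range(m)])
# ['FlashAttention-3']
```

The monotonicity check is deliberately strict: a tie between adjacent sequence lengths (as for FlashAttention-2 at 8k and 16k, or Triton at 2k and 4k) counts as a plateau, not as growth.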