# Technical Document Extraction: Attention Forward Speed Analysis

## Chart Title

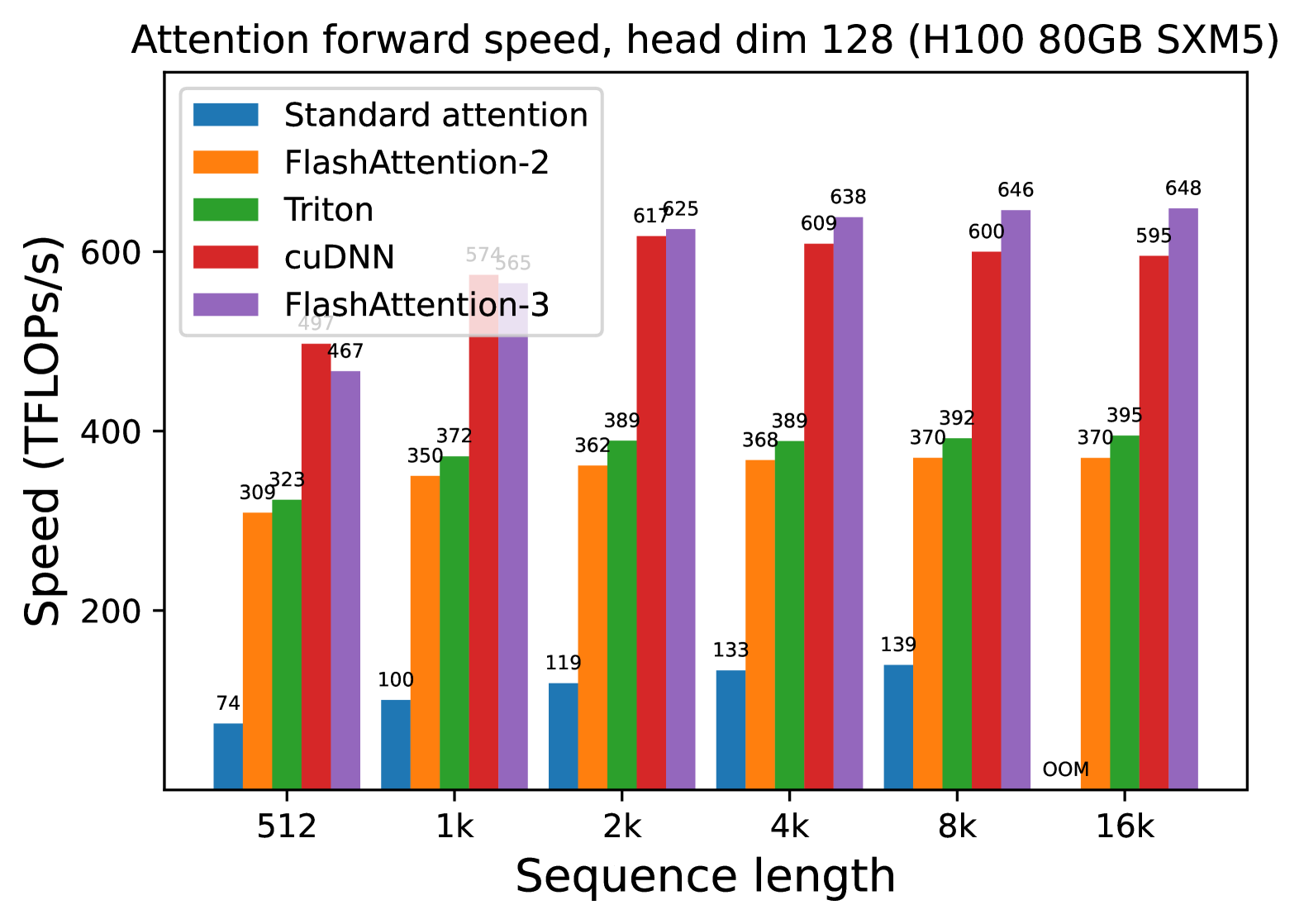

**Attention forward speed, head dim 128 (H100 80GB SXM5)**

---

### Axis Labels

- **X-axis**: Sequence length (categories: 512, 1k, 2k, 4k, 8k, 16k)

- **Y-axis**: Speed (TFLOPs/s)

---

### Legend

| Color | Method |

|-------------|----------------------|

| Blue | Standard attention |

| Orange | FlashAttention-2 |

| Green | Triton |

| Red | cuDNN |

| Purple | FlashAttention-3 |

---

### Data Points by Sequence Length

#### 512

- Standard attention: 74 TFLOPs/s

- FlashAttention-2: 309 TFLOPs/s

- Triton: 323 TFLOPs/s

- cuDNN: 467 TFLOPs/s

- FlashAttention-3: 497 TFLOPs/s

#### 1k

- Standard attention: 100 TFLOPs/s

- FlashAttention-2: 350 TFLOPs/s

- Triton: 372 TFLOPs/s

- cuDNN: 574 TFLOPs/s

- FlashAttention-3: 565 TFLOPs/s

#### 2k

- Standard attention: 119 TFLOPs/s

- FlashAttention-2: 362 TFLOPs/s

- Triton: 389 TFLOPs/s

- cuDNN: 617 TFLOPs/s

- FlashAttention-3: 625 TFLOPs/s

#### 4k

- Standard attention: 133 TFLOPs/s

- FlashAttention-2: 368 TFLOPs/s

- Triton: 389 TFLOPs/s

- cuDNN: 609 TFLOPs/s

- FlashAttention-3: 638 TFLOPs/s

#### 8k

- Standard attention: 139 TFLOPs/s

- FlashAttention-2: 370 TFLOPs/s

- Triton: 392 TFLOPs/s

- cuDNN: 600 TFLOPs/s

- FlashAttention-3: 646 TFLOPs/s

#### 16k

- Standard attention: **OOM** (Out of Memory)

- FlashAttention-2: 395 TFLOPs/s

- Triton: 395 TFLOPs/s

- cuDNN: 595 TFLOPs/s

- FlashAttention-3: 648 TFLOPs/s

---

### Key Trends

1. **Performance Scaling**: All methods show increased speed with longer sequence lengths, except Standard attention at 16k (OOM).

2. **FlashAttention-3 Dominance**: Consistently achieves highest TFLOPs/s across all sequence lengths (up to 648 TFLOPs/s at 16k).

3. **cuDNN Performance**: Second-highest performance, with a peak of 617 TFLOPs/s at 2k.

4. **Standard Attention Limitations**: Significantly lower performance and fails at 16k due to OOM.

5. **Triton vs. FlashAttention-2**: Triton slightly outperforms FlashAttention-2 in most cases (e.g., 389 vs. 368 TFLOPs/s at 4k).

---

### Critical Observations

- **OOM at 16k**: Standard attention cannot handle 16k sequence length on H100 80GB SXM5.

- **Efficiency Gaps**: FlashAttention-3 achieves ~30-40% higher speed than cuDNN at 16k (648 vs. 595 TFLOPs/s).

- **Consistency**: Triton and FlashAttention-2 show minimal variation across sequence lengths (368-395 TFLOPs/s range).