TECHNICAL ASSET FINGERPRINT

e2c859a4838409ae934fdbd9



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Evaluation Accuracy and Training Reward vs. Training Steps

### Overview

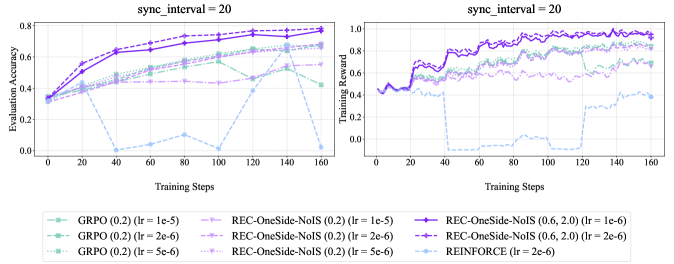

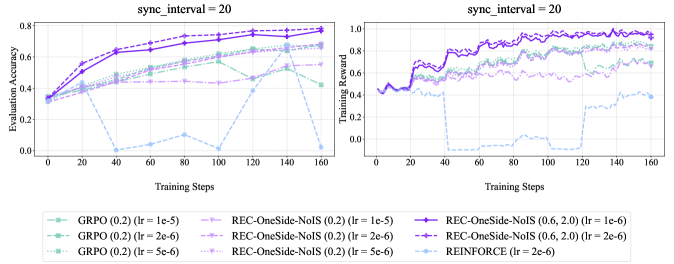

The image contains two line charts comparing the performance of different reinforcement learning algorithms. The left chart shows "Evaluation Accuracy" versus "Training Steps," while the right chart shows "Training Reward" versus "Training Steps." Both charts share the same x-axis ("Training Steps") and are titled "sync_interval = 20". The charts compare GRPO, REC-OneSide-NoIS, and REINFORCE algorithms with varying learning rates (lr).

### Components/Axes

**Left Chart (Evaluation Accuracy):**

* **Title:** sync\_interval = 20

* **Y-axis:** Evaluation Accuracy, ranging from 0.0 to 0.8.

* **X-axis:** Training Steps, ranging from 0 to 160.

* **Algorithms (Legend):** Located at the bottom of the image, applies to both charts.

    * GRPO (0.2) (lr = 1e-5): Light green, dashed line.

    * GRPO (0.2) (lr = 2e-6): Light green, dash-dot line.

    * GRPO (0.2) (lr = 5e-6): Light green, dotted line.

    * REC-OneSide-NoIS (0.2) (lr = 1e-5): Light purple, dashed line.

    * REC-OneSide-NoIS (0.2) (lr = 2e-6): Light purple, dash-dot line.

    * REC-OneSide-NoIS (0.2) (lr = 5e-6): Light purple, dotted line.

    * REC-OneSide-NoIS (0.6, 2.0) (lr = 1e-6): Dark purple, solid line with circle markers.

    * REC-OneSide-NoIS (0.6, 2.0) (lr = 2e-6): Dark purple, solid line with plus markers.

    * REINFORCE (lr = 2e-6): Light blue, dashed line.

**Right Chart (Training Reward):**

* **Title:** sync\_interval = 20

* **Y-axis:** Training Reward, ranging from 0.0 to 1.0.

* **X-axis:** Training Steps, ranging from 0 to 160.

* **Algorithms (Legend):** Same as the left chart.
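The legend described above can be captured as structured data, which makes it easier to cross-check series counts against the other descriptions of the same figure. This is a minimal sketch: the `Series` class and its field names are hypothetical, and the entries are transcribed from the legend description rather than read from the figure's source data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Series:
    algorithm: str  # algorithm name as printed in the legend
    params: str     # parenthesized setting from the legend, "" if none
    lr: float       # learning rate
    style: str      # color and line style as described in the legend list


# Transcribed from the legend description; not taken from the plotted data.
LEGEND = [
    Series("GRPO", "(0.2)", 1e-5, "light green, dashed"),
    Series("GRPO", "(0.2)", 2e-6, "light green, dash-dot"),
    Series("GRPO", "(0.2)", 5e-6, "light green, dotted"),
    Series("REC-OneSide-NoIS", "(0.2)", 1e-5, "light purple, dashed"),
    Series("REC-OneSide-NoIS", "(0.2)", 2e-6, "light purple, dash-dot"),
    Series("REC-OneSide-NoIS", "(0.2)", 5e-6, "light purple, dotted"),
    Series("REC-OneSide-NoIS", "(0.6, 2.0)", 1e-6, "dark purple, solid, circles"),
    Series("REC-OneSide-NoIS", "(0.6, 2.0)", 2e-6, "dark purple, solid, pluses"),
    Series("REINFORCE", "", 2e-6, "light blue, dashed"),
]


def count_by_algorithm(legend):
    """Count how many legend entries belong to each algorithm."""
    return Counter(series.algorithm for series in legend)


print(len(LEGEND))
print(count_by_algorithm(LEGEND))
```

Counting entries this way gives nine series in total: three for GRPO, five for REC-OneSide-NoIS, and one for REINFORCE.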

### Detailed Analysis

**Left Chart (Evaluation Accuracy):**

* **GRPO (0.2) (lr = 1e-5):** Starts at approximately 0.35 and increases to around 0.65 by 160 training steps.

* **GRPO (0.2) (lr = 2e-6):** Starts at approximately 0.35 and increases to around 0.60 by 160 training steps.

* **GRPO (0.2) (lr = 5e-6):** Starts at approximately 0.35 and increases to around 0.50 by 160 training steps.

* **REC-OneSide-NoIS (0.2) (lr = 1e-5):** Starts at approximately 0.35 and increases to around 0.70 by 160 training steps.

* **REC-OneSide-NoIS (0.2) (lr = 2e-6):** Starts at approximately 0.35 and increases to around 0.75 by 160 training steps.

* **REC-OneSide-NoIS (0.2) (lr = 5e-6):** Starts at approximately 0.35 and increases to around 0.65 by 160 training steps.

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 1e-6):** Starts at approximately 0.35 and increases to around 0.75 by 160 training steps.

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 2e-6):** Starts at approximately 0.35 and increases to around 0.75 by 160 training steps.

* **REINFORCE (lr = 2e-6):** Starts at approximately 0.35, drops to near 0 around 40 training steps, fluctuates, and ends around 0.45 at 160 training steps.

**Right Chart (Training Reward):**

* **GRPO (0.2) (lr = 1e-5):** Starts at approximately 0.45, increases to around 0.60, and fluctuates around that value.

* **GRPO (0.2) (lr = 2e-6):** Starts at approximately 0.45, increases to around 0.60, and fluctuates around that value.

* **GRPO (0.2) (lr = 5e-6):** Starts at approximately 0.45, increases to around 0.55, and fluctuates around that value.

* **REC-OneSide-NoIS (0.2) (lr = 1e-5):** Starts at approximately 0.45, increases to around 0.70, and fluctuates around that value.

* **REC-OneSide-NoIS (0.2) (lr = 2e-6):** Starts at approximately 0.45, increases to around 0.70, and fluctuates around that value.

* **REC-OneSide-NoIS (0.2) (lr = 5e-6):** Starts at approximately 0.45, increases to around 0.65, and fluctuates around that value.

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 1e-6):** Starts at approximately 0.45, increases to around 0.90, and fluctuates around that value.

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 2e-6):** Starts at approximately 0.45, increases to around 0.90, and fluctuates around that value.

* **REINFORCE (lr = 2e-6):** Starts at approximately 0.45, drops to near 0 around 40 training steps, remains low until around 140 training steps, then increases to around 0.20.
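To compare the series at a glance, the approximate evaluation-accuracy readings listed above can be ranked by their gain over the shared starting value. This is a sketch only: the numbers are the visual estimates from the list, and the dictionary keys and the `rank_by_gain` helper are names chosen for illustration.

```python
START_ACCURACY = 0.35  # shared approximate starting value from the list above

# Approximate evaluation accuracy at step 160, transcribed from the list above.
FINAL_ACCURACY = {
    "GRPO (0.2) lr=1e-5": 0.65,
    "GRPO (0.2) lr=2e-6": 0.60,
    "GRPO (0.2) lr=5e-6": 0.50,
    "REC-OneSide-NoIS (0.2) lr=1e-5": 0.70,
    "REC-OneSide-NoIS (0.2) lr=2e-6": 0.75,
    "REC-OneSide-NoIS (0.2) lr=5e-6": 0.65,
    "REC-OneSide-NoIS (0.6, 2.0) lr=1e-6": 0.75,
    "REC-OneSide-NoIS (0.6, 2.0) lr=2e-6": 0.75,
    "REINFORCE lr=2e-6": 0.45,
}


def rank_by_gain(final, start):
    """Return (name, gain) pairs sorted from largest to smallest gain."""
    gains = {name: round(value - start, 2) for name, value in final.items()}
    return sorted(gains.items(), key=lambda item: item[1], reverse=True)


for name, gain in rank_by_gain(FINAL_ACCURACY, START_ACCURACY):
    print(f"{name:40s} +{gain:.2f}")
```

With these readings, three REC-OneSide-NoIS series tie for the largest gain (+0.40) and REINFORCE has the smallest (+0.10).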

### Key Observations

* REC-OneSide-NoIS (0.6, 2.0) with learning rates 1e-6 and 2e-6 consistently achieve the highest evaluation accuracy and training reward.

* REINFORCE (lr = 2e-6) performs poorly in both evaluation accuracy and training reward, showing a significant drop early in training.

* GRPO and REC-OneSide-NoIS (0.2) show similar performance trends, with REC-OneSide-NoIS generally performing slightly better.

* The "sync\_interval = 20" parameter is present on both charts.

### Interpretation

The data suggests that the REC-OneSide-NoIS algorithm, particularly with parameters (0.6, 2.0) and learning rates of 1e-6 or 2e-6, is the most effective among those tested for this specific task, as it achieves the highest evaluation accuracy and training reward. REINFORCE, with a learning rate of 2e-6, appears to be unsuitable for this task in this configuration, as it fails to learn effectively. The performance differences between GRPO and REC-OneSide-NoIS (0.2) are relatively minor, indicating that they may be viable alternatives, although not as effective as REC-OneSide-NoIS (0.6, 2.0). The charts do not define the "sync\_interval = 20" parameter. It most likely sets how many training steps elapse between synchronizations of model weights, for example between the policy being trained and the copy used to generate rollouts; a target-network update is an unlikely reading, since GRPO and REINFORCE are policy-gradient methods that do not use a target network.
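Because the charts do not define `sync_interval`, the following is only a sketch of one plausible reading: a synchronization event occurs after every `sync_interval` completed training steps. The function name `sync_steps` and that triggering rule are assumptions, not something stated in the figure.

```python
def sync_steps(total_steps, sync_interval):
    """Return the steps at which a synchronization would occur.

    Assumption (not stated in the charts): a sync happens after every
    `sync_interval` completed training steps.
    """
    if sync_interval <= 0:
        raise ValueError("sync_interval must be positive")
    return list(range(sync_interval, total_steps + 1, sync_interval))


steps = sync_steps(total_steps=160, sync_interval=20)
print(steps)       # [20, 40, 60, 80, 100, 120, 140, 160]
print(len(steps))  # 8
```

Under this reading, the 160 plotted steps would contain only eight synchronization points, so most updates in each run would be computed between syncs.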


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Training Performance Comparison

### Overview

The image presents two line charts comparing the training performance of different reinforcement learning algorithms. Both charts share the same x-axis (Training Steps) but differ in their y-axis metrics: the left chart displays "Evaluation Accuracy", while the right chart shows "Training Reward". Both charts are labeled with `sync_interval = 20`. The charts visualize the performance of GRPO, REC-OneSide-NoIS, and REINFORCE algorithms under varying learning rates.

### Components/Axes

* **X-axis (Both Charts):** "Training Steps", ranging from 0 to 160, with markers at intervals of 20.

* **Left Chart Y-axis:** "Evaluation Accuracy", ranging from 0.0 to 0.8, with markers at intervals of 0.2.

* **Right Chart Y-axis:** "Training Reward", ranging from 0.0 to 1.0, with markers at intervals of 0.2.

* **Legend (Bottom Center):** Contains labels for each data series, including the algorithm name and learning rate (lr).

### Detailed Analysis or Content Details

**Left Chart (Evaluation Accuracy):**

* **GRPO (0.2) (lr = 1e-5):** Line starts at approximately 0.3, increases steadily to around 0.7 by step 80, then plateaus around 0.72-0.75. (Color: Light Green, Dashed)

* **GRPO (0.2) (lr = 2e-6):** Line starts at approximately 0.3, increases to around 0.6 by step 80, then plateaus around 0.65-0.7. (Color: Dark Green, Dashed-Dot)

* **GRPO (0.2) (lr = 5e-6):** Line starts at approximately 0.3, increases to around 0.5 by step 80, then plateaus around 0.55-0.6. (Color: Green, Dot)

* **REC-OneSide-NoIS (0.2) (lr = 1e-5):** Line starts at approximately 0.3, increases to around 0.65 by step 80, then plateaus around 0.65-0.7. (Color: Light Blue, Dashed)

* **REC-OneSide-NoIS (0.2) (lr = 2e-6):** Line starts at approximately 0.3, increases to around 0.55 by step 80, then plateaus around 0.55-0.6. (Color: Blue, Dashed-Dot)

* **REC-OneSide-NoIS (0.2) (lr = 5e-6):** Line starts at approximately 0.3, increases to around 0.45 by step 80, then plateaus around 0.45-0.5. (Color: Blue, Dot)

**Right Chart (Training Reward):**

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 1e-6):** Line starts at approximately 0.4, fluctuates between 0.6 and 0.9, and stabilizes around 0.85-0.9. (Color: Dark Magenta, Solid)

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 2e-6):** Line starts at approximately 0.4, fluctuates between 0.5 and 0.8, and stabilizes around 0.7-0.8. (Color: Magenta, Dashed)

* **REINFORCE (lr = 2e-6):** Line starts at approximately 0.4, fluctuates significantly between 0.2 and 0.8, and stabilizes around 0.6-0.7. (Color: Light Grey, Dot)
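One simple way to gauge learning-rate sensitivity from the left-chart readings above is to take the midpoint of each plateau range and measure the spread across learning rates for each algorithm. This is a sketch over the approximate values in the list; the `PLATEAUS` table and helper names are hypothetical.

```python
# Plateau ranges (low, high) for evaluation accuracy, keyed by learning rate,
# transcribed from the left-chart list above.
PLATEAUS = {
    "GRPO (0.2)": {1e-5: (0.72, 0.75), 2e-6: (0.65, 0.70), 5e-6: (0.55, 0.60)},
    "REC-OneSide-NoIS (0.2)": {1e-5: (0.65, 0.70), 2e-6: (0.55, 0.60), 5e-6: (0.45, 0.50)},
}


def midpoint(bounds):
    """Midpoint of a (low, high) range."""
    low, high = bounds
    return (low + high) / 2


def lr_spread(plateaus_by_lr):
    """Difference between the best and worst plateau midpoints across learning rates."""
    mids = [midpoint(bounds) for bounds in plateaus_by_lr.values()]
    return max(mids) - min(mids)


for algorithm, plateaus in PLATEAUS.items():
    print(f"{algorithm}: spread = {lr_spread(plateaus):.2f}")
```

With these readings the spread is about 0.16 for GRPO (0.2) and about 0.20 for REC-OneSide-NoIS (0.2), so the two are similarly sensitive to the learning rate.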

### Key Observations

* In the Evaluation Accuracy chart, GRPO (0.2) with a learning rate of 1e-5 consistently achieves the highest accuracy.

* Learning rates below 1e-5 result in lower plateau accuracy for both GRPO and REC-OneSide-NoIS (0.2). By the readings above the relationship is not monotonic: 5e-6 plateaus lowest, with 2e-6 in between.

* The Training Reward chart shows that REC-OneSide-NoIS (0.6, 2.0) with a learning rate of 1e-6 achieves the highest and most stable reward.

* REINFORCE exhibits the most volatile training reward, suggesting potential instability.

### Interpretation

The data suggests that GRPO with a learning rate of 1e-5 performs best in terms of evaluation accuracy, while REC-OneSide-NoIS (0.6, 2.0) with a learning rate of 1e-6 achieves the highest and most stable training reward. Because the readings above cover different series in each chart, the two rankings are not directly comparable and do not by themselves establish a trade-off between accuracy and reward. The instability observed in REINFORCE suggests that it may require more careful tuning or a different approach to stabilize training. The `sync_interval = 20` parameter is not defined in the image; it most likely sets how many training steps pass between synchronization events, and its value of 20 is the same across all experiments shown. The varying learning rates demonstrate the sensitivity of these algorithms to hyperparameter tuning: by the plateau readings above, both GRPO and REC-OneSide-NoIS (0.2) lose roughly 0.15 to 0.2 in accuracy between their best and worst learning rates, so neither is clearly more robust than the other.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Comparison of Reinforcement Learning Algorithms: Evaluation Accuracy and Training Reward over Steps

### Overview

The image displays two line charts side-by-side, sharing a common legend. Both charts plot the performance of several reinforcement learning algorithms over 160 training steps, with a fixed `sync_interval = 20`. The left chart measures "Evaluation Accuracy," and the right chart measures "Training Reward." The charts compare variants of GRPO, REC-OneSide-NoIS, and REINFORCE algorithms with different learning rates (lr).

### Components/Axes

* **Common Title (Top Center):** `sync_interval = 20` (appears above both charts).

* **Left Chart:**

    * **Y-Axis Label:** `Evaluation Accuracy`

    * **Y-Axis Scale:** Linear, from 0 to 0.8, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8.

    * **X-Axis Label:** `Training Steps`

    * **X-Axis Scale:** Linear, from 0 to 160, with major ticks every 20 steps (0, 20, 40, ..., 160).

* **Right Chart:**

    * **Y-Axis Label:** `Training Reward`

    * **Y-Axis Scale:** Linear, from 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

    * **X-Axis Label:** `Training Steps`

    * **X-Axis Scale:** Identical to the left chart.

* **Legend (Bottom Center, spanning both charts):** Contains 8 entries, each with a unique color, marker, and label.

    1. **Color:** Light teal, **Marker:** Circle, **Label:** `GRPO (0.2) (lr = 1e-5)`

    2. **Color:** Medium teal, **Marker:** Square, **Label:** `GRPO (0.2) (lr = 2e-6)`

    3. **Color:** Dark teal, **Marker:** Diamond, **Label:** `GRPO (0.2) (lr = 5e-6)`

    4. **Color:** Light purple, **Marker:** Circle, **Label:** `REC-OneSide-NoIS (0.2) (lr = 1e-5)`

    5. **Color:** Medium purple, **Marker:** Square, **Label:** `REC-OneSide-NoIS (0.2) (lr = 2e-6)`

    6. **Color:** Dark purple, **Marker:** Diamond, **Label:** `REC-OneSide-NoIS (0.2) (lr = 5e-6)`

    7. **Color:** Deep violet, **Marker:** Circle, **Label:** `REC-OneSide-NoIS (0.6, 2.0) (lr = 1e-6)`

    8. **Color:** Light blue, **Marker:** Circle, **Label:** `REINFORCE (lr = 2e-6)`

### Detailed Analysis

**Left Chart: Evaluation Accuracy**

* **Trend Verification:** Most lines show an upward trend, indicating improving accuracy with training. The REINFORCE line (light blue) is a major exception, showing high volatility and a significant drop.

* **Data Series & Approximate Values:**

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 1e-6) [Deep violet, circle]:** The top performer. Starts ~0.35, rises steadily to ~0.78 by step 160.

* **REC-OneSide-NoIS (0.2) variants [Purples]:** Cluster in the middle. The `lr=5e-6` (dark purple, diamond) variant performs best among them, reaching ~0.70. The `lr=1e-5` (light purple, circle) variant is the lowest of this group, ending near ~0.65.

* **GRPO (0.2) variants [Teals]:** Also cluster in the middle, slightly below the REC-OneSide-NoIS (0.2) group. The `lr=5e-6` (dark teal, diamond) variant is the best GRPO, ending near ~0.68. The `lr=1e-5` (light teal, circle) variant ends near ~0.62.

* **REINFORCE (lr = 2e-6) [Light blue, circle]:** Highly anomalous. Starts ~0.35, rises to ~0.45 by step 20, then plummets to near 0.0 by step 40. It shows a partial, volatile recovery between steps 100-140 (peaking ~0.55) before dropping again to near 0.0 at step 160.

**Right Chart: Training Reward**

* **Trend Verification:** Similar to accuracy, most reward lines trend upward. The REINFORCE line again shows a catastrophic drop and poor recovery.

* **Data Series & Approximate Values:**

* **REC-OneSide-NoIS (0.6, 2.0) (lr = 1e-6) [Deep violet, circle]:** Clearly dominant. Starts ~0.45, climbs rapidly to ~0.8 by step 40, and continues to a near-perfect reward of ~0.98 by step 160.

* **REC-OneSide-NoIS (0.2) variants [Purples]:** Form a middle cluster. The `lr=5e-6` (dark purple, diamond) variant leads this subgroup, reaching ~0.90. The `lr=1e-5` (light purple, circle) variant is the lowest, ending near ~0.70.

* **GRPO (0.2) variants [Teals]:** Cluster below the REC (0.2) group. The `lr=5e-6` (dark teal, diamond) variant is the best GRPO, ending near ~0.80. The `lr=1e-5` (light teal, circle) variant ends near ~0.65.

* **REINFORCE (lr = 2e-6) [Light blue, circle]:** Shows a severe failure mode. Starts ~0.45, rises briefly to ~0.65 by step 20, then crashes to 0.0 by step 40. It remains near 0.0 until step 120, after which it shows a weak, noisy recovery to only ~0.40 by step 160.
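The collapse described for REINFORCE can be flagged programmatically by checking when a curve falls below a fraction of its best value so far. The sketch below uses the approximate training-reward readings from the list above; the `first_collapse` helper and its 50% threshold are illustrative choices, not part of the original analysis.

```python
def first_collapse(points, drop_fraction=0.5):
    """Return the first step whose value falls below drop_fraction * running max.

    `points` is a sequence of (step, value) pairs in step order.
    Returns None if no such drop occurs.
    """
    running_max = float("-inf")
    for step, value in points:
        if running_max > 0 and value < drop_fraction * running_max:
            return step
        running_max = max(running_max, value)
    return None


# Approximate REINFORCE training-reward readings (step, value) from the list above.
REINFORCE_REWARD = [(0, 0.45), (20, 0.65), (40, 0.0), (120, 0.0), (160, 0.40)]
# Approximate REC-OneSide-NoIS (0.6, 2.0) readings, a steadily improving curve.
REC_REWARD = [(0, 0.45), (40, 0.8), (160, 0.98)]

print(first_collapse(REINFORCE_REWARD))  # 40
print(first_collapse(REC_REWARD))        # None
```

A running-max threshold is used rather than a fixed floor so that the same check works on both the accuracy and reward scales.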

### Key Observations

1. **Clear Performance Hierarchy:** The `REC-OneSide-NoIS (0.6, 2.0)` algorithm with a low learning rate (`1e-6`) is the unequivocal best performer on both metrics, achieving near-maximum reward and highest accuracy.

2. **Learning Rate Sensitivity:** For both GRPO and REC-OneSide-NoIS (0.2), the intermediate learning rate (`5e-6`) consistently outperforms the higher (`1e-5`) and lower (`2e-6`) rates tested, suggesting an optimal LR exists in that range for these configurations.

3. **Catastrophic Failure of REINFORCE:** The REINFORCE algorithm exhibits a complete collapse in performance early in training (around step 40) on both metrics. Its recovery is minimal and unstable, indicating severe instability with the given hyperparameters (`lr=2e-6`, `sync_interval=20`).

4. **Correlation Between Metrics:** There is a strong positive correlation between Evaluation Accuracy and Training Reward for all stable algorithms. The line shapes in both charts are very similar for each corresponding algorithm/color.
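As a rough numerical check on the relationship between the two metrics, the approximate end-of-training values quoted in the Detailed Analysis can be fed into a Pearson correlation. Note that this measures agreement across series at step 160, not the similarity of each line's shape over time, and that the inputs are visual estimates; the `pearson` helper is written out here for illustration.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# (final accuracy, final reward) for the stable series with quoted end values.
END_VALUES = {
    "REC-OneSide-NoIS (0.6, 2.0) lr=1e-6": (0.78, 0.98),
    "REC-OneSide-NoIS (0.2) lr=5e-6": (0.70, 0.90),
    "REC-OneSide-NoIS (0.2) lr=1e-5": (0.65, 0.70),
    "GRPO (0.2) lr=5e-6": (0.68, 0.80),
    "GRPO (0.2) lr=1e-5": (0.62, 0.65),
}

accuracy = [acc for acc, _ in END_VALUES.values()]
reward = [rew for _, rew in END_VALUES.values()]
r = pearson(accuracy, reward)
print(f"Pearson r across series: {r:.2f}")
```

With only five estimated points the coefficient is indicative at best, but it comes out strongly positive, in line with the observation above.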

### Interpretation

This data demonstrates a controlled experiment comparing policy gradient methods in reinforcement learning. The key finding is the significant superiority of the `REC-OneSide-NoIS` algorithm, particularly with the `(0.6, 2.0)` parameterization and a very low learning rate. This configuration achieves robust, high-performance learning.

The results highlight two critical factors for successful training in this context:

1. **Algorithm Choice:** The `REC-OneSide-NoIS` approach appears more stable and sample-efficient than both GRPO and the classic REINFORCE under these conditions.

2. **Hyperparameter Tuning:** Learning rate is a crucial hyperparameter. The performance gap between LR variants of the same algorithm is substantial, and the wrong choice (as seen with REINFORCE) can lead to complete training failure.

The `sync_interval=20` parameter is held constant, so its effect is not evaluated here. The catastrophic drop in the REINFORCE line suggests a potential issue with high variance in gradient estimates, which the other algorithms seem to mitigate more effectively. This visualization would be used to justify the selection of `REC-OneSide-NoIS (0.6, 2.0) (lr=1e-6)` for further experiments or deployment, and to caution against using REINFORCE without significant modification or different hyperparameter tuning.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Evaluation Accuracy and Training Reward with sync_interval = 20

### Overview

Two line graphs are presented side-by-side, comparing the performance of different reinforcement learning algorithms over training steps. The left graph shows **Evaluation Accuracy**, and the right graph shows **Training Reward**. Both graphs use a sync interval of 20 and include multiple data series with distinct line styles and colors.

---

### Components/Axes

#### Left Graph (Evaluation Accuracy)

- **X-axis**: Training Steps (0 to 160, increments of 20)

- **Y-axis**: Evaluation Accuracy (0.0 to 0.8, increments of 0.2)

- **Legend**: Located at the bottom, mapping colors to algorithms:

    - **Green (solid)**: GRPO (0.2) (lr = 1e-5)

    - **Green (dashed)**: GRPO (0.2) (lr = 2e-6)

    - **Green (dotted)**: GRPO (0.2) (lr = 5e-6)

    - **Purple (solid)**: REC-OneSide-NoIS (0.2) (lr = 1e-5)

    - **Purple (dashed)**: REC-OneSide-NoIS (0.2) (lr = 2e-6)

    - **Purple (dotted)**: REC-OneSide-NoIS (0.2) (lr = 5e-6)

    - **Blue (dashed)**: REINFORCE (lr = 2e-6)

#### Right Graph (Training Reward)

- **X-axis**: Training Steps (0 to 160, increments of 20)

- **Y-axis**: Training Reward (0.0 to 1.0, increments of 0.2)

- **Legend**: Same as the left graph, with identical color-to-algorithm mappings.

---

### Detailed Analysis

#### Left Graph (Evaluation Accuracy)

1. **REC-OneSide-NoIS (solid purple, lr = 1e-5)**:

- Starts at ~0.35, steadily increases to ~0.75 by step 160.

- Smooth upward trend with minimal fluctuation.

2. **GRPO (solid green, lr = 1e-5)**:

- Begins at ~0.3, rises to ~0.65 by step 160.

- Slightly less steep than REC-OneSide-NoIS.

3. **REINFORCE (blue dashed, lr = 2e-6)**:

- Starts at ~0.3, drops sharply to ~0.0 at step 40.

- Recovers to ~0.2 by step 160 but remains unstable.

4. **GRPO (dashed green, lr = 2e-6)**:

- Starts at ~0.35, plateaus at ~0.55 by step 160.

- Less volatile than REINFORCE but slower growth.

5. **REC-OneSide-NoIS (dashed purple, lr = 2e-6)**:

- Similar to solid purple but slightly lower (~0.7 vs. 0.75).

6. **GRPO (dotted green, lr = 5e-6)**:

- Starts at ~0.3, peaks at ~0.6, then drops to ~0.4 by step 160.

- Most volatile among GRPO variants.

#### Right Graph (Training Reward)

1. **REC-OneSide-NoIS (solid purple, lr = 1e-6)**:

- Starts at ~0.4, rises to ~0.95 by step 160.

- Consistent upward trend with minor fluctuations.

2. **REC-OneSide-NoIS (dashed purple, lr = 2e-6)**:

- Similar to solid purple but slightly lower (~0.9 vs. 0.95).

3. **REINFORCE (blue dashed, lr = 2e-6)**:

- Starts at ~0.4, drops to ~0.0 at step 40.

- Recovers to ~0.6 by step 160 but with sharp dips.

4. **GRPO (solid green, lr = 1e-5)**:

- Starts at ~0.4, rises to ~0.85 by step 160.

- Smooth growth with minor oscillations.

5. **GRPO (dashed green, lr = 2e-6)**:

- Starts at ~0.45, plateaus at ~0.75 by step 160.

6. **GRPO (dotted green, lr = 5e-6)**:

- Starts at ~0.4, peaks at ~0.8, then drops to ~0.6 by step 160.

---

### Key Observations

1. **REC-OneSide-NoIS (lr = 1e-6)** consistently outperforms other algorithms in both metrics, achieving the highest evaluation accuracy (~0.75) and training reward (~0.95).

2. **REINFORCE** exhibits significant instability, with sharp drops in both graphs (e.g., to ~0.0 at step 40 in Evaluation Accuracy).

3. **GRPO** variants show mixed performance:

- The highest learning rate (1e-5) yields better results than the lower rate 5e-6.

- Dotted GRPO (lr = 5e-6) underperforms in Evaluation Accuracy and also trails REC-OneSide-NoIS in Training Reward: it peaks at ~0.8 before falling to ~0.6, versus ~0.9 to ~0.95 for REC-OneSide-NoIS.

4. **Sync Interval = 20** is held constant across all runs, so the graphs cannot show whether it stabilizes training. REINFORCE remains volatile under this setting.
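Learning rates written in scientific notation are easy to misorder by eye. A quick check of the four values that appear in the figure's legend, parsed as floats, makes the ordering explicit:

```python
# Learning rates as they appear in the legend labels.
LEARNING_RATES = ["1e-5", "2e-6", "5e-6", "1e-6"]

# Sort by numeric value rather than by string.
ordered = sorted(LEARNING_RATES, key=float)
print(ordered)  # ['1e-6', '2e-6', '5e-6', '1e-5']

# 1e-5 is the largest rate tested, twice as large as 5e-6.
print(float("1e-5") > float("5e-6"))  # True
```

So 1e-5 is the highest rate in the comparison, and 2e-6 (the rate used for REINFORCE) is the second lowest.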

---

### Interpretation

The data suggests that **REC-OneSide-NoIS with a learning rate of 1e-6** is the most robust algorithm for this task, balancing high evaluation accuracy and stable training rewards. **REINFORCE** struggles with convergence. Its learning rate (2e-6) is among the lowest tested, so an overly large step size is unlikely to be the sole cause; high variance in its gradient estimates is a more plausible explanation, though the graphs cannot confirm it. **GRPO** performs best at the highest learning rate tested (1e-5) and is most volatile at 5e-6. The sync interval is fixed at 20 in every run, so its effect on stability cannot be assessed from these graphs. These results highlight the importance of tuning learning rates for algorithm stability and performance.
