## Flowchart: Multi-Round Agent Evaluation Process

### Overview

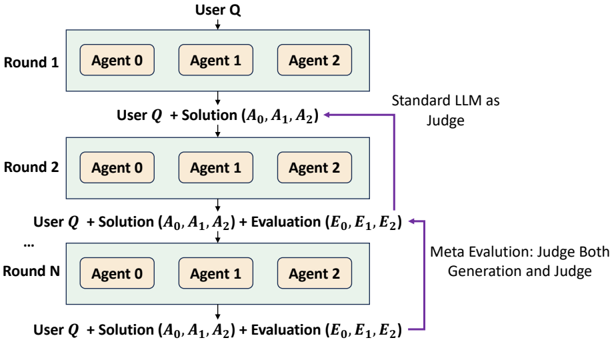

The diagram illustrates a multi-stage iterative process where three agents (Agent 0, Agent 1, Agent 2) generate solutions to a user query (User Q) across N rounds. Each round incorporates prior solutions and evaluations, culminating in a meta-evaluation phase that assesses both solution generation and judgment quality.

### Components

1. **Rounds**: Labeled "Round 1" to "Round N" (top to bottom)

2. **Agents**: Three agents per round (Agent 0, Agent 1, Agent 2)

3. **Input Flow**:

   - Starts with "User Q"

   - Progresses through "User Q + Solution (A₀, A₁, A₂)" in each subsequent round

   - Adds "Evaluation (E₀, E₁, E₂)" from Round 2 onward

4. **Evaluation Paths**:

   - Standard LLM as Judge (solid purple arrow)

   - Meta Evaluation: Judge Both Generation and Judge (dashed purple arrow)

5. **Legend**:

   - Standard LLM as Judge (solid purple)

   - Meta Evaluation (dashed purple)
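The input flow listed above can be sketched as a small prompt builder. This is a minimal illustration and not the implementation behind the diagram: `RoundContext`, `build_agent_input`, and the plain-text layout are hypothetical names and choices made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class RoundContext:
    """Hypothetical container for what an agent sees at the start of a round."""
    question: str                                         # "User Q"
    solutions: list[str] = field(default_factory=list)    # A0, A1, A2 from the previous round
    evaluations: list[str] = field(default_factory=list)  # E0, E1, E2, once they exist


def build_agent_input(ctx: RoundContext) -> str:
    """Assemble the round input: Q alone, then Q + solutions, then Q + solutions + evaluations."""
    parts = [f"User Q: {ctx.question}"]
    for i, solution in enumerate(ctx.solutions):
        parts.append(f"Solution A{i}: {solution}")
    for i, evaluation in enumerate(ctx.evaluations):
        parts.append(f"Evaluation E{i}: {evaluation}")
    return "\n".join(parts)
```

With empty `solutions` and `evaluations`, the builder returns only the "User Q" line, which corresponds to the Round 1 input; later rounds append whatever the previous round produced.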

### Detailed Analysis

- **Round 1**:

  - Input: User Q

  - Output: Solutions (A₀, A₁, A₂) from all agents

  - Evaluation: Not yet applied

- **Round 2+**:

  - Input: User Q plus the previous round's solutions and evaluations

  - Output: New solutions (A₀, A₁, A₂) + evaluations (E₀, E₁, E₂)

- **Evaluation Flow**:

  - The standard LLM judge evaluates the agents' solutions in parallel across rounds

  - The meta-evaluation path diverges after Round 2 and assesses both solution quality and judgment reliability
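The round structure described above can be sketched as a simple loop. This is a sketch under stated assumptions, not the diagram's actual implementation: `run_rounds`, `make_stub_agent`, and `stub_judge` are hypothetical names, the agents and judge are stand-in functions for LLM calls, and the code assumes that judging begins in Round 2 because Round 1 is described as unevaluated.

```python
from typing import Callable

# Hypothetical signatures: real agents and judges would wrap LLM calls.
Agent = Callable[[str, list[str], list[str]], str]  # (question, prior solutions, prior evaluations) -> solution
Judge = Callable[[str, str], str]                    # (question, solution) -> evaluation


def run_rounds(question: str, agents: list[Agent], judge: Judge, n_rounds: int) -> list[dict]:
    """Run N rounds and return the per-round solutions and evaluations."""
    history = []
    solutions: list[str] = []
    evaluations: list[str] = []
    for round_no in range(1, n_rounds + 1):
        # Every agent sees the same input: Q plus whatever the previous round produced.
        new_solutions = [agent(question, solutions, evaluations) for agent in agents]
        # Assumption: Round 1 output is not judged; evaluations start in Round 2.
        new_evaluations = (
            [judge(question, s) for s in new_solutions] if round_no >= 2 else []
        )
        history.append(
            {"round": round_no, "solutions": new_solutions, "evaluations": new_evaluations}
        )
        solutions, evaluations = new_solutions, new_evaluations
    return history


def make_stub_agent(name: str) -> Agent:
    """Build a placeholder agent that only reports how much context it received."""
    def agent(question: str, prior_solutions: list[str], prior_evaluations: list[str]) -> str:
        return (
            f"{name} answer using {len(prior_solutions)} solutions, "
            f"{len(prior_evaluations)} evaluations"
        )
    return agent


def stub_judge(question: str, solution: str) -> str:
    """Placeholder judge; a real one would prompt an LLM."""
    return f"evaluation of: {solution}"


history = run_rounds(
    "User Q", [make_stub_agent(f"Agent {i}") for i in range(3)], stub_judge, n_rounds=3
)
```

Keeping the agents and the judge as plain callables makes it easy to swap the stubs for real model calls without changing the loop.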

### Key Observations

1. **Iterative Refinement**: Later rounds incorporate prior evaluations (E₀-E₂) to inform subsequent agent responses

2. **Dual Evaluation Framework**:

   - The standard LLM judge provides an immediate assessment of each solution

   - Meta-evaluation adds a layer that analyzes the quality of the judgments themselves

3. **Agent Consistency**: All three agents share the same input/output structure in every round

4. **Scalability**: The process extends to an arbitrary number of rounds (N), which may help on problems that need more refinement

### Interpretation

This architecture takes a layered approach to AI system evaluation:

1. **First-Order Evaluation**: The standard LLM judge assesses solution quality (A₀-A₂) against the user query (Q)

2. **Second-Order Evaluation**: Meta-evaluation assesses whether the judging process itself is reliable and consistent

3. **Cumulative Learning**: Evaluations (E₀-E₂) from earlier rounds inform later agent responses, creating a feedback loop for continuous improvement

The dual-path evaluation suggests an awareness of potential biases in both solution generation and judgment processes. By explicitly evaluating the judging mechanism (meta-evaluation), the system acknowledges that evaluation quality itself can be a variable requiring optimization. This design could be particularly valuable in high-stakes applications where both solution accuracy and evaluation reliability are critical.
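The diagram does not specify how judgment reliability is measured. One possible proxy, shown here purely as an illustration, is a repeat-and-compare consistency check: call the judge several times on the same solution and report how often it agrees with its own majority verdict. The names `judgment_consistency` and `flaky_judge`, the verdict labels, and the `repeats` parameter are all hypothetical.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def judgment_consistency(
    judge: Callable[[str, str], str], question: str, solution: str, repeats: int = 5
) -> tuple[str, float]:
    """Return the majority verdict and the fraction of repeated judge calls that match it."""
    verdicts = [judge(question, solution) for _ in range(repeats)]
    majority, count = Counter(verdicts).most_common(1)[0]
    return majority, count / repeats


# Deterministic stand-in for a noisy LLM judge (assumption for the example only).
_verdicts = cycle(["pass", "pass", "fail", "pass"])


def flaky_judge(question: str, solution: str) -> str:
    return next(_verdicts)


verdict, agreement = judgment_consistency(flaky_judge, "User Q", "A0", repeats=4)
```

Here the stand-in judge returns "pass" three times out of four, so the majority verdict is "pass" with an agreement of 0.75. A low agreement value would flag the judgment, rather than the solution, as the unreliable part. This covers only the second-order side; solution quality still comes from the first-order judge.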