## Heatmap: Activation Magnitude Across Model Layers and Training Steps

### Overview

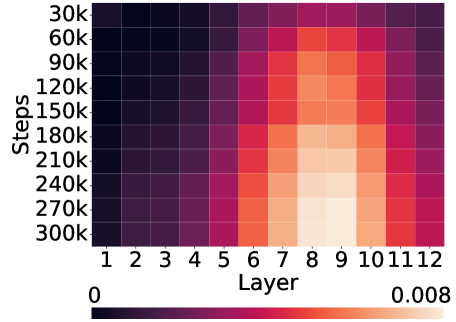

The image is a heatmap visualizing a numerical metric (likely activation magnitude, gradient norm, or a similar measure) across two dimensions: the layer index of a neural network (x-axis) and the number of training steps (y-axis). Color encodes the value of the metric, with lighter colors indicating higher values according to the scale at the bottom.

### Components/Axes

* **Y-Axis (Vertical):** Labeled **"Steps"**. It represents the progression of training, with tick marks at:

    * 30k, 60k, 90k, 120k, 150k, 180k, 210k, 240k, 270k, 300k.

    * The axis runs from top (30k steps) to bottom (300k steps).

* **X-Axis (Horizontal):** Labeled **"Layer"**. It represents the sequential layers of a model, with tick marks for each integer from **1 to 12**.

* **Color Scale/Legend:** Located at the bottom of the chart. It is a horizontal gradient bar.

    * **Left Label:** `0`

    * **Right Label:** `0.008`

    * **Gradient:** Transitions from a very dark purple/black (representing 0) through shades of purple, red, and orange, to a very light peach/cream color (representing 0.008).
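The axis and color-scale layout described above can be sketched as a small indexing helper. This is a minimal sketch, not the code that produced the figure: the names `STEPS`, `LAYERS`, `cell_index`, and `color_fraction` are hypothetical, and the linear mapping from value to colorbar position is an assumption, since the figure does not state whether the scale is linear.

```python
# Axis layout as read off the figure; the underlying data values are not given.
STEPS = [30_000 * i for i in range(1, 11)]  # 30k ... 300k, top row to bottom row
LAYERS = list(range(1, 13))                 # 1 ... 12, left to right
VMIN, VMAX = 0.0, 0.008                     # colorbar end labels

def cell_index(step, layer):
    """Return (row, col) of a heatmap cell; row 0 is the top row (30k steps)."""
    return STEPS.index(step), LAYERS.index(layer)

def color_fraction(value, vmin=VMIN, vmax=VMAX):
    """Map a value to a position in [0, 1] along the colorbar (0 = darkest).

    Assumes a linear color scale; values outside the range are clamped.
    """
    frac = (value - vmin) / (vmax - vmin)
    return min(1.0, max(0.0, frac))
```

Under these assumptions, the cell for Layer 9 at 300k steps sits at row 9, column 8 (zero-based), in the bottom row of the grid.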

### Detailed Analysis

The heatmap displays a clear, non-uniform pattern of values across the layer-step grid.

* **Spatial Pattern & Trend Verification:**

    * **Left Region (Layers 1-5):** This entire vertical band is consistently dark across all training steps, indicating values very close to the scale minimum (0). There is no visible trend with increasing steps; values remain low.

    * **Middle Region (Layers 6-9):** This region shows the most dynamic change.

        * **Trend with Steps:** For Layers 6 through 9, the color lightens (the value increases) moving down the y-axis from 30k to 300k steps. The gradient is most pronounced in Layers 8 and 9.

        * **Trend with Layers:** At any given step (e.g., 300k), the value increases from Layer 6 to a peak at Layers 8-9, then decreases through Layers 10-12.

    * **Right Region (Layers 10-12):** This band is darker than the middle region but lighter than the left region. The color (value) appears relatively stable across steps, with a slight darkening (decrease) visible in the last rows (270k-300k steps) for Layers 11-12.

* **Key Data Points (Approximate from Color):**

    * **Highest Values:** The lightest peach/cream colors, indicating values approaching or at **0.008**, are concentrated in **Layers 8 and 9** at the highest training steps (**270k and 300k**).

    * **Moderate Values:** Layers 6, 7, 10, and 11 show mid-range colors (oranges and reds), suggesting values roughly between **0.003 and 0.006** at later steps.

    * **Lowest Values:** Layers 1-5 and, to a lesser extent, Layer 12 at early steps show the darkest colors, indicating values near **0**.

### Key Observations

1. **Layer-Specific Activation:** The measured phenomenon is not uniform across the model. It is concentrated in Layers 6-11, with a distinct peak in Layers 8-9.

2. **Training Progression:** The metric in the middle layers (6-9) increases with training steps, and the effect becomes more pronounced as training progresses.

3. **Stability of Early Layers:** The first five layers show negligible activity (near-zero values) throughout the entire training period shown, suggesting they are either not involved in the measured process or their contribution is minimal and constant.

4. **Asymmetry:** The pattern is not symmetric around the peak. The drop-off in value is sharper moving from Layer 9 to Layer 12 than it is moving from Layer 9 to Layer 6.
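If the underlying values were available as a steps-by-layers grid, the observations above could be checked numerically instead of by eye. The following is a minimal sketch under that assumption; the function names are hypothetical, and the `toy` grid holds made-up numbers for illustration only, not values read from the figure.

```python
def peak_layer(row):
    """Return the 1-based layer with the largest value in one step row."""
    return max(range(len(row)), key=lambda i: row[i]) + 1

def change_over_training(grid):
    """Per-layer difference between the last and the first step row."""
    return [last - first for first, last in zip(grid[0], grid[-1])]

def falloff_asymmetry(row, peak, span):
    """Drop from the peak `span` layers deeper minus the drop `span` layers shallower.

    A positive result means the value falls off more sharply toward later layers.
    The caller must ensure peak - span >= 1 and peak + span <= len(row).
    """
    p = peak - 1  # convert the 1-based layer to a 0-based column
    return (row[p] - row[p + span]) - (row[p] - row[p - span])

# Made-up toy grid (2 step rows x 5 layers); NOT data from the figure.
toy = [
    [0.000, 0.001, 0.002, 0.001, 0.000],
    [0.000, 0.002, 0.006, 0.003, 0.001],
]
print(peak_layer(toy[-1]))  # 3
```

For the 12-layer figure, observation 4 would correspond to `falloff_asymmetry(last_row, peak=9, span=3)` returning a positive number, where `last_row` is the hypothetical 300k-step row.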

### Interpretation

This heatmap likely visualizes the **evolution of internal representations or gradient flow** during the training of a 12-layer neural network.

* **What it Suggests:** The data indicates that as training progresses (steps increase), the middle layers of the network (particularly 8 and 9) become increasingly "active" or "important" according to the measured metric. This would be consistent with intermediate layers learning the features most relevant to the task, although the heatmap alone cannot confirm that reading.

* **Relationship Between Elements:** The x-axis (Layer) represents the model's depth, and the y-axis (Steps) represents time. The heatmap shows how the "hotspot" of activity not only exists in a specific depth region but also intensifies over time. The early layers (1-5) may be performing stable, low-level feature extraction that doesn't change dramatically, while the later layers (10-12) might be involved in more task-specific, final processing that stabilizes earlier.

* **Notable Anomaly/Insight:** The most striking insight is the **localized and growing importance of Layers 8-9**. This could identify them as a critical bottleneck or the core "reasoning" component of the model for the task it was trained on. Monitoring these layers could be key to understanding model performance or diagnosing training issues. The near-zero values in early layers might also prompt an investigation into whether those layers are necessary or if the model could be pruned.