## Chart: Model & Hardware Growth

### Overview

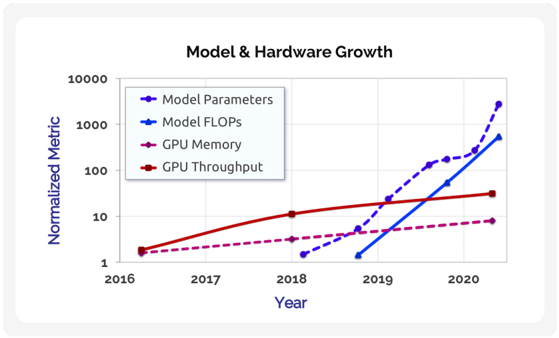

The image is a line chart comparing the growth of model parameters, model FLOPS, GPU memory, and GPU throughput from 2016 to 2020. The y-axis represents a normalized metric on a logarithmic scale, ranging from 1 to 10000. The x-axis represents the year.

### Components/Axes

* **Title:** Model & Hardware Growth

* **X-axis:** Year (2016, 2017, 2018, 2019, 2020)

* **Y-axis:** Normalized Metric (Logarithmic scale: 1, 10, 100, 1000, 10000)

* **Legend (top-left):**
  * Model Parameters (dashed blue line)
  * Model FLOPS (solid blue line)
  * GPU Memory (dashed purple line)
  * GPU Throughput (solid red line)

### Detailed Analysis

* **Model Parameters (dashed blue line):**
  * Trend: Initially slow growth, then rapid increase from 2019 to 2020.
  * 2016: ~2
  * 2017: ~3
  * 2018: ~5
  * 2019: ~15
  * 2020: ~3000

* **Model FLOPS (solid blue line):**
  * Trend: No values are visible before 2019, so the earlier trend cannot be read from the chart; rapid increase from 2019 to 2020.
  * 2016: Not visible
  * 2017: Not visible
  * 2018: Not visible
  * 2019: ~2
  * 2020: ~400

* **GPU Memory (dashed purple line):**
  * Trend: Slow, steady growth.
  * 2016: ~2
  * 2017: ~3
  * 2018: ~4
  * 2019: ~5
  * 2020: ~8

* **GPU Throughput (solid red line):**
  * Trend: Steady growth, but slower than Model Parameters and Model FLOPS.
  * 2016: ~2
  * 2017: ~5
  * 2018: ~12
  * 2019: ~20
  * 2020: ~35

### Key Observations

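* Model Parameters and Model FLOPS each jump by roughly 200x between 2019 and 2020 (from ~15 to ~3000 and from ~2 to ~400, respectively).

* GPU Memory and GPU Throughput grow far more slowly and steadily: about 4x (from ~2 to ~8) and about 17.5x (from ~2 to ~35) over 2016 to 2020, respectively.

* The y-axis is logarithmic, so equal vertical distances represent equal multiplicative factors; this lets the ~200x jumps in Model Parameters and Model FLOPS share a chart with the much smaller hardware gains.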
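The growth comparisons above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the numbers are the approximate values read off the chart, and the names `SERIES`, `fold_change`, and `annualized_factor` are hypothetical helpers introduced here, not part of any source data or library.

```python
# Approximate values read off the chart (normalized metric, log-scale axis).
# These are visual estimates from the description, not the underlying data.
SERIES = {
    "Model Parameters": {2016: 2, 2017: 3, 2018: 5, 2019: 15, 2020: 3000},
    "Model FLOPS": {2019: 2, 2020: 400},  # earlier years are not visible
    "GPU Memory": {2016: 2, 2017: 3, 2018: 4, 2019: 5, 2020: 8},
    "GPU Throughput": {2016: 2, 2017: 5, 2018: 12, 2019: 20, 2020: 35},
}


def fold_change(points, start, end):
    """Ratio of the value at year `end` to the value at year `start`."""
    return points[end] / points[start]


def annualized_factor(points):
    """Geometric-mean yearly growth factor over the years available."""
    first, last = min(points), max(points)
    return (points[last] / points[first]) ** (1 / (last - first))


for name, points in SERIES.items():
    print(
        f"{name}: {fold_change(points, 2019, 2020):.2f}x in 2019-2020, "
        f"{annualized_factor(points):.2f}x per year on average"
    )
```

Because Model FLOPS has only two visible points, its annualized factor is simply its 2019 to 2020 ratio and should not be compared directly with the four-year averages of the other series.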

### Interpretation

The chart contrasts the rapid growth in model complexity (Model Parameters and Model FLOPS) with the more gradual improvement in GPU hardware capabilities (GPU Memory and GPU Throughput). The sharp rise in model size and computational requirements points to a trend towards more complex and resource-intensive AI models. The slower growth of GPU hardware may indicate a potential bottleneck in training and deploying these models, or that software optimizations are allowing for more efficient use of existing hardware. Either way, the widening gap between the two groups of curves reflects increasing demand for more powerful hardware.
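One way to put a number on the divergence is to divide the model-side curve by the hardware-side curve for each year. The sketch below does this for Model Parameters against GPU Memory, using the approximate chart readings. It assumes the two series share a common normalization baseline, which the chart does not confirm, so the ratios are only indicative; `gap_by_year` is a hypothetical helper name.

```python
# Approximate chart readings (visual estimates, not the underlying data).
MODEL_PARAMETERS = {2016: 2, 2017: 3, 2018: 5, 2019: 15, 2020: 3000}
GPU_MEMORY = {2016: 2, 2017: 3, 2018: 4, 2019: 5, 2020: 8}


def gap_by_year(demand, supply):
    """Return demand/supply for every year present in both series."""
    shared_years = sorted(demand.keys() & supply.keys())
    return {year: demand[year] / supply[year] for year in shared_years}


gap = gap_by_year(MODEL_PARAMETERS, GPU_MEMORY)
for year, ratio in gap.items():
    print(f"{year}: parameters / memory = {ratio:g}")
```

With these readings the ratio stays close to 1 through 2018 and then rises steeply, which matches the visual impression that the curves separate only at the end of the period.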