# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Document Header

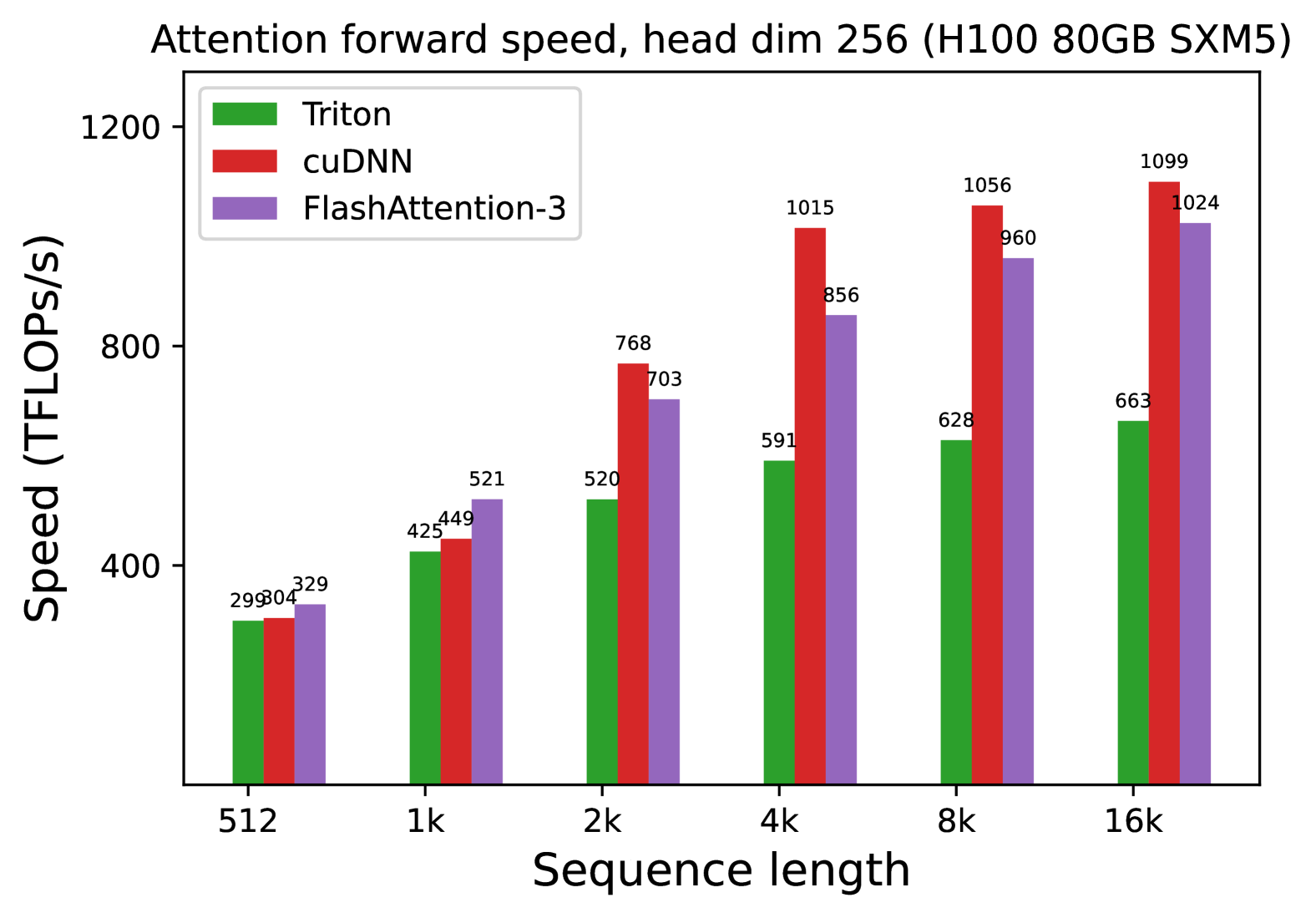

* **Title:** Attention forward speed, head dim 256 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Operation:** Attention forward pass with a head dimension of 256.

## 2. Chart Metadata and Structure

* **Chart Type:** Grouped Bar Chart.

* **X-Axis Label:** Sequence length

* **X-Axis Categories:** 512, 1k, 2k, 4k, 8k, 16k.

* **Y-Axis Label:** Speed (TFLOPS/s)

* **Y-Axis Scale:** Linear, ranging from 0 to 1200 with major ticks at 400, 800, and 1200.

* **Legend Categories:**

  * **Triton:** Green bar

  * **cuDNN:** Red bar

  * **FlashAttention-3:** Purple bar

## 3. Data Extraction and Trend Analysis

### Trend Verification

* **Triton (Green):** Rises steadily with sequence length, from 299 TFLOPS/s at 512 to 663 TFLOPS/s at 16k. It is the slowest of the three at every sequence length.

* **cuDNN (Red):** Rises steeply between 1k and 4k (449 to 1015 TFLOPS/s), then flattens. It overtakes FlashAttention-3 at 2k and remains the fastest through 16k.

* **FlashAttention-3 (Purple):** Shows a strong upward slope. It is the fastest at the two shortest sequence lengths (512 and 1k) and the second fastest from 2k to 16k.

### Data Table (Reconstructed)

All values are in TFLOPS/s, as read from the data labels above each bar.

| Sequence Length | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :--- | :--- | :--- |
| **512** | 299 | 304 | 329 |
| **1k** | 425 | 449 | 521 |
| **2k** | 520 | 768 | 703 |
| **4k** | 591 | 1015 | 856 |
| **8k** | 628 | 1056 | 960 |
| **16k** | 663 | 1099 | 1024 |
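The trend statements can be checked mechanically against the reconstructed values. The following is a minimal sketch, not part of the original benchmark: the names `SEQ_LENS`, `SPEED`, `fastest_per_length`, and `is_strictly_increasing` are hypothetical, and the numbers are simply transcribed from the table.

```python
# Throughput values (TFLOPS/s) transcribed from the reconstructed data table.
# All names below are illustrative; they do not come from the benchmark itself.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEED = {
    "Triton": [299, 425, 520, 591, 628, 663],
    "cuDNN": [304, 449, 768, 1015, 1056, 1099],
    "FlashAttention-3": [329, 521, 703, 856, 960, 1024],
}


def fastest_per_length(speed, seq_lens):
    """Return {seq_len: (implementation, TFLOPS/s)} for the tallest bar in each group."""
    winners = {}
    for i, n in enumerate(seq_lens):
        name = max(speed, key=lambda impl: speed[impl][i])
        winners[n] = (name, speed[name][i])
    return winners


def is_strictly_increasing(values):
    """True if every value is larger than the one before it."""
    return all(a < b for a, b in zip(values, values[1:]))


winners = fastest_per_length(SPEED, SEQ_LENS)
for n in SEQ_LENS:
    name, tflops = winners[n]
    print(f"{n:>4}: {name} ({tflops} TFLOPS/s)")

for impl, values in SPEED.items():
    print(f"{impl}: strictly increasing = {is_strictly_increasing(values)}")
```

Keeping the values in one dictionary keyed by implementation mirrors the chart's legend, so each list corresponds to one bar color read left to right.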

## 4. Component Analysis

* **Header Region:** Contains the descriptive title specifying the operation, head dimension, and specific GPU hardware.

* **Main Chart Region:** Contains the grouped bars. Each group corresponds to a sequence length. Data labels are placed directly above each bar for precision.

* **Legend Region:** Clearly distinguishes the three software implementations (Triton, cuDNN, FlashAttention-3) using color coding.

* **Footer/Axis Region:** Defines the independent variable (Sequence length) and the dependent variable (Speed in TFLOPS/s).

## 5. Summary of Findings

The benchmark indicates that, for the attention forward pass with a head dimension of 256 on an H100 80GB SXM5 GPU:

1. **FlashAttention-3** delivers the highest throughput for short sequences (512 and 1k), at 329 and 521 TFLOPS/s respectively.

2. **cuDNN** scales most effectively for medium to long sequences (2k to 16k), peaking at **1099 TFLOPS/s**.

3. **Triton** provides the lowest throughput of the three tested methods across the entire range of sequence lengths.

4. All methods deliver higher throughput as the sequence length increases, suggesting better hardware utilization at longer sequences. The gains flatten beyond 4k.
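To put the scaling behavior in numbers, one option is to compare each implementation's throughput at 16k with its throughput at 512, and with Triton's at 16k. This is a rough sketch using only the endpoint values from the data table; `ENDPOINTS`, `scaling_gain`, and `relative_to` are hypothetical names chosen for illustration.

```python
# Endpoints (TFLOPS/s at sequence lengths 512 and 16k) copied from the data table.
# The helper names are illustrative and not part of the original benchmark.
ENDPOINTS = {
    "Triton": (299, 663),
    "cuDNN": (304, 1099),
    "FlashAttention-3": (329, 1024),
}


def scaling_gain(endpoints):
    """Ratio of throughput at 16k to throughput at 512, per implementation."""
    return {name: hi / lo for name, (lo, hi) in endpoints.items()}


def relative_to(endpoints, baseline):
    """Throughput at 16k divided by the baseline implementation's throughput at 16k."""
    base = endpoints[baseline][1]
    return {name: hi / base for name, (_, hi) in endpoints.items()}


gains = scaling_gain(ENDPOINTS)
vs_triton = relative_to(ENDPOINTS, "Triton")
for name in ENDPOINTS:
    print(f"{name}: {gains[name]:.2f}x from 512 to 16k, "
          f"{vs_triton[name]:.2f}x Triton at 16k")
```

On these values, cuDNN gains roughly 3.6x from 512 to 16k, FlashAttention-3 roughly 3.1x, and Triton roughly 2.2x, which is consistent with the ranking in the findings above.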