## Screenshot: Chat Interface with Population Query and Fact-Check

### Overview

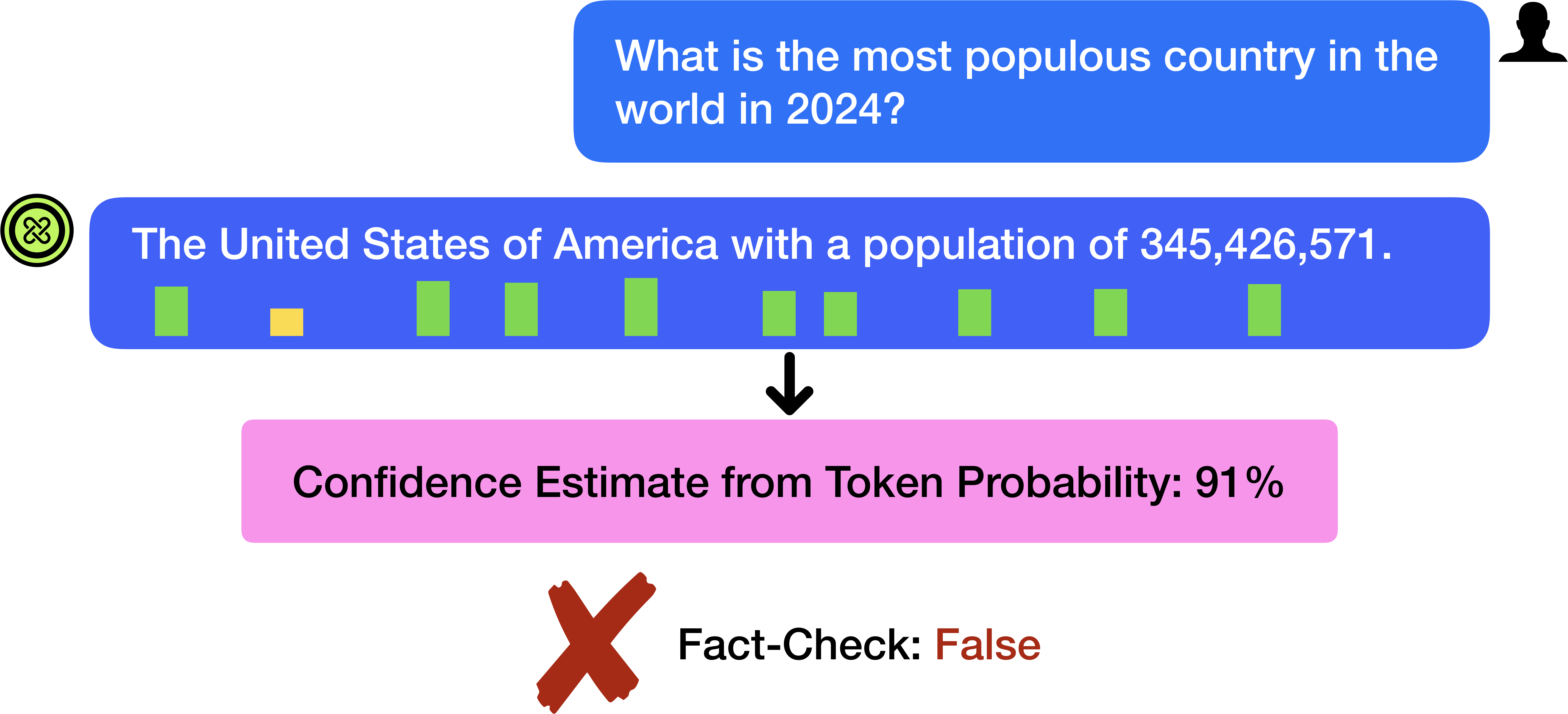

The image depicts a chat interface in which a user asks, "What is the most populous country in the world in 2024?" The response names the United States of America, with a stated population of **345,426,571**, and is accompanied by a confidence estimate of **91%** derived from token probability. A subsequent fact-check explicitly labels the answer as **False**, marked with a red "X".

---

### Components/Axes

1. **Chat Messages**:
   - **User Query (Top-Right)**:
     - Text: *"What is the most populous country in the world in 2024?"*
     - Visual: Blue speech bubble with white text; silhouette icon (anonymous user) in the top-right corner.
   - **AI Response (Bottom-Left)**:
     - Text: *"The United States of America with a population of 345,426,571."*
     - Visual: Blue speech bubble with white text; green vertical bars (likely representing population data) and one yellow bar (smaller than green bars).
   - **Confidence Estimate**:
     - Text: *"Confidence Estimate from Token Probability: 91%"* in a pink rectangle.
   - **Fact-Check**:
     - Text: *"Fact-Check: False"* with a red "X" symbol.

2. **Visual Elements**:
   - **Green Bars**: 8 vertical bars of varying heights (likely representing population data for multiple countries, though unlabeled).
   - **Yellow Bar**: 1 shorter bar (possibly indicating a secondary data point, e.g., a different country or metric).
   - **Red "X"**: Overlays the fact-check section, signaling incorrectness.

---

### Detailed Analysis

- **Population Claim**: The AI asserts the U.S. population is **345,426,571** (exact numerical value provided).

- **Confidence Estimate**: The model assigns a **91% probability** to this answer, suggesting high internal confidence in the token sequence.

- **Fact-Check Discrepancy**: The fact-check explicitly contradicts the AI’s response, indicating the claim is **False**.
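
The screenshot does not say how the 91% figure was derived from token probabilities. One common approach, shown here as a minimal sketch under that assumption, is to combine per-token log-probabilities into either a joint probability or a length-normalized (geometric mean) probability. The function name `sequence_confidence` and the example probabilities are hypothetical and are not read from the image.

```python
import math


def sequence_confidence(token_logprobs):
    """Combine per-token log-probabilities into two confidence scores.

    Returns (joint, geometric_mean):
      joint          = product of the token probabilities
      geometric_mean = joint ** (1 / number_of_tokens), which is length-normalized
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    total = sum(token_logprobs)
    joint = math.exp(total)
    geometric_mean = math.exp(total / len(token_logprobs))
    return joint, geometric_mean


# Hypothetical per-token probabilities for an answer (assumed, not from the screenshot).
example_probs = [0.99, 0.95, 0.98, 0.97, 0.90, 0.96, 0.99, 0.98, 0.62]
example_logprobs = [math.log(p) for p in example_probs]

joint, geometric_mean = sequence_confidence(example_logprobs)
print(f"joint={joint:.3f} geometric_mean={geometric_mean:.3f}")
```

Either score only measures how likely the model found its own wording. Neither one checks the claim against the world, which is why a fluent but wrong answer can still score high.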

---

### Key Observations

1. **Incorrect Answer**: The AI’s response is factually wrong. The most populous country in 2024 is **India** (estimated ~1.428 billion), not the U.S. (~339 million in 2023, with minimal growth projected for 2024), which ranks third behind India and China.

2. **High Confidence, Low Accuracy**: The 91% confidence estimate points to a calibration problem: token probability measures how likely the model finds its own output, so a high value does not guarantee factual correctness.

3. **Visual Ambiguity**: The green/yellow bars lack labels, making it impossible to verify their intended representation (e.g., country populations, growth rates).
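
Calibration, as raised in the second observation, can be quantified over many answers rather than one. The sketch below computes the expected calibration error (ECE), a standard metric that bins answers by stated confidence and compares each bin's average confidence with its actual accuracy. The sample data is hypothetical and only illustrates the calculation; a single screenshot cannot establish a model's calibration.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Expected calibration error over equal-width confidence bins.

    confidences: floats in [0, 1], one per answer
    correct:     booleans, True where the answer was factually right
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        raise ValueError("need at least one answer")

    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 goes into the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


# Hypothetical evaluation set: four answers at 95% confidence, one of them wrong.
sample_confidences = [0.95, 0.95, 0.95, 0.95]
sample_correct = [True, True, True, False]
print(f"ECE = {expected_calibration_error(sample_confidences, sample_correct):.2f}")
```

A well-calibrated model would have an ECE near zero; answers like the one in the screenshot (91% confident, wrong) push the value up.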

---

### Interpretation

- **Model Limitations**: The AI’s response demonstrates that language models can generate plausible-sounding but incorrect answers, even with high confidence scores. This underscores the need for external fact-checking in critical applications.

- **User Interface Design**: The use of color-coded bars (green/yellow) and a red "X" provides visual cues for data validity, but the absence of labels reduces interpretability.

- **Ethical Implications**: Deploying such systems without robust fact-checking mechanisms risks spreading misinformation, particularly in high-stakes domains like demographics, healthcare, or policy.
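
The external fact-checking step mentioned above can be sketched as a gate that compares the model's answer with a trusted reference and assigns a verdict independently of the confidence score. Everything here is illustrative: `CheckedAnswer`, `fact_check`, and the one-entry reference table are hypothetical names, and the screenshot does not reveal how its fact-check was actually produced.

```python
from dataclasses import dataclass


@dataclass
class CheckedAnswer:
    answer: str
    confidence: float
    verdict: str  # "True", "False", or "Unverified"


def fact_check(question, answer, confidence, reference):
    """Label an answer by comparing it with a trusted reference table.

    The verdict ignores the model's confidence on purpose: a high token
    probability must not override a mismatch with the reference.
    """
    expected = reference.get(question)
    if expected is None:
        verdict = "Unverified"
    elif expected.casefold() == answer.casefold():
        verdict = "True"
    else:
        verdict = "False"
    return CheckedAnswer(answer, confidence, verdict)


# Hypothetical reference table (assumed to be curated from a trusted source).
reference = {"most populous country in 2024": "India"}

result = fact_check(
    "most populous country in 2024",
    "United States of America",
    0.91,
    reference,
)
print(f"Fact-Check: {result.verdict} (model confidence {result.confidence:.0%})")
```

A real pipeline would need entity normalization and a maintained source of truth; the point of the sketch is only that the verdict and the confidence come from separate signals.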

---

### Conclusion

This screenshot illustrates the tension between model confidence and factual accuracy. While the AI’s response is visually structured and numerically specific, the explicit fact-check reveals its inaccuracy. The image serves as a cautionary example of the importance of integrating verification systems into AI-driven information pipelines.