## Line Chart: Loss Curve for Vicuna-7B-v1.5-Chat Model Training

### Overview

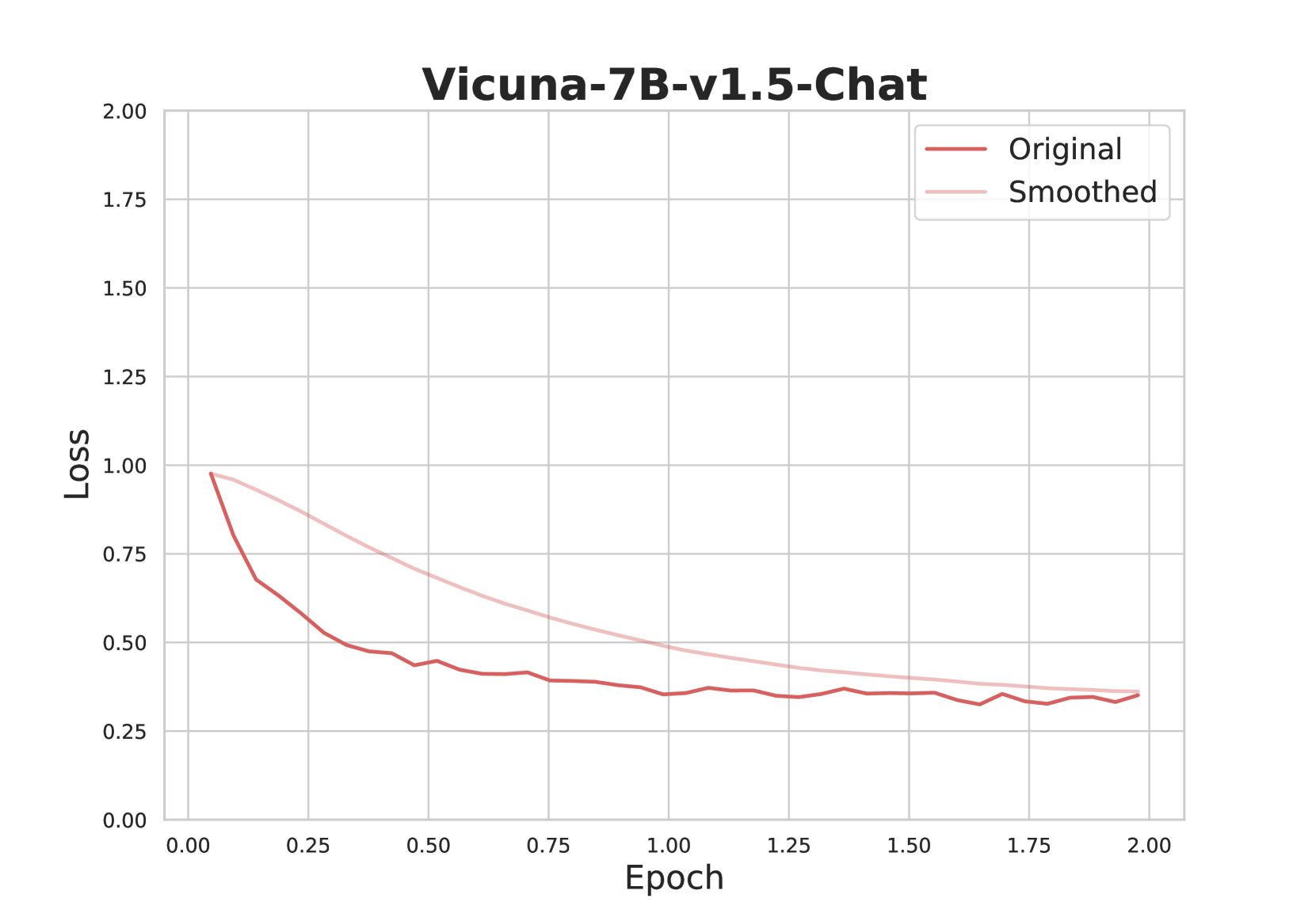

The image displays a line chart titled "Vicuna-7B-v1.5-Chat," illustrating the training loss of a machine learning model over two epochs. The chart compares two data series: the raw, fluctuating loss values ("Original") and a smoothed version of the same data ("Smoothed").

### Components/Axes

* **Title:** "Vicuna-7B-v1.5-Chat" (centered at the top).

* **Y-Axis (Vertical):** Labeled "Loss". The scale ranges from 0.00 to 2.00, with major gridlines and numerical markers at intervals of 0.25 (0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00).

* **X-Axis (Horizontal):** Labeled "Epoch". The scale ranges from 0.00 to 2.00, with major gridlines and numerical markers at intervals of 0.25 (0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00).

* **Legend:** Positioned in the top-right corner of the chart area. It contains two entries:

    * A dark red line labeled "Original".

    * A light pink line labeled "Smoothed".

* **Grid:** A light gray grid is present, with both horizontal and vertical lines corresponding to the axis markers.

### Detailed Analysis

**1. "Original" Data Series (Dark Red Line):**

* **Trend Verification:** This line exhibits a steep initial decline followed by a gradual, noisy descent. It shows significant high-frequency fluctuations throughout.

* **Data Points (Approximate):**

    * Epoch 0.00: Loss ≈ 1.00

    * Epoch 0.25: Loss ≈ 0.65

    * Epoch 0.50: Loss ≈ 0.45

    * Epoch 0.75: Loss ≈ 0.40

    * Epoch 1.00: Loss ≈ 0.35

    * Epoch 1.25: Loss ≈ 0.35

    * Epoch 1.50: Loss ≈ 0.35

    * Epoch 1.75: Loss ≈ 0.33

    * Epoch 2.00: Loss ≈ 0.35

* **Spatial Grounding:** The line starts at the left edge of the plot at a loss of about 1.00 (roughly mid-height on the y-axis) and moves towards the bottom-right, remaining below the "Smoothed" line after the initial point.

**2. "Smoothed" Data Series (Light Pink Line):**

* **Trend Verification:** This line shows a smooth, monotonic decrease, curving gently downward from left to right without any visible fluctuations.

* **Data Points (Approximate):**

    * Epoch 0.00: Loss ≈ 1.00 (coincides with the start of the "Original" line).

    * Epoch 0.50: Loss ≈ 0.70

    * Epoch 1.00: Loss ≈ 0.50

    * Epoch 1.50: Loss ≈ 0.40

    * Epoch 2.00: Loss ≈ 0.35

* **Spatial Grounding:** This line originates at the same left-edge point as the "Original" line (loss ≈ 1.00) but follows a higher, smoother path, converging with the "Original" line near the end of the plotted range at Epoch 2.00.

### Key Observations

1. **Convergence:** Both loss curves trend clearly downward, indicating that the model fits the training data progressively better as training proceeds. Because only training loss is plotted, the chart by itself says nothing about performance on held-out data.

2. **Smoothing Effect:** The "Smoothed" line effectively filters out the high-frequency noise present in the "Original" loss signal, revealing the underlying, steady trend of improvement.

3. **Rate of Learning:** The largest reduction in loss occurs in the first quarter of the training period shown (Epoch 0.00 to 0.50), where the "Original" loss falls from about 1.00 to about 0.45. After Epoch 1.00 the "Original" loss stays roughly flat at 0.33 to 0.35, suggesting the model is approaching a plateau, while the "Smoothed" line keeps descending as it catches up.

4. **Final Value:** By Epoch 2.00, both the original and smoothed loss values converge to approximately 0.35.
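The rate-of-learning observation can be checked with simple arithmetic on the approximate "Original" readings listed earlier. The sketch below is illustrative only: the values are eyeballed from the chart rather than taken from training logs, and the helper name `interval_drops` is hypothetical.

```python
# Approximate "Original" readings from the chart description (eyeballed, not exact).
epochs = [0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00]
loss = [1.00, 0.65, 0.45, 0.40, 0.35, 0.35, 0.35, 0.33, 0.35]


def interval_drops(xs, ys):
    """Return (start, end, drop) for each consecutive pair of readings.

    A positive drop means the loss decreased over that interval.
    """
    return [(xs[i], xs[i + 1], ys[i] - ys[i + 1]) for i in range(len(xs) - 1)]


drops = interval_drops(epochs, loss)

# Share of the total reduction that has already happened by epoch 0.50.
total_drop = loss[0] - loss[-1]
early_drop = loss[0] - loss[epochs.index(0.50)]
early_share = early_drop / total_drop

for start, end, drop in drops:
    print(f"{start:.2f} -> {end:.2f}: {drop:+.2f}")
print(f"share of total reduction by epoch 0.50: {early_share:.0%}")
```

With these readings, roughly 85% of the total reduction in the "Original" loss has occurred by Epoch 0.50, which is consistent with the plateau described above.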

### Interpretation

This chart is a standard diagnostic tool for monitoring the training of a neural network, specifically the Vicuna-7B-v1.5-Chat large language model. The "Loss" metric quantifies the error between the model's predictions and the target outputs; a decreasing trend is the desired outcome.

The relationship between the two lines demonstrates the utility of loss smoothing. The raw "Original" loss is volatile, which can make it difficult to discern the true training trajectory from batch-to-batch variance. The "Smoothed" line provides a clearer, more interpretable view of the model's consistent progress. The steep initial drop suggests effective early learning, while the flattening curve indicates diminishing returns as training continues, a common phenomenon as models fine-tune their parameters. The convergence of both lines at a low value (~0.35) by Epoch 2.00 suggests the training process for this segment was stable and successful in reducing error.
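The chart does not state how the "Smoothed" series was computed. A common choice in training dashboards is an exponentially weighted moving average, and the minimal sketch below assumes that method; the function name `ema_smooth` and the `weight` parameter are hypothetical. It also shows why a smoothed curve sits above a falling raw curve: each smoothed value still carries weight from earlier, higher losses.

```python
def ema_smooth(values, weight=0.9):
    """Exponentially weighted moving average, seeded with the first value.

    Assumption: this is one plausible smoothing method, not necessarily
    the one used to draw the chart. Higher weight means heavier smoothing.
    """
    if not 0.0 <= weight < 1.0:
        raise ValueError("weight must be in [0, 1)")
    smoothed = []
    previous = None
    for value in values:
        if previous is None:
            previous = value  # the first point coincides with the raw series
        else:
            previous = weight * previous + (1.0 - weight) * value
        smoothed.append(previous)
    return smoothed


raw = [1.0, 0.5, 0.5]
print(ema_smooth(raw, weight=0.5))  # [1.0, 0.75, 0.625]
```

In this toy example the smoothed values start at the same point as the raw ones and then lag above them while approaching the raw level, which matches the qualitative relationship between the two lines in the chart.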