TECHNICAL ASSET FINGERPRINT

e3f6bdfb55b220be5e1ac780


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

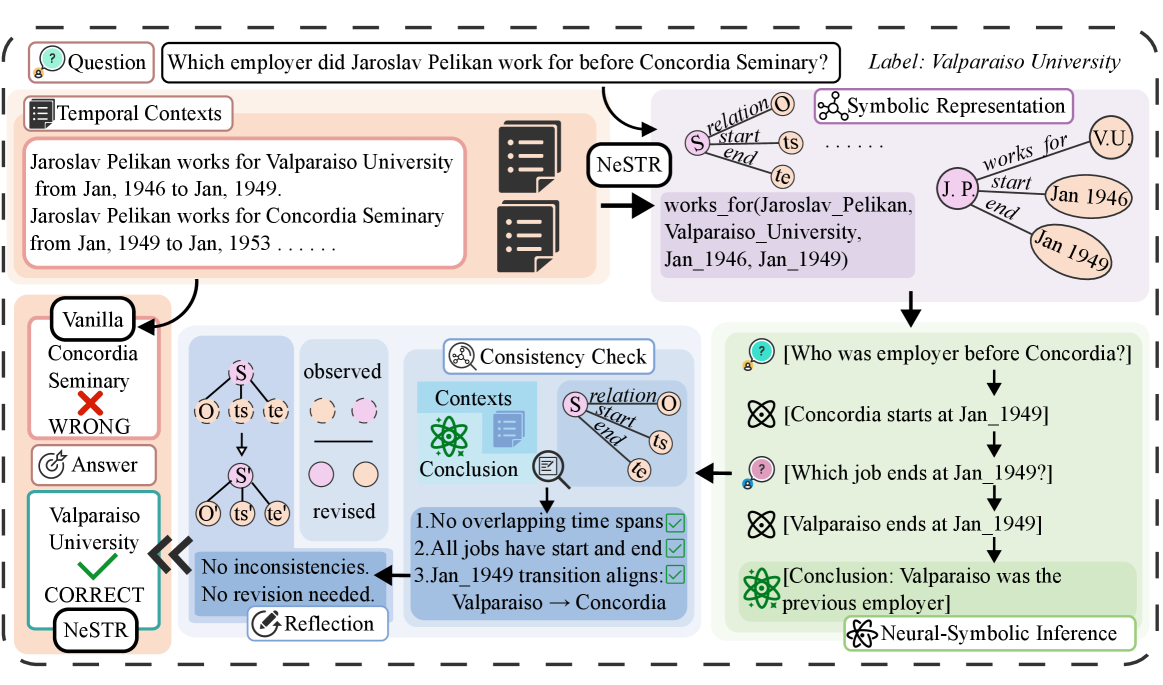

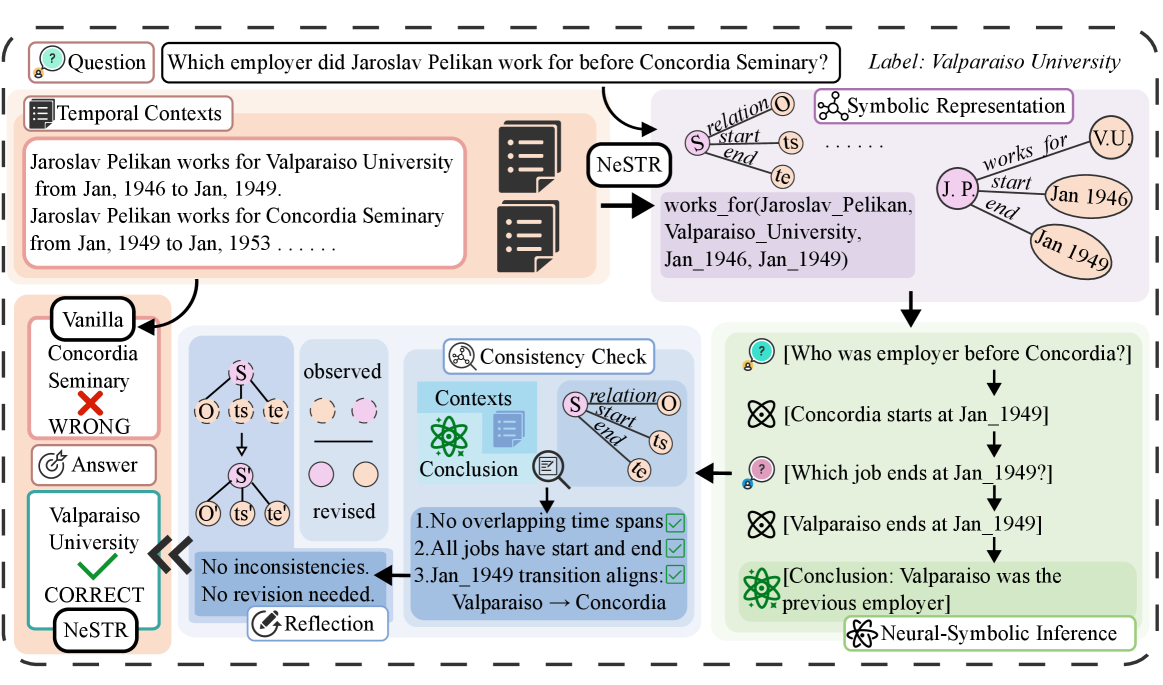

## Diagram: Neural-Symbolic Inference Process

### Overview

The image illustrates a neural-symbolic inference process for answering a question about Jaroslav Pelikan's employment history. It shows how temporal contexts are converted into a symbolic representation, checked for consistency, and then used to infer the correct answer.

### Components/Axes

The diagram is divided into several key components:

1. **Question:** "Which employer did Jaroslav Pelikan work for before Concordia Seminary?" with the correct label "Valparaiso University".

2. **Temporal Contexts:**

* "Jaroslav Pelikan works for Valparaiso University from Jan, 1946 to Jan, 1949."

* "Jaroslav Pelikan works for Concordia Seminary from Jan, 1949 to Jan, 1953..."

3. **NeSTR:** This component transforms the temporal contexts into a symbolic representation.

4. **Symbolic Representation:** A graph-like structure representing the relationships between entities and time periods. It shows "works_for(Jaroslav_Pelikan, Valparaiso_University, Jan_1946, Jan_1949)". A simplified graph shows J.P. (Jaroslav Pelikan) works for V.U. (Valparaiso University) from Jan 1946 to Jan 1949.

5. **Consistency Check:** This component verifies the consistency of the derived information.

* **Contexts:** Uses the temporal contexts.

* **Conclusion:** Checks for:

1. No overlapping time spans (✅).

2. All jobs have start and end (✅).

3. Jan_1949 transition aligns: Valparaiso -> Concordia (✅).

6. **Vanilla Answer:** A direct answer without the neural-symbolic process, which is "Concordia Seminary" (marked as WRONG).

7. **Answer:** The correct answer, "Valparaiso University" (marked as CORRECT).

8. **Reflection:** A process that occurs when inconsistencies are found, leading to revisions. In this case, "No inconsistencies. No revision needed."

9. **Neural-Symbolic Inference:** This component uses the symbolic representation and consistency checks to infer the correct answer. It involves a series of questions and inferences:

* "Who was employer before Concordia?"

* "Concordia starts at Jan_1949"

* "Which job ends at Jan_1949?"

* "Valparaiso ends at Jan_1949"

* "Conclusion: Valparaiso was the previous employer"

### Detailed Analysis or Content Details

* **Temporal Contexts:** The provided temporal contexts establish the employment timeline of Jaroslav Pelikan at Valparaiso University and Concordia Seminary.

* **NeSTR:** The NeSTR component converts the natural language temporal contexts into a structured symbolic representation. This representation captures the entities (Jaroslav Pelikan, Valparaiso University, Concordia Seminary) and their relationships (works for) along with the corresponding time intervals.

* **Symbolic Representation:** The symbolic representation is a key element in the neural-symbolic approach. It allows for reasoning and inference based on structured knowledge. The representation explicitly states that Jaroslav Pelikan worked for Valparaiso University from January 1946 to January 1949.

* **Consistency Check:** The consistency check ensures that the derived information is logically sound. It verifies that there are no overlapping time spans, that all jobs have defined start and end dates, and that the transition between jobs aligns with the temporal contexts.

* **Neural-Symbolic Inference:** The neural-symbolic inference process uses a series of logical steps to arrive at the correct answer. It starts by asking "Who was employer before Concordia?" and then uses the temporal information to deduce that Valparaiso University was the previous employer.
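The three checks named in the Consistency Check can be sketched as plain interval tests. The diagram does not reveal how the check is implemented, so the following is only an illustration: the `Job` type, the `check_consistency` function, the `(year, month)` date encoding, and the half-open reading of each time span are all assumptions.

```python
from itertools import combinations
from typing import List, NamedTuple, Optional, Tuple

Date = Tuple[int, int]  # assumed encoding: Jan_1949 -> (1949, 1)


class Job(NamedTuple):
    """Hypothetical record for one employment span."""
    employer: str
    start: Optional[Date]
    end: Optional[Date]


def check_consistency(jobs: List[Job], earlier: str, later: str) -> List[str]:
    """Return a list of problems; an empty list means no inconsistencies."""
    problems: List[str] = []

    # Check 2: all jobs have a start and an end.
    for job in jobs:
        if job.start is None or job.end is None:
            problems.append(f"{job.employer}: missing start or end")
    complete = [j for j in jobs if j.start is not None and j.end is not None]

    # Check 1: no overlapping time spans. Spans are read as half-open
    # [start, end), so a job ending in Jan 1949 does not overlap one
    # starting in Jan 1949.
    for a, b in combinations(complete, 2):
        if a.start < b.end and b.start < a.end:
            problems.append(f"{a.employer} overlaps {b.employer}")

    # Check 3: the transition aligns, i.e. the earlier job ends exactly
    # when the later job starts.
    by_name = {j.employer: j for j in complete}
    first, second = by_name.get(earlier), by_name.get(later)
    if first is None or second is None or first.end != second.start:
        problems.append(f"transition {earlier} -> {later} does not align")

    return problems


jobs = [
    Job("Valparaiso University", (1946, 1), (1949, 1)),
    Job("Concordia Seminary", (1949, 1), (1953, 1)),
]
problems = check_consistency(jobs, "Valparaiso University", "Concordia Seminary")
print(problems or "No inconsistencies. No revision needed.")
```

Returning a list of problems rather than a single boolean keeps each failed check visible, which is the kind of information a later reflection step would need.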

### Key Observations

* The diagram highlights the importance of temporal reasoning in answering questions about employment history.

* The neural-symbolic approach combines the strengths of neural networks (for representation learning) and symbolic reasoning (for logical inference).

* The consistency check plays a crucial role in ensuring the accuracy of the inferred answer.

* The "Vanilla" approach, which answers the question directly without the symbolic representation or consistency check, returns "Concordia Seminary", the employer named in the question, rather than the employer that preceded it.

### Interpretation

The diagram demonstrates a neural-symbolic approach to question answering that leverages temporal contexts and consistency checks to arrive at accurate conclusions. The process begins with a question and relevant temporal information. This information is then transformed into a symbolic representation, which allows for structured reasoning. A consistency check ensures the validity of the derived information. Finally, neural-symbolic inference is used to deduce the correct answer.

The diagram contrasts this with a purely "Vanilla" approach, which returns "Concordia Seminary" because it does not reason over the time intervals. By adding the symbolic representation and the consistency checks, the neural-symbolic approach recovers the correct answer in this example.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Neural-Symbolic Inference Process for Employer Identification

### Overview

This diagram illustrates a neural-symbolic inference process used to answer a question about Jaroslav Pelikan's employment history. The process involves temporal context extraction, symbolic representation, consistency checking, and a final inference step that produces the answer. The diagram traces the flow of information from a natural language question to a structured answer, using both a neural component (NeSTR) and symbolic reasoning components.

### Components/Axes

The diagram is segmented into several key areas:

* **Question & Temporal Contexts (Left):** Presents the initial question and relevant temporal facts.

* **NeSTR & Symbolic Representation (Top-Right):** Shows the transformation of information into a symbolic format.

* **Consistency Check (Center-Right):** Depicts the validation of the inferred information.

* **Neural-Symbolic Inference (Bottom-Right):** Illustrates the final reasoning steps leading to the answer.

* **Answer & Validation (Bottom-Left):** Displays the proposed answer and its correctness.

There are no explicit axes in the traditional sense, but the diagram uses arrows to indicate the flow of information and relationships between components.

### Detailed Analysis or Content Details

**1. Question & Temporal Contexts:**

* **Question:** "Which employer did Jaroslav Pelikan work for before Concordia Seminary?"

* **Temporal Contexts:**

* "Jaroslav Pelikan works for Valparaiso University from Jan, 1946 to Jan, 1949."

* "Jaroslav Pelikan works for Concordia Seminary from Jan, 1949 to Jan, 1953."

**2. NeSTR & Symbolic Representation:**

* **NeSTR:** The NeSTR component is shown processing the temporal contexts.

* **Symbolic Representation:**

* A schematic legend in which a subject `S` and an object `O` are linked by a `relation` node annotated with a start time `ts` and an end time `te`.

* `works_for(Jaroslav_Pelikan, Valparaiso_University, Jan_1946, Jan_1949)`

* `works_for(Jaroslav_Pelikan, Concordia_Seminary, Jan_1949, Jan_1953)`

* Visual representation of "works for" relation with "J.P." connected to "V.U." and "Jan 1946" to "Jan 1949".
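As a rough illustration of how context sentences of this shape could be turned into `works_for` predicates, the sketch below uses a regular expression. The diagram does not say how NeSTR performs this step (it is presumably handled by the model itself), so the `Fact` type, the `parse_context` and `to_predicate` helpers, and the sentence pattern are all assumptions made for illustration.

```python
import re
from typing import NamedTuple, Optional


class Fact(NamedTuple):
    """Hypothetical container for works_for(subject, employer, start, end)."""
    subject: str
    employer: str
    start: str
    end: str


# Assumed sentence shape:
# "<subject> works for <employer> from <Mon>, <YYYY> to <Mon>, <YYYY>"
PATTERN = re.compile(
    r"^(?P<subject>.+?) works for (?P<employer>.+?) "
    r"from (?P<m1>[A-Z][a-z]{2})[.,]? (?P<y1>\d{4}) "
    r"to (?P<m2>[A-Z][a-z]{2})[.,]? (?P<y2>\d{4})"
)


def parse_context(sentence: str) -> Optional[Fact]:
    """Parse one context sentence; return None if it does not fit the pattern."""
    m = PATTERN.match(sentence.strip())
    if m is None:
        return None
    return Fact(
        subject=m["subject"].replace(" ", "_"),
        employer=m["employer"].replace(" ", "_"),
        start=f"{m['m1']}_{m['y1']}",
        end=f"{m['m2']}_{m['y2']}",
    )


def to_predicate(fact: Fact) -> str:
    """Render a fact in the predicate notation used in the diagram."""
    return f"works_for({fact.subject}, {fact.employer}, {fact.start}, {fact.end})"


contexts = [
    "Jaroslav Pelikan works for Valparaiso University from Jan, 1946 to Jan, 1949.",
    "Jaroslav Pelikan works for Concordia Seminary from Jan, 1949 to Jan, 1953.",
]
predicates = [to_predicate(f) for s in contexts if (f := parse_context(s)) is not None]
for p in predicates:
    print(p)
```

A fixed pattern like this only covers the one sentence template shown in the diagram; free-form text would need a more flexible extractor.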

**3. Consistency Check:**

* **Contexts:** The symbolic representation of the contexts is shown.

* **Conclusion:** The conclusion is that there are no inconsistencies.

* **Checks:**

* "1. No overlapping time spans" - Checkmark.

* "2. All jobs have start and end" - Checkmark.

* "3. Jan_1949 transition aligns: Valparaiso -> Concordia" - Checkmark.

* **Reflection:** A "Reflection" icon is present.

**4. Neural-Symbolic Inference:**

* **Question:** "[Who was employer before Concordia?]"

* **Fact:** "[Concordia starts at Jan_1949]"

* **Sub-question:** "[Which job ends at Jan_1949?]"

* **Fact:** "[Valparaiso ends at Jan_1949]"

* **Conclusion:** "[Conclusion: Valparaiso was the previous employer]"
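The inference chain above amounts to two lookups over the symbolic facts: find when the target job starts, then find the job that ends at that moment. A minimal sketch follows, assuming the facts are stored as plain tuples; the `previous_employer` function and its trace output are illustrative names, not part of the diagram.

```python
from typing import List, Optional, Tuple

# (subject, employer, start, end), mirroring works_for(...) in the diagram
Fact = Tuple[str, str, str, str]

FACTS: List[Fact] = [
    ("Jaroslav_Pelikan", "Valparaiso_University", "Jan_1946", "Jan_1949"),
    ("Jaroslav_Pelikan", "Concordia_Seminary", "Jan_1949", "Jan_1953"),
]


def previous_employer(
    facts: List[Fact], subject: str, target: str
) -> Tuple[Optional[str], List[str]]:
    """Return the employer whose job ends when the target job starts, plus a trace."""
    trace = [f"[Who was employer before {target}?]"]

    # Step 1: look up when the target job starts.
    target_start = next(
        (start for s, emp, start, _ in facts if s == subject and emp == target),
        None,
    )
    if target_start is None:
        return None, trace
    trace.append(f"[{target} starts at {target_start}]")

    # Step 2: look for a different job that ends at exactly that time.
    trace.append(f"[Which job ends at {target_start}?]")
    for s, emp, _, end in facts:
        if s == subject and emp != target and end == target_start:
            trace.append(f"[{emp} ends at {target_start}]")
            trace.append(f"[Conclusion: {emp} was the previous employer]")
            return emp, trace

    return None, trace


answer, trace = previous_employer(FACTS, "Jaroslav_Pelikan", "Concordia_Seminary")
print("\n".join(trace))
print(answer)
```

Keeping the trace alongside the answer mirrors the bracketed steps in the diagram and makes each intermediate conclusion inspectable.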

**5. Answer & Validation:**

* **Vanilla:** Concordia Seminary - Marked with a red "X" (WRONG).

* **Answer:** Valparaiso University - Marked as CORRECT.

* **NeSTR:** Indicates the NeSTR component is involved in the answer.

### Key Observations

* The diagram clearly demonstrates a multi-step reasoning process.

* The use of symbolic representation allows for explicit reasoning about temporal relationships.

* The consistency check ensures the validity of the inferred information.

* The diagram highlights the successful identification of Valparaiso University as the employer preceding Concordia Seminary.

* The "Vanilla" answer (Concordia Seminary) is explicitly marked as incorrect, demonstrating the value of the neural-symbolic approach.

### Interpretation

The diagram illustrates a question-answering approach that combines a neural component (NeSTR, for context extraction) with symbolic reasoning (for logical inference and consistency checking). The process begins with the question and its temporal contexts. These facts are transformed into a symbolic representation, which lets the system reason about the relationships between employers and time periods. The consistency check then validates the representation, confirming that there are no temporal overlaps or missing dates. Finally, the neural-symbolic inference step uses the validated facts to arrive at the correct answer: Valparaiso University.

The explicit marking of the incorrect "Vanilla" answer underscores the value of the combined approach for questions that require reasoning about temporal relationships. The checkmarks and the red X make the outcome of each step easy to read.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: NeSTR Temporal Reasoning Process

### Overview

This image is a technical flowchart illustrating the **NeSTR (Neural-Symbolic Temporal Reasoning)** process. It demonstrates how the system answers a temporal question by extracting facts from text, performing symbolic reasoning, checking consistency, and revising its conclusion only if the check finds a problem (here, none is found). The diagram contrasts a "Vanilla" (naive) approach with the NeSTR method.

### Components/Axes

The diagram is organized into several interconnected regions, each with specific labels and functions:

1. **Top Header Region:**

* **Question Box (Top-Center):** Contains the query: `Which employer did Jaroslav Pelikan work for before Concordia Seminary?`

* **Label Box (Top-Right):** `Label: Valparaiso University` (This is the ground-truth answer).

2. **Left Column - Input & Vanilla Approach:**

* **Temporal Contexts Box (Top-Left):** Contains two extracted facts:

* `Jaroslav Pelikan works for Valparaiso University from Jan, 1946 to Jan, 1949.`

* `Jaroslav Pelikan works for Concordia Seminary from Jan, 1949 to Jan, 1953 ......`

* **Vanilla Box (Middle-Left):** Shows the flawed output of a basic method.

* Header: `Vanilla`

* Output: `Concordia Seminary` with a red `X` and the label `WRONG`.

* **Answer Box (Bottom-Left):** Shows the NeSTR output.

* Header: `Answer`

* Output: `Valparaiso University` with a green checkmark and the label `CORRECT`.

* Labeled with `NeSTR` below it.

3. **Center Column - NeSTR Core Processing:**

* **NeSTR Box (Top-Center):** The central processing unit. It takes input from the "Temporal Contexts" and feeds into the "Symbolic Representation".

* **Symbolic Representation (Top-Right of Center):** A graphical knowledge graph.

* Nodes: `J P` (Jaroslav Pelikan), `V.U.` (Valparaiso University), `Jan 1946`, `Jan 1949`.

* Edges: `works_for` (from J P to V.U.), `start` (from works_for to Jan 1946), `end` (from works_for to Jan 1949).

* A legend defines the node types: `relation`, `start`, `end`.

* **Structured Fact (Below Symbolic Rep.):** The symbolic representation written as a logical predicate: `works_for(Jaroslav_Pelikan, Valparaiso_University, Jan_1946, Jan_1949)`.

4. **Right Column - Reasoning & Consistency Check:**

* **Neural-Symbolic Inference Chain (Right Side):** A step-by-step reasoning process depicted as a vertical flow.

* `[Who was employer before Concordia?]`

* `[Concordia starts at Jan_1949]`

* `[Which job ends at Jan_1949?]`

* `[Valparaiso ends at Jan_1949]`

* `[Conclusion: Valparaiso was the previous employer]` (marked with a green brain icon).

* **Consistency Check Box (Center-Right):** Validates the reasoning.

* Header: `Consistency Check`

* Sub-components: `Contexts` (icon), `Conclusion` (icon).

* A small diagram shows the temporal relation: `S` (subject) -> `relation` -> `O` (object), with `ts` (start time) and `te` (end time) markers.

* **Checklist:**

1. `No overlapping time spans` ✅

2. `All jobs have start and end` ✅

3. `Jan_1949 transition aligns: Valparaiso -> Concordia` ✅

* **Result:** `No inconsistencies. No revision needed.`

5. **Bottom Region - Reflection & Comparison:**

* **Reflection Box (Bottom-Center):** Compares observed vs. revised symbolic structures.

* `observed`: Shows a structure with nodes `S`, `O`, `ts`, `te`.

* `revised`: Shows a similar structure, indicating no change was needed.

* **Arrow Flow:** A large arrow points from the "Consistency Check" result back to the "Answer" box, confirming the correct answer.
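The node-and-edge picture described under Symbolic Representation can be read as a reified relation: `works_for` becomes a node of its own so that `start` and `end` edges can attach to it. The sketch below follows that reading. The edge-list encoding, the `subject` and `object` edge labels, the node identifier format, and the helper names `reify` and `lookup` are assumptions, not something the diagram specifies.

```python
from typing import List, Tuple

Edge = Tuple[str, str, str]  # (source node, edge label, target node)


def reify(subject: str, relation: str, obj: str, start: str, end: str) -> List[Edge]:
    """Turn relation(subject, obj, start, end) into edges around a relation node."""
    rel_node = f"{relation}#{subject}->{obj}"  # hypothetical node identifier
    return [
        (rel_node, "subject", subject),
        (rel_node, "object", obj),
        (rel_node, "start", start),
        (rel_node, "end", end),
    ]


def lookup(edges: List[Edge], node: str, label: str) -> List[str]:
    """Return the targets of all edges leaving `node` with the given label."""
    return [target for source, lbl, target in edges if source == node and lbl == label]


edges = reify("J.P.", "works_for", "V.U.", "Jan 1946", "Jan 1949")
for edge in edges:
    print(edge)
print(lookup(edges, "works_for#J.P.->V.U.", "end"))
```

Giving the relation its own node is what allows time stamps to be attached to a single employment span rather than to the person or the employer.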

### Detailed Analysis

The diagram meticulously traces the flow of information:

1. **Input:** A natural language question and a set of temporal context sentences.

2. **Baseline Error:** The "Vanilla" approach incorrectly answers "Concordia Seminary," likely by matching the employer name in the question without temporal reasoning.

3. **NeSTR Processing:**

* **Extraction:** Facts are extracted from the text into a structured, symbolic form (the knowledge graph and predicate).

* **Inference:** A chain of logical questions is generated to find the employer whose tenure ended at the start date of the target job (Concordia Seminary, Jan 1949).

* **Verification:** The consistency check validates that the inferred timeline is logical (no overlaps, proper start/end dates, correct transition).

4. **Output:** The system arrives at the correct answer, "Valparaiso University," and confirms its validity through the consistency check.

### Key Observations

* **Color Coding:** Green is used for correct elements (checkmarks, conclusion icon). Red is used for the incorrect "Vanilla" output. Purple/pink highlights symbolic nodes and relations.

* **Spatial Layout:** The process flows generally from left (input) to center (processing) to right (reasoning/validation), and then back to the left (final answer). The "Vanilla" and "Answer" boxes sit together in the left column for direct comparison.

* **Symbolic vs. Neural:** The diagram explicitly separates the "Neural" part (implied in the initial fact extraction and question understanding) from the "Symbolic" part (the explicit knowledge graph, logical predicate, and rule-based consistency checks).

* **Temporal Logic:** The core of the reasoning is temporal. The system doesn't just find "before"; it finds the job that *ends* at the exact time the target job *starts*.
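The distinction drawn in the Temporal Logic observation matches two relations from Allen's interval algebra: "before" (one span ends strictly earlier than the other starts) and "meets" (one span ends exactly when the other starts). The sketch below contrasts them. The `Span` type and the `(year, month)` encoding are assumptions, and the third span is invented purely to show the difference; it does not appear in the diagram.

```python
from typing import NamedTuple, Tuple


class Span(NamedTuple):
    name: str
    start: Tuple[int, int]  # (year, month), an assumed encoding
    end: Tuple[int, int]


def before(x: Span, y: Span) -> bool:
    """Allen's 'before': x ends strictly earlier than y starts."""
    return x.end < y.start


def meets(x: Span, y: Span) -> bool:
    """Allen's 'meets': x ends exactly when y starts."""
    return x.end == y.start


valparaiso = Span("Valparaiso University", (1946, 1), (1949, 1))
concordia = Span("Concordia Seminary", (1949, 1), (1953, 1))
# Invented for illustration only; not part of the diagram.
earlier = Span("Hypothetical earlier employer", (1940, 1), (1944, 1))

spans = [earlier, valparaiso]

# A loose reading of "before Concordia" accepts both earlier spans.
loosely_before = [s.name for s in spans if before(s, concordia) or meets(s, concordia)]
# Requiring 'meets' singles out the immediately preceding employer.
immediately_before = [s.name for s in spans if meets(s, concordia)]

print(loosely_before)
print(immediately_before)
```

With only the two facts shown in the diagram, both readings give the same answer; the stricter test matters once more employment spans are present.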

### Interpretation

This diagram is a **conceptual demonstration of a hybrid AI system** designed for robust temporal question answering. It argues that pure neural methods (the "Vanilla" approach) can fail on tasks requiring precise temporal logic. The NeSTR framework addresses this by:

1. **Grounding Language in Symbols:** Converting text into an unambiguous knowledge representation.

2. **Explicit Reasoning:** Using a transparent, step-by-step inference chain that mimics human logical deduction.

3. **Self-Validation:** Incorporating a consistency check module that acts as a "sanity check" on the reasoning process, ensuring the conclusion aligns with all constraints.

The underlying message is that for complex, logic-heavy tasks like temporal reasoning, combining neural perception (understanding text) with symbolic reasoning (logic, rules) leads to more accurate, reliable, and interpretable results than using either approach in isolation. The "Reflection" component suggests the system can also compare its initial parsing with the final validated structure, potentially for learning or debugging.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Temporal Context Analysis for Employer Identification

### Overview

The image depicts a multi-stage flowchart for determining Jaroslav Pelikan's employer before Concordia Seminary. It combines temporal data, symbolic reasoning, and neural-symbolic inference to validate conclusions. The process includes temporal context extraction, consistency checks, and logical inference steps.

### Components/Axes

1. **Temporal Contexts Box** (Left)

- Labels: "Jaroslav Pelikan works for Valparaiso University from Jan, 1946 to Jan, 1949" and "Jaroslav Pelikan works for Concordia Seminary from Jan, 1949 to Jan, 1953"

- Visual: Two document icons with timeline annotations

2. **Symbolic Representation** (Center)

- Labels: "works_for(Jaroslav_Pelikan, Valparaiso_University, Jan_1946, Jan_1949)" and "works_for(Jaroslav_Pelikan, Concordia_Seminary, Jan_1949, Jan_1953)"

- Visual: Node graph with "S" (subject), "O" (object), "ts" (start), "te" (end) relationships

3. **Consistency Check** (Center-Right)

- Labels:

1. "No overlapping time spans"

2. "All jobs have start and end"

3. "Jan_1949 transition aligns: Valparaiso → Concordia"

- Visual: Three green checkmarks with verification symbols

4. **Neural-Symbolic Inference** (Right)

- Labels:

- "[Who was employer before Concordia?]"

- "[Concordia starts at Jan_1949]"

- "[Which job ends at Jan_1949?]"

- "[Valparaiso ends at Jan_1949]"

- "[Conclusion: Valparaiso was the previous employer]"

- Visual: Arrow-based logical flow with question-answer pairs

5. **Reflection** (Bottom)

- Labels: "No inconsistencies. No revision needed."

- Visual: Pencil icon with "No revision needed" annotation

### Detailed Analysis

- **Temporal Contexts**: Explicitly states Pelikan's employment periods with month-level dates (Jan 1946 to Jan 1949 for Valparaiso, Jan 1949 to Jan 1953 for Concordia).

- **Symbolic Representation**: Uses formal logic notation (S=subject, O=object, ts=start, te=end) to map employment relationships.

- **Consistency Check**: Validates three critical temporal constraints with binary verification (✓).

- **Neural-Symbolic Inference**: Breaks down the reasoning process into sequential questions leading to the conclusion.

- **Reflection**: Confirms the absence of contradictions requiring data revision.

### Key Observations

1. **Temporal Alignment**: The Jan 1949 transition point serves as the boundary between the two institutions.

2. **Verification Completeness**: All three consistency checks are explicitly validated.

3. **Logical Flow**: The inference process mirrors human reasoning steps (question → temporal analysis → conclusion).

4. **Color Coding**:

- Orange: Temporal contexts

- Purple: Symbolic relationships

- Blue: Consistency checks

- Green: Inference results

### Interpretation

The flowchart demonstrates a hybrid reasoning system combining:

1. **Temporal Grounding**: Precise date-based employment records

2. **Symbolic Logic**: Formal relationship mapping between entities

3. **Neural Inference**: Question-answer progression mimicking human cognition

4. **Validation Framework**: Systematic consistency checks prevent errors

The system correctly identifies Valparaiso University as the prior employer by:

1. Establishing non-overlapping employment periods

2. Confirming all jobs have defined start/end dates

3. Validating the 1949 transition alignment

4. Using backward reasoning from Concordia's start date to identify the preceding employer

This structured approach ensures factual accuracy while maintaining interpretability, making it suitable for both automated processing and human verification.
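The three consistency checks and the backward-reasoning step can also be written as constraints over the facts. The formulation below is one possible reading rather than notation taken from the diagram: the indices, the half-open interpretation of each span, and the requirement that a start precede its end are assumptions.

```latex
% Facts: works_for(s, o_i, ts_i, te_i), i = 1..n, each span read as [ts_i, te_i)
\begin{align*}
\text{(1) no overlap:}\quad
  & \forall i \neq j:\; te_i \le ts_j \;\lor\; te_j \le ts_i \\
\text{(2) complete spans:}\quad
  & \forall i:\; ts_i \text{ and } te_i \text{ are defined},\; ts_i < te_i \\
\text{(3) aligned transition:}\quad
  & te_{\mathrm{Valparaiso}} = ts_{\mathrm{Concordia}} = \mathrm{Jan\_1949} \\
\text{previous employer:}\quad
  & \mathrm{prev}(o_k) = o_j \;\text{ such that }\; te_j = ts_k
\end{align*}
```

Under constraint (1), at most one span can end at a given start time, so the previous employer, when it exists, is unique.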
